Written by:

Editorial Team

For CIOs in regulated industries, new rules like the EU AI Act create a significant operational challenge. Manual compliance methods are no longer sufficient to manage the complexity and volume of today's regulatory landscape. Using AI for regulatory compliance has shifted from a novel technology to a core business solution. It provides a structured way to turn a defensive necessity into a measurable operational improvement.

Why AI Is Now Essential for Regulatory Compliance

The volume and pace of new regulations have made traditional compliance methods slow and inefficient. Your teams are likely managing compliance with manual checklists, spreadsheets, and a constant review of policy updates. This approach is not only time-consuming but also expensive and prone to human error.

A single missed requirement can lead to multi-million dollar fines and cause significant damage to a company's reputation.

Attempting to manage compliance manually is like navigating a complex environment with an outdated map. The tools are static and become obsolete quickly, leaving the organization reacting to issues rather than preventing them. AI for regulatory compliance changes this dynamic.

AI acts as a continuous monitoring system, scanning for risks and flagging them before they become liabilities. It transforms compliance from a reactive, manual exercise into a proactive, automated discipline.

This shift is not just about avoiding penalties; it is a key component of sustainable growth and innovation.

Moving From Reaction to Prevention

An architecture-first approach is key to gaining control in this regulatory environment. Instead of adding compliance tools as an afterthought, this strategy embeds governance directly into the AI development lifecycle from the beginning. This delivers clear, measurable results:

- Improved Auditability: Create a transparent, verifiable trail of every decision and data point, which simplifies audits.

- Reduced Operational Costs: Automate repetitive monitoring and reporting tasks. This allows skilled teams to focus on strategic initiatives. Some financial institutions have reported a reduction in manual audit preparation time by over 50% after implementing automated compliance solutions.

- Measurable ROI: Turn compliance spending from a cost center into a value driver. This occurs when AI models can be deployed faster and more safely, allowing them to generate business value without unnecessary delays.

To understand AI's protective capabilities, it is useful to review its broader applications, such as the role of Artificial Intelligence in enhancing cybersecurity. As regulations like the EU AI Act become fully enforceable, a proactive strategy is necessary. You can learn more about how to prepare by exploring our guide on achieving EU AI Act readiness.

Putting AI Regulations Into Practice: From Law to Code

It is one thing to read the EU AI Act or the NIST AI Risk Management Framework. It is a different challenge to translate those legal documents into specific tasks for engineering teams. The language of law and the language of technology do not always align, creating a gap where compliance efforts can fail.

This is where a methodical approach to AI for regulatory compliance becomes a valuable asset. The goal is to map abstract requirements—like ensuring fairness, transparency, and data quality—to specific, auditable controls within your AI architecture. You are turning legal obligations into a clear backlog of work that data scientists, engineers, and governance teams can execute.

This shift moves you from passively reading regulations to actively building compliance into your systems. Reactive methods are no longer adequate.

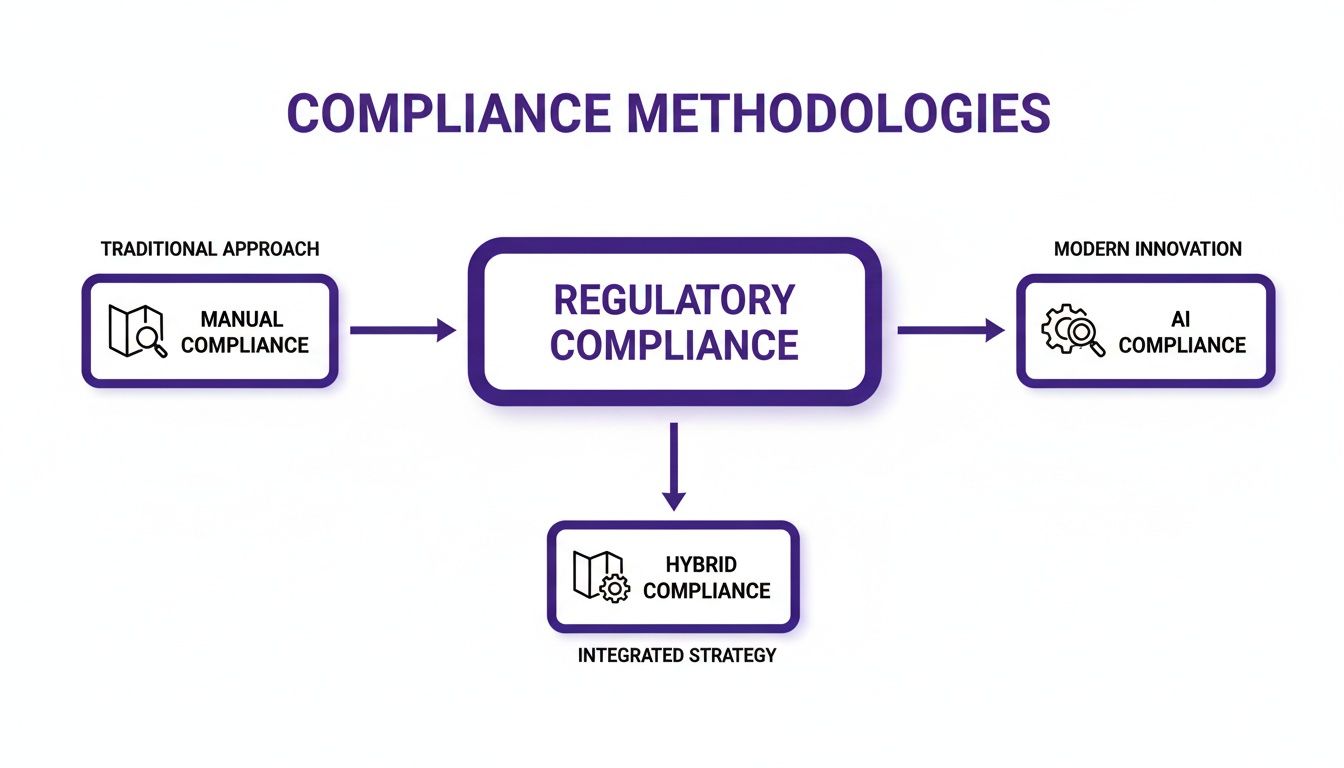

As the diagram shows, the traditional manual approach is static and retrospective. An AI-powered framework provides the continuous insight needed to keep pace with both regulatory changes and model behavior in production.

What Makes an AI System "High-Risk"?

A central focus of modern AI regulation is the concept of "high-risk AI systems." These are any AI applications where a failure could have a serious impact on an individual's safety, fundamental rights, or opportunities. Examples include AI used for credit scoring, hiring decisions, or managing critical infrastructure.

For these systems, the rules are strict. It is productive to treat these rules as a checklist for building robust and trustworthy AI.

- Data and Data Governance: Regulators require high-quality, relevant training data to prevent bias. This means you need controls like data lineage tracking, automated data quality checks, and versioned datasets to trace everything for an audit.

- Transparency and Explainability: Users and regulators need to understand how an AI system makes its decisions. This requires technical controls, such as generating model cards, using explainability tools like SHAP or LIME, and keeping detailed logs of predictions.

- Human Oversight: A human must be able to intervene in the operation of high-risk systems. That translates to building "human-in-the-loop" workflows, designing clear dashboards for intervention, and setting up automated alerts that flag high-impact decisions for human review.

The pressure to implement these controls is increasing. Gartner predicts that by 2026, half of the world's governments will have enacted specific AI legislation. This is echoed in FINRA's oversight reports, which identify generative AI and vendor risk as top concerns, linking them to existing rules like Regulation S-P for data protection.

Mapping EU AI Act Requirements to Enterprise AI Controls

So, how do you make this practical? Let's break down key articles from the EU AI Act for high-risk systems and map them to real-world controls. This table connects the legal text to concrete engineering actions.

| EU AI Act Requirement (Article) | Required Enterprise AI Control | DSG.AI Solution Component | Example KPI |
|---|---|---|---|
| Article 10 (Data & Data Governance) | Implement automated data quality validation pipelines. Establish clear data lineage and versioning for all training, validation, and testing datasets. | Data Catalog & Lineage Tracking | Data Quality Score > 95%; 100% of high-risk models have auditable data lineage. |
| Article 13 (Transparency) | Auto-generate model cards for every production model. Implement explainability dashboards to interpret individual model predictions. | Model Inventory & Explainability Toolkit | 100% of deployed models have a complete and versioned model card. |
| Article 14 (Human Oversight) | Design and implement "human-in-the-loop" review workflows for decisions exceeding a defined risk threshold. | Governance Workflow Automation | Manual override rate below 5%; Average review time for flagged decisions < 24 hours. |
| Article 15 (Accuracy, Robustness & Cybersecurity) | Conduct continuous performance monitoring for drift and degradation. Integrate adversarial testing and security scans into the CI/CD pipeline. | Continuous Model Monitoring & Security Module | Model accuracy drift < 2% from baseline per quarter; Zero critical vulnerabilities found in penetration tests. |

This mapping creates a clear, auditable trail between your actions and legal obligations. It also gives your teams a practical blueprint for building compliant AI from the start.
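One way to keep this mapping auditable is to store it as data and evaluate it automatically. The following is a minimal sketch, not a definitive implementation: the thresholds are taken from the "Example KPI" column above, while the metric names (such as `data_quality_score_pct`), the `ControlKpi` class, and the `evaluate_kpis` function are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class ControlKpi:
    article: str                     # EU AI Act article the KPI is mapped to
    name: str                        # hypothetical metric identifier
    passes: Callable[[float], bool]  # threshold test taken from the table


# Thresholds mirror the "Example KPI" column; metric names are illustrative.
KPI_CATALOG = [
    ControlKpi("Article 10", "data_quality_score_pct", lambda v: v > 95.0),
    ControlKpi("Article 13", "model_card_coverage_pct", lambda v: v >= 100.0),
    ControlKpi("Article 14", "manual_override_rate_pct", lambda v: v < 5.0),
    ControlKpi("Article 14", "avg_review_time_hours", lambda v: v < 24.0),
    ControlKpi("Article 15", "accuracy_drift_pct", lambda v: v < 2.0),
]


def evaluate_kpis(measurements: dict[str, float]) -> list[tuple[str, str, str]]:
    """Return (article, kpi, status) rows; a missing metric is reported, not assumed."""
    report = []
    for kpi in KPI_CATALOG:
        if kpi.name not in measurements:
            status = "MISSING"
        elif kpi.passes(measurements[kpi.name]):
            status = "PASS"
        else:
            status = "FAIL"
        report.append((kpi.article, kpi.name, status))
    return report


# Synthetic measurements for illustration only.
sample = {
    "data_quality_score_pct": 97.2,
    "model_card_coverage_pct": 100.0,
    "manual_override_rate_pct": 6.1,
    "accuracy_drift_pct": 1.4,
}
for row in evaluate_kpis(sample):
    print(row)
```

Reporting an unmeasured KPI as `MISSING` rather than as a pass is a deliberate choice in this sketch: an auditor usually needs to see gaps in evidence as clearly as failures.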

Implementing controls is only part of the process; you must also prove they work. For instance, incorporating automated AI pentesting is a way to continuously verify that you are meeting the security and robustness mandates of the EU AI Act's Article 15. It turns a static requirement into a continuous verification process.

Designing Your Compliance-Ready AI Architecture

For any CIO, turning regulatory theory into architectural reality is a primary task. A solid blueprint prevents future compliance issues and creates a scalable system for deploying AI safely. It is about moving past a patchwork of tools and designing a cohesive architecture where compliance is an integral component.

The core of this architecture is a blend of modern MLOps (Machine Learning Operations) and established GRC (Governance, Risk, and Compliance) principles. Each stage, from data ingestion to model deployment, must have automated checkpoints that enforce the rules. This creates a transparent, auditable trail.

This is a strategic necessity. The global RegTech market, which utilizes AI for regulatory compliance, is growing. It is projected to reach USD 19.5 billion by 2026, with a compound annual growth rate of 20.8%, according to MarketsandMarkets. This growth reflects the urgent need for enterprises to automate compliance as regulations like the EU AI Act become effective. You can get a closer look at these market dynamics in this detailed RegTech market report.

Core Components of a Compliant AI Stack

A robust, compliance-ready architecture is about having the right functional layers in place, not specific technologies. This approach avoids vendor lock-in and ensures the system integrates with your existing enterprise infrastructure.

- Secure Data Ingestion and Processing: All data enters through a secure environment. Here, automated checks validate data quality, while sensitive information is anonymized or pseudonymized to meet privacy standards.

- Centralized Model Registry and Inventory: This is the single source of truth for every AI model. Each entry includes metadata: model cards, training data lineage, version histories, and performance metrics. This simplifies audit requests.

- Automated Governance and Monitoring Framework: This layer is the compliance engine. It continuously monitors live models for performance dips, data drift, or emerging bias. If a key metric deviates from a predefined threshold, it automatically flags the issue for human review.
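To make the registry layer more concrete, here is a minimal in-memory sketch of a model inventory that records the metadata listed above and reports which entries are not yet audit-ready. The class names, field names, and the `audit_report` method are assumptions made for this example; a production registry would typically sit on a database or an existing MLOps tool.

```python
from dataclasses import dataclass, field


@dataclass
class ModelRecord:
    name: str
    version: str
    risk_level: str                      # e.g. "high" or "low"
    model_card_uri: str = ""             # empty string means "not yet documented"
    training_data_lineage: list[str] = field(default_factory=list)
    performance_metrics: dict[str, float] = field(default_factory=dict)

    def audit_gaps(self) -> list[str]:
        """List the metadata an auditor would ask for that is still missing."""
        gaps = []
        if not self.model_card_uri:
            gaps.append("model card")
        if not self.training_data_lineage:
            gaps.append("training data lineage")
        if not self.performance_metrics:
            gaps.append("performance metrics")
        return gaps


class ModelRegistry:
    """Hypothetical single source of truth, keyed by (name, version)."""

    def __init__(self) -> None:
        self._records: dict[tuple[str, str], ModelRecord] = {}

    def register(self, record: ModelRecord) -> None:
        self._records[(record.name, record.version)] = record

    def audit_report(self) -> dict[str, list[str]]:
        """Map 'name:version' to missing metadata, listing only incomplete entries."""
        return {
            f"{r.name}:{r.version}": r.audit_gaps()
            for r in self._records.values()
            if r.audit_gaps()
        }


registry = ModelRegistry()
registry.register(ModelRecord(
    name="aml-screening", version="1.0", risk_level="high",
    model_card_uri="cards/aml-screening-1.0.md",   # illustrative path
    training_data_lineage=["transactions-v3"],
    performance_metrics={"auc": 0.91},
))
registry.register(ModelRecord(name="credit-score", version="2.1", risk_level="high"))
print(registry.audit_report())
```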

The Compliant Data and Model Workflow

Understanding how data and models move through this architecture helps clarify the concept. It is a multi-stage pipeline where governance is integrated at every step.

A compliance-ready architecture treats regulations as system requirements. You must design for auditability and fairness just as you design for scalability and performance.

Here is a simplified, synthetic example of this workflow:

- Data Ingestion: Raw transaction data flows into the system via a secure pipeline like Apache Kafka. An initial validation check rejects any records with missing fields.

- Feature Engineering: The clean data is transformed into features for an anti-money laundering (AML) model. During this process, all personally identifiable information is masked.

- Model Training and Validation: A gradient boosting model is trained on these features. Its performance and fairness scores are logged in the central model registry. An independent validation team reviews the model against internal policies before further steps.

- Deployment: Once approved, the model is deployed as a microservice and registered in the live model inventory, which links it to the monitoring framework.

- Continuous Monitoring: The model’s predictions are tracked in real-time. If its false positive rate increases by more than 5% from its baseline, an alert is automatically sent to the compliance team.
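The checkpoints in this workflow can be sketched in a few small functions. This is an illustrative outline under stated assumptions, not the pipeline itself: the field names in `REQUIRED_FIELDS` and `PII_FIELDS` are hypothetical, masking is done here with a salted SHA-256 hash (one possible pseudonymization technique, not necessarily the one a given system uses), and the "5% from its baseline" rule is interpreted as a relative increase.

```python
import hashlib

REQUIRED_FIELDS = ("transaction_id", "account_id", "amount")  # hypothetical schema
PII_FIELDS = ("account_id",)                                   # hypothetical PII columns


def validate(records):
    """Split records into accepted and rejected based on missing required fields."""
    accepted, rejected = [], []
    for rec in records:
        complete = all(rec.get(f) not in (None, "") for f in REQUIRED_FIELDS)
        (accepted if complete else rejected).append(rec)
    return accepted, rejected


def mask_pii(record, salt="demo-salt"):
    """Replace PII values with a truncated salted SHA-256 digest (pseudonymization)."""
    masked = dict(record)
    for f in PII_FIELDS:
        digest = hashlib.sha256((salt + str(record[f])).encode("utf-8")).hexdigest()
        masked[f] = digest[:16]
    return masked


def false_positive_rate(predictions, labels):
    """FPR = false positives / all actual negatives (labels: 1 = suspicious)."""
    fp = sum(1 for p, y in zip(predictions, labels) if p == 1 and y == 0)
    negatives = sum(1 for y in labels if y == 0)
    return fp / negatives if negatives else 0.0


def fpr_alert(current_fpr, baseline_fpr, max_relative_increase=0.05):
    """True when the FPR exceeds the baseline by more than the allowed relative increase."""
    return current_fpr > baseline_fpr * (1 + max_relative_increase)


# Synthetic data for illustration only.
raw = [
    {"transaction_id": "t1", "account_id": "A-100", "amount": 250.0},
    {"transaction_id": "t2", "account_id": None, "amount": 90.0},
]
accepted, rejected = validate(raw)
features = [mask_pii(r) for r in accepted]
print(len(accepted), "accepted;", len(rejected), "rejected")
print("alert:", fpr_alert(current_fpr=0.110, baseline_fpr=0.100))
```

Rejected records are returned rather than silently dropped so that the rejection itself can be logged as part of the audit trail.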

This type of structured process is the backbone of effective AI orchestration. It ensures that every model in production is both effective and demonstrably compliant.

Putting Practical AI Governance and Risk Patterns into Play

A well-designed architecture is the skeleton; the muscle of AI governance comes from practical, repeatable patterns. We need to translate abstract principles like "fairness" and "transparency" into concrete actions. These are the techniques that bridge the gap between policies and production AI systems.

This means establishing clear oversight, integrating privacy controls into workflows, and committing to regular model audits. It is about turning AI for regulatory compliance into an active, operational discipline.

This hands-on approach is becoming necessary. The market for AI governance compliance software is projected to reach approximately USD 1.4 billion by 2025, according to UMU research. This growth is fueled by regulatory pressure. These tools help organizations meet ethical standards and legal mandates, like the EU AI Act's rules for high-risk systems. Looking further, some projections show the broader AI governance market could reach USD 3.6 billion by 2033. You can read the full research about these AI governance market trends to get the bigger picture.

Establishing an AI Review Board

A foundational governance pattern is creating an AI Review Board (ARB). This cross-functional group brings together leaders from legal, compliance, data science, and business units for oversight. The ARB is a strategic body that ensures AI initiatives align with regulatory duties and company ethics before deployment.

The ARB's core responsibilities typically include:

- Risk Assessment: Evaluating the potential impact of new AI systems and classifying them by risk level (e.g., minimal, limited, high, or unacceptable), following the tiers used by regulations like the EU AI Act.

- Policy Enforcement: Ensuring every proposed model adheres to internal policies and external laws.

- Approval Gateway: Serving as the final checkpoint for deploying high-risk AI applications, confirming that all necessary controls and monitoring plans are in place.

Key Governance Patterns in Action

Beyond the ARB, several specific patterns are essential for managing risk. These are practical techniques you can build directly into your MLOps pipelines.

Effective governance is about building guardrails that allow teams to innovate faster and more safely, with confidence that their work is compliant.

Let’s walk through two critical patterns using a bank's AI model for loan approvals, a high-risk system.

1. Human-in-the-Loop (HITL) for High-Stakes Decisions: This pattern ensures a human expert has the final say in critical decisions. It is a direct response to the human oversight requirements in many regulations.

- How it Works (Synthetic Example): A loan model might automatically approve applications with very high scores and deny those with very low scores. For applications in a middle range—say, between a 620 and 680 score—it flags them for manual review. A human loan officer then reviews the application, considers contextual details the AI might miss, and makes the final decision. This prevents the model from unfairly rejecting borderline applicants and creates a clear audit trail of human oversight.

2. Continuous Model Monitoring: An AI model's performance can degrade over time as data changes, a problem known as model drift. Continuous monitoring is an early-warning system to catch this before it creates a compliance issue.

- How it Works (Synthetic Example): A bank’s monitoring system tracks the loan model’s approval rates across different demographic groups in real-time. If the system detects that the approval rate for a protected group drops by more than 5% from its baseline, it triggers an alert. This prompts the data science team to investigate for algorithmic bias before it leads to discriminatory outcomes and regulatory penalties.
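Both patterns reduce to a few lines of logic. The sketch below is a simplified illustration using the synthetic numbers from the examples above. It assumes the 620 to 680 review band is inclusive at both ends and that the 5% drop is measured relative to the baseline approval rate; the function names are hypothetical.

```python
from collections import defaultdict

REVIEW_LOW, REVIEW_HIGH = 620, 680  # middle band from the synthetic loan example


def route_application(score: int) -> str:
    """Pattern 1: send borderline scores to a human loan officer."""
    if score > REVIEW_HIGH:
        return "auto_approve"
    if score < REVIEW_LOW:
        return "auto_deny"
    return "manual_review"  # inclusive band: 620 <= score <= 680 (assumption)


def approval_rates(decisions):
    """decisions: iterable of (group, approved) pairs -> approval rate per group."""
    totals, approved = defaultdict(int), defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        approved[group] += int(ok)
    return {g: approved[g] / totals[g] for g in totals}


def groups_to_investigate(current, baseline, max_relative_drop=0.05):
    """Pattern 2: flag groups whose approval rate fell more than 5% below baseline."""
    flagged = []
    for group, base_rate in baseline.items():
        rate = current.get(group)
        if rate is not None and rate < base_rate * (1 - max_relative_drop):
            flagged.append(group)
    return sorted(flagged)


# Synthetic usage.
print([route_application(s) for s in (600, 650, 700)])
baseline = {"group_a": 0.60, "group_b": 0.58}
current = approval_rates([
    ("group_a", True), ("group_a", True), ("group_a", False),
    ("group_b", True), ("group_b", False), ("group_b", False),
])
print(groups_to_investigate(current, baseline))
```

In practice each routing decision and each alert would also be written to a log, since the audit trail is what demonstrates oversight to a regulator.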

Measuring Success with Compliance KPIs

A compliance program without metrics is a collection of intentions. To make an AI-driven regulatory compliance program effective, you need to measure what matters. Key Performance Indicators (KPIs) provide concrete proof to regulators, boards, and business leaders that your governance framework is an active, effective system.

The idea is to set a clear baseline for model behavior and then monitor it with live dashboards. This data-driven approach turns abstract compliance goals into tangible operational improvements.

From Model Health to Business Impact

Good KPIs for AI compliance cover everything from technical model performance to business impact. They connect statistical measures to real-world outcomes. Your metrics need to answer two critical questions: "Is the model working correctly?" and "Is the model working fairly?"

Here are a few important KPIs to track:

- Model Fairness Score: This measures for bias. It assesses if a model's decisions are consistent across different demographic groups. Using standard metrics like the Disparate Impact Ratio, you can set an acceptable range (a common target is 0.8 to 1.25) and receive an alert if the model deviates.

- Data and Concept Drift Percentage: Models can become less effective if the live data they see no longer resembles their training data. You must track this. Monitoring for data drift (changes in input data) and concept drift (changes in the relationships between inputs and outputs) is necessary. A KPI like, "Keep data drift under a 5% deviation from the baseline each month," provides a clear guardrail.

- Time to Remediate Audit Findings: This measures operational efficiency. Tracking the average time from when an issue is flagged by an auditor to when it is resolved shows the agility of your compliance process. A lower number indicates a responsive system.
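These three KPIs can be computed with very little code. The following sketch makes several assumptions that should be adapted to your own definitions: the Disparate Impact Ratio is computed as the protected group's favorable-outcome rate divided by the reference group's rate, drift is approximated by the relative shift in a feature's mean (real monitoring systems often use distribution-level statistics instead), and the function names are hypothetical.

```python
from datetime import date
from statistics import mean


def disparate_impact_ratio(protected_rate: float, reference_rate: float) -> float:
    """Ratio of favorable-outcome rates: protected group / reference group."""
    if reference_rate <= 0:
        raise ValueError("reference_rate must be positive")
    return protected_rate / reference_rate


def fairness_ok(ratio: float, low: float = 0.8, high: float = 1.25) -> bool:
    """Check the ratio against the commonly used 0.8 to 1.25 target range."""
    return low <= ratio <= high


def relative_mean_shift(baseline_values, live_values) -> float:
    """Simple drift proxy (assumption): relative change in the feature mean."""
    base = mean(baseline_values)
    if base == 0:
        raise ValueError("baseline mean must be non-zero")
    return abs(mean(live_values) - base) / abs(base)


def mean_days_to_remediate(findings) -> float:
    """findings: list of (flagged_date, resolved_date) pairs."""
    return mean((resolved - flagged).days for flagged, resolved in findings)


# Synthetic usage.
ratio = disparate_impact_ratio(protected_rate=0.45, reference_rate=0.50)
print("DIR within range:", fairness_ok(ratio))
print("drift under 5%:", relative_mean_shift([100, 102, 98], [103, 105, 101]) < 0.05)
print("mean days to remediate:", mean_days_to_remediate([
    (date(2024, 1, 1), date(2024, 1, 11)),
    (date(2024, 2, 1), date(2024, 2, 21)),
]))
```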

Quantifying the Return on Compliance

Technical metrics are essential, but the C-suite wants to see the bottom-line impact. A well-run AI compliance program delivers tangible returns linked to risk reduction and operational efficiency.

A data-driven compliance strategy is not just a cost center; it is a competitive advantage. It helps you deploy AI faster and more safely, unlocking business value while protecting the company from legal and reputational risk.

Let's look at two synthetic examples:

Example 1: Anti-Money Laundering (AML)

- The Problem: A bank’s rule-based AML system was flagging too many non-suspicious transactions, creating a high volume of false positives. The compliance team spent significant time on manual reviews.

- The AI Solution: They implemented an AI model that could analyze transaction patterns with greater accuracy.

- KPIs and Outcomes:

  - False Positive Reduction: Within three months, the new AI system reduced false positive alerts by 25% compared to the previous system's Q4 baseline.

  - Investigator Efficiency: This change freed up an estimated 4,000 analyst hours per year, allowing the team to focus on genuinely high-risk cases.

Example 2: Credit Scoring Model

- The Problem: A financial institution needed to update its credit scoring model for better accuracy while ensuring it did not discriminate against protected consumer groups.

- The AI Solution: They built a new model with fairness guardrails and continuous monitoring controls integrated from the start.

- KPIs and Outcomes:

  - Model Accuracy: The new model was 8% more accurate in its predictions than the previous version, leading to better lending decisions.

  - Fairness Score: The Disparate Impact Ratio was held steady within a 0.9 to 1.1 range, providing a clear, auditable trail to demonstrate fairness to regulators.

A Practical Six-Week Roadmap to Implementation

A good strategy requires a clear execution plan. For AI-driven regulatory compliance, a defined, time-boxed plan works well. This roadmap breaks the project into six manageable, one-week sprints, grouped into three two-week phases. It is designed to create a transparent project plan where each step delivers tangible progress.

This six-week timeline moves you from initial discovery to an operational system. The focus is on iterative development, with governance and compliance integrated from day one.

Weeks 1-2: Discovery and Architectural Design

The first two weeks are for laying a solid foundation. This involves understanding your specific environment and designing an architectural blueprint. This phase is critical to ensure the technical solution addresses your business needs and regulatory obligations.

- Week 1: Discovery and Risk Assessment: We begin by cataloging your current AI models, data sources, and technology stack. This includes stakeholder interviews to map existing compliance workflows and identify high-risk use cases. The key output of this week is a risk matrix to prioritize systems based on regulatory exposure.

- Week 2: Architectural Blueprint: Using insights from week one, we design a compliance-ready AI architecture tailored to your environment. We define data workflows, select components for the model registry, and map out the monitoring framework. The design is technology-agnostic to avoid vendor lock-in.

Weeks 3-4: Prototyping and Control Implementation

With the blueprint approved, we shift from planning to building. We develop a working prototype of the core governance platform and start implementing the specific compliance controls identified during discovery. This approach allows for continuous feedback.

The goal here is to get a minimum viable product (MVP) of your governance framework running. This demonstrates functionality quickly and proves that the core architectural components work together.

A major focus during these two weeks is setting up automated checks for data quality, model fairness, and performance monitoring. By the end of this phase, you will have a functional system capable of tracking a pilot set of AI models.

Weeks 5-6: Deployment and Operational Handover

The final phase focuses on moving the validated prototype into a live production environment and preparing your team to manage it. We make the system scalable, secure, and ready for enterprise-wide use.

- Week 5: Production Hardening and Deployment: We rigorously test the platform for security vulnerabilities, scalability, and reliability. We also integrate it with your existing CI/CD pipelines and other enterprise systems. Once testing is complete, the solution is deployed to your production environment.

- Week 6: Operationalization and Knowledge Transfer: The final week is about a smooth handover. We provide comprehensive training and documentation, including hands-on workshops with your GRC, data science, and MLOps teams. This ensures you have the in-house expertise to manage your AI compliance program. You receive full IP ownership and the complete source code.

You can get a better sense of our structured project approach at DSG.AI Projects.

Frequently Asked Questions

When implementing AI for regulatory compliance, leaders often have similar questions. Here are straightforward answers.

How Do We Get Started with AI Compliance if We Don't Have the In-house Expertise?

The best way to begin is by working with a partner focused on knowledge transfer. The first step is a discovery phase to map your regulatory challenges—like preparing for the EU AI Act—to your current data and systems.

A partner can lead the initial design and setup of key controls while training your people. This approach builds your team’s skills as the project progresses. It reduces the risk of your first steps into AI compliance and ensures that at the end of the project, you have a production-ready system, you own the intellectual property, and your team can manage it.

What's the Real ROI on an AI GRC Platform?

The return on an AI GRC platform can be measured in three key areas. First is avoiding fines. Under the EU AI Act, penalties for the most serious violations can reach EUR 35 million or 7% of global annual turnover, whichever is higher.

Second is operational efficiency. Automating compliance tasks can reduce the time your team spends on manual audit preparation by more than 50% and lower day-to-day operational costs. Finally, you get risk reduction. The platform continuously monitors for problems like model bias or performance drift, protecting you from legal issues and reputational damage. This turns a compliance requirement into a business advantage.

An AI GRC platform transforms compliance from a necessary expense into a source of value by turning risk management into a driver of operational efficiency and faster innovation.

How Is an Architecture-First Approach Different from Buying Off-the-Shelf Software?

Most off-the-shelf compliance tools require you to adapt your processes to their software. This often creates integration challenges. An architecture-first approach is different. It starts by designing a solution built for your specific data, existing technology, and regulatory needs.

Instead of a "black box" system, you get a transparent system custom-built to fit into your tech stack. This strategy frees you from vendor lock-in because you own the source code. It gives you a scalable foundation that can adapt to new business needs and future regulations.

Ready to replace compliance uncertainty with a clear, ROI-focused plan? DSG.AI partners with enterprises to design, build, and operationalize compliant AI systems that deliver measurable value. Explore our structured project approach at https://www.dsg.ai/projects.