Written by:

Editorial Team

AI governance, risk, and compliance (GRC) is the framework of rules, roles, and processes for managing artificial intelligence. It guides how AI systems are developed, deployed, and monitored to ensure they operate safely, ethically, and in alignment with business objectives and legal requirements.

This oversight is necessary to manage the risks inherent in AI while enabling innovation. Without a GRC framework, an AI initiative operates without controls, exposing the organization to financial, reputational, and regulatory consequences.

The Need for AI Governance

Deploying AI without a formal governance structure is like operating a high-performance engine without a steering wheel or brakes. The power is significant, but so is the potential for failure. AI GRC is not just a technical or legal requirement—it is a core business function for sustainable growth.

The push to innovate often sidelines caution. A 2024 report from an enterprise survey (N=500) found that 72% of companies are already using AI, with many reporting revenue increases. However, this rapid adoption introduces new risks, from biased algorithms and data leaks to regulatory fines.

The Three Pillars of AI GRC

A successful AI governance, risk, and compliance program is built on three interconnected pillars. Each is critical for a responsible and effective AI strategy.

- Governance: This pillar establishes policies and lines of accountability for every AI system. It defines who is responsible for specific outcomes.

- Risk Management: This involves identifying, assessing, and mitigating the unique risks associated with AI, such as a model's performance degrading over time (model drift) or security threats like data poisoning.

- Compliance: This pillar ensures every AI activity adheres to laws, regulations, and industry standards. With regulations like the EU AI Act establishing fines of up to €35 million or 7% of global annual turnover for the most serious violations, compliance is non-negotiable.

When these pillars work together, they transform AI from a high-risk initiative into a managed, strategic asset. The objective is to create an environment where innovation can proceed within safe, ethical, and legally sound boundaries.

For a GRC Director, the challenge is building a program that enables fast-paced development while enforcing strong controls. This requires a shift from reacting to incidents to proactively engineering systems that prevent them. To understand these oversight principles, it can be helpful to review the fundamentals of corporate governance.

Implementing a formal AI GRC program is about more than avoiding fines. It is about earning customer trust, protecting the brand, and ensuring that AI delivers measurable business value. Without it, even the most advanced AI projects are built on an unstable foundation.

Navigating the Global AI Regulatory Landscape

The rapid adoption of AI has initiated a global effort to regulate it. For any leader overseeing AI governance, risk, and compliance, this changing legal environment is a significant challenge. The only way to prepare is to understand the emerging regulations and build a strategy that can adapt to change.

The European Union is leading this effort. The EU AI Act is the world's first comprehensive, legally binding framework for artificial intelligence.

Its influence extends beyond Europe, affecting global supply chains and setting new expectations for businesses and regulators worldwide.

A Closer Look at the EU AI Act

The EU AI Act uses a risk-based approach to categorize AI systems. This tiered model means the level of regulation corresponds directly to the potential for harm, helping businesses focus their compliance efforts where they are most needed.

Here is the breakdown:

- Prohibited AI: This category includes systems deemed an unacceptable threat to fundamental rights, such as social scoring or real-time remote biometric identification in publicly accessible spaces by law enforcement (subject to narrow exceptions). These are banned.

- High-Risk AI: This is where most large enterprises will focus. It covers AI used in critical areas like hiring, credit scoring, medical devices, and infrastructure management—applications where a malfunction could have severe consequences.

- Limited-Risk AI: These systems require transparency. For example, users must be informed they are interacting with an AI, such as a chatbot, not a human.

- Minimal-Risk AI: This category includes the majority of AI tools, like spam filters and AI in video games. Under the Act, these face no new specific legal obligations.

For the high-risk category, compliance requires detailed documentation, strong data governance, activity logs, and meaningful human oversight to maintain control over these systems.
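The tiered model above can be sketched as a simple lookup. This is a minimal illustration, not a legal classification: the use-case tags, the `classify` and `required_controls` helpers, and the choice to treat unknown use cases as high-risk are all assumptions made for this example. A real determination depends on the Act's text and annexes and on legal review.

```python
from enum import Enum


class RiskTier(Enum):
    PROHIBITED = "prohibited"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


# Hypothetical use-case tags mapped to tiers, based on the examples in the text.
USE_CASE_TIERS = {
    "social_scoring": RiskTier.PROHIBITED,
    "hiring": RiskTier.HIGH,
    "credit_scoring": RiskTier.HIGH,
    "medical_device": RiskTier.HIGH,
    "infrastructure_management": RiskTier.HIGH,
    "chatbot": RiskTier.LIMITED,
    "spam_filter": RiskTier.MINIMAL,
    "video_game_ai": RiskTier.MINIMAL,
}

# Controls named in the text for each tier.
TIER_CONTROLS = {
    RiskTier.PROHIBITED: ["do not deploy"],
    RiskTier.HIGH: [
        "detailed documentation",
        "data governance",
        "activity logs",
        "human oversight",
    ],
    RiskTier.LIMITED: ["disclose AI interaction to users"],
    RiskTier.MINIMAL: [],
}


def classify(use_case: str) -> RiskTier:
    """Return the tier for a known tag.

    Assumption: unknown tags default to HIGH so they are reviewed
    rather than silently treated as minimal risk.
    """
    return USE_CASE_TIERS.get(use_case, RiskTier.HIGH)


def required_controls(use_case: str) -> list[str]:
    """Controls to apply for the tier that the use case falls into."""
    return TIER_CONTROLS[classify(use_case)]
```

Defaulting unknown use cases to the high-risk tier is a conservative design choice: it forces a human decision before a new kind of system is waved through.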

Organizations are required to build and maintain a complete risk management system covering the entire lifecycle of their high-risk AI. Failure to do so can lead to large fines and brand damage. A thorough analysis of an organization's current state is the first step toward EU AI Act readiness.

The Act's Global Reach and the US Response

The EU AI Act is setting a global benchmark. It entered into force on August 1, 2024, and most of its obligations apply from August 2, 2026, with some provisions phased in earlier or later. It will change how enterprises worldwide approach governance. Data already indicates a trend: organizations outside the EU lag 22-33 percentage points behind their European counterparts on every major AI control metric, from purpose limitation to having a "kill switch." This suggests the Act is already functioning as an unofficial global standard.

Meanwhile, the United States has not passed a single federal law comparable to the EU AI Act. Instead, a patchwork of state laws is emerging. States like Colorado and Illinois are already addressing algorithmic bias in specific sectors like hiring and insurance.

This creates a complex compliance map for any company operating across the country. Navigating a growing web of state-specific rules makes a flexible governance framework a necessity.

A practical strategy is to build a compliance program around the highest regulatory standard. By aligning internal AI governance with the principles of the EU AI Act, an organization not only prepares for its impact but also builds a foundation that can adapt to new rules as they emerge in the U.S. and other regions.

Building Your AI Governance Framework

An AI governance framework is the blueprint that translates regulatory principles into operational practice. It is the internal operating system for responsible AI, defining the people, policies, and processes required.

This is similar to constructing a building. Construction does not begin without architectural plans, a general contractor, and clear rules for the crew. An AI framework serves the same purpose: it clarifies who is in charge, what the rules are, and how critical decisions are made. It ensures everything built stands on a solid, trustworthy foundation.

Defining Key Roles and Responsibilities

The lack of clear ownership is a common failure point for AI governance, risk, and compliance. When responsibility is diffuse, accountability is often absent. The first step is to establish specific roles.

An effective structure requires a mix of high-level strategic oversight and hands-on management. While every company's structure will differ, most effective frameworks include these roles:

- AI Review Board (or Council): A cross-functional team of leaders from legal, compliance, data science, and key business units. They provide strategic direction, approve high-risk projects, and set company-wide AI policies.

- Model Owner: For each AI model, one person is ultimately responsible for its performance, risk, and compliance, from development to retirement. This role creates a single point of accountability.

- Data Steward: This person is responsible for the data that powers the AI. They are the expert on its quality, integrity, and ethical use, ensuring it is fit-for-purpose and compliant with privacy rules.

These roles form a system of checks and balances, preventing risky, biased, or unvetted models from being deployed. Exploring existing data governance framework examples can provide ideas for structures that can be adapted for AI.

Establishing Core Policies and Processes

Once people are in place, they need a clear playbook. This is the role of policies and standardized processes. These documents are the official rules for how AI is built, deployed, and managed.

Policies must be direct and actionable, specifying rules for ethical AI principles, data handling standards, and model transparency requirements. They provide written evidence of the company's commitment.

Policies are ineffective without processes to enforce them. Standardized processes must be integrated directly into the AI lifecycle to make governance an operational habit.

- Model Intake and Risk Assessment: Before work begins, every new AI project must be registered and undergo a risk assessment. This initial screening determines the required level of scrutiny.

- Development and Validation: This involves a clear set of technical standards and testing protocols to ensure models are accurate, fair, and secure before deployment. This stage includes bias tests and security checks.

- Deployment and Monitoring: This is a continuous loop of tracking live model performance, detecting drift or decay, and having a response plan for when issues arise. Automated alerts are essential here.

A well-designed governance framework connects policy to practice. It is not a theoretical exercise but a functional system for embedding responsibility and control directly into MLOps workflows.

The goal is to make compliance the default path for technical teams. Embedding these controls can be achieved by managing your AI model portfolio with an integrated platform.

Mind the Governance-Containment Gap

Many organizations are falling behind. A 2026 forecast from a market analysis firm indicates that 54% of corporate boards will not list AI governance among their top five priorities. This leaves them lagging 26-28 percentage points behind their more proactive peers on capabilities like model visibility and containment controls.

This creates a "governance-containment gap," where companies invest in monitoring tools but lack the governance structure to act on the alerts.

A robust framework closes this gap. It ensures that when a tool flags a serious issue, like a model showing bias or a drop in performance, there is a clear process and a designated owner ready to respond immediately.
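One way to picture closing the gap is a routing step that ties every monitoring alert to a named owner. The sketch below is illustrative only: the `Alert` fields, the `MODEL_OWNERS` registry, and the `ai-review-board` fallback are hypothetical names, not part of any particular monitoring tool.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Alert:
    model_id: str
    kind: str      # e.g. "bias" or "performance_drop"
    severity: str  # "low", "medium", or "high"


# Hypothetical ownership registry: model id -> accountable owner.
MODEL_OWNERS = {"credit-scoring-v3": "jane.doe"}

# Hypothetical fallback when no owner is on record.
ESCALATION_CONTACT = "ai-review-board"


def route_alert(alert: Alert, owners: dict[str, str]) -> dict:
    """Assign an alert to the model's owner, or escalate if none exists."""
    owner: Optional[str] = owners.get(alert.model_id)
    return {
        "model_id": alert.model_id,
        "assignee": owner or ESCALATION_CONTACT,
        # An alert with no owner is itself a governance finding.
        "governance_gap": owner is None,
        "page_immediately": alert.severity == "high",
    }
```

The `governance_gap` flag makes the missing-owner case visible instead of letting the alert sit unassigned in a dashboard.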

Identifying and Managing AI-Specific Risks

Effective AI governance, risk, and compliance is not about using a generic checklist. It requires understanding the unique threats that AI systems introduce. Unlike traditional software, AI models are not static; they learn, change, and can fail in novel ways. The first step in building a solid program is understanding these risks.

These threats should be sorted into distinct categories, each with its own plan for controls and mitigation. This approach organizes the effort and helps ensure no vulnerability is overlooked.

The Four Core Categories of AI Risk

Categorizing AI risk helps teams avoid becoming overwhelmed. It creates a clear framework to identify, assess, and control risks, breaking a large problem into manageable tasks.

Here are the main risk domains to monitor:

- Operational Risk: This concerns what happens when a model fails in a live environment. Model drift is a common example, where performance degrades because live data no longer resembles the training data. For instance, a logistics model trained before the pandemic would be ineffective at predicting today's shipping patterns, leading to inefficient routes and higher costs.

- Ethical Risk: This category covers issues like unfairness, discrimination, and lack of transparency. Algorithmic bias is a significant concern, where a model consistently makes prejudiced decisions against certain groups. A hiring tool trained on historical data from a male-dominated field might unfairly penalize qualified female candidates, creating legal and reputational risks.

- Security Risk: AI systems introduce new vulnerabilities. Adversarial attacks, for example, involve feeding a model deliberately manipulated data to cause it to misbehave. A subtle alteration to a digital image could trick a self-driving car into misidentifying a stop sign, with potentially catastrophic consequences.

- Compliance Risk: This is about adhering to legal and regulatory requirements. With regulations like the EU AI Act establishing strict rules, deploying an AI system without proper documentation or human oversight can result in large fines. A bank using an unvalidated AI for credit scoring could violate fair lending laws.
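Model drift, described under Operational Risk above, can be checked numerically. A common choice is the Population Stability Index (PSI), which compares how a feature is distributed in the training data versus live data. The sketch below is a minimal pure-Python version under stated assumptions: equal-width bins taken from the training range, non-empty inputs, and a small floor to avoid dividing by zero. The function name and the bin count are illustrative.

```python
import math


def psi(expected: list[float], actual: list[float], bins: int = 10) -> float:
    """Population Stability Index between a training and a live sample.

    Assumes both lists are non-empty. Bin edges come from the
    training (expected) sample; live values outside that range
    are clamped into the first or last bin.
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant feature

    def proportions(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)
            counts[idx] += 1
        # Floor each share so empty bins do not produce log(0).
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


training = [i / 100 for i in range(100)]
live = [v + 0.5 for v in training]  # simulated shift in live data

drift_score = psi(training, live)
```

A frequently quoted rule of thumb treats a PSI below 0.1 as stable and above 0.25 as a significant shift. Those cut-offs are conventions, not guarantees, and should be tuned per model.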

From Spotting Risks to Stopping Them

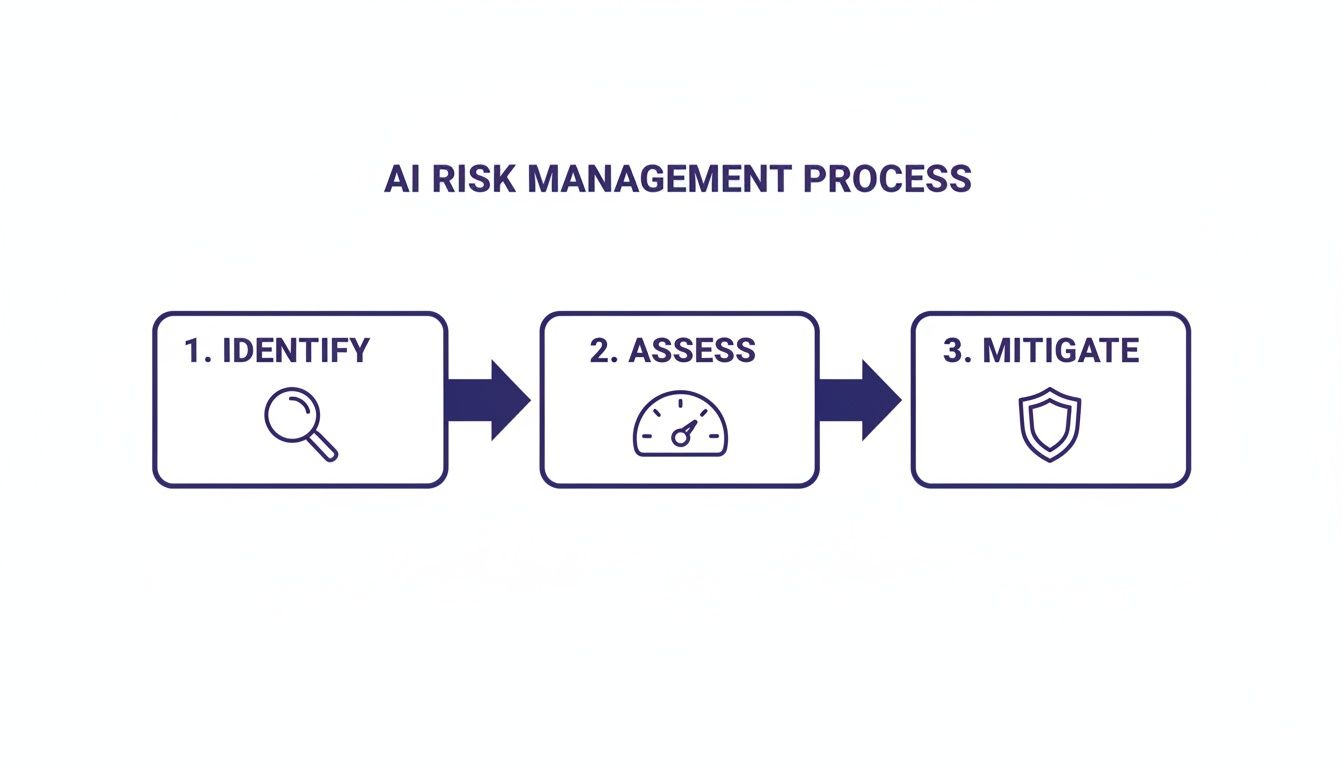

Identifying risks is not enough; a process for managing them is required. This means creating a continuous cycle of identifying, assessing, and controlling risks that is integrated into the AI development and deployment lifecycle, not added as an afterthought.

A good starting point is creating a comprehensive AI model inventory. This inventory serves as a single source of truth, tracking every model in development or production. For each model, it should document its owner, purpose, data sources, and risk profile. Without this central view, managing AI risk across a large organization is difficult. Once the inventory is in place, systematic checks can begin. You can learn more about how to assess your AI model portfolio to pinpoint and prioritize these critical risks.
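The inventory described above can start as a very small data structure. The following is a sketch, assuming an in-memory registry; the `ModelRecord` and `ModelInventory` names and the `status` field are hypothetical, and a production inventory would normally live in a database or a dedicated platform.

```python
from dataclasses import dataclass, field


@dataclass
class ModelRecord:
    model_id: str
    owner: str
    purpose: str
    data_sources: list[str]
    risk_profile: str            # e.g. "high", "medium", "low"
    status: str = "development"  # or "production"


@dataclass
class ModelInventory:
    records: dict[str, ModelRecord] = field(default_factory=dict)

    def register(self, record: ModelRecord) -> None:
        """Add a model; duplicate IDs are rejected to keep one source of truth."""
        if record.model_id in self.records:
            raise ValueError(f"{record.model_id} is already registered")
        self.records[record.model_id] = record

    def incomplete(self) -> list[str]:
        """IDs of records missing an owner, a purpose, or data sources."""
        return [
            r.model_id
            for r in self.records.values()
            if not (r.owner and r.purpose and r.data_sources)
        ]
```

The `incomplete` check gives a quick list of models that cannot yet be governed because a basic fact about them is missing.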

As AI moves from research to core business operations, the stakes increase. Companies are now working to strengthen model risk management, set up real-time monitoring, and draft contingency plans for AI failures.

This trend is supported by data. According to the Allianz Risk Barometer 2026, Artificial Intelligence has risen to become the #2 global business risk, cited by 32% of organizations. This shift highlights the pressure to balance AI innovation with strong governance. You can read more about how AI is reshaping the global risk landscape on commercial.allianz.com. Managing AI-specific risks has moved from a niche IT problem to a central pillar of enterprise risk management.

Your Practical Playbook for Implementing AI GRC

Moving from theory to action requires a clear, structured plan. An effective AI governance, risk, and compliance program is not built overnight; it is rolled out in deliberate phases that embed responsible practices into daily operations.

This process is a flexible roadmap. Each phase builds on the last, creating momentum and ensuring governance becomes a natural part of the AI lifecycle, not an obstacle.

Phase 1: Assessment and Discovery

You cannot govern what you cannot see. The first step is to get a complete and accurate picture of your organization's AI footprint. This phase is about visibility and triage, helping to focus resources where they are most needed.

The main goal is to establish a comprehensive AI model inventory. This inventory becomes a single source of truth, cataloging every model in use or development. For each one, you need to document its purpose, owner, data sources, and dependencies.

With your inventory in hand, you can begin classifying risk. Not all AI systems carry the same level of risk. A model that optimizes internal supply chains has a different risk profile than one used for credit scoring or medical diagnostics. By classifying each model based on its potential business, ethical, and regulatory impact, you can prioritize governance efforts.

- Key Question for Leaders: Do we have a complete, up-to-date inventory of every AI model operating or in development across the entire enterprise?
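The classification step in this phase can be sketched as a scoring exercise. The example below assumes each model is scored from 1 to 5 on business, ethical, and regulatory impact, and that the worst single score sets the tier. The scale, the thresholds, and the function names are illustrative assumptions rather than an established standard.

```python
from dataclasses import dataclass


@dataclass
class ImpactScores:
    business: int    # 1 (negligible) to 5 (severe)
    ethical: int
    regulatory: int


def risk_tier(scores: ImpactScores) -> str:
    """Tier is driven by the worst single dimension (assumed thresholds)."""
    worst = max(scores.business, scores.ethical, scores.regulatory)
    if worst >= 4:
        return "high"
    if worst == 3:
        return "medium"
    return "low"


def prioritize(models: dict[str, ImpactScores]) -> list[str]:
    """Model names ordered from highest to lowest governance priority."""
    def key(name: str) -> tuple[int, int]:
        s = models[name]
        worst = max(s.business, s.ethical, s.regulatory)
        total = s.business + s.ethical + s.regulatory
        return (worst, total)  # total breaks ties between equal worst scores

    return sorted(models, key=key, reverse=True)


portfolio = {
    "supply_chain_optimizer": ImpactScores(business=3, ethical=1, regulatory=1),
    "credit_scoring": ImpactScores(business=4, ethical=5, regulatory=5),
}
ranked = prioritize(portfolio)
```

Using the worst dimension rather than an average keeps a model with one severe exposure from being diluted by low scores elsewhere.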

Phase 2: Framework Design

Once you know what AI assets you have, you can design the rules to manage them. This phase involves building the core components of your GRC framework—the roles, policies, and committees that provide structure and accountability. This is where you translate high-level principles into documented procedures.

Start by establishing an AI Review Board or a similar cross-functional oversight committee. This group should include leaders from legal, compliance, data science, IT, and key business units. Their role is to provide strategic direction, review and approve high-risk AI projects, and act as the final authority on AI governance matters.

Next, draft your core AI policies. These should be clear, enforceable rules that cover critical areas.

- Acceptable Use Policy: Defines what AI can and cannot be used for within the organization.

- Data Governance Policy: Outlines the standards for data quality, privacy, and security for training and operating AI models.

- Model Risk Management Policy: Details the required processes for model validation, testing, and continuous performance monitoring.

These documents form the backbone of your AI governance, risk, and compliance program, making expectations clear for all teams involved.

Phase 3: Operationalization

A framework is only useful when put into practice. The operationalization phase is about integrating your new governance controls directly into the MLOps (Machine Learning Operations) pipeline. The goal is to make compliance the path of least resistance for your technical teams.

This means embedding GRC checkpoints at key stages of the AI lifecycle. For instance, a mandatory risk assessment must be completed before a new model project can begin. Similarly, a model cannot be deployed into production without passing a series of automated fairness and security tests.

This process can be summarized as a core risk management flow with three steps: identify, assess, and mitigate.

This loop becomes a repeatable, often automated, part of the deployment process. It ensures no high-risk model is deployed without proper oversight.
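The checkpoints described in this phase can be expressed as a gate that a pipeline calls before promotion. This is a sketch under assumptions: the `ReleaseCandidate` structure, the `REQUIRED_TESTS` names, and the `gate` function are hypothetical and not tied to any specific MLOps tool.

```python
from dataclasses import dataclass, field


@dataclass
class ReleaseCandidate:
    model_id: str
    risk_assessment_done: bool
    # Test name -> passed? (results produced elsewhere in the pipeline)
    test_results: dict[str, bool] = field(default_factory=dict)


# Hypothetical names for the automated checks required before deployment.
REQUIRED_TESTS = ("fairness", "security")


def gate(candidate: ReleaseCandidate) -> list[str]:
    """Return the reasons deployment is blocked; an empty list means go."""
    blockers = []
    if not candidate.risk_assessment_done:
        blockers.append("risk assessment missing")
    for test in REQUIRED_TESTS:
        # A test that never ran counts the same as a failed test.
        if not candidate.test_results.get(test, False):
            blockers.append(f"{test} test not passed")
    return blockers
```

In a CI/CD job, a non-empty result would fail the deployment step and the list of blockers would go into the audit log.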

Synthetic Example: A multinational bank implemented this playbook to govern its new AI-powered fraud detection system. By integrating automated bias checks and drift monitoring directly into their MLOps pipeline, they achieved a 99% audit pass rate for the high-risk model and reduced their compliance reporting time by 30% compared to their Q4 baseline. This shows how operationalizing GRC can produce measurable efficiency gains.

Phase 4: Continuous Improvement

AI governance is an ongoing program that must adapt. The final phase focuses on monitoring, learning, and refining your GRC framework over time. The AI landscape and its associated risks are constantly changing, and your governance must keep pace.

Establish a formal incident response protocol specifically for AI-related events. When a model behaves unexpectedly or shows bias, your team needs a clear plan to detect, contain, and resolve the issue quickly. This plan must define roles, communication channels, and escalation paths.

Regular audits and reviews are also critical. Schedule periodic assessments of your high-risk models and the overall GRC program to identify gaps and areas for improvement. These audits provide assurance to the board and regulators that your controls are working as intended. This iterative approach ensures your AI governance, risk, and compliance framework remains robust and effective long after its initial rollout.

AI GRC: Your Questions Answered

When implementing AI governance, risk, and compliance (GRC), several practical questions often arise. Here are straightforward answers to common challenges business leaders face.

We Have Limited Resources. Where Do We Even Begin with AI Governance?

With limited resources, focus on the highest risks first.

The initial step is to know what AI systems you have. Create a complete inventory of every AI model your organization is using or testing. You cannot govern what you do not see.

Once you have this list, classify each model by its potential impact. A model that determines customer credit scores carries a much higher risk than one that optimizes an internal warehouse route.

Select the two or three highest-risk systems and focus on them. For these models, you should:

- Document what they do and the data they use.

- Assign a single person as the clear owner, responsible for the model's lifecycle.

- Run a baseline assessment to check for obvious bias or fairness issues.

This focused approach addresses your most critical vulnerabilities first and provides the greatest risk reduction for the effort invested.
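For the baseline bias check, one simple starting point is to compare selection rates across groups. The sketch below computes a disparate impact ratio, the lowest group selection rate divided by the highest. The function names and the sample data are illustrative, and this single metric is only a first screen, not a full fairness assessment.

```python
def selection_rates(outcomes: list[tuple[str, bool]]) -> dict[str, float]:
    """Share of positive decisions per group, from (group, decision) pairs."""
    totals: dict[str, int] = {}
    selected: dict[str, int] = {}
    for group, positive in outcomes:
        totals[group] = totals.get(group, 0) + 1
        selected[group] = selected.get(group, 0) + int(positive)
    return {g: selected[g] / totals[g] for g in totals}


def disparate_impact_ratio(outcomes: list[tuple[str, bool]]) -> float:
    """Lowest group selection rate divided by the highest."""
    rates = selection_rates(outcomes)
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group received a positive decision
    return min(rates.values()) / highest


# Made-up decisions: group A selected 5 of 10, group B selected 3 of 10.
decisions = (
    [("A", True)] * 5 + [("A", False)] * 5
    + [("B", True)] * 3 + [("B", False)] * 7
)
ratio = disparate_impact_ratio(decisions)
```

Under the widely used "four-fifths" rule of thumb, a ratio below 0.8 is treated as a signal worth investigating. Here the ratio is about 0.6, so this made-up example would be flagged for review.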

How Can I Justify the Cost? What's the ROI on an AI GRC Program?

Measuring the return on investment for an AI governance, risk, and compliance program involves both protecting the bottom line and creating new value. This can be tracked with financial and operational metrics.

First, consider cost avoidance. Calculate the potential fines from regulations like the EU AI Act or the revenue loss if a critical model fails. This number represents the direct financial impact your GRC program is designed to prevent.

Next, identify efficiency gains. A solid GRC program can automate many manual audit and compliance reporting tasks. A reduction of 15% to 25% in audit preparation time is a realistic target.

Finally, good governance builds trust, which allows you to deploy new AI models faster and turn innovation into business growth. Track the time it takes for a properly governed model to move from development to production.
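The cost-avoidance and efficiency figures above reduce to simple arithmetic. The sketch below is a rough estimate, not a financial model. It assumes a large enterprise, for which the EU AI Act's top penalty band is the higher of €35 million or 7% of worldwide annual turnover. The function names and input figures are hypothetical.

```python
def max_fine_eur(global_annual_turnover_eur: float) -> float:
    """Upper bound of the top EU AI Act penalty band.

    Assumption: the organization is a large enterprise, so the higher
    of the fixed amount and the turnover percentage applies.
    """
    return max(35_000_000.0, 0.07 * global_annual_turnover_eur)


def audit_hours_saved(baseline_hours: float, reduction: float) -> float:
    """Hours saved per audit cycle for a fractional reduction (0.15 = 15%)."""
    return baseline_hours * reduction


# Hypothetical inputs for illustration only.
exposure = max_fine_eur(1_000_000_000)        # about EUR 70 million
low_saving = audit_hours_saved(400, 0.15)     # about 60 hours
high_saving = audit_hours_saved(400, 0.25)    # about 100 hours
```

The exposure figure is a ceiling, not an expected loss. A more careful estimate would weight it by the likelihood of an enforcement action.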

Wait, Isn't This Just MLOps? What's the Difference?

MLOps and AI Governance are related but distinct concepts.

MLOps is the technical framework. It is the set of practices that automates the deployment, monitoring, and maintenance of machine learning models. It is the engine that makes the system run.

AI Governance, on the other hand, is the complete control system. It is the steering wheel, the brakes, and the navigation that ensures the system operates safely, follows rules, and achieves its intended purpose without causing harm.

MLOps is the how—how to run a model efficiently. AI Governance is the what and why—what rules the model must follow and why it aligns with business and ethical standards. Both are necessary to build an AI strategy that is not just powerful, but also responsible and sustainable.

At DSG.AI, we provide an integrated suite of Responsible AI and GRC products to help you build, monitor, and manage a robust governance framework that meets emerging regulatory standards. Learn more about our enterprise-grade AI solutions.