Written by:

Editorial Team

Artificial intelligence (AI) inventory management uses machine learning to analyze data, predict future demand, and automate ordering decisions. This approach allows businesses to move beyond simple historical averages and create a dynamic inventory plan. The result is a reduction in both stockouts and excess inventory.

Why Traditional Inventory Systems No Longer Work

Empty shelves for popular items and dusty corners filled with slow-moving products are common inventory challenges. This imbalance between too much and not enough signals an outdated inventory system.

Legacy methods like spreadsheets and fixed reorder points were designed for a more predictable business environment. They rely on historical data, assuming future demand will mirror the past. This model is ineffective in today's volatile supply chains.

The High Cost of Small Errors

A small forecasting error can have a significant financial impact. Consider this synthetic example of a national consumer goods company.

- The Scenario: An existing system forecasts demand for a popular snack product based on the previous year's sales. It fails to account for a competitor's new promotional campaign and a sudden social media trend. As a result, the forecast underestimates demand by 5%.

- The Impact: This miscalculation leads to a stockout during a peak sales week. The company loses an estimated $1.5 million in sales for that single product and damages its brand reputation.

- The Aftermath: To prevent another stockout, the team orders excess safety stock for the next quarter. This reaction results in $800,000 in additional carrying costs for slow-moving inventory.

This example illustrates how rule-based systems struggle with market disruptions. They are too rigid to anticipate changes before they occur.

An AI-powered approach changes the dynamic. Instead of only reacting to past sales, it predicts future demand by analyzing hundreds of variables, such as weather forecasts, competitor pricing, and shipping lane delays.

The objective is to build a more resilient and profitable supply chain. Using AI inventory management replaces guesswork with data-driven precision, turning an operational challenge into a competitive advantage.

The AI Models That Power Smart Inventory Decisions

AI inventory management relies on specific machine learning models designed to solve business problems. These algorithms analyze complex data to provide predictive insights.

Traditional forecasting is like driving while only looking in the rearview mirror. You can see where you have been, but you cannot see what is ahead. AI models are like a GPS with live traffic updates, analyzing the entire system to find the best path forward.

Instead of only reacting to last month's sales, these systems analyze hundreds of variables to anticipate market changes. The three core models are demand forecasting, replenishment optimization, and anomaly detection.

Advanced Demand Forecasting

Effective AI inventory management begins with accurate demand forecasting. AI models analyze large datasets to identify subtle patterns that are difficult for humans to detect.

These models consider a wide range of data:

- Internal Data: Point-of-sale (POS) records, sales history, seasonality, and the impact of past promotions.

- External Signals: Competitor pricing, local weather forecasts, economic trends, and social media activity.

- Product Attributes: Product lifecycle stage and price sensitivity.

By evaluating these variables together, AI can generate more accurate forecasts. Industry analyses commonly report that AI improves forecast accuracy by 20% to 30% over a 12-month period compared to baseline legacy methods, although results depend on data quality and product mix. This improvement directly reduces stockouts and overstock, and the added granularity enables a business to shift from quarterly planning to near-real-time demand sensing.

Replenishment and Safety Stock Optimization

Replenishment optimization models calculate the most profitable reorder points and quantities for each stock keeping unit (SKU). These algorithms balance competing objectives:

- Reduce Carrying Costs: Inventory ties up capital and incurs costs for storage, insurance, and potential obsolescence.

- Maintain Service Levels: Out-of-stock items lead to lost sales and decreased customer loyalty.

- Account for Variability: The models incorporate supplier lead times and demand volatility to calculate optimal safety stock levels.
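The variability trade-off above can be made concrete with a textbook safety stock calculation. The sketch below is a minimal illustration, not the method any particular AI system uses: it assumes daily demand and lead time are independent and roughly normally distributed, and the function names and sample numbers are hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def safety_stock(service_level, mean_daily_demand, sd_daily_demand,
                 mean_lead_time_days, sd_lead_time_days):
    """Safety stock under independent, normally distributed demand and lead time."""
    # z-score for the target cycle service level (e.g. 0.95 -> about 1.645)
    z = NormalDist().inv_cdf(service_level)
    # Variance of demand over the lead time: demand noise plus lead-time noise.
    demand_var = mean_lead_time_days * sd_daily_demand ** 2
    lead_time_var = (mean_daily_demand ** 2) * sd_lead_time_days ** 2
    return z * sqrt(demand_var + lead_time_var)


def reorder_point(mean_daily_demand, mean_lead_time_days, safety):
    """Expected demand during the lead time plus the safety buffer."""
    return mean_daily_demand * mean_lead_time_days + safety


# Illustrative numbers only (not taken from a real SKU).
ss = safety_stock(0.95, mean_daily_demand=100, sd_daily_demand=20,
                  mean_lead_time_days=4, sd_lead_time_days=1)
rop = reorder_point(100, 4, ss)
print(f"safety stock: {ss:.0f} units, reorder point: {rop:.0f} units")
```

Raising the service level or the lead-time variability increases the buffer, which is the carrying-cost versus availability trade-off a replenishment model tunes per SKU.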

This capability is particularly useful for businesses that need to improve inventory forecasting across multiple channels, where demand signals can be fragmented.

Synthetic Example: A national grocery chain uses an AI replenishment model for perishable dairy products. The algorithm analyzes store-level sales data, local weather, and supplier delivery schedules, then automatically adjusts daily orders for each store. In this hypothetical scenario, a Q1 pilot across 50 stores yields an 8% to 15% reduction in spoilage and a 5% increase in on-shelf availability compared to the Q4 baseline.

Anomaly Detection for Supply Chain Resilience

Anomaly detection models act as a constant monitoring system for your supply chain. These algorithms scan data streams, such as shipping manifests and supplier communications, to identify unusual patterns. This allows teams to address potential disruptions before they escalate.

These models are trained to detect early warning signs, including:

- An unexpected increase in lead time from a key supplier.

- Unusual shipping delays on a primary logistics route.

- A sudden decrease in product quality reported from a specific factory.

When the system flags an anomaly, it sends an alert to the supply chain team. This enables them to find an alternate supplier, reroute a shipment, or adjust a production plan. By identifying these issues early, you build resilience into your operations.
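As a rough illustration of the first warning sign (an unexpected increase in supplier lead time), the sketch below flags values that sit far from a trailing median. It uses a simple robust z-score based on the median absolute deviation, which stands in here for whatever detector a production system would use. The window size, threshold, and sample data are assumptions.

```python
from statistics import median


def flag_lead_time_anomalies(lead_times, window=8, threshold=3.5):
    """Return indices whose lead time deviates strongly from the trailing window."""
    flagged = []
    for i in range(window, len(lead_times)):
        history = lead_times[i - window:i]
        center = median(history)
        mad = median([abs(x - center) for x in history])
        # 1.4826 rescales MAD to be comparable with a standard deviation.
        scale = 1.4826 * mad
        if scale == 0:
            scale = 1.0  # constant history: fall back to a one-day scale
        if abs(lead_times[i] - center) / scale > threshold:
            flagged.append(i)
    return flagged


# Hypothetical lead times in days for consecutive purchase orders.
lead_times = [5, 6, 5, 5, 6, 5, 6, 5, 5, 12, 6]
alerts = flag_lead_time_anomalies(lead_times)
print(alerts)  # indices of orders worth a planner's attention
```

Using the median rather than the mean keeps a single late shipment from distorting the baseline that later orders are compared against.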

Laying the Groundwork for AI Success

An AI model's effectiveness depends on the quality of its data and the system it operates on. A solid technical foundation is the most critical factor for successful AI inventory management. A well-designed system integrates with and enhances existing operations.

Designing a Scalable AI Architecture

An AI project is not a one-time software purchase; it is an integrated capability that must communicate with core business systems. A modern, tech-agnostic architecture provides flexibility and avoids vendor lock-in.

A modular architecture includes several components:

- Data Ingestion Layer: This component pulls raw data from various sources, including Enterprise Resource Planning (ERP) and Warehouse Management Systems (WMS).

- Data Lake or Warehouse: This central repository stores raw data, which is essential for historical trend analysis and model training.

- AI/ML Platform: This is where algorithms are built, trained, and tested. It provides the necessary computing power and tools.

- Integration and API Layer: This layer pushes the model's output, such as a forecast or replenishment order, back into operational systems for execution.

This modular design allows for individual components to be upgraded without overhauling the entire system.
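One way to picture this modularity is to treat each layer as an interchangeable function behind a small interface. The sketch below is purely illustrative: `run_pipeline`, the `Row` type, and the stand-in components are hypothetical names, and a real system would call actual ERP, storage, and model services.

```python
from typing import Callable, Dict, List

Row = Dict[str, float]


def run_pipeline(
    ingest: Callable[[], List[Row]],
    store: Callable[[List[Row]], None],
    predict: Callable[[List[Row]], List[Row]],
    publish: Callable[[List[Row]], None],
) -> List[Row]:
    """Wire the four layers together; each argument can be swapped independently."""
    raw = ingest()          # data ingestion layer (ERP and WMS extracts)
    store(raw)              # data lake or warehouse
    outputs = predict(raw)  # AI/ML platform
    publish(outputs)        # integration and API layer
    return outputs


# Stand-in components for illustration only.
lake: List[Row] = []
sent: List[Row] = []

result = run_pipeline(
    ingest=lambda: [{"sku_id": 1.0, "units_sold": 40.0}],
    store=lake.extend,
    predict=lambda rows: [
        {"sku_id": r["sku_id"], "forecast": r["units_sold"] * 1.1} for r in rows
    ],
    publish=sent.extend,
)
print(result)
```

Replacing the `predict` argument with a better model, for example, leaves ingestion, storage, and publishing untouched.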

The Data Streams That Fuel Predictive Accuracy

High-quality, granular data is essential for any AI inventory system. The more comprehensive the data inputs, the more accurate the outputs will be.

A successful implementation requires a shift from data collection to strategic data curation. The goal is to build a complete view of all factors that influence inventory movement.

Several key data types are required:

- Transactional Data: Detailed POS data, order histories, and returns information, broken down by SKU, location, and time.

- Operational Data: Current stock levels, supplier lead times, inbound shipment schedules, and production calendars from your WMS and ERP.

- External Signals: Competitor pricing data, local weather forecasts, regional economic indicators, and social media data about your products.

Once acquired, this data must be prepared for the AI model. A sensible first step is to assess your organization's AI readiness.

From Raw Data to Powerful Features

Raw data alone is not useful to a machine learning model. Feature engineering is the process of transforming raw inputs into predictive signals, or "features."

For example, a log of raw sales data can be transformed into more useful features:

- Moving Averages: A 7-day or 30-day rolling sales average helps to smooth out random daily fluctuations.

- Seasonality Indicators: A binary flag can inform the model of an upcoming holiday or seasonal event.

- Promotional Impact: A binary feature (1 or 0) can indicate whether a product was on promotion during a specific week.

This process involves cleaning, combining, and reshaping data to provide the AI with the clearest possible picture. While time-consuming, it is essential for building a foundation that delivers results.
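The three feature types listed above can be sketched in a few lines. This is a minimal example using only the standard library. The function name, field names, seven-day holiday lookahead, and sample data are assumptions made for illustration.

```python
from datetime import date, timedelta


def build_features(daily_sales, start, promo_days, holidays, window=7):
    """Turn a raw list of daily unit sales into one feature row per day."""
    rows = []
    for i, units in enumerate(daily_sales):
        day = start + timedelta(days=i)
        # Trailing window excludes the current day, so the feature
        # never leaks the value the model is trying to predict.
        past = daily_sales[max(0, i - window):i]
        rows.append({
            "date": day,
            "units": units,
            "rolling_mean": sum(past) / len(past) if past else None,
            "is_promo": int(day in promo_days),
            "holiday_within_7d": int(
                any(0 <= (h - day).days <= 7 for h in holidays)
            ),
        })
    return rows


# Hypothetical five days of sales leading up to a holiday.
rows = build_features(
    daily_sales=[10, 12, 11, 30, 13],
    start=date(2024, 12, 20),
    promo_days={date(2024, 12, 23)},
    holidays=[date(2024, 12, 25)],
)
for row in rows:
    print(row)
```

The first day has no history, so its rolling mean is left empty rather than filled with a guess. How to handle such gaps is itself a feature engineering decision.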

An Enterprise Roadmap From Pilot to Production

Implementing an AI inventory management system is a phased journey. Moving from a pilot to an enterprise-wide solution in clearly defined stages de-risks the project and helps ensure the final system meets business needs.

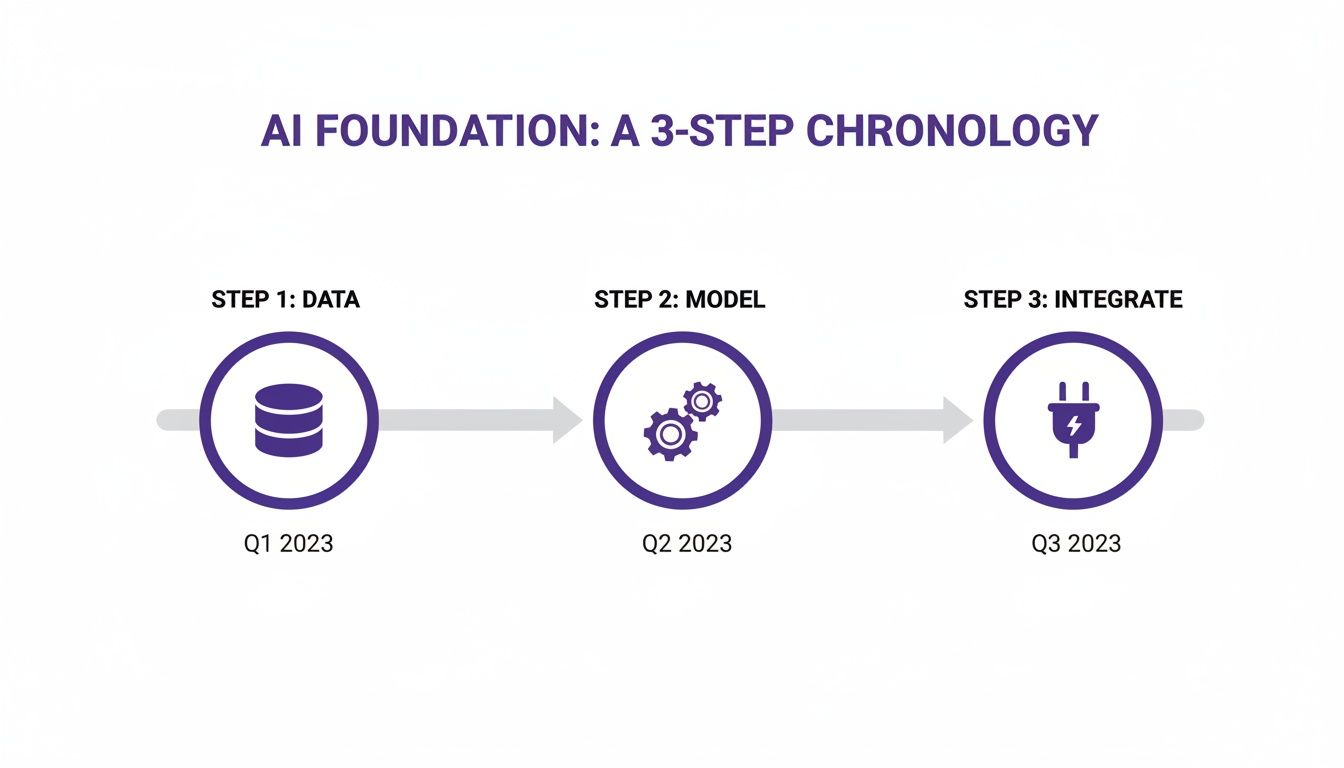

A typical enterprise project can begin with a six-week pilot designed to deliver results quickly. This is often broken down into three two-week sprints: Discovery & Data, Model Development, and Integration & Deployment.

The sprints follow a logical sequence and depend on one another: secure quality data, build a robust model on that data, and then integrate the model into existing systems. Business value is only realized once that integration is complete.

Weeks 1-2: Discovery and Data Assessment

The first two weeks focus on understanding requirements and establishing the technical groundwork. Workshops with stakeholders from operations, finance, and IT are conducted to define a tightly scoped pilot project with clear success metrics. For example, a pilot could focus on optimizing inventory for a single high-value product category in a specific region.

Key activities during this phase include:

- Defining the Pilot Scope: Identify a specific, high-impact business problem, such as reducing spoilage for perishable goods by 10% compared to the previous quarter's baseline.

- Aligning on Success Metrics: Agree on the key performance indicators (KPIs) that will define success, such as a target reduction in carrying costs or an improvement in on-shelf availability.

- Identifying Data Sources: Map all necessary data streams, from ERP transaction logs to external supplier data, and identify any gaps.

- Conducting a Technical Feasibility Study: Assess the quality and accessibility of data to confirm project viability.

The outcome of this phase is a comprehensive project charter that outlines the pilot's objectives, scope, timeline, and data requirements.

Weeks 3-4: Iterative Model Development and Validation

The focus then shifts to building and refining the machine learning model. This is an iterative process. Data scientists and ML engineers start with a baseline model and systematically improve its performance.

An initial model might achieve a 15% reduction in forecast error over the existing method. By engineering new features or testing different algorithms, this could be improved to 25%. Regular check-ins with stakeholders are held to review progress and ensure the model's logic is sound.
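To make "reduction in forecast error" measurable, a team needs an agreed error metric and a backtest against the existing method. The sketch below uses weighted absolute percentage error (WAPE) as one reasonable choice. The metric choice and all numbers are illustrative assumptions, picked so the two candidate models reproduce the 15% and 25% figures above.

```python
def wape(actuals, forecasts):
    """Weighted absolute percentage error: total absolute error over total demand."""
    total = sum(abs(a) for a in actuals)
    if total == 0:
        raise ValueError("actuals sum to zero; WAPE is undefined")
    return sum(abs(a - f) for a, f in zip(actuals, forecasts)) / total


def error_reduction(actuals, baseline, candidate):
    """Relative improvement of the candidate forecast over the baseline forecast."""
    base = wape(actuals, baseline)
    if base == 0:
        raise ValueError("baseline is already perfect; reduction is undefined")
    return (base - wape(actuals, candidate)) / base


# Hypothetical weekly demand and three competing forecasts.
actuals = [100, 120, 80, 100]
legacy = [90, 100, 100, 110]      # existing method
first_model = [92, 105, 95, 87]   # initial ML model
tuned_model = [95, 110, 90, 80]   # after feature engineering

first_gain = error_reduction(actuals, legacy, first_model)
tuned_gain = error_reduction(actuals, legacy, tuned_model)
print(f"initial model: {first_gain:.0%}, tuned model: {tuned_gain:.0%}")
```

Computing both numbers on the same held-out weeks keeps the comparison fair, because every model is scored against demand it never saw during training.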

By the end of this phase, you have a validated, high-performing model that has been rigorously tested against your historical data. It is ready for integration, having outperformed existing methods in backtests.

Weeks 5-6: Integration, Deployment, and Handover

In the final two weeks, the validated AI model is connected to live operational systems. The goal is to move it from a development environment to a production setting. This typically involves setting up API connections that allow the model to pull fresh data from the WMS and push its outputs, such as optimized order quantities, back into the ERP.

After deployment, continuous monitoring is established. Dashboards are configured to track the model's accuracy and business impact, a critical part of our integrated AI monitoring solutions.

The project concludes with training and documentation for your internal teams, empowering them for long-term ownership. This includes protocols for retraining the model as market conditions change.

AI Inventory Management Implementation Timeline (DSG.AI 6-Week Model)

| Phase | Weeks | Key Activities | Primary Outcome |
|---|---|---|---|
| Discovery | 1-2 | Stakeholder workshops, scope definition, KPI alignment, data source mapping, technical feasibility assessment. | A signed-off Project Charter with clear objectives and a solid data plan. |
| Development | 3-4 | Data ingestion & cleaning, feature engineering, iterative model building, backtesting, and performance validation. | A Validated ML Model proven to outperform baseline methods on historical data. |
| Deployment | 5-6 | API integration, production deployment, performance monitoring dashboard setup, team training, and handover. | A Live, Operational AI System delivering business value, with a fully enabled internal team. |

This timeline is designed to move from concept to a value-generating asset in six weeks and to prepare the ground for enterprise-wide expansion.

Measuring Real-World ROI and Ensuring AI Governance

Demonstrating the effectiveness of an AI inventory system and maintaining control over it are critical. The connection between the AI's recommendations and the company's bottom line must be clear.

At the same time, giving an algorithm control over millions of dollars in inventory introduces new risks. Good governance ensures the system is trustworthy, operating fairly, transparently, and predictably.

Defining and Tracking Key Performance Indicators

To justify the investment, you must track relevant business KPIs. While model accuracy is important, the true measures of success are financial and operational efficiency improvements.

Key metrics to monitor include:

- Inventory Turnover: This measures how quickly stock is sold and replaced. A higher number generally indicates more efficient inventory management.

- Gross Margin Return on Inventory (GMROI): This KPI measures the profit generated for every dollar invested in inventory.

- Reduction in Spoilage and Scrap: For perishable or short-lifecycle products, this is a direct measure of ROI. A realistic goal is an 8% to 15% reduction in scrap compared to a pre-AI baseline.

- Stockout Rate: This measures the frequency of out-of-stock events. Improved forecasting and replenishment should lower this rate.

These metrics provide a quantifiable story about the return on investment, linking the AI's decisions to financial results.
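For reference, these KPIs reduce to simple ratios. The sketch below uses common textbook definitions. The stockout rate definition (out-of-stock observations over total shelf checks) is one of several in use, and all figures are made up for illustration.

```python
def inventory_turnover(cogs, avg_inventory_cost):
    """Cost of goods sold divided by average inventory value at cost."""
    return cogs / avg_inventory_cost


def gmroi(gross_margin, avg_inventory_cost):
    """Gross margin earned per unit of currency invested in inventory."""
    return gross_margin / avg_inventory_cost


def stockout_rate(stockout_observations, total_observations):
    """Share of SKU-location checks that found the item out of stock (assumed definition)."""
    return stockout_observations / total_observations


def relative_reduction(baseline, current):
    """Fractional improvement against a pre-AI baseline, e.g. for spoilage cost."""
    return (baseline - current) / baseline


# Illustrative annual figures, not real company data.
turnover = inventory_turnover(cogs=1_200_000, avg_inventory_cost=300_000)
margin_return = gmroi(gross_margin=450_000, avg_inventory_cost=300_000)
oos = stockout_rate(stockout_observations=30, total_observations=1_000)
spoilage_cut = relative_reduction(baseline=50_000, current=44_000)

print(f"turnover {turnover:.1f}x, GMROI {margin_return:.2f}, "
      f"stockout rate {oos:.1%}, spoilage reduction {spoilage_cut:.0%}")
```

Fixing these definitions before the pilot starts matters more than the code itself, since a KPI measured differently before and after go-live cannot demonstrate ROI.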

The Critical Role of Responsible AI Governance

As AI becomes more integrated into operations, oversight is essential. A framework for Responsible AI ensures that models are transparent, fair, and auditable.

The core principle of AI governance is the ability to explain why a model made a specific recommendation. A lack of explainability means a loss of control over a critical business process.

This involves establishing clear policies for model development, monitoring, and accountability. The need for such policies grows with adoption: according to market analysis by Mordor Intelligence, the AI in supply chain market is projected to grow from $7.38 billion in 2024 to $27.23 billion by 2029. This growth is attributed to AI's ability to reduce stockouts by up to 30% and increase productivity by 25% year-over-year.

A key part of AI governance is identifying and correcting bias. You can learn about available AI model bias detection tools to help ensure your system operates ethically. Integrated monitoring provides visibility into the model's operations, which is essential for maintaining compliance and trust.

For more information, see how integrated monitoring ensures AI solution visibility.

Turning Inventory Into a Competitive Advantage

We have covered the limitations of legacy inventory systems and the capabilities of machine learning models. Success depends on a solid data foundation and a structured implementation plan.

It is time to evaluate how AI can reshape your operations. The right approach can guide you through building an enterprise-grade solution that delivers a competitive edge.

An AI-driven supply chain provides the ability to anticipate market shifts, optimize capital, and meet customer demand. It transforms a cost center into a source of strategic value.

A correctly executed AI project delivers more than a new tool; it provides a clear path to value and full system ownership. It is about rethinking how inventory drives the business forward. By turning raw data into automated decisions, you build an operation that is more efficient, intelligent, and prepared for the future.

Your Top Questions About AI Inventory Management, Answered

When leaders explore AI for inventory management, they often move from theoretical questions to practical implementation concerns. Here are answers to common questions from enterprise teams.

How Does an AI System Actually Connect to Our ERP?

An AI inventory system acts as an intelligence layer on top of your existing ERP, such as SAP or Oracle. The connection is made through secure APIs that allow for two-way data flow.

The AI platform pulls the data it needs (historical sales, stock levels, and supplier lead times) from your ERP. After the algorithms generate an optimized forecast or replenishment orders, these recommendations are sent back to the ERP, where your team can review and execute them within their familiar system. This process enhances existing workflows without disrupting them.
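The round trip can be sketched without committing to any specific ERP API. In the example below, the record layout, field names, and the `pending_review` status are hypothetical. A real integration would use the endpoints and schemas your ERP vendor documents, and the ordering rule is deliberately simplistic.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class ReplenishmentRecommendation:
    sku: str
    location: str
    order_qty: int
    status: str = "pending_review"  # planners approve or reject inside the ERP


def recommend(snapshot, forecast_units):
    """Order enough to cover forecast demand given stock on hand and on order."""
    gap = forecast_units - snapshot["on_hand"] - snapshot["on_order"]
    return ReplenishmentRecommendation(
        sku=snapshot["sku"],
        location=snapshot["location"],
        order_qty=max(0, gap),  # never recommend a negative order
    )


# Hypothetical record, shaped as it might arrive from an ERP pull.
snapshot = {"sku": "MILK-1L", "location": "STORE-042", "on_hand": 40, "on_order": 20}

rec = recommend(snapshot, forecast_units=150)
payload = json.dumps(asdict(rec))  # body that would be sent back to the ERP
print(payload)
```

Keeping the recommendation in a review state, rather than placing the order directly, reflects the human-in-the-loop workflow described above.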

What Kind of Team Do We Need to Run This Day-to-Day?

You do not need to hire a full team of machine learning specialists to manage the system post-launch. While data scientists and ML engineers handle the initial build, your team's role shifts to strategic oversight.

The required skills include:

- Subject Matter Experts: Your supply chain planners and inventory managers remain critical. They apply business context to the AI's recommendations, manage exceptions, and make final strategic decisions.

- A "Model Monitor": It is beneficial to have a data analyst or BI professional monitor the model's performance dashboards. Their role is not to rebuild algorithms but to identify trends and alert the team if the model requires retraining due to significant market shifts.
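A simple rule can support the model monitor in deciding when to raise a retraining alert. The sketch below is one possible rule, not a standard: it fires when recent forecast error stays above the error measured at validation for several consecutive weeks. The tolerance, patience, and sample values are assumptions.

```python
def needs_retraining(weekly_errors, validated_error, tolerance=1.25, patience=3):
    """True when the last `patience` weeks all exceed validated error times tolerance."""
    if len(weekly_errors) < patience:
        return False  # not enough history to judge a sustained shift
    limit = validated_error * tolerance
    return all(err > limit for err in weekly_errors[-patience:])


# Hypothetical weekly forecast error (for example WAPE) since go-live.
sustained_drift = [0.11, 0.12, 0.15, 0.16, 0.17]
single_spike = [0.11, 0.16, 0.12, 0.15, 0.16]

print(needs_retraining(sustained_drift, validated_error=0.11))
print(needs_retraining(single_spike, validated_error=0.11))
```

Requiring several consecutive bad weeks avoids retraining on a one-off spike, such as a single promotion week, while still catching a lasting market shift.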

Realistically, How Long Until We See a Return on This?

A tangible return on investment can often be seen within the first quarter after launching a focused pilot project. A six-week pilot is designed to demonstrate value quickly on a small scale.

For instance, a pilot aiming for a 10% reduction in spoilage for one product category can show a clear financial benefit weeks after go-live. A successful pilot builds momentum for scaling the solution to other product lines or warehouses. Broader improvements to KPIs like inventory turnover and GMROI typically become evident within six to nine months.

Ready to turn your inventory data into a real competitive advantage? The team at DSG.AI specializes in building and deploying enterprise-grade AI solutions that deliver measurable results. Explore our successful AI projects and see how we can help you build a more resilient and profitable supply chain.