Written by:

Editorial Team

As a CIO, you're tasked with leveraging technology for a competitive advantage. You see consumer AI tools like ChatGPT, but you need to understand how to deploy secure, scalable enterprise AI solutions that solve specific business problems and deliver a measurable return on investment.

Enterprise AI is not a consumer app. It is a system engineered for security, scalability, and integration with core platforms like your ERP and CRM. Its purpose is to convert large datasets into actionable intelligence that improves operational efficiency, reduces costs, or increases revenue.

Defining Real-World AI Solutions for Enterprise

An enterprise AI solution is an operational capability, not just a piece of software. It should function like a new, intelligent component of your business process. For example, enterprise-ready platforms such as the Azure OpenAI Service provide a secure foundation for embedding AI models directly into core operations, rather than running them in a separate, standalone tool.

This changes the focus from the technology itself to the business problem you need to solve. The objective is not to adopt the latest AI trend but to build and deploy production-grade systems that deliver quantifiable results.

From Hype to Operational Reality

A functional AI system requires more than an algorithm. It needs a solid foundation built on several components. Without this structure, an AI model will not deliver consistent value in a production environment.

These components work together to turn an AI concept into a dependable business asset. Let's break down the pillars of a successful enterprise AI system.

Core Components of an Enterprise AI Solution

| Component | Business Implication | Example Metric (Synthetic) |
|---|---|---|
| Robust Architecture | Ensures the system handles enterprise-scale data and integrates without disrupting existing workflows. | System uptime of 99.95%; processes 10,000 transactions per minute under peak load. |
| Clean Data Pipelines | Ensures AI models are trained on accurate data, leading to reliable outputs. | Data validation error rate below 0.1%; 98% of data available for processing within 5 minutes of ingestion. |
| Clear Governance | Establishes rules for ethical use, mitigates bias, and ensures compliance with regulations like the GDPR or EU AI Act. | 100% of high-risk models audited quarterly; 99% pass rate on the latest compliance checklist. |

These pillars separate a proof-of-concept from a true enterprise-grade AI solution.
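To make the architecture and data metrics concrete, the sketch below converts an uptime target into an allowed-downtime budget and checks a data validation error rate against a threshold. The function names are hypothetical, and the thresholds simply reuse the synthetic figures from the table; a 365-day year is assumed.

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600 minutes; ignores leap years


def downtime_budget_minutes(uptime_target: float, period_minutes: int = MINUTES_PER_YEAR) -> float:
    """Maximum downtime (in minutes) allowed by an uptime target such as 0.9995."""
    if not 0.0 <= uptime_target <= 1.0:
        raise ValueError("uptime_target must be a fraction between 0 and 1")
    return (1.0 - uptime_target) * period_minutes


def validation_error_rate(failed_records: int, total_records: int) -> float:
    """Share of ingested records that failed validation."""
    if total_records <= 0:
        raise ValueError("total_records must be positive")
    return failed_records / total_records


# 99.95% uptime leaves roughly 262.8 minutes (about 4.4 hours) of downtime per year.
budget = downtime_budget_minutes(0.9995)
print(f"Annual downtime budget: {budget:.1f} minutes")

# Hypothetical batch: 45 failures out of 60,000 records, compared with the 0.1% threshold.
rate = validation_error_rate(45, 60_000)
print(f"Validation error rate: {rate:.3%}, within target: {rate < 0.001}")
```

Framing uptime as a downtime budget tends to make maintenance windows and incident targets easier to negotiate than a bare percentage.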

The market has moved past experimentation. According to a 2023 survey by a major consulting firm, 87% of large enterprises are actively implementing AI solutions. This is a 23 percentage point increase from their 2021 survey. This growth is backed by significant investment, with the average organization in the survey spending $6.5 million annually on AI initiatives. The primary driver is process automation, with a reported 76% of companies using AI for this purpose, achieving an average 43% reduction in processing times for targeted tasks.

A production-ready AI solution is not a product you buy; it is a capability you build. It integrates deeply into business processes, using data to automate decisions, predict outcomes, and create a sustainable competitive advantage.

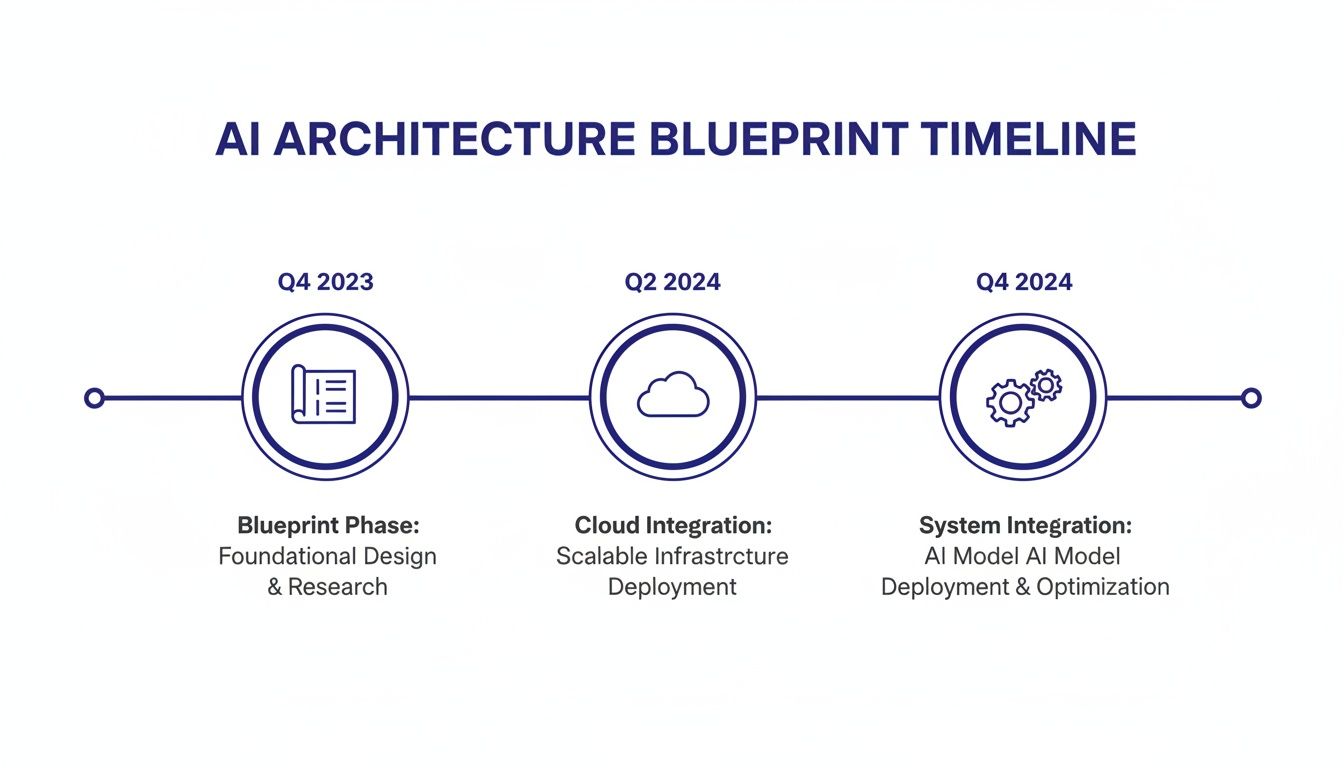

Your Blueprint for a Scalable AI Architecture

An AI project's success is determined by its architecture, not just its features. Deploying AI models without a solid architectural plan is like building a skyscraper without blueprints. The result is unstable, insecure, and unable to handle real-world demand. For any enterprise solution, an ‘architecture-first’ approach is non-negotiable.

This blueprint determines if your AI can scale with your business, integrate with existing systems, and withstand new security threats. For a CTO or CIO, this foundational planning is the only way to deploy resilient AI into a complex IT landscape.

Core Architectural Decisions for Enterprise AI

Before writing code, your team must make fundamental choices. The most significant is the hosting environment. Each option presents trade-offs regarding cost, control, and scalability.

- Cloud-Native: Provides high scalability and access to managed services. The trade-offs include potential data sovereignty issues and rising long-term costs.

- On-Premise: Often the choice for highly regulated industries. It offers maximum control over data and security but requires significant upfront investment and a skilled in-house team.

- Hybrid: Aims for a balance. It uses the cloud's flexibility for computation-heavy tasks while keeping sensitive data on-premise.
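A hybrid setup needs an explicit rule for which workloads may leave the premises. The sketch below assumes a hypothetical sensitivity label on each job: anything marked restricted stays on-premise, while non-sensitive, compute-heavy jobs go to the cloud. The labels, the GPU-hour threshold, and the target names are illustrative placeholders, not any specific product's API.

```python
from dataclasses import dataclass
from enum import Enum


class Sensitivity(Enum):
    PUBLIC = "public"
    INTERNAL = "internal"
    RESTRICTED = "restricted"  # e.g., personal or regulated data


@dataclass
class Job:
    name: str
    sensitivity: Sensitivity
    gpu_hours: float  # rough estimate of compute demand


def route(job: Job, gpu_hours_threshold: float = 10.0) -> str:
    """Return a hypothetical execution target for a job in a hybrid deployment."""
    # Restricted data never leaves on-premise infrastructure.
    if job.sensitivity is Sensitivity.RESTRICTED:
        return "on_premise"
    # Non-sensitive, compute-heavy work benefits from elastic cloud capacity.
    if job.gpu_hours >= gpu_hours_threshold:
        return "cloud"
    # Small, non-sensitive jobs default to existing on-premise capacity.
    return "on_premise"


jobs = [
    Job("train_forecast_model", Sensitivity.INTERNAL, gpu_hours=40),
    Job("score_customer_records", Sensitivity.RESTRICTED, gpu_hours=80),
    Job("nightly_report", Sensitivity.PUBLIC, gpu_hours=0.5),
]
for job in jobs:
    print(job.name, "->", route(job))
```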

This diagram from AWS shows a reference architecture for machine learning operations (MLOps). It illustrates how different components connect in an integrated system.

The architecture is cyclical. Data preparation, model training, deployment, and monitoring are interconnected. This highlights the need for a robust, end-to-end framework.

Designing for Integration and Agility

Your AI solution must connect to and improve your core business operations. This requires designing for integration with your existing ERP, CRM, and other applications from the start.

A microservices-based architecture is an effective approach. Instead of building a single, monolithic application, you break the solution into smaller, independent services. This simplifies connecting each service to existing systems and reduces complexity. It also improves maintenance and resilience; if one component fails, it does not take down the entire system.
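The resilience point can be illustrated with a small sketch: an orchestrator calls several independent services and degrades gracefully when one fails, instead of failing the whole request. The services here are hypothetical in-process functions standing in for network calls; a real deployment would add timeouts, retries, and circuit breakers.

```python
from typing import Callable, Dict, Optional


def sentiment_service(text: str) -> str:
    return "negative" if "late" in text.lower() else "neutral"


def routing_service(text: str) -> str:
    return "logistics_team" if "shipment" in text.lower() else "general_queue"


def translation_service(text: str) -> str:
    # Simulates an outage in one independent component.
    raise ConnectionError("translation service unavailable")


SERVICES: Dict[str, Callable[[str], str]] = {
    "sentiment": sentiment_service,
    "routing": routing_service,
    "translation": translation_service,
}


def process_email(text: str) -> Dict[str, Optional[str]]:
    """Call each service independently; a failure yields None instead of aborting."""
    results: Dict[str, Optional[str]] = {}
    for name, service in SERVICES.items():
        try:
            results[name] = service(text)
        except Exception as exc:  # contain the failure to this one service
            print(f"{name} failed: {exc}")
            results[name] = None
    return results


print(process_email("Our shipment is late again."))
```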

Adopting a technology-agnostic framework is crucial. This strategy prevents costly vendor lock-in by ensuring your architecture is not dependent on a single provider's proprietary tools, giving you the flexibility to adapt as new technologies emerge.
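One common way to stay technology-agnostic is to make business logic depend on a small interface you own, with a thin adapter per provider. In the sketch below, `TextModel`, `KeywordModel`, and `LengthModel` are hypothetical; in practice each adapter would wrap a vendor SDK, so switching providers means writing a new adapter rather than rewriting the application.

```python
from typing import Protocol


class TextModel(Protocol):
    """The only model interface the business logic is allowed to depend on."""

    def classify(self, text: str) -> str: ...


class KeywordModel:
    """Hypothetical adapter: a rules-based stand-in for one provider."""

    def classify(self, text: str) -> str:
        return "invoice" if "invoice" in text.lower() else "other"


class LengthModel:
    """Hypothetical adapter for a second provider with a different approach."""

    def classify(self, text: str) -> str:
        return "long_form" if len(text) > 50 else "other"


def triage(messages: list[str], model: TextModel) -> dict[str, int]:
    """Business logic written against TextModel, not against a vendor SDK."""
    counts: dict[str, int] = {}
    for message in messages:
        label = model.classify(message)
        counts[label] = counts.get(label, 0) + 1
    return counts


inbox = ["Invoice #4411 attached", "Can we reschedule the call?"]
print(triage(inbox, KeywordModel()))
print(triage(inbox, LengthModel()))
```

`typing.Protocol` uses structural typing, so adapters do not need to inherit from `TextModel`; they only need a matching `classify` method.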

Managing the code for these systems is another critical piece. As you design the architecture, selecting the right tools to manage AI-generated code is essential. A guide to the best AI code review tools for 2025 can help you maintain code quality and security as AI-assisted development becomes more common.

Your Six-Week Roadmap to AI Implementation

A multi-year AI project with an uncertain outcome is a common concern for business leaders. This fear is based on an outdated model of technology deployment. An agile approach can deliver a production-ready solution in a shorter timeframe, reducing investment risk while delivering value quickly.

This six-week roadmap is a practical plan for breaking the process into weekly milestones. The goal is to take an enterprise AI solution from concept to a functioning system, demonstrating business impact without long timelines and budget overruns.

Week 1: Discovery and ROI Framing

The first week focuses on aligning stakeholders and defining the scope. This stage is about identifying a single, high-value problem to solve. We move from a general goal like "we should use AI" to a specific, measurable objective.

Success in this week means ending with a clear problem statement and an understanding of the expected return on investment (ROI). For example, a logistics company's goal might shift from "improving efficiency" to a specific target: "reduce manual email processing by 75% within Q3." This is a target that can support a business case.

Week 2: Data Assessment and Architecture Design

With a clear objective, the focus shifts to foundations. Week two involves a thorough assessment of your data. This includes identifying data sources, verifying quality and completeness, and mapping data flows for the new AI model.
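A first-pass data assessment can be as simple as measuring completeness per field across a sample of records. The sketch below assumes records arrive as dictionaries with hypothetical field names; it reports the share of non-empty values for each required field and flags fields below a chosen threshold (95% here, an illustrative value).

```python
from typing import Any


def completeness_report(records: list[dict[str, Any]], required_fields: list[str]) -> dict[str, float]:
    """Share of records with a non-empty value for each required field."""
    total = len(records)
    if total == 0:
        raise ValueError("no records to assess")
    report = {}
    for field in required_fields:
        present = sum(1 for r in records if r.get(field) not in (None, ""))
        report[field] = present / total
    return report


def fields_below_threshold(report: dict[str, float], threshold: float = 0.95) -> list[str]:
    """Fields whose completeness falls short of the threshold."""
    return [field for field, share in report.items() if share < threshold]


sample = [
    {"order_id": "A1", "customer_id": "C9", "ship_date": "2024-03-01"},
    {"order_id": "A2", "customer_id": "", "ship_date": "2024-03-02"},
    {"order_id": "A3", "customer_id": "C4", "ship_date": None},
    {"order_id": "A4", "customer_id": "C7", "ship_date": "2024-03-04"},
]
report = completeness_report(sample, ["order_id", "customer_id", "ship_date"])
print(report)
print("Needs attention:", fields_below_threshold(report))
```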

Simultaneously, we design the high-level architecture. This involves more than selecting technology. It's about creating a blueprint that ensures the new solution integrates with existing systems like your ERP and CRM. Key decisions about cloud, on-premise, or hybrid models are made here to ensure scalability and security.

Weeks 3 & 4: Iterative Development and Prototyping

This two-week period is for development. Using an agile methodology, the team builds and refines the AI model in short cycles. This avoids a "big bang" release where stakeholders see no progress until the end.

Instead, you see progress incrementally.

- Sprint 1: Build a baseline model that solves the core problem. The focus is on function, not features.

- Sprint 2: Refine the model with additional data and features based on early stakeholder feedback.

- Sprint 3: Improve model accuracy and performance, measuring against the ROI metrics defined in week one.

By the end of week four, you have a working prototype that demonstrates the core concept and its business value.

Week 5: System Integration and User Acceptance Testing

An AI model that only works in a development environment is an experiment, not a business solution. Week five focuses on integrating the solution into your operational workflows. We connect the model to live production data and the business applications your team uses daily.

After integration, the system undergoes User Acceptance Testing (UAT). End-users test the tool to ensure it meets their needs and is easy to use. Their feedback is used for final adjustments.

An agile, six-week cycle is not about cutting corners. It's about maintaining an intense focus on a single, high-value business problem and shipping a production-ready solution that generates returns quickly.

Week 6: Deployment and Operational Handover

The final week is for deployment. We move the solution to the production environment, set up performance monitoring, and conduct a full handover to your internal teams. This includes documentation and training so your team can manage the system independently.

The project concludes with a complete transfer of the source code and intellectual property ownership to you, eliminating vendor lock-in. You now have an operational AI asset solving a real-world business problem in six weeks.

Measuring Business Value and Calculating AI ROI

An AI solution's value is determined by the business results it produces. To justify the investment, you must focus on tangible outcomes like cost reduction, revenue growth, or operational improvements. Measuring the return on investment (ROI) of enterprise AI solutions is a structured process that links model performance to financial results.

It begins with establishing a performance baseline. You cannot measure improvement without knowing your starting point. For example, if your goal is to reduce equipment downtime with predictive maintenance, you must first calculate your average downtime rate over the last two quarters. This number becomes your benchmark.
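Computing the baseline is usually simple arithmetic over historical records. The sketch below assumes hypothetical monthly figures for scheduled operating hours and unplanned downtime hours over the last two quarters, and computes the downtime rate as total downtime divided by total scheduled hours, which weights each month by its hours rather than averaging monthly percentages.

```python
from dataclasses import dataclass


@dataclass
class MonthlyOps:
    month: str
    scheduled_hours: float
    downtime_hours: float


def baseline_downtime_rate(history: list[MonthlyOps]) -> float:
    """Hours-weighted downtime rate across the whole baseline period."""
    scheduled = sum(m.scheduled_hours for m in history)
    if scheduled <= 0:
        raise ValueError("scheduled hours must be positive")
    return sum(m.downtime_hours for m in history) / scheduled


# Hypothetical data for the last two quarters.
history = [
    MonthlyOps("Jan", 600, 30), MonthlyOps("Feb", 560, 22), MonthlyOps("Mar", 620, 35),
    MonthlyOps("Apr", 600, 28), MonthlyOps("May", 620, 31), MonthlyOps("Jun", 600, 24),
]
print(f"Baseline downtime rate: {baseline_downtime_rate(history):.2%}")
```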

Defining Your Key Performance Indicators

With a baseline established, the next step is to define the specific Key Performance Indicators (KPIs) that will measure the AI's effectiveness. These KPIs must be directly tied to the business problem. A vague goal like "improve customer satisfaction" is not useful. A better KPI is "reduce customer support ticket resolution time by 25%." This is a measurable target.
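A KPI like this becomes testable once the baseline and the target are recorded together. The sketch below encodes a 25% resolution-time reduction as a relative-change check; the `Kpi` class is hypothetical and the baseline and current values are made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class Kpi:
    name: str
    baseline: float
    target_reduction: float  # e.g., 0.25 for a 25% reduction

    def reduction(self, current: float) -> float:
        """Relative reduction versus the baseline (positive means improvement)."""
        return (self.baseline - current) / self.baseline

    def is_met(self, current: float) -> bool:
        return self.reduction(current) >= self.target_reduction


resolution_time = Kpi("avg ticket resolution time (hours)", baseline=16.0, target_reduction=0.25)
current = 11.5  # hypothetical post-deployment measurement
print(f"Reduction: {resolution_time.reduction(current):.1%}, target met: {resolution_time.is_met(current)}")
```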

For most enterprise AI projects, KPIs fall into three categories:

- Efficiency Gains: Track operational speed and intelligence. Examples include reduced manual processing time, faster inventory turnover, or lower energy consumption.

- Cost Reduction: Measure direct financial impact. This could be a quantifiable decrease in material waste, lower fuel costs, or fewer labor hours on administrative tasks.

- Revenue Growth: Measure the AI's impact on top-line results. Examples include higher sales conversion rates from an AI-powered lead scoring system or increased customer lifetime value from personalized recommendations.

To see how your potential projects measure up, you can get a clear picture with a complimentary AI readiness assessment.

From Metrics to Monetary Value

Connecting operational KPIs to a dollar value is the core of an ROI calculation. It involves translating improvements—like faster processing or fewer errors—into financial terms. The calculation is straightforward if you have a clear understanding of your company's cost structure.
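For a labor-saving use case, the translation can be written as two standard formulas. Here Δh is hours saved per week, c an assumed fully loaded hourly labor cost, w the number of working weeks per year, and C the total cost of building and running the solution over the same year; these symbols are illustrative rather than part of a specific methodology, and revenue-driven use cases would replace the first formula with an estimate of incremental margin.

```latex
B_{\text{annual}} = \Delta h \cdot c \cdot w,
\qquad
\text{ROI} = \frac{B_{\text{annual}} - C}{C}
```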

Let's review a few synthetic examples. This framework shows how to link an AI use case to a specific financial outcome.

Sample AI ROI Calculation Framework (Synthetic Examples)

| Use Case | Primary Metric | Baseline (Q2) | Target Improvement | Potential Annual Savings |
|---|---|---|---|---|
| Maritime Fuel Optimization | Fuel Consumption (tons/day) | 28 tons/day | 8% to 15% reduction | $850,000 |
| Agricultural Yield Forecasting | Forecast Accuracy | 70% | 20 percentage point increase | $1.2 million |
| Logistics Email Automation | Manual Processing Time (hours/week) | 200 hours/week | 75% reduction | $450,000 |
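As a worked check on the logistics row, the sketch below computes the annual hours freed by a 75% reduction from a 200 hours/week baseline, then the fully loaded hourly cost that the table's $450,000 figure implies. The 52-week year is an assumption; the table does not state how its savings were derived.

```python
def annual_hours_saved(baseline_hours_per_week: float, reduction: float, weeks_per_year: int = 52) -> float:
    """Hours freed per year by a fractional reduction in weekly manual effort."""
    return baseline_hours_per_week * reduction * weeks_per_year


def implied_hourly_cost(annual_savings: float, hours_saved: float) -> float:
    """Loaded cost per hour needed for the hours saved to equal the stated savings."""
    return annual_savings / hours_saved


hours = annual_hours_saved(200, 0.75)  # 150 hours/week over 52 weeks
rate = implied_hourly_cost(450_000, hours)
print(f"Hours saved per year: {hours:,.0f}")
print(f"Implied loaded cost: ${rate:.2f}/hour")
```

If your actual loaded labor cost is lower than the implied rate, the savings estimate should be scaled down proportionally.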

This approach grounds the discussion in financial reality. It is no longer an abstract goal but a clear path to savings or growth.

This disciplined approach is becoming standard. According to a 2023 McKinsey report, 82% of leaders report using generative AI every week, and 72% are formally measuring its ROI by focusing on productivity and profit. The results are clear: three-quarters of these leaders see positive returns from their AI investments, a trend detailed in studies on enterprise AI adoption.

An ROI calculation is not a one-time event. Continuous monitoring is essential to ensure that AI models deliver sustained value and do not suffer from performance degradation or "model drift" over time.
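One widely used way to watch for input drift is the population stability index (PSI), which compares a feature's distribution at training time with its distribution in production. The sketch below is a plain-Python version over pre-binned proportions; the bin values are hypothetical, and the 0.1 and 0.25 cut-offs are common rules of thumb rather than a formal standard.

```python
import math


def population_stability_index(expected: list[float], actual: list[float], eps: float = 1e-6) -> float:
    """PSI between two binned distributions given as proportions that each sum to 1."""
    if len(expected) != len(actual):
        raise ValueError("distributions must have the same number of bins")
    psi = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, eps), max(a, eps)  # avoid log(0) for empty bins
        psi += (a - e) * math.log(a / e)
    return psi


def drift_level(psi: float) -> str:
    # Rule-of-thumb cut-offs; tune them for your own models.
    if psi < 0.1:
        return "stable"
    if psi < 0.25:
        return "moderate drift"
    return "significant drift"


training = [0.25, 0.25, 0.25, 0.25]    # feature distribution at training time
production = [0.10, 0.20, 0.30, 0.40]  # same feature observed in production
psi = population_stability_index(training, production)
print(f"PSI = {psi:.3f} -> {drift_level(psi)}")
```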

By establishing baselines, defining specific KPIs, and translating them into financial impact, you build a defensible case for any enterprise AI initiative. This rigor ensures your technology investments drive business growth.

AI Governance and Regulatory Compliance: Your Guardrails for Innovation

Deploying powerful AI is one challenge. Ensuring it operates safely, fairly, and legally is another. With regulations like the EU AI Act becoming law, a solid governance framework is essential. It provides the structure to innovate confidently, turning compliance from a burden into a competitive advantage.

A lack of a unified approach creates friction. Companies with a formal AI strategy report an 80% success rate in adoption, while those without a strategy lag at 37%, according to a 2023 survey. The problem is often internal misalignment. The same survey found that 42% of C-suite executives say AI is "tearing companies apart" due to departmental conflicts. This is worsened by operational hurdles, with 68% citing friction with IT and 72% blaming siloed applications. You can learn more about how a clear strategy addresses enterprise adoption challenges.

Good AI governance, risk, and compliance (GRC) translates abstract principles like "fairness" into concrete actions. It provides the controls needed to deploy enterprise AI solutions responsibly.

Building Your AI Governance Framework

The first step is to assemble an AI governance committee. This should be a cross-functional team with leaders from IT, legal, data science, and the relevant business units. Their primary role is to establish the rules for how your organization develops, deploys, and monitors AI.

This committee has three core responsibilities:

- Create the Rulebook: Establish clear guidelines for data usage, model validation, ethical boundaries, and acceptable risk levels.

- Maintain a Model Inventory: Oversee a complete catalog of every AI model in use across the company, tracking its purpose, data sources, and performance.

- Assess Risks: Implement a standard process for evaluating the potential risks of any new AI project before it enters production.
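The inventory and risk-assessment responsibilities can be backed by a simple structured record. The sketch below is a hypothetical schema whose fields mirror what the committee is asked to track (purpose, data sources, performance); the risk tiers and the pre-production gate are illustrative rules, not a legal test under the EU AI Act or any other regulation.

```python
from dataclasses import dataclass, field
from enum import Enum


class RiskTier(Enum):
    MINIMAL = 1
    LIMITED = 2
    HIGH = 3


@dataclass
class ModelRecord:
    name: str
    purpose: str
    owner: str
    data_sources: list[str]
    risk_tier: RiskTier
    metrics: dict[str, float] = field(default_factory=dict)
    risk_assessment_done: bool = False
    bias_review_done: bool = False


def ready_for_production(record: ModelRecord) -> tuple[bool, list[str]]:
    """Hypothetical gate: every model needs an owner and a risk assessment; high-risk models also need a bias review."""
    issues = []
    if not record.owner:
        issues.append("no accountable owner")
    if not record.risk_assessment_done:
        issues.append("risk assessment missing")
    if record.risk_tier is RiskTier.HIGH and not record.bias_review_done:
        issues.append("bias review required for high-risk model")
    return (not issues, issues)


credit_model = ModelRecord(
    name="credit_approval_v3",
    purpose="Recommend approve/decline for consumer credit applications",
    owner="risk-analytics",
    data_sources=["loan_applications", "bureau_scores"],
    risk_tier=RiskTier.HIGH,
    metrics={"accuracy": 0.96},
    risk_assessment_done=True,
)
print(ready_for_production(credit_model))
```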

Governance is not about slowing innovation. It is about building the guardrails that allow you to accelerate safely. It ensures every AI solution aligns with your company's values and the law.

A proactive GRC strategy is critical for preparing for new legislation. You can learn more by reviewing our guide on achieving AI Act readiness.

Putting Principles Into Practice

Turning high-level principles into daily operations is how governance becomes a practical discipline that protects the business.

Here are three actions to implement your governance framework:

- Maintain a Central Model Inventory: This is a library for all your AI models. Each entry should document the model's purpose, owner, data inputs, performance metrics, and version history. This inventory is your single source of truth for audits and regulatory inquiries.

- Set Up Automated Monitoring: AI models require ongoing management. Their performance can change as new data is introduced. Automated monitoring tools are necessary for tracking key metrics, detecting performance degradation, and flagging potential bias. For example, a system can alert you if a credit approval model's accuracy drops below a 95% threshold or if its decisions show a statistical bias.

- Conduct Regular Audits: Schedule periodic, independent reviews of your most critical AI systems. These audits should evaluate data quality, model fairness, and compliance with internal policies and external regulations.
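The credit-model monitoring example can be sketched as a periodic check on recent labeled decisions. The code below computes accuracy against the 95% threshold mentioned above and, as one possible bias signal, the ratio of approval rates between groups (the "four-fifths" disparate impact heuristic, with 0.8 as the cut-off). The data, group labels, and function names are hypothetical; a production system would pull these from logged predictions and outcomes.

```python
from collections import defaultdict


def accuracy(predictions: list[int], labels: list[int]) -> float:
    correct = sum(p == y for p, y in zip(predictions, labels))
    return correct / len(labels)


def approval_rate_ratio(predictions: list[int], groups: list[str]) -> float:
    """Lowest group approval rate divided by the highest (1 = approve)."""
    approved: dict[str, int] = defaultdict(int)
    total: dict[str, int] = defaultdict(int)
    for p, g in zip(predictions, groups):
        total[g] += 1
        approved[g] += p
    rates = [approved[g] / total[g] for g in total]
    if max(rates) == 0:
        return 1.0  # no approvals in any group: no disparity to measure
    return min(rates) / max(rates)


def monitoring_alerts(predictions: list[int], labels: list[int], groups: list[str],
                      min_accuracy: float = 0.95, min_ratio: float = 0.8) -> list[str]:
    alerts = []
    acc = accuracy(predictions, labels)
    if acc < min_accuracy:
        alerts.append(f"accuracy {acc:.1%} below {min_accuracy:.0%}")
    ratio = approval_rate_ratio(predictions, groups)
    if ratio < min_ratio:
        alerts.append(f"approval-rate ratio {ratio:.2f} below {min_ratio}")
    return alerts


# Hypothetical week of credit decisions (1 = approve) with true outcomes and a group attribute.
preds = [1, 1, 1, 1, 0, 1, 0, 0, 1, 0]
labels = [1, 1, 1, 0, 0, 1, 0, 1, 1, 0]
groups = ["A", "A", "A", "A", "A", "B", "B", "B", "B", "B"]
print(monitoring_alerts(preds, labels, groups))
```

The approval-rate ratio is only one fairness signal; which metric is appropriate depends on the use case and applicable regulation.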

Bringing Your AI Vision to Life

We have covered what defines a successful enterprise AI project, from foundational architecture through deployment, ROI measurement, and governance. Moving from an idea to a production system that drives value requires discipline and a sustained focus on ROI. Now it is time to take the first step.

Successful AI deployment is about choosing the right partner to navigate data integration, governance, and deployment. Their methodology is as important as their technical expertise.

What to Do Next

The best way to start is with a high-impact, low-risk pilot project. The goal is to secure a quick win. A successful pilot demonstrates tangible value to stakeholders, builds internal confidence, and creates momentum for wider AI adoption. Proving success on a small scale de-risks a larger investment.

To execute the first project successfully, you must ask the right questions. A suitable partner will provide clear answers that connect directly to your business goals.

The most critical decision you'll make isn't which model to use, but which partner to trust. Their methodology, their commitment to your IP, and their focus on business value will echo long after the first project is delivered.

Use this checklist to evaluate any AI provider:

- Intellectual Property: Do we get 100% ownership of all IP and source code? Anything less creates a risk of vendor lock-in.

- Technology Stack: Are you technology-agnostic? A partner should recommend the best tools for your problem, not just the ones they sell.

- Methodology: Can you provide a clear, time-bound plan, like the six-week roadmap discussed? Vague timelines are a red flag.

- Business Focus: How will you connect the technical work to our financial and operational KPIs from the beginning?

With these questions, you are ready to move forward. The next step is to schedule a discovery session to build your own six-week plan. This session will identify a specific business challenge and outline how a custom AI solution can solve it.

Common Questions from Enterprise Leaders

As you explore AI for your company, a few key questions typically arise. Here are answers based on our experience.

How Can We Start with AI Without a Massive Upfront Investment?

Start with a small, targeted project. Avoid a large-scale overhaul initially. Select one high-impact pilot project with a clear, measurable return on investment.

An agile, six-week implementation is ideal for this. It allows you to prove AI's value on a manageable scale, achieve a quick win, and gain stakeholder support before committing to a larger investment. This approach converts a large capital expense into a small, predictable operational cost with a fast return.

How Do We Avoid Getting Locked into One Vendor's Technology?

Vendor lock-in is a significant risk that can limit innovation and increase costs. To avoid it, insist on two conditions: a technology-agnostic approach and full ownership of your intellectual property (IP).

Your partner should build a solution that integrates with your existing systems, not force you onto their proprietary platform. Ensure your contract grants you full ownership of the source code upon project completion.

Always put your architecture first. Design the solution for your business, not the vendor's convenience. Before you sign anything, get absolute clarity on IP rights, technology dependencies, and what an exit strategy looks like.

How Can We Ensure AI Models Comply with New Regulations?

With new rules like the EU AI Act, compliance cannot be an afterthought. The key is proactive AI governance.

This means establishing clear internal policies for AI development and usage. You need to maintain a detailed inventory of every model in production and continuously monitor them for performance drift, bias, and fairness. This is about building a trustworthy system.

Working with a provider that has integrated Responsible AI and GRC (Governance, Risk, and Compliance) tools can simplify this. These systems can automate risk assessments and provide the transparency needed to meet new standards, turning a potential compliance issue into a manageable process.

Ready to put this all into practice? DSG.AI specializes in building production-grade AI solutions that deliver measurable business value in weeks, not years. Our architecture-first, ROI-driven approach ensures you get a custom solution with full IP ownership and zero vendor lock-in.

Explore our enterprise AI projects and schedule a discovery session to map your own six-week implementation plan.