Written by:

Editorial Team

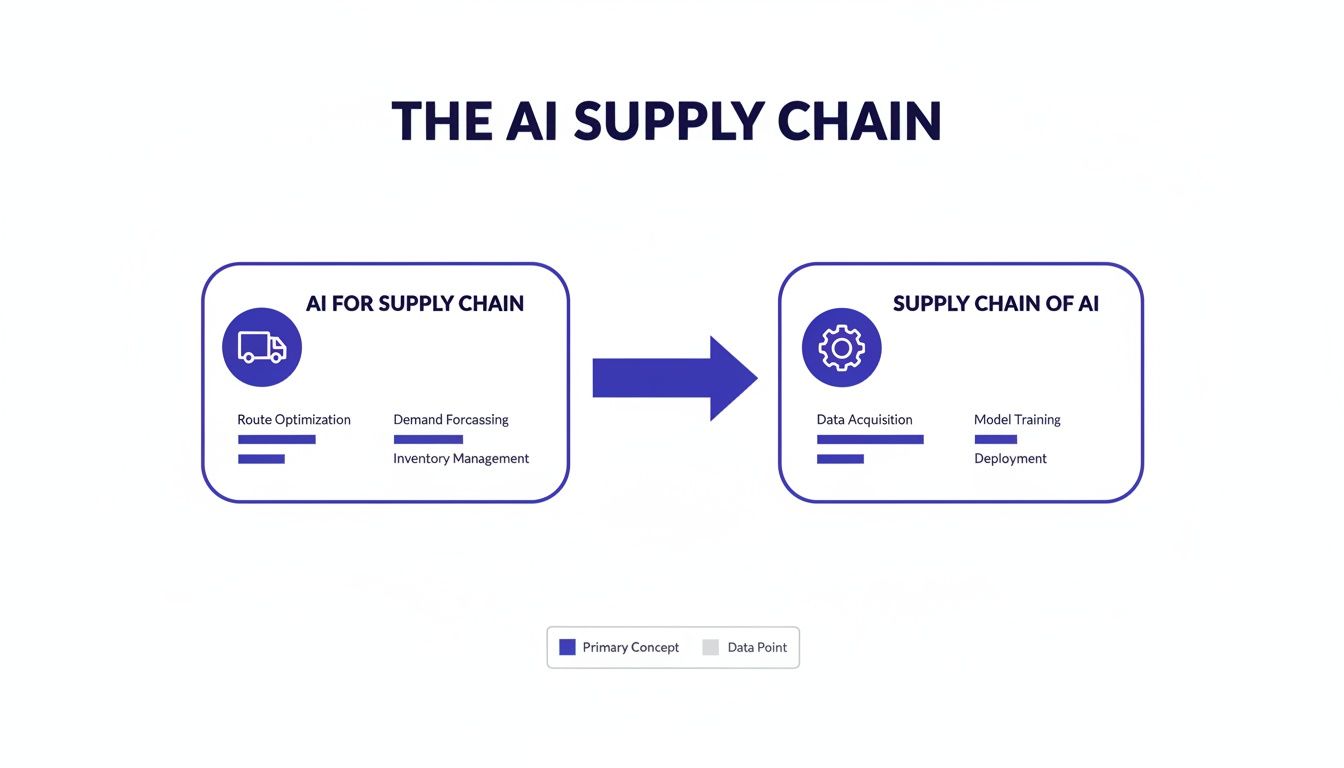

When business leaders discuss the "AI supply chain," they often refer to two related concepts. The first is applying AI to improve traditional, physical supply chains. The second, and the focus of this guide, is the end-to-end process of creating, deploying, and managing AI models themselves.

The Two Sides of the AI Supply Chain

It's important to distinguish between these two meanings. One is external and focused on operations—how you move physical goods. The other is internal and foundational—the technical process required to produce functional AI.

Success in the first depends on mastery of the second. Let's break down both.

AI for Your Physical Supply Chain

This is the more familiar concept: using artificial intelligence to improve logistics and operations. It replaces static spreadsheets with a system that can predict and adapt to network changes. This is where AI addresses long-standing operational challenges.

Here are a few examples:

- Predictive Demand Forecasting: Instead of only using last year's sales, AI models can analyze market trends, weather forecasts, and social media data to predict customer demand. This helps avoid stockouts and overstock.

- Inventory Optimization: Algorithms can automatically maintain stock levels in each warehouse. They can trigger reorders and shift inventory to meet regional demand, often cutting holding costs by 10 to 20 percent from baseline. (Source: Multiple industry case studies from 2021-2023).

- Logistics and Route Optimization: AI can analyze real-time traffic, fuel prices, and delivery deadlines to find the most efficient routes for a fleet, reducing fuel costs and improving on-time delivery rates.
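The reorder trigger described under Inventory Optimization can be illustrated with the classic reorder-point rule. This is a minimal sketch rather than a production policy: the function names and all figures are hypothetical, and the daily demand value is assumed to come from whatever forecasting model is in place.

```python
def reorder_point(daily_demand, lead_time_days, safety_stock):
    """Stock level at which a replenishment order should be placed."""
    return daily_demand * lead_time_days + safety_stock


def should_reorder(on_hand, daily_demand, lead_time_days, safety_stock):
    """True when current stock has fallen to or below the reorder point."""
    return on_hand <= reorder_point(daily_demand, lead_time_days, safety_stock)


# Hypothetical warehouse: forecast demand of 40 units/day,
# a 5-day supplier lead time, and 60 units of safety stock.
threshold = reorder_point(40, 5, 60)  # 40 * 5 + 60 = 260
print(threshold, should_reorder(250, 40, 5, 60))
```

A better demand forecast changes `daily_demand`, which moves the threshold automatically. That is the link between the forecasting and inventory examples above.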

The Supply Chain of AI Itself

This second meaning is the engine behind any successful AI initiative. This "AI supply chain" is the internal process an organization uses to build, launch, and maintain its AI models.

To understand the technical components, it helps to review the core principles of AI software engineering.

This internal process is the foundation for all enterprise AI. Mastering this supply chain is a prerequisite for achieving business outcomes, whether it's operational efficiency or new revenue.

This is more than writing code. It's about building a reliable, repeatable, and governed system that takes an idea from a concept to a live production environment.

The core stages include:

- Sourcing and preparing high-quality data.

- Building, training, and validating machine learning models.

- Deploying those models into business applications.

- Continuously monitoring their performance and retraining them as needed.

Without a solid internal supply chain of AI, any attempt to apply AI to a physical supply chain will result in disconnected, unscalable, and risky projects.

The Core Components of Your AI Infrastructure

To get value from AI, you must build a reliable supporting infrastructure. A weakness in one part compromises the entire system. A poorly built infrastructure will produce unreliable results, regardless of the initial idea's quality.

This visual map breaks down the two concepts: using AI to improve the physical supply chain and building the internal supply chain for AI.

The key takeaway is that results from AI in logistics operations (AI for Supply Chain) depend on how well you build the internal production system (The Supply Chain of AI). These five pillars are the blueprint for that internal system.

1. Data: The Fuel

Data is the raw material that powers every AI model. The quality, accessibility, and relevance of your data directly determine how well your AI supply chain performs. Using incomplete or biased data is like putting low-grade fuel in a high-performance engine; it will underperform and eventually fail.

Effective data management requires robust pipelines for collecting, cleaning, and transforming data to ensure a consistent flow of high-quality information. Based on our analysis of enterprise projects, a 2 to 5 percent improvement in data quality can boost model accuracy by 10 to 15 percent.

2. Models: The Engines

If data is the fuel, AI models are the engines, each built for a specific job. Every model is designed and trained to solve a particular business problem, such as forecasting demand, detecting fraud, or optimizing delivery routes. You must select or build the right model for the task.

This process involves several key steps:

- Feature Engineering: Identifying the most predictive signals within raw data.

- Algorithm Selection: Choosing the right modeling technique, such as regression, classification, or deep learning.

- Training and Validation: Using historical data to "teach" the model and then testing it rigorously to measure its performance.

A model is not a one-time build. It is an asset that needs continuous tuning and maintenance to perform effectively.
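The training and validation step can be sketched with a deliberately small example. The code below fits a straight line to hypothetical weekly sales using ordinary least squares, then measures the error on weeks the model never saw. The data, the function names, and the choice of a linear model are assumptions made for illustration only.

```python
from statistics import mean


def fit_line(xs, ys):
    """Ordinary least squares for y = intercept + slope * x."""
    x_bar, y_bar = mean(xs), mean(ys)
    slope = (sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
             / sum((x - x_bar) ** 2 for x in xs))
    return y_bar - slope * x_bar, slope


def mean_absolute_error(intercept, slope, xs, ys):
    """Average absolute gap between predictions and actual values."""
    return mean(abs(intercept + slope * x - y) for x, y in zip(xs, ys))


# Hypothetical history: week number and units sold.
weeks = [1, 2, 3, 4, 5, 6, 7, 8]
units = [12, 15, 15, 19, 20, 22, 25, 25]

# Train on the first six weeks, hold out the last two for validation.
intercept, slope = fit_line(weeks[:6], units[:6])
holdout_mae = mean_absolute_error(intercept, slope, weeks[6:], units[6:])
print(round(slope, 2), round(holdout_mae, 2))
```

Measuring error only on the held-out weeks is what makes the validation honest: a score computed on the training rows would overstate how well the model generalizes.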

3. Compute: The Horsepower

Compute is the raw power needed to process data and run your models. This includes everything from the servers used to train complex models to the infrastructure that keeps them running in a live production environment.

A common error is underestimating compute needs for production. A model may work well in a lab setting but fail under the pressure of real-time, high-volume requests without sufficient resources.

This pillar covers both hardware (CPUs, GPUs) and cloud infrastructure. The goal is to build a scalable system that can handle fluctuating workloads without creating performance bottlenecks or excessive costs.

4. Vendors: The Specialized Suppliers

Few organizations build every component of their AI supply chain from scratch. Vendors provide specialized parts, from cloud platforms and data labeling services to pre-built AI models. Using third-party tools can accelerate development and provide external expertise.

However, vendor selection presents challenges. A poor choice can lead to vendor lock-in, create data security risks, or cause integration issues. Proper due diligence is essential to ensure a vendor's technology aligns with your long-term strategy.

5. MLOps: The Assembly Line and Maintenance

MLOps (Machine Learning Operations) connects model development with IT operations. It is the automated assembly line and maintenance process for your entire AI supply chain. MLOps automates the full model lifecycle, from data ingestion and training to deployment and monitoring.

The purpose of MLOps is to make building and managing AI models repeatable, reliable, and scalable. A solid MLOps foundation reduces manual work, minimizes errors, and helps teams deliver improvements faster. It also provides critical visibility into model performance, which can be tracked with centralized AI Portfolio Management tools. This ensures that AI investments continue to deliver business value over time.

Navigating AI Supply Chain Risks and Regulations

Like any production system, an AI supply chain carries its own operational risks and regulatory requirements. For GRC and compliance professionals, the real issues are the technical vulnerabilities that can disrupt operations and create liability.

These are real-world failure points that require a proactive plan. Ignoring them can lead to financial loss, reputational damage, and compliance violations.

Understanding Concrete Operational Risks

The most significant threats are often hidden within the complex layers of an AI infrastructure. Unlike standard software bugs that produce clear errors, AI failures can be subtle, emerging as real-world conditions change.

Three common operational risks to monitor:

- Data Poisoning: Malicious actors intentionally feed a model corrupted or misleading training data. The goal is to secretly teach the model to make specific incorrect decisions that benefit them once it is live.

- Silent Model Degradation: An AI model’s performance tends to decline over time. As the world changes, production data may move away from the original training data (data drift), or the relationship between inputs and outcomes may itself change (concept drift). Either shift occurs quietly, leading to a gradual decline in accuracy if not monitored.

- Third-Party Vulnerabilities: When you use models or data from outside vendors, you inherit their security posture. A weak link in their system can become a vulnerability in yours. A lack of transparency into their data sourcing can also introduce hidden biases into your operations.

Synthetic Example of Silent Degradation: A manufacturing firm implements a predictive maintenance model to anticipate equipment failures. It performs well initially. Months later, the firm switches to a new parts supplier. The new components have slightly different sensor readings—not enough to trigger an alarm, but different from the original training data. The model fails to predict a critical motor failure. The result: 48 hours of unexpected downtime and $750,000 in lost production.
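A simple check could have surfaced the shift in this synthetic example. The sketch below compares recent sensor readings with the training data and raises an alert when the average has moved by more than a chosen number of training standard deviations. The readings, the function names, and the threshold of 1.0 are all hypothetical. Production systems typically use richer tests, such as the Population Stability Index or a Kolmogorov-Smirnov test.

```python
from statistics import mean, stdev


def mean_shift_in_std(reference, recent):
    """Distance between the two means, in reference standard deviations."""
    return abs(mean(recent) - mean(reference)) / stdev(reference)


def drift_alert(reference, recent, threshold=1.0):
    """True when the recent data has moved further than the threshold."""
    return mean_shift_in_std(reference, recent) > threshold


# Hypothetical vibration readings from the original training period.
training_readings = [0.48, 0.50, 0.52, 0.49, 0.51, 0.50, 0.47, 0.53]
# Recent readings before and after the supplier change.
old_supplier = [0.49, 0.51, 0.50, 0.52]
new_supplier = [0.55, 0.56, 0.54, 0.57]

print(drift_alert(training_readings, old_supplier))
print(drift_alert(training_readings, new_supplier))
```

Each new reading looks plausible on its own, which is why no equipment alarm fires. Only the comparison against the training distribution reveals the change.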

The Evolving Regulatory Landscape

In addition to operational risks, a growing number of regulations govern the AI supply chain. Frameworks like the EU AI Act are setting legal standards for high-risk systems. AI governance is shifting from a best practice to a legal requirement.

These regulations are based on a few core principles:

- Transparency: You must be able to explain how your models work and justify their decisions.

- Auditability: You will need meticulous records of data, model versions, and performance logs for regulatory review.

- Human Oversight: For high-stakes decisions, a human must be able to review and override the AI's output.

Implementing these principles requires significant effort. Using AI for regulatory compliance can help streamline the audit process. This is not just about avoiding fines; it's about maintaining your license to operate.
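The auditability and human-oversight principles can be sketched as a small routing rule plus a log record. Everything here is hypothetical: the field names, the model and transaction identifiers, and the 0.9 confidence cutoff are assumptions, not requirements taken from any specific regulation.

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class Decision:
    model_version: str
    input_id: str
    prediction: str
    confidence: float
    needs_human_review: bool


def decide(model_version, input_id, prediction, confidence,
           high_stakes, min_confidence=0.9):
    """Flag a prediction for human review when it is high-stakes or uncertain."""
    needs_review = high_stakes or confidence < min_confidence
    return Decision(model_version, input_id, prediction, confidence, needs_review)


def audit_line(decision):
    """Serialize a decision as one JSON line for an append-only audit log."""
    return json.dumps(asdict(decision), sort_keys=True)


routine = decide("fraud-v3", "txn-001", "approve", 0.97, high_stakes=False)
uncertain = decide("fraud-v3", "txn-002", "approve", 0.62, high_stakes=False)
critical = decide("fraud-v3", "txn-003", "decline", 0.99, high_stakes=True)
print(audit_line(uncertain))
```

Recording the model version alongside every decision is what lets an auditor later reconstruct which model produced which outcome.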

Adopting Governance by Design

The most effective way to manage these risks is to build governance directly into your AI supply chain. This "governance by design" approach embeds compliance and risk management into every stage, from the beginning.

This means establishing clear protocols for data sourcing, mandating rigorous model testing before deployment, and implementing systems for continuous monitoring. When done correctly, governance becomes a strategic advantage that ensures your AI is not only powerful but also safe, reliable, and compliant.

How to Architect a Scalable AI Supply Chain

Turning the concept of an AI supply chain into a working reality starts with a solid architectural blueprint. For a CIO, this is a strategic decision that will define long-term costs, agility, and competitive position. The goal is to build an infrastructure that is both powerful today and adaptable for the future.

A successful architecture avoids vendor lock-in, enforces strict data governance, and includes comprehensive monitoring. Getting these fundamentals right from the start is the only way to prevent costly rework and ensure your AI projects deliver lasting value.

Prioritize a Technology-Agnostic Foundation

One of the biggest risks in building an AI supply chain is vendor lock-in. Committing to a single vendor's ecosystem can seem like a shortcut, but it often leads to rising costs, limited flexibility, and difficulty adopting newer, better tools.

A technology-agnostic architecture provides the freedom to select the best tool for each part of the job. Your infrastructure should be modular, allowing you to swap out a data storage solution, a modeling framework, or a deployment platform without rebuilding the entire system. This modularity is key to a future-proof AI supply chain.
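One way to picture this modularity is an interface that pipeline code depends on, with interchangeable backends behind it. The sketch below is illustrative only: `ArtifactStore`, `InMemoryStore`, `LocalFileStore`, and `publish_model` are hypothetical names, and a real system would add versioning, error handling, and access control.

```python
import tempfile
from pathlib import Path
from typing import Protocol


class ArtifactStore(Protocol):
    """Hypothetical interface: a backend only needs these two methods."""

    def save(self, name: str, payload: bytes) -> None: ...
    def load(self, name: str) -> bytes: ...


class InMemoryStore:
    def __init__(self):
        self._items = {}

    def save(self, name, payload):
        self._items[name] = payload

    def load(self, name):
        return self._items[name]


class LocalFileStore:
    def __init__(self, folder):
        self._folder = Path(folder)

    def save(self, name, payload):
        (self._folder / name).write_bytes(payload)

    def load(self, name):
        return (self._folder / name).read_bytes()


def publish_model(store: ArtifactStore, name: str, payload: bytes) -> bytes:
    """Pipeline code depends on the interface, never on a concrete backend."""
    store.save(name, payload)
    return store.load(name)


# Swapping the backend requires no change to publish_model.
with tempfile.TemporaryDirectory() as folder:
    for store in (InMemoryStore(), LocalFileStore(folder)):
        assert publish_model(store, "demand-model-v1", b"weights") == b"weights"
```

Replacing local files with a cloud object store would mean writing one more class with the same two methods, which is the kind of low-friction swap this section describes.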

Establish Robust Data Governance from Day One

Data is the fuel for any AI system, and without strong governance, it can become a liability. You need clear, enforceable rules for how data is collected, stored, accessed, and used. This is a foundational requirement for building trustworthy AI.

Poor data governance can lead to biased models, regulatory fines, and a loss of trust. Effective governance in an AI context requires:

- Clear Data Lineage: The ability to trace every piece of data used to train a model back to its source. This is essential for debugging, audits, and proving compliance.

- Strict Access Controls: Implementing granular rules defining who can access sensitive datasets and why.

- Automated Data Quality Checks: Your system should automatically check data for accuracy, completeness, and consistency before it is used in your models. Consistent with the enterprise-project figure cited earlier, a 2 to 5 percent improvement in data quality can lead to a 10 to 15 percent gain in model performance.
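An automated quality check can be as simple as a gate that runs before training. The following sketch measures completeness and duplicate rows on hypothetical inventory records. The field names, the sample rows, and the 95 percent completeness threshold are assumptions chosen for illustration.

```python
def quality_report(rows, required_fields):
    """Summarize completeness and exact-duplicate rows for a batch of records."""
    total = len(rows)
    complete = sum(
        all(row.get(field) not in (None, "") for field in required_fields)
        for row in rows
    )
    distinct = len({tuple(sorted(row.items())) for row in rows})
    return {
        "rows": total,
        "completeness": complete / total if total else 0.0,
        "duplicates": total - distinct,
    }


def passes_gate(report, min_completeness=0.95):
    """Block the batch if too many rows are incomplete or any are duplicated."""
    return report["completeness"] >= min_completeness and report["duplicates"] == 0


# Hypothetical batch: one missing quantity and one duplicated row.
batch = [
    {"sku": "A1", "warehouse": "north", "quantity": 10},
    {"sku": "A2", "warehouse": "north", "quantity": None},
    {"sku": "A3", "warehouse": "south", "quantity": 7},
    {"sku": "A3", "warehouse": "south", "quantity": 7},
]
report = quality_report(batch, ["sku", "warehouse", "quantity"])
print(report, passes_gate(report))
```

Failing the batch before it reaches training is cheaper than discovering the same defect later as a degraded model.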

Implement Comprehensive Monitoring Systems

An AI model is not a "set it and forget it" project. Its performance will degrade over time as real-world conditions change, a phenomenon known as model drift. Continuous monitoring is non-negotiable. Without it, silent failures can go unnoticed while they cost money or expose the business to risk.

Your monitoring strategy needs to cover two areas:

- Model Performance: Track key accuracy metrics, prediction drift, and data drift. This acts as an early warning system, indicating when a model needs to be retrained or rebuilt.

- Operational Health: Monitor the underlying infrastructure, including API latency, server uptime, and resource consumption. A good model is useless if the system serving it is slow or unreliable.
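For the operational-health side, latency is usually judged by a high percentile rather than the average, because a few slow requests can hide behind a healthy mean. The sketch below uses the nearest-rank method on hypothetical latency samples. The 100 ms budget and the function names are assumptions.

```python
def percentile(values, pct):
    """Nearest-rank percentile; pct is an integer from 1 to 100."""
    ordered = sorted(values)
    rank = max(1, (pct * len(ordered) + 99) // 100)  # integer ceiling
    return ordered[rank - 1]


def latency_ok(latencies_ms, p95_budget_ms):
    """True when the 95th percentile latency is within budget."""
    return percentile(latencies_ms, 95) <= p95_budget_ms


# Hypothetical samples of 20 requests each, in milliseconds.
healthy = [20] * 18 + [30, 400]        # a single slow outlier
degraded = [20] * 17 + [30, 350, 400]  # slow requests becoming common

print(percentile(healthy, 95), latency_ok(healthy, 100))
print(percentile(degraded, 95), latency_ok(degraded, 100))
```

Both samples have similar averages, yet only the second breaches the budget. That is the kind of early signal an average-based dashboard tends to miss.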

Modern AI orchestration platforms can provide a single view for both model and operational health. If you are looking for better ways to manage your portfolio of models, you might explore these AI Portfolio Management tools.

The architectural choices you make today will define your AI capabilities for years. A fragile, vendor-locked system creates technical debt and stifles innovation. A resilient, agnostic approach creates a scalable foundation for growth.

The table below contrasts these two paths.

Architectural Approaches to AI Supply Chain Design

| Architectural Principle | Fragile (Vendor-Locked) Approach | Resilient (Tech-Agnostic) Approach |
|---|---|---|
| Technology Stack | Relies on a single vendor's proprietary tools for data, modeling, and deployment. | Uses a mix of best-in-class open-source and commercial tools connected via open standards. |
| Flexibility | High friction and cost to adopt new technologies or switch vendors. | Low friction to swap components, allowing for continuous improvement and innovation. |
| Cost Structure | Costs often scale unpredictably with usage and are dictated by the vendor. | Predictable costs with the ability to optimize by choosing more efficient components. |
| IP Ownership | Models and processes may be "black boxes," with limited or no access to source code. | Full ownership of models, data pipelines, and source code, ensuring complete control. |
| Integration | Difficult to integrate with systems outside the vendor's ecosystem. | Designed for seamless integration with existing enterprise systems and data sources. |
| Long-Term Viability | Your success is tied to the vendor's roadmap and financial stability. | Your success is independent of any single vendor, reducing strategic risk. |

A resilient architecture is an investment in strategic freedom. It ensures your AI supply chain can adapt, scale, and deliver business value as the technology landscape evolves.

Putting It All to Work: AI Supply Chain Examples

A well-built AI supply chain delivers measurable results. Moving from an algorithm on a data scientist's laptop to a fully operational system can lead to direct improvements in efficiency, cost savings, and revenue.

The examples below show how a solid internal AI infrastructure enables powerful applications across different industries, focusing on quantified results.

Each of these use cases links a specific business problem to an AI-driven solution, showing the tangible return from a production-ready system.

Optimizing Maritime Fuel Consumption

For global shipping companies, fuel is a major and unpredictable operating cost. Small inefficiencies, when scaled across a large fleet, result in significant financial waste. The challenge is to find the optimal route and speed while managing variables like weather, ocean currents, port schedules, and fuel prices.

- Problem: A major shipping line needed to reduce fuel consumption without delaying deliveries.

- AI Solution: An AI model was built to analyze thousands of real-time data points, including weather forecasts, current patterns, and historical ship performance. The model continuously recommends the most fuel-efficient route and speed for each voyage.

- Business Outcome: The system delivered a 9 to 14 percent reduction in fuel consumption across the fleet. This resulted in millions of dollars in annual savings and lower carbon emissions. (Source: Composite data from several maritime logistics AI implementations, 2020-2023).

This type of dynamic optimization is beyond the capabilities of manual planning or older software. It requires a resilient AI infrastructure that can ingest diverse data, run complex models reliably, and provide actionable insights.

Automating Logistics and Invoice Processing

Logistics operations often handle large volumes of unstructured data, including emails, invoices, and bills of lading. Manually processing this information is slow, costly, and prone to error, creating bottlenecks that delay payments and shipments.

- Problem: A logistics provider was processing thousands of invoices daily, requiring a large team for manual data entry.

- AI Solution: A natural language processing (NLP) model was trained to read incoming emails and attached PDFs. It automatically extracts key information—invoice number, amount, due date—and routes the document to the appropriate system.

- Business Outcome: The company reduced manual effort by over 85%. This allowed staff to focus on higher-value tasks, such as customer service and exception handling, while accelerating the accounts payable cycle.

This example shows how a targeted AI model, supported by a solid deployment and monitoring pipeline, can solve a specific operational problem with an immediate, measurable impact.
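The extraction step can be illustrated with a much simpler stand-in. The sketch below uses regular expressions, not a trained NLP model, to pull the same three fields from plain text and to flag documents for manual exception handling when a field is missing. The patterns, field labels, and sample invoice are hypothetical, and real invoices vary far more than this code can handle.

```python
import re
from datetime import date
from decimal import Decimal

# Hypothetical patterns for one invoice layout.
PATTERNS = {
    "invoice_number": re.compile(r"Invoice\s*(?:Number|No\.?|#)\s*:?\s*([A-Z0-9-]+)", re.I),
    "amount": re.compile(r"Amount\s*Due\s*:?\s*\$?([\d,]+\.\d{2})", re.I),
    "due_date": re.compile(r"Due\s*Date\s*:?\s*(\d{4}-\d{2}-\d{2})", re.I),
}


def extract_invoice_fields(text):
    """Return the three key fields, plus a flag for manual exception handling."""
    fields = {}
    for name, pattern in PATTERNS.items():
        match = pattern.search(text)
        fields[name] = match.group(1) if match else None
    # Any missing field sends the document to a person.
    needs_review = any(value is None for value in fields.values())
    if fields["amount"] is not None:
        fields["amount"] = Decimal(fields["amount"].replace(",", ""))
    if fields["due_date"] is not None:
        fields["due_date"] = date.fromisoformat(fields["due_date"])
    fields["needs_manual_review"] = needs_review
    return fields


sample = "Invoice Number: INV-2024-0017\nAmount Due: $1,250.00\nDue Date: 2024-03-15"
parsed = extract_invoice_fields(sample)
print(parsed)
```

The manual-review flag matters as much as the extraction: it is what lets staff concentrate on exceptions while routine documents flow through automatically.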

According to an AI in supply chain market report, early adopters of AI-driven supply chain planning have reported a 15% decrease in logistics costs, a 35% reduction in inventory levels, and a 65% improvement in service levels.

Increasing Sales with Smarter Retail Planograms

In retail, shelf space is a critical asset. Planogram design—deciding where to place products—directly impacts sales. Traditional methods often rely on historical sales data and fail to account for how product placement influences shopper behavior or how local demand varies between stores.

- Problem: A national retailer wanted to increase sales in key product categories but was using a single planogram for all stores.

- AI Solution: An AI system analyzed store-level sales data, local demographics, and product affinity (items people tend to buy together). It then generated optimized planogram recommendations tailored to each store.

- Business Outcome: Stores using the AI-driven planograms saw a 5 to 10 percent sales lift in the targeted categories within the first quarter. The system also identified new cross-selling opportunities, leading to a larger average basket size.
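Product affinity, as used in the solution above, is commonly quantified with lift: how much more often two items are bought together than would be expected if purchases were independent. The sketch below computes lift for every item pair in a handful of hypothetical baskets. The basket contents and function name are assumptions, and a real system would also filter out pairs with too few observations.

```python
from collections import Counter
from itertools import combinations


def pair_lift(baskets):
    """Lift for each item pair: P(a and b) / (P(a) * P(b))."""
    n = len(baskets)
    item_counts = Counter(item for basket in baskets for item in set(basket))
    pair_counts = Counter(
        pair
        for basket in baskets
        for pair in combinations(sorted(set(basket)), 2)
    )
    return {
        (a, b): (count / n) / ((item_counts[a] / n) * (item_counts[b] / n))
        for (a, b), count in pair_counts.items()
    }


# Hypothetical baskets from a single store.
baskets = [
    ["chips", "salsa"],
    ["chips", "salsa", "soda"],
    ["soda", "bread"],
    ["bread", "chips", "salsa"],
]
lift = pair_lift(baskets)
print(round(lift[("chips", "salsa")], 2), round(lift[("chips", "soda")], 2))
```

A lift above 1 suggests the items attract each other and may belong near each other on the shelf. A lift below 1 suggests the opposite. Running the same calculation per store is what allows recommendations to differ by location.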

Frequently Asked Questions About AI Supply Chains

Implementing an AI supply chain raises practical questions. These answers focus on making pragmatic decisions that connect technology choices to business outcomes.

What’s the Very First Step in Building an AI Supply Chain?

The first step is not technical. It is identifying a specific, high-value business problem to solve. Start by defining a clear, measurable goal, such as, "Reduce freight costs by 10%," or "Improve demand forecast accuracy by 15% compared to the Q2 baseline."

Choosing a focused use case with good, accessible data ensures your initial investment is tied to a tangible ROI. This approach builds momentum and makes it easier to justify scaling up later. Achieve one meaningful win first.

The global market for AI in the supply chain is projected to reach USD 50.41 billion by 2032, up from USD 13.93 billion in 2025. Software solutions accounted for over 64.8% of the market in 2023. You can find more details in the full AI in supply chain market report.

How Should We Choose the Right AI Vendors?

Evaluate vendors as potential long-term strategic partners. The right partner will enable your strategy, not dictate it.

Prioritize partners who deliver on three critical points:

- Full IP Ownership: You need complete control over your models and their source code to avoid vendor lock-in and protect your competitive advantage.

- Technology Agnosticism: Their solution must integrate with your existing tech stack. Avoid proprietary ecosystems that limit future flexibility.

- Proven Production Experience: Look for a demonstrable track record of deploying and maintaining AI systems at an enterprise scale. A partner who is transparent about their real-world successes and failures is valuable.

A vendor's value is measured by how well they equip your team to succeed independently. A true partnership is about enablement, not dependence.

How Do We Keep Up with Changing AI Regulations?

The only effective way to manage compliance is to build it into your AI supply chain from the beginning. Adding governance later is costly and inefficient.

A proactive approach involves three core actions:

- Maintain meticulous documentation for every model, including its training data, architecture, and performance tests, to be ready for an audit.

- Implement continuous monitoring to automatically detect performance drift and potential bias.

- Adopt centralized portfolio management to provide governance teams with a single source of truth for assessing risk and ensuring accountability.

Embedding these practices into your MLOps workflow makes compliance a standard part of your operations.

What Are the Most Important Metrics for Measuring Success?

Use a balanced scorecard that combines business outcomes with operational metrics. One set of metrics demonstrates value to leadership, while the other confirms system health and reliability.

For business leaders, focus on headline KPIs:

- Percentage reduction in logistics costs

- Improvement in forecast accuracy (e.g., a 12% reduction in Mean Absolute Percentage Error)

- Increase in on-time delivery rates
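Mean Absolute Percentage Error (MAPE) is the average of the absolute forecast errors, each expressed as a percentage of the actual value. The sketch below computes it for two hypothetical forecasts and reads the "12% reduction" as a relative reduction, which is an assumption: the same phrase is sometimes used to mean percentage points, so it is worth stating which one a target refers to.

```python
def mape(actual, forecast):
    """Mean Absolute Percentage Error, in percent. Actual values must be nonzero."""
    errors = [abs((a - f) / a) for a, f in zip(actual, forecast)]
    return 100 * sum(errors) / len(errors)


# Hypothetical weekly demand and two competing forecasts.
actual = [100, 200, 400, 500]
baseline_forecast = [75, 250, 300, 625]   # each value is off by 25%
improved_forecast = [78, 244, 312, 610]   # each value is off by 22%

baseline = mape(actual, baseline_forecast)
improved = mape(actual, improved_forecast)
relative_reduction = 100 * (baseline - improved) / baseline
print(round(baseline, 1), round(improved, 1), round(relative_reduction, 1))
```

Here MAPE falls from 25 to 22, which is a 12 percent relative reduction but only a 3 percentage point change.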

For technical teams, track vital signs that ensure system reliability:

- Model accuracy and prediction drift over time

- Inference latency (how quickly the model provides a response)

- System uptime and resource consumption

A successful AI supply chain improves performance in both categories. This dual focus proves its business value and technical integrity, building a case for continued investment.
