Written by:

Editorial Team

Automated regression testing is the practice of automatically re-running existing tests to confirm that recent code changes have not broken existing functionality. It is a critical process for teams that need to release new features or update AI models without causing unexpected errors.

Why Automated Regression Testing Is Now Critical Enterprise Infrastructure

In environments with frequent deployments and complex microservice architectures, automated regression testing has become foundational infrastructure. Without a clear automation strategy, each release—whether a new software feature or a retrained AI model—creates a conflict between development speed and system stability.

This process has shifted from an optional accelerator to an essential quality gate. The shift is a direct result of market pressure to release software faster. The global automation testing market was valued at USD 25.4 billion in 2022 and is projected to reach USD 92.45 billion by 2030, according to Grand View Research. We have observed enterprises reduce their regression testing cycles by 50% to 80%, a change that enables multiple daily releases instead of quarterly ones.

The Cost of Manual Regression

Relying on manual regression testing introduces unpredictability into software delivery. The process is slow, subject to human error, and does not scale with complexity.

Consider a synthetic example: a financial services firm releases a minor update to its mobile app's payment module. A developer's small code change unintentionally breaks the user authentication logic.

Without an automated test suite, this regression might not be found for several days during manual testing, or until customers report issues. The consequences include locked accounts, failed transactions, and an overloaded support team. The damage to customer trust and revenue can be significant.

A well-designed automated regression suite is not an expense; it is an insurance policy against systemic failure. It transforms the unpredictable risk of system changes into a manageable, quantifiable process that protects revenue and reputation.

The table below outlines the impact of moving from a manual to an automated approach.

Shift from Manual to Automated Regression Impact

| Metric | Manual Regression | Automated Regression |
|---|---|---|
| Release Velocity | Days or weeks per cycle, slowing innovation. | Hours or minutes, enabling multiple deployments per day. |
| Cost | High operational cost due to repetitive human effort. | Higher initial setup cost but significantly lower long-term total cost of ownership. |
| Test Coverage | Limited and often inconsistent, focused on high-priority paths. | Comprehensive and repeatable, covering edge cases and complex scenarios. |
| Risk of Human Error | High, leading to missed bugs and production incidents. | Minimal, as tests are executed precisely and consistently. |
| Feedback Loop | Slow. Developers wait days for feedback, increasing the cost to fix bugs. | Immediate. Developers get feedback in minutes within the CI/CD pipeline. |

This shift does more than just find bugs faster. It changes how technology delivers business value.

From Technical Debt to a Strategic Capability

For technology leaders, the value of automated regression testing extends beyond catching bugs. It is a strategic tool for managing the total cost of ownership (TCO) of complex software and AI applications.

Here is how it provides returns:

- Mitigates Technical Debt: By identifying regressions early in the development cycle, automation prevents small issues from becoming larger, more expensive problems that slow future development.

- Ensures Predictable Delivery: Automated tests serve as a reliable, repeatable quality gate. This provides leadership with confidence in release schedules.

- Protects Revenue and Reputation: In a high-stakes field like healthcare, a regression in an AI diagnostic model could have serious patient safety implications. Automation provides the rigorous validation needed to deploy these critical systems.

When automated regression testing is treated as essential infrastructure, it is no longer a cost center but a core business enabler. Platforms like DSG.AI's assureIQ provide specialized tools to build and manage these testing systems, ensuring teams can innovate rapidly without compromising stability. It provides the safety needed to move faster and with greater confidence.

Architecting a Resilient and Scalable Test Strategy

Shifting from scattered scripts to a structured automation strategy is an architectural project. A resilient regression suite is not built by automating every manual test. Effective suites are designed with a focus on business risk, maintainability, and scalability.

The foundation of a solid strategy is focusing on the most critical business processes. The goal is not 100% test coverage, a common but expensive objective. The goal is to achieve the most risk mitigation from an efficient set of tests.

Start with What Matters: Critical User Journeys

Before writing automation code, map the user journeys essential to the business. Identify the core functions that, if broken, would cause immediate revenue loss, damage customer trust, or create significant operational problems.

- For an e-commerce site, this includes the full checkout flow: adding an item to the cart, applying a discount, entering payment details, and receiving an order confirmation. A failure here directly impacts revenue.

- For a healthcare AI system, it’s the path from a clinician uploading patient data to the model generating a diagnostic score and that score appearing correctly in the patient's record. A regression here could impact patient care.

The first step is to collaborate with product managers and business stakeholders to rank these workflows. This alignment ensures engineering effort is focused on business priorities, not just what is easy to automate.

The most effective regression suites mirror business priorities. Focus on automating the 20% of user journeys that support 80% of your critical business functions. This risk-based approach delivers value faster and results in a more maintainable asset.

Once this prioritized list is established, you can design test scenarios and determine how to manage the necessary test data.

Get Your Test Data House in Order

Automated tests often fail because of inconsistent or missing test data. A mature strategy treats test data management as a primary component, not an afterthought. This typically involves a hybrid approach that balances realism with security and stability.

Do not test directly against a live production database. This practice leads to unreliable, non-repeatable tests and creates security risks. Instead, establish a dedicated, isolated test environment that can be programmatically reset to a known state before each test run. This is the only way to guarantee consistent tests and reliable outcomes.
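The reset-to-a-known-state idea can be sketched with Python's built-in `sqlite3` module. The `accounts` table, the seed rows, and the `reset_database` helper are illustrative assumptions; a real environment would likely use its own schema and database engine.

```python
import sqlite3

# Hypothetical seed data representing the "known state" for every test run.
SEED_ACCOUNTS = [(1, "alice", 100.0), (2, "bob", 50.0)]


def reset_database(conn: sqlite3.Connection) -> None:
    """Drop and recreate the schema, then reload the seed rows."""
    conn.execute("DROP TABLE IF EXISTS accounts")
    conn.execute(
        "CREATE TABLE accounts (id INTEGER PRIMARY KEY, owner TEXT, balance REAL)"
    )
    conn.executemany("INSERT INTO accounts VALUES (?, ?, ?)", SEED_ACCOUNTS)
    conn.commit()


def fetch_balances(conn: sqlite3.Connection) -> dict:
    """Return {owner: balance} for every account."""
    return dict(conn.execute("SELECT owner, balance FROM accounts ORDER BY id"))


conn = sqlite3.connect(":memory:")  # isolated database, never production
reset_database(conn)

# One test mutates the data...
conn.execute("UPDATE accounts SET balance = 0 WHERE owner = 'alice'")
conn.commit()
dirty = fetch_balances(conn)

# ...and the next run starts from the same known state again.
reset_database(conn)
clean = fetch_balances(conn)
```

Calling the reset helper at the start of every run, rather than trusting the previous run to clean up, is what keeps results repeatable even after a test crashes midway.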

To populate this environment, use two key techniques:

- Anonymized Production Data: Use tools to copy and scrub production data, removing all personally identifiable information (PII). This provides tests with a high degree of realism because they run against data patterns that reflect actual user behavior, but without compliance risks.

- Synthetic Data Generation: Algorithmically create data to test specific edge cases that may be rare or absent in the production dataset. This is essential for validating boundary conditions, checking error handling, and preparing models for new scenarios.

This dual approach makes regression testing both comprehensive and secure. With data under control, the next step is managing the test execution flow. To see how this fits into a larger strategy, view our guide on test orchestration.
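As a rough illustration of the two techniques, the sketch below masks PII fields in a copied record and generates a few seeded synthetic edge cases. The field names, the `mask_pii` and `synthetic_edge_cases` helpers, and the boundary values are all assumptions made for the example.

```python
import hashlib
import random


def mask_pii(record: dict, pii_fields=("name", "email")) -> dict:
    """Replace PII values with a deterministic hashed token."""
    masked = dict(record)
    for field in pii_fields:
        if field in masked:
            digest = hashlib.sha256(str(masked[field]).encode()).hexdigest()[:12]
            masked[field] = f"{field}_{digest}"
    return masked


def synthetic_edge_cases(seed: int = 0) -> list[dict]:
    """Generate boundary-value orders that may be rare in production data."""
    rng = random.Random(seed)  # seeded so every run produces the same data
    amounts = [0.0, 0.01, 999_999.99, -1.0]  # zero, minimum, very large, invalid
    return [
        {
            "name": f"synthetic_user_{i}",
            "email": f"user{i}@example.test",
            "amount": amount,
            "items": rng.randint(1, 5),
        }
        for i, amount in enumerate(amounts)
    ]


production_row = {"name": "Jane Doe", "email": "jane@example.com", "amount": 42.5}
scrubbed = mask_pii(production_row)
dataset = [scrubbed] + synthetic_edge_cases()
```

An unsalted hash is used here only to keep the example short. It is pseudonymization rather than full anonymization, so a production pipeline would more likely rely on a keyed hash or a dedicated data masking tool.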

Strike the Right Balance Between Coverage and Speed

There is a natural tension between leadership's desire for exhaustive test coverage and developers' need for fast feedback. A regression suite that takes six hours to run becomes a bottleneck, defeating the purpose of agile development.

A risk-based testing model resolves this conflict.

This model involves evaluating parts of the system based on two factors: the probability of failure (e.g., areas with recent, complex code changes) and the business impact of failure (e.g., the payment processing module).

From this analysis, you can build different tiers of regression suites that run at different points in your CI/CD pipeline:

- Smoke Test Suite: A small set of about a dozen tests covering the most critical functions. It should run on every code commit and finish in under five minutes to confirm the application is not fundamentally broken.

- Full Regression Suite: A more comprehensive set of tests covering all high-priority user journeys. This suite can run nightly or serve as a quality gate before deploying to a staging environment.

This tiered structure provides benefits for both developers and the business. Developers receive the rapid feedback they need to work efficiently, while the business maintains a thorough check on system integrity before production deployment.
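One way to make the risk model concrete is to score each journey as probability multiplied by impact and map the score to a tier. The 1-to-5 scales, the thresholds, and the journey names below are assumptions chosen for illustration, not recommended values.

```python
from dataclasses import dataclass


@dataclass
class Journey:
    name: str
    failure_probability: int  # 1 (stable) to 5 (recent, complex changes)
    business_impact: int      # 1 (minor) to 5 (revenue or safety critical)

    @property
    def risk(self) -> int:
        return self.failure_probability * self.business_impact


def assign_tier(journey: Journey, smoke_threshold: int = 15,
                full_threshold: int = 6) -> str:
    """Map a risk score to the suite where the journey is first tested."""
    if journey.risk >= smoke_threshold:
        return "smoke"    # runs on every commit (and again in the full suite)
    if journey.risk >= full_threshold:
        return "full"     # runs nightly or before staging
    return "backlog"      # not automated yet


journeys = [
    Journey("checkout_payment", 4, 5),   # risk 20
    Journey("user_login", 3, 5),         # risk 15
    Journey("profile_settings", 2, 3),   # risk 6
    Journey("footer_links", 1, 1),       # risk 1
]
tiers = {j.name: assign_tier(j) for j in journeys}
```

Keeping the scores in a reviewed file gives product and engineering a shared artifact to revisit when priorities or the codebase change.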

Weaving Automation into Your CI/CD Pipeline

An automated test suite is only valuable when integrated into the software delivery lifecycle. For technology leaders, this means making automated regression testing an integral part of the CI/CD pipeline, transforming it from a separate step into a real-time, automated quality gate.

The goal is to build a system where every code change is automatically validated against the most critical business functions. This shifts quality assurance from a slow, manual process to a proactive, automated feedback loop that helps developers increase their speed.

Choosing the Right Triggers and Tools

The first decision is when and how tests should run. The chosen integration points directly affect how quickly developers receive feedback. A common strategy is to trigger different test suites at different pipeline stages.

- On Every Code Commit: A lightweight "smoke test" suite should run automatically when a developer commits code. This provides a quick check (under 5 minutes) to ensure the build is not fundamentally broken.

- Before Merging to Main: A more thorough suite should run on pull requests, acting as a final gatekeeper before new code is merged into the main development branch.

- Nightly Builds: The full regression suite, which may take longer to complete, can be scheduled to run overnight. This provides a complete system health check each morning.

This orchestration requires selecting the right tools. For example, a framework like Playwright can be used for UI testing, while Pytest can be used for API validation. These are initiated by orchestration tools such as Jenkins, GitHub Actions, or CircleCI, which manage the entire workflow.
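The trigger-to-suite mapping can be kept in one small, testable place. In the sketch below, the event names follow GitHub Actions conventions (`push`, `pull_request`, `schedule`), while the marker names `smoke`, `pre_merge`, and `full_regression` are hypothetical and would need to be registered in the project's pytest configuration.

```python
# Hypothetical mapping from pipeline event to a pytest marker.
SUITE_BY_EVENT = {
    "push": "smoke",                # every commit
    "pull_request": "pre_merge",    # gate before merging to main
    "schedule": "full_regression",  # nightly build
}


def build_test_command(event: str) -> list[str]:
    """Return the pytest invocation for a pipeline event."""
    try:
        marker = SUITE_BY_EVENT[event]
    except KeyError:
        raise ValueError(f"No suite configured for event {event!r}") from None
    # `-m` tells pytest to run only tests carrying the given marker.
    return ["pytest", "-m", marker]


commit_command = build_test_command("push")
nightly_command = build_test_command("schedule")
```

Failing loudly on an unknown event is a deliberate choice here: a pipeline stage that silently runs no tests is harder to notice than one that errors.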

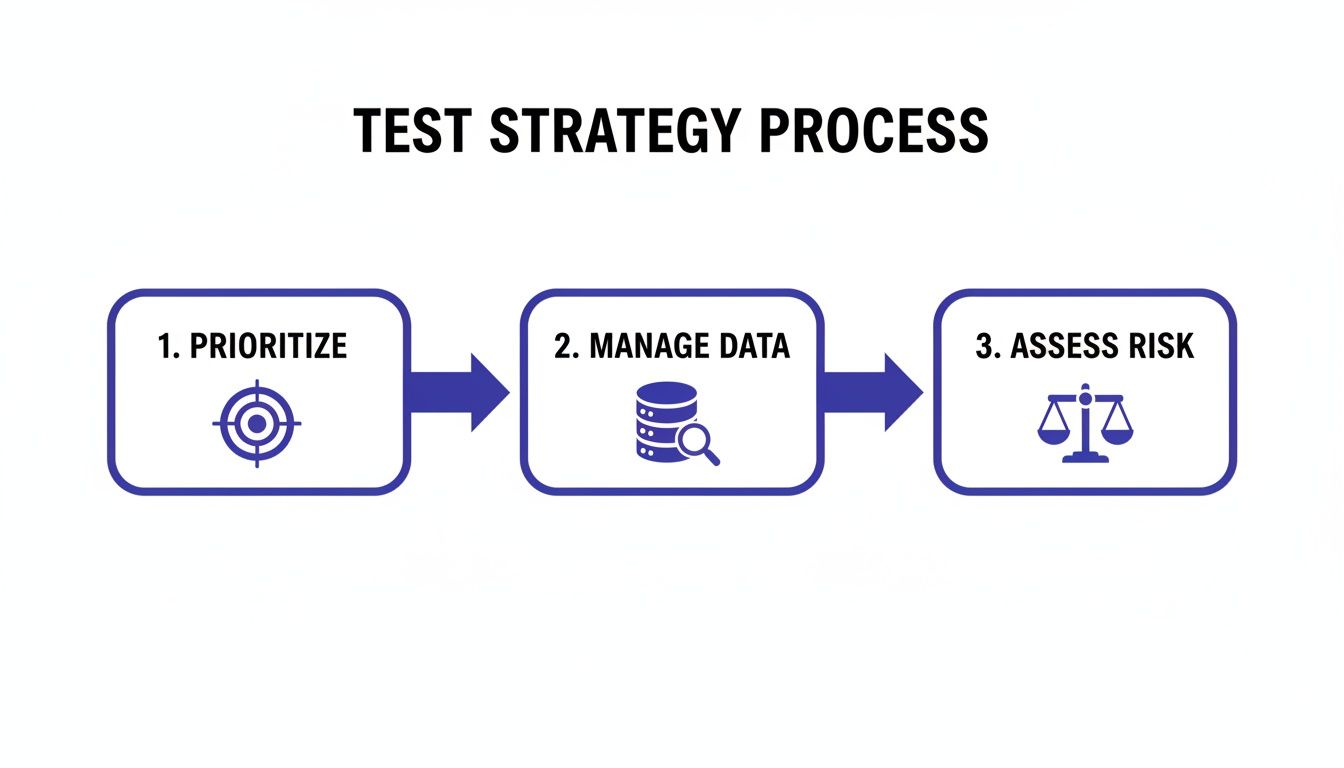

Before integrating these tests, a solid strategy is needed for what to test. This involves prioritizing, managing test data, and assessing risk.

This process is fundamental. First, determine what to test based on business impact. Second, ensure the right data is available. Finally, focus automation efforts where the risk is highest.

Shrinking the Feedback Loop with Parallelization

One of the largest obstacles to CI/CD integration is test execution time. If a full regression suite takes hours, it becomes a significant bottleneck. The solution is parallelization—running multiple tests simultaneously across different environments or machines.

By distributing the test load, you can reduce the total execution time. We have observed enterprise teams reduce a four-hour feedback loop to less than 20 minutes using this technique. This is the difference between waiting overnight for results and receiving comprehensive feedback while still focused on the task.
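The arithmetic behind this can be sketched by sharding tests across workers according to their historical durations. The example below uses a simple greedy, longest-first assignment. The suite size, per-test duration, and worker count are hypothetical, and the estimate ignores environment setup and other overhead.

```python
import heapq


def shard_tests(durations: dict[str, float], workers: int) -> list[list[str]]:
    """Greedy longest-first: give each test to the least-loaded worker."""
    heap = [(0.0, i) for i in range(workers)]  # (total minutes, worker index)
    heapq.heapify(heap)
    shards: list[list[str]] = [[] for _ in range(workers)]
    by_longest = sorted(durations.items(), key=lambda kv: kv[1], reverse=True)
    for name, minutes in by_longest:
        load, idx = heapq.heappop(heap)
        shards[idx].append(name)
        heapq.heappush(heap, (load + minutes, idx))
    return shards


def wall_clock(durations: dict[str, float], shards: list[list[str]]) -> float:
    """The run finishes when the slowest worker finishes."""
    return max(sum(durations[t] for t in shard) for shard in shards)


# Hypothetical suite: 120 tests of 2 minutes each, 4 hours when run one by one.
durations = {f"test_{i:03d}": 2.0 for i in range(120)}
sequential_minutes = sum(durations.values())          # 240.0
shards = shard_tests(durations, workers=15)
parallel_minutes = wall_clock(durations, shards)      # 16.0
```

Balancing by recorded duration rather than by test count matters once tests vary in length, because a single slow shard determines the total feedback time.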

The goal is to make the automated regression feedback loop so fast that developers view it as a helpful tool, not a bureaucratic hurdle. When a test failure report arrives in minutes, it becomes a natural part of the coding process.

This speed transforms testing from a drag on velocity into an accelerator. It allows teams to deploy more frequently and with greater confidence.

Creating Actionable Alerts and Reports

The final step is to ensure test results are visible, understandable, and actionable. A test failure is just noise if the report is lost in an inbox or sent to the wrong person.

A well-configured system routes failure notifications directly to the team or individual developer who pushed the code that caused the failure. This can be done through integrations with tools like Slack, Microsoft Teams, or Jira.

An effective report should include:

- Clear Failure Identification: Pinpoint exactly which test failed and at what step.

- Logs and Screenshots: Provide context, such as browser console logs or UI screenshots at the moment of failure.

- Traceability: A direct link to the specific code commit or pull request that triggered the test run.
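A report with these three elements can be modeled as a small data structure plus a routing function. The sketch below does not call any real chat or ticketing API; the `FailureReport` fields, the channel names, and the author-to-channel mapping are assumptions for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class FailureReport:
    test_name: str
    failed_step: str
    commit_sha: str
    commit_author: str
    artifacts: list[str] = field(default_factory=list)  # logs, screenshots


# Hypothetical mapping from commit author to the owning team's channel.
CHANNEL_BY_AUTHOR = {"dana": "#payments-team"}
DEFAULT_CHANNEL = "#qa-triage"


def route(report: FailureReport) -> tuple[str, str]:
    """Pick a destination channel and build a context-rich message."""
    channel = CHANNEL_BY_AUTHOR.get(report.commit_author, DEFAULT_CHANNEL)
    lines = [
        f"FAILED: {report.test_name} at step '{report.failed_step}'",
        f"Commit: {report.commit_sha} by {report.commit_author}",
    ]
    lines += [f"Artifact: {path}" for path in report.artifacts]
    return channel, "\n".join(lines)


report = FailureReport(
    test_name="test_checkout_flow",
    failed_step="submit payment",
    commit_sha="abc1234",
    commit_author="dana",
    artifacts=["logs/console.txt", "screenshots/payment.png"],
)
channel, message = route(report)
```

The fallback channel ensures a failure from an unmapped author still reaches someone instead of being dropped.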

When this type of immediate, context-rich feedback is provided, automated testing becomes a powerful diagnostic tool. This level of robust monitoring is also a cornerstone of responsible AI governance. We address this further with our manageAI Monitoring platform, which ensures that regressions—in traditional code or complex AI models—are caught and fixed with precision and speed.

Measuring The Business Value of Your Testing Program

Eventually, you will need to justify the investment in your automated regression testing program. To do this, you must use business-oriented language. While engineers focus on pass/fail rates and execution times, executives want to see the impact on business outcomes.

This requires showing a clear return on investment (ROI). It involves proving that your quality program is a strategic asset that drives efficiency and protects revenue, not just a budget line item.

From Technical Metrics to Business KPIs

Frame your program's value by connecting testing efforts to business priorities: speed, cost, and risk. Shift from reporting the number of tests run to demonstrating how you have improved key business indicators.

Here are the essential KPIs to start tracking:

- Fewer Critical Production Incidents: This is the primary metric. Track the number of Severity 1 and Severity 2 issues caught before they reach production, and measure the number of critical bugs reported by customers within 72 hours of a new deployment. A steady decline in the customer-reported number demonstrates the effectiveness of your automation.

- Faster Fixes for Regressions (MTTR): When a regression occurs, track how long it takes the team to resolve it. A solid automated suite provides developers with immediate, precise feedback, helping them find the root cause in minutes instead of days. Reducing Mean Time To Resolution (MTTR) from a baseline of 8-12 hours to 1-2 hours is a significant productivity gain.

- Coverage of Business-Critical Workflows: Move beyond generic code coverage percentages to focus on business coverage. Map automated tests directly to your top 5-10 most critical user journeys, such as the checkout process or a new user sign-up. Reporting 95%+ automated coverage on these specific flows provides leadership with confidence that you are protecting key business functions.

Establish a clear baseline before implementing a full automation program. Track these KPIs for one quarter to determine your current state. Six months after implementation, measure them again. Presenting data that shows a 20-40% reduction in production defects provides a powerful narrative for budget discussions.
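The before-and-after comparison is simple arithmetic, sketched below. The baseline and six-month figures are invented for the example and are not measurements from any real program.

```python
def percent_reduction(baseline: float, current: float) -> float:
    """Percentage drop from baseline to current, rounded to one decimal."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return round((baseline - current) / baseline * 100, 1)


# Hypothetical quarterly baseline and the same KPIs six months later.
baseline = {"production_defects": 50, "mttr_hours": 10.0}
after_six_months = {"production_defects": 35, "mttr_hours": 2.0}

improvement = {
    kpi: percent_reduction(baseline[kpi], after_six_months[kpi])
    for kpi in baseline
}
# improvement == {"production_defects": 30.0, "mttr_hours": 80.0}
```

Reporting the same KPIs with the same definitions in both periods matters more than the formula itself, since a changed severity definition would make the comparison meaningless.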

Quantifying the Financial and Productivity Impact

From a financial perspective, automated regression testing is a direct way to reduce software delivery costs. For a large enterprise running thousands of test cases, the financial impact is significant. Case studies often show 30–50% reductions in total testing effort and 20–40% fewer production defects after integrating a robust automated suite into the CI/CD pipeline.

Reducing a multi-day manual regression cycle to a few hours returns hundreds or thousands of engineering hours to the business annually. More data is available in the automation testing market report on marketsandmarkets.com.

This reclaimed time is not just a cost saving; it is a direct boost to innovation. When engineers are not fixing preventable bugs or waiting for manual QA, they can focus on building new features that contribute to company growth. For a deeper analysis, see a comprehensive guide to engineering productivity measurement.

Measuring business value is about demonstrating the connection between a faster, more reliable test suite and fewer customer support calls, lower operational costs, and a more confident engineering team.

Future-Proofing Your Strategy with Advanced Techniques

Once a solid, integrated regression testing program is in place, the next step is to adopt advanced deployment patterns. These techniques de-risk production releases. While a comprehensive test suite confirms an application should work, strategies like canary releases and blue-green deployments prove that it does work in a live environment, with a built-in safety net.

These patterns reduce the "blast radius" of a potential failure. Instead of a high-risk, all-at-once deployment, new code is exposed to a small subset of users or infrastructure first. This allows automated tests to run against a live production environment, catching subtle, environment-specific issues that are difficult to replicate in staging.

Minimizing Risk with Advanced Deployment Patterns

These strategies are not replacements for automated regression testing; they are complements that build a more resilient delivery pipeline. Each pattern serves a different purpose, allowing you to choose the appropriate level of risk mitigation for a given release.

With a canary release, for example, a small fraction of live traffic—such as 5%—is routed to the new version. The other 95% of users continue to use the stable version. Automated health checks and key regression tests run against this "canary" instance. If metrics are positive, traffic is gradually increased until 100% of users are on the new code.
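The control loop of a canary rollout can be simulated in a few lines. In this sketch the `error_rate_at` callable is a stand-in for real health checks and regression tests against the canary instance, and the traffic stages and 1% error threshold are assumptions.

```python
from typing import Callable


def run_canary(
    stages: list[int],
    error_rate_at: Callable[[int], float],
    max_error_rate: float = 0.01,
) -> tuple[str, list[tuple[int, bool]]]:
    """Walk through traffic stages and roll back at the first unhealthy one."""
    history = []
    for percent in stages:
        rate = error_rate_at(percent)      # stand-in for health checks
        healthy = rate <= max_error_rate
        history.append((percent, healthy))
        if not healthy:
            return "rolled_back", history  # stop before exposing more users
    return "promoted", history


STAGES = [5, 25, 50, 100]  # percent of live traffic on the new version

# A release that stays healthy at every stage.
healthy_release = run_canary(STAGES, lambda percent: 0.002)

# A release whose error rate climbs once it receives more traffic.
faulty_release = run_canary(
    STAGES, lambda percent: 0.002 if percent <= 5 else 0.03
)
```

In the faulty case the rollout stops at 25%, so the remaining 75% of users never see the regression.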

The table below outlines common patterns.

Advanced Deployment Patterns for Risk Mitigation

These advanced deployment strategies, combined with automated regression testing, significantly reduce the impact of potential failures in production.

| Pattern | Description | Best For |
|---|---|---|
| Blue-Green Deployment | Maintain two identical production environments ("blue" and "green"). Deploy the new version to the inactive environment, run the full regression suite, and then switch a router to direct all traffic to the new environment. | High-stakes releases where downtime is not an option. It allows for instant rollback by reversing the router switch. |
| Canary Release | Gradually roll out the new version to a small subset of users while monitoring performance and running targeted automated tests. If successful, slowly increase exposure until all users are on the new version. | Releases for testing new features with real user traffic while minimizing the impact of potential bugs. Also ideal for validating performance changes under a real-world load. |

By choosing the right pattern for your release, you can approach deployments with confidence, knowing a controlled, observable process is in place.

The ultimate goal of these patterns is to make production deployments routine. By combining a robust automated regression suite with a controlled rollout, you remove the drama and uncertainty from release day.

The Rise of AI in Testing Itself

The next frontier in this field is embedding artificial intelligence directly into the testing process. This shift is already underway, moving quality assurance from a reactive function to a more predictive and self-adapting one. AI is becoming a core part of the tester's toolkit.

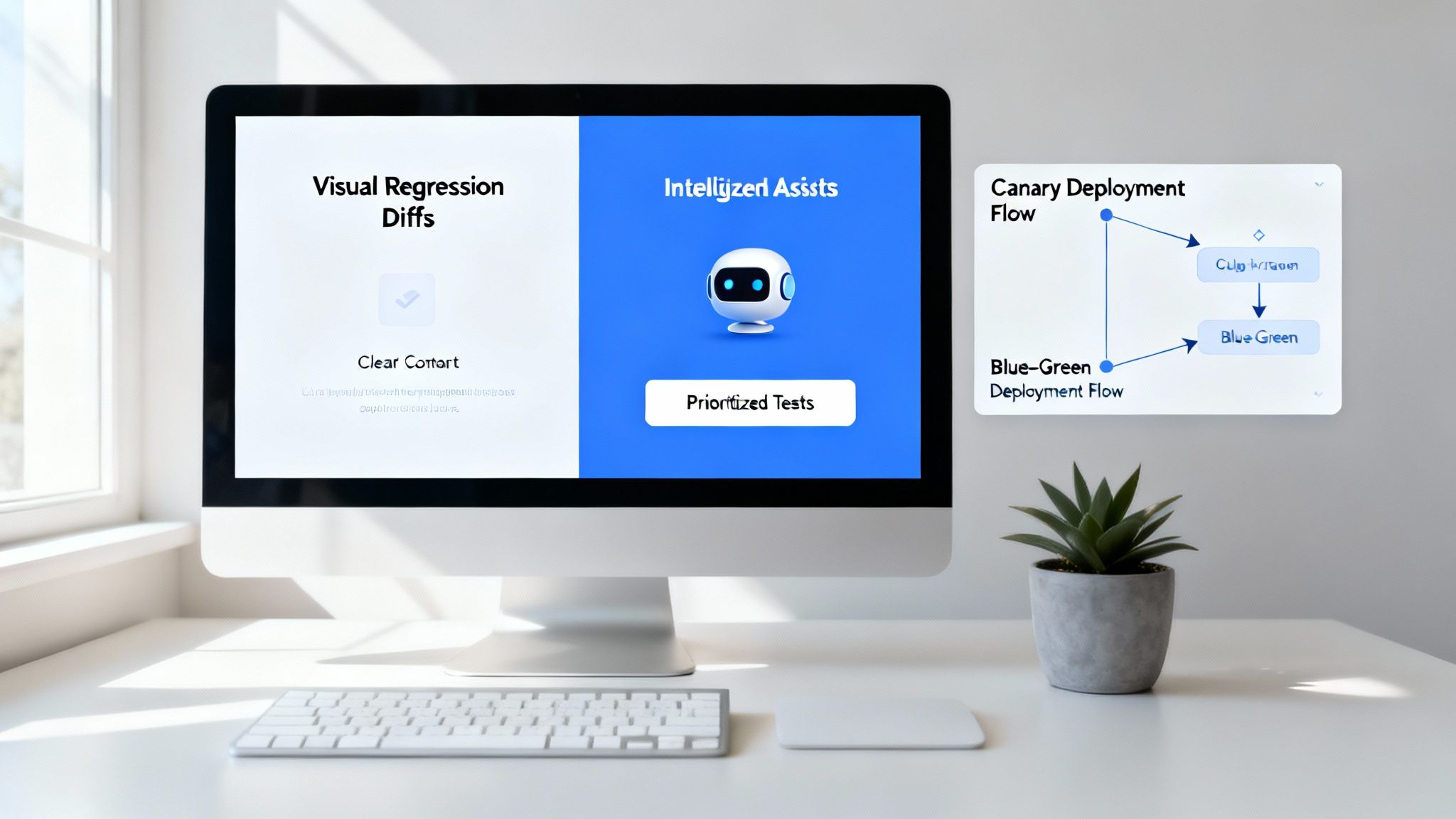

One of the fastest-growing applications is visual regression testing. This technique moves beyond simple functional checks to detect unintended changes in the user interface. It works by capturing screenshots of an application's UI and comparing them, pixel by pixel, against an approved baseline. This is valuable for catching subtle UI bugs, such as a misaligned button or an incorrect font, that traditional functional automation would miss.
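The core comparison can be sketched without any imaging library by treating a screenshot as a grid of RGB tuples. The `diff_ratio` helper, the tiny 4x4 images, and the 1% threshold are assumptions for illustration; real tools typically add tolerance for anti-aliasing and dynamic content.

```python
Pixel = tuple[int, int, int]
Image = list[list[Pixel]]


def diff_ratio(baseline: Image, candidate: Image) -> float:
    """Fraction of pixels that differ between two same-sized images."""
    same_shape = len(baseline) == len(candidate) and all(
        len(a) == len(b) for a, b in zip(baseline, candidate)
    )
    if not same_shape:
        raise ValueError("images must have identical dimensions")
    total = sum(len(row) for row in baseline)
    if total == 0:
        raise ValueError("images must not be empty")
    changed = sum(
        p != q
        for row_a, row_b in zip(baseline, candidate)
        for p, q in zip(row_a, row_b)
    )
    return changed / total


WHITE, BLACK = (255, 255, 255), (0, 0, 0)
baseline = [[WHITE] * 4 for _ in range(4)]   # approved 4x4 snapshot
candidate = [row[:] for row in baseline]     # new snapshot
candidate[1][2] = BLACK                      # two pixels changed
candidate[3][0] = BLACK

ratio = diff_ratio(baseline, candidate)      # 2 of 16 pixels differ
passed = ratio <= 0.01                       # hypothetical 1% threshold
```

Here the check fails because 12.5% of pixels changed, which would prompt a human to either fix the UI or approve the new snapshot as the baseline.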

The market reflects this shift. Valued at approximately USD 0.52 billion in 2024, the visual regression testing market is projected to reach USD 2.33 billion by 2032. This indicates its growing importance in enterprise QA. More data is available in the visual regression testing market report on verifiedmarketresearch.com.

AI’s influence extends beyond visuals. It is making test suites smarter and more efficient. Here are two key applications gaining traction:

- Self-Healing Tests: Test maintenance is a significant operational cost. AI-powered tools can automatically detect when a UI element's selector has changed (e.g., an ID or XPath) and intelligently update the test script. This reduces the manual effort required to fix broken tests after a UI refresh.

- AI-Powered Test Selection: Instead of running the entire regression suite for every minor change, AI can analyze the code in a new commit and select only the most relevant tests. This can reduce test execution times by 70% or more, giving developers faster feedback without sacrificing meaningful coverage.
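To show the shape of test selection, the sketch below uses a plain lookup table from source files to the tests that exercise them. This is a rule-based stand-in, not an AI model: an AI-powered selector would predict this mapping rather than read it from a table. All file and test names are hypothetical.

```python
# Hypothetical map of source files to the tests that exercise them,
# for example derived from coverage data of previous runs.
TESTS_BY_SOURCE_FILE = {
    "payments/charge.py": {"test_checkout", "test_refund"},
    "auth/login.py": {"test_login", "test_checkout"},
    "ui/footer.py": {"test_footer_links"},
}
ALWAYS_RUN = {"test_smoke_homepage"}


def select_tests(changed_files: list[str]) -> list[str]:
    """Return the tests relevant to a commit, sorted for stable output."""
    selected = set(ALWAYS_RUN)
    for path in changed_files:
        if path not in TESTS_BY_SOURCE_FILE:
            # Unknown file: fall back to the whole suite to stay safe.
            return sorted(set().union(ALWAYS_RUN, *TESTS_BY_SOURCE_FILE.values()))
        selected |= TESTS_BY_SOURCE_FILE[path]
    return sorted(selected)


footer_change = select_tests(["ui/footer.py"])
unknown_change = select_tests(["build/config.yaml"])
```

Falling back to the full suite for unmapped files trades some speed for safety, which is usually the right default until the mapping has earned trust.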

These advancements provide a clear roadmap. By pairing proven deployment patterns with emerging AI-driven testing techniques, you build a resilient system for today and prepare for the increasing complexity of future software.

Navigating Key Questions in Enterprise Test Automation

When implementing a new automated regression testing strategy, many questions arise. Here are answers to some of the most common questions from technology leaders.

What’s The Biggest Mistake to Avoid When Starting Out?

The most common mistake is attempting to automate 100% of existing manual test cases from the beginning. This approach often fails. It results in an expensive, brittle test suite that is difficult to maintain and that the team does not trust.

A better starting point is a risk-based approach. Collaborate with product and business leaders to identify the most critical business workflows. Ask the question: "What absolutely cannot break?"

Start there. Focus initial efforts on building a small, stable, and reliable suite of tests covering only those core user journeys. This approach delivers value quickly, builds confidence in the automation program, and provides a solid foundation for expansion.

A good rule of thumb is to focus on automating the 20% of tests that cover 80% of your most critical business functions. This provides a quick, sustainable return on your investment in automated regression testing.

How Should We Handle Test Data for Our Regression Suites?

Test data management is a frequent cause of failure in automation projects. If tests are unreliable or results are inconsistent, a poor data strategy is often the cause. A hybrid approach is the most resilient.

First, automated tests should never access a live production database. This creates a major security risk and makes tests non-repeatable, as the data is constantly changing.

Instead, set up a dedicated, isolated test database that can be programmatically wiped and reset to a known state before each test run. This ensures consistency. Then, populate it using a mix of two sources:

- Anonymized Production Data: To create realistic test datasets, copy and scrub production data. Use data masking tools to remove or replace all personally identifiable information (PII). This allows tests to reflect real-world scenarios without creating compliance issues.

- Synthetic Data Generation: For edge cases that are rare in production data, use tools to algorithmically generate the necessary data. This is critical for testing error handling, boundary conditions, and complex business rules.

This dual strategy provides the realism of production data with the control of synthetic data.

How Does This Apply to AI and ML Models?

With AI and machine learning, automated regression testing has an additional dimension. It involves testing not just code, but also model regression, or performance drift. You must continuously prove that a newly trained model performs as well as or better than the model it replaces.

Your automated suite for an AI system must cover the entire MLOps pipeline, including checks for:

- Data Validation: Has the input data schema changed in a way that will break the model?

- Model Performance: Run the new model against a "golden" baseline dataset and automatically check key metrics like accuracy, precision, and recall against established thresholds.

- Business Logic: Does the model's output still trigger the correct downstream business outcome?
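The model performance check can be sketched as a gate that compares a candidate model's metrics on the golden dataset against the current model's. The labels, predictions, and the 0.01 tolerance below are invented for illustration, and the metric functions are written by hand to keep the example free of dependencies.

```python
def classification_metrics(y_true: list[int], y_pred: list[int]) -> dict:
    """Accuracy, precision, and recall for binary labels (1 = positive)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == 1 and p == 1 for t, p in pairs)
    fp = sum(t == 0 and p == 1 for t, p in pairs)
    fn = sum(t == 1 and p == 0 for t, p in pairs)
    correct = sum(t == p for t, p in pairs)
    return {
        "accuracy": correct / len(pairs),
        "precision": tp / (tp + fp) if tp + fp else 0.0,
        "recall": tp / (tp + fn) if tp + fn else 0.0,
    }


def regression_gate(candidate: dict, baseline: dict, tolerance: float = 0.01) -> list:
    """Return the metrics where the candidate is worse than baseline - tolerance."""
    return [m for m in baseline if candidate[m] < baseline[m] - tolerance]


# Hypothetical golden dataset and predictions from both model versions.
golden_labels   = [1, 1, 1, 1, 0, 0, 0, 0, 1, 0]
current_preds   = [1, 1, 1, 0, 0, 0, 0, 1, 1, 0]
candidate_preds = [1, 1, 1, 1, 0, 0, 1, 1, 1, 0]

baseline_metrics = classification_metrics(golden_labels, current_preds)
candidate_metrics = classification_metrics(golden_labels, candidate_preds)
failures = regression_gate(candidate_metrics, baseline_metrics)
```

In this example the candidate improves recall but produces more false positives, so the gate flags precision even though accuracy is unchanged. That is the kind of trade-off a single headline metric would hide.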

The goal is to ensure the model and its surrounding infrastructure remain stable and predictable. For those working with modern AI systems, Robert C. Martin's insights on Clean Code and AI offer valuable perspective.

How Can We Make Sure Our AI Testing Complies With Regulations?

With new regulations like the EU AI Act, your automated regression suite serves as evidence for compliance. It is no longer sufficient to claim a model is fair; you must be able to prove it continuously.

This requires designing tests that explicitly validate fairness, transparency, and robustness. For example, build automated test cases that feed the model specific data slices to check for bias against protected demographic groups.
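A slice-based bias check might look like the sketch below, which compares the rate of positive predictions across groups. The difference in positive rates is only one of several possible fairness measures, and the group labels, data, and 0.1 threshold are assumptions for the example rather than values taken from any regulation.

```python
from collections import defaultdict


def positive_rate_by_group(records: list[tuple[str, int]]) -> dict:
    """records are (group, prediction) pairs with prediction in {0, 1}."""
    totals, positives = defaultdict(int), defaultdict(int)
    for group, prediction in records:
        totals[group] += 1
        positives[group] += prediction
    return {group: positives[group] / totals[group] for group in totals}


def max_disparity(rates: dict) -> float:
    """Largest gap in positive-prediction rate between any two groups."""
    return max(rates.values()) - min(rates.values())


# Hypothetical model outputs on two demographic slices of a test set.
records = (
    [("group_a", 1)] * 6 + [("group_a", 0)] * 4
    + [("group_b", 1)] * 3 + [("group_b", 0)] * 7
)

rates = positive_rate_by_group(records)    # group_a: 0.6, group_b: 0.3
disparity = max_disparity(rates)
fairness_check_passed = disparity <= 0.1   # hypothetical threshold
```

Running a check like this on every retrained model, and versioning both the slices and the threshold, is what turns a fairness claim into repeatable evidence.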

The execution logs, versioned test cases, and detailed reports from your automation suite become a clear, auditable trail. This documentation proves to regulators that you are proactively monitoring your AI system against its declared specifications. You can further integrate your testing platform with a Governance, Risk, and Compliance (GRC) tool to automate the collection of this evidence.

At DSG.AI, we help enterprises build, deploy, and manage production-grade AI systems with confidence. Our architecture-first approach and specialized platforms like assureIQ and manageAI Monitoring provide the foundation for resilient, compliant, and high-performing AI. Learn more about how we turn data into a competitive advantage by exploring our past projects.