Written by:

Editorial Team

Transitioning artificial intelligence from a research project to a core business driver requires a robust, scalable, and governed operational framework. Many organizations lack a mature MLOps practice, the discipline that bridges the gap between model development and reliable production deployment. Without it, models fail to deliver value and become stranded assets that introduce risk and operational drag. This guide analyzes twelve leading MLOps platforms to help technology and governance leaders make informed investment decisions.

We evaluate each platform's core capabilities, from data ingestion and model training to deployment, real-time monitoring, and governance. You will find a structured breakdown of strengths and weaknesses, pricing signals, and crucial integration notes for building a technology-agnostic AI stack. This analysis is tailored for enterprise buyers navigating requirements around security, scalability, and regulatory compliance. While these platforms manage the model lifecycle, the underlying code is a critical component. To understand the broader landscape of tools that aid developers, explore the guide on the best AI for writing code.

Our goal is to move beyond generic feature lists and provide a pragmatic, in-depth resource. Each review includes specific use cases and practical considerations to help you select the platform that aligns with your organization's operational needs and strategic objectives. This curated list is your starting point for building a production-grade AI infrastructure.

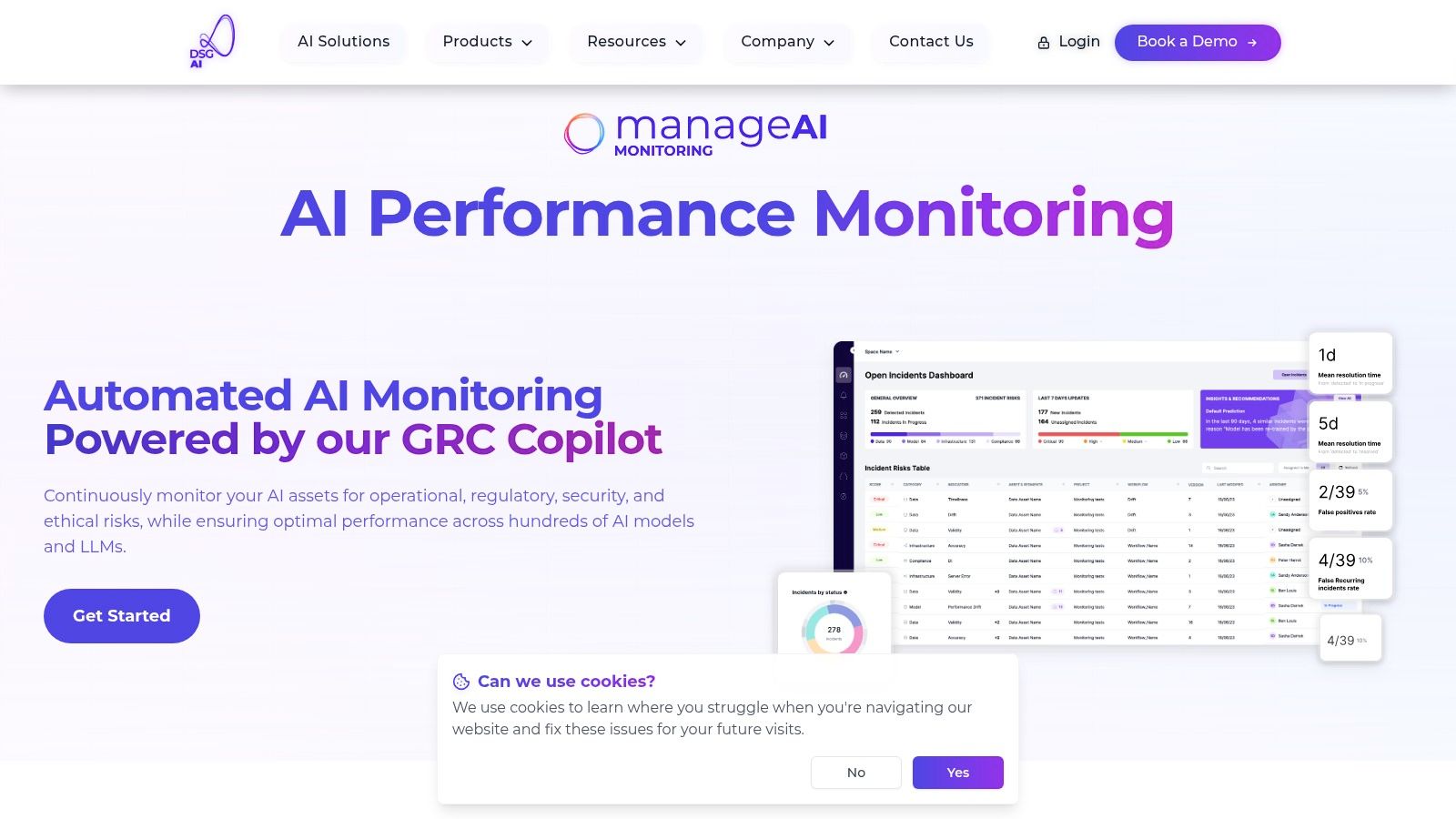

1. ManageAI Platform — AI Performance Monitoring

ManageAI from DSG.AI is an enterprise-grade solution focused on the post-deployment phase of the MLOps lifecycle: performance monitoring and risk management. Where many platforms focus on the build-and-deploy pipeline, ManageAI ensures that AI/ML models and LLMs in production deliver sustained business value while adhering to operational and regulatory standards. Its architecture-first, technology-agnostic approach makes it an overlay for existing MLOps stacks, providing a unified observability layer without forcing vendor lock-in.

This platform is engineered to give technology leaders, such as CTOs and Heads of AI, a real-time, comprehensive view into model health. It moves beyond basic drift detection to connect model behavior directly to business KPIs, answering questions about ROI, risk exposure, and operational efficiency. The result is a proactive, rather than reactive, approach to AI governance.

Core Capabilities and Differentiators

ManageAI’s strength lies in its extensive, out-of-the-box monitoring capabilities and its focus on enterprise-scale operations and governance.

- Comprehensive Monitoring Scope: The platform continuously tracks hundreds of models simultaneously with over 50 customizable monitors. These cover a wide spectrum of potential issues, from data quality and model drift to bias, explainability, security vulnerabilities, and ethical risks. This multi-faceted approach is essential for organizations operating in regulated industries.

- Proactive Risk Management: Instead of just flagging problems, ManageAI provides incident alerting and triage workflows. This allows teams to investigate and resolve issues before they escalate, reducing model downtime and mitigating potential compliance penalties or customer-facing failures.

- Enterprise-Ready Integration: Backed by over 250 production deployments, the platform integrates into existing data pipelines. DSG.AI’s structured six-week implementation methodology focuses on accelerating time-to-value. For broader context on keeping AI models and MLOps infrastructure healthy, a deeper dive into top monitoring and observability tools is recommended.

- Regulatory and GRC Alignment: A key differentiator is its integration with DSG.AI’s Responsible AI and Agentic GRC suite. This makes it well suited to organizations preparing for regulations like the EU AI Act, because it provides the auditability and documentation that compliance requires. You can discover more about the ManageAI Platform’s capabilities on their official website.
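To make the idea of a drift monitor concrete, here is a minimal sketch of one widely used statistic, the Population Stability Index (PSI), which compares the distribution of a feature at training time with its distribution in production. This is an illustrative assumption about what a basic drift check can look like, not a description of how ManageAI or any other platform listed here implements its monitors; the function name, bin count, and smoothing constant are all hypothetical choices.

```python
import math
from typing import Sequence


def population_stability_index(
    expected: Sequence[float],
    actual: Sequence[float],
    bins: int = 10,
    eps: float = 1e-6,
) -> float:
    """PSI between a reference sample and a production sample of one feature."""
    lo, hi = min(expected), max(expected)
    # Equal-width bins over the reference range; fall back to 1.0 if the
    # reference feature is constant so we never divide by zero.
    width = (hi - lo) / bins or 1.0

    def bucket_shares(values: Sequence[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            # Clamp so production values outside the reference range
            # land in the first or last bin.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # Floor each share at eps so the logarithm below stays defined.
        return [max(c / len(values), eps) for c in counts]

    e, a = bucket_shares(expected), bucket_shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Hypothetical data: a uniform reference sample and a shifted production sample.
reference = [i / 100 for i in range(100)]
production = [0.5 + i / 200 for i in range(100)]
drift_score = population_stability_index(reference, production)
```

A common rule of thumb treats a PSI above roughly 0.25 as a significant shift worth investigating, though the right threshold depends on the feature and the business context. Connecting such a score to a business KPI, as the section above describes, is a separate step that this sketch does not cover.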

Strengths and Limitations

Pros:

- Scale and Coverage: Automated monitoring across hundreds of models and LLMs with 50+ monitors is built for enterprise scale.

- Holistic Risk Scope: Addresses operational, regulatory, security, and ethical risks in a single platform.

- Proactive Operations: Real-time risk management and incident workflows reduce business impact.

- No Vendor Lock-In: A technology-agnostic architecture ensures full IP ownership and integrates with existing tools.

Cons:

- Enterprise Focus: Pricing and operational overhead may be prohibitive for smaller teams or startups.

- Data Access Dependent: Full effectiveness requires access to model internals and business data, which can be a limitation with black-box models.

Website: https://www.dsg.ai/manageai

2. Amazon SageMaker (AWS)

Amazon SageMaker is a fully managed service from AWS designed to cover the entire machine learning lifecycle. It provides an integrated suite of tools for data labeling, preparation, model training, tuning, deployment, and management. For enterprises already invested in the AWS ecosystem, SageMaker offers a native solution to operationalize ML models at scale. Its key strength is its deep integration with the broader AWS stack, including services like S3 for data storage, IAM for granular access control, and CloudWatch for monitoring.

This platform's modularity and scalability make it a strong contender. Teams can use specific components like SageMaker Studio for a unified development environment, SageMaker Pipelines for orchestrating CI/CD workflows, or its managed inference endpoints that support real-time and batch predictions with auto-scaling.

Key Considerations

- Strengths: SageMaker benefits from AWS's enterprise-grade security, extensive compliance certifications, and a pay-as-you-go pricing model that allows for cost management of individual components like training jobs or endpoint hosting.

- Limitations: The primary drawback is its complexity. The pricing model, while granular, can be difficult to forecast for end-to-end projects. The user experience can also present a steep learning curve for teams not already proficient with AWS services.

- Pricing: Component-based, pay-per-use model. Costs are tied to specific resources like compute instance hours, storage, and data processing.

- GRC Implications: Provides extensive lineage tracking and artifact management through its Model Registry and Pipelines, which helps create the audit trail that regulations require.

Website: https://aws.amazon.com/sagemaker/

3. Google Cloud Vertex AI

Google Cloud Vertex AI is a unified machine learning platform that streamlines the development and deployment of ML models and generative AI applications. It offers a serverless experience with a suite of tools that cover the entire ML lifecycle, from data preparation and feature engineering to model training, deployment, and monitoring. For organizations already leveraging the Google Cloud Platform (GCP) ecosystem, Vertex AI provides a highly integrated path to operationalize ML at scale. Its differentiator is the unification of traditional ML workflows with generative AI capabilities.

This platform provides a cohesive and managed environment that reduces operational overhead. Teams can leverage integrated services like the Feature Store for managing features, ML Metadata for tracking artifacts, and a Model Registry for versioning. Vertex AI Pipelines, built on Kubeflow Pipelines, allows for the creation of robust, repeatable CI/CD workflows, while its managed endpoints support autoscaling for real-time and batch predictions.

Key Considerations

- Strengths: Vertex AI delivers a cohesive developer experience, tightly integrating data services like BigQuery with its ML tools. Its generative AI offerings, including access to foundation models and tools for grounding, provide an advantage for building modern AI applications.

- Limitations: The platform is most effective for teams committed to the GCP ecosystem, as integrations outside of it are less direct. While pricing pages are transparent, forecasting total project costs can be complex due to the combination of multiple service SKUs.

- Pricing: Follows a component-based, pay-per-use model. Costs are calculated based on specific usage, such as training hours, prediction node hours, and storage in the Feature Store or Model Registry.

- GRC Implications: The platform's integrated ML Metadata and Model Registry provide strong foundations for lineage tracking and model governance, which are crucial for creating auditable systems.

Website: https://cloud.google.com/vertex-ai

4. Azure Machine Learning (Azure ML)

Azure Machine Learning is Microsoft's cloud-based service for accelerating the machine learning lifecycle. It offers an enterprise-grade platform that equips data scientists and developers with a comprehensive set of tools for building, training, and deploying models. For organizations already leveraging the Microsoft Azure ecosystem, Azure ML provides a deeply integrated environment. Its advantage lies in its connection to other Microsoft services like Microsoft Entra ID (formerly Azure Active Directory) for security, Key Vault for secrets management, and Virtual Networks for secure environments.

Azure ML excels in enterprise governance and hybrid flexibility. It enables teams to manage the end-to-end workflow through components like Azure ML Pipelines for CI/CD, a shared model registry for versioning, and managed endpoints for scalable inference. Its introduction of Prompt Flow specifically addresses the need for developing and managing Large Language Model (LLM) applications.

Key Considerations

- Strengths: The platform's major benefits are its integration with enterprise identity and security controls (Microsoft Entra ID, formerly Azure AD) and its support for hybrid and multi-cloud scenarios via Azure Arc. Its Responsible AI dashboard provides tools for model interpretability and fairness assessment.

- Limitations: The number of interconnected Azure services required for a full MLOps setup can create configuration complexity. Forecasting costs can also be challenging as they are spread across multiple resource types, such as compute, storage, and networking.

- Pricing: Follows a pay-as-you-go model. Costs are calculated based on consumption of various Azure resources, including virtual machine hours for training and inference, storage, and other connected services.

- GRC Implications: Azure ML provides governance capabilities through its model registry, data lineage tracking, and audit trails. Integration with Microsoft Purview (formerly Azure Purview) enhances data governance, helping organizations meet compliance requirements.

Website: https://azure.microsoft.com/services/machine-learning/

5. Databricks Data Intelligence Platform (Model Serving)

Databricks extends its unified data and AI lakehouse platform to the operationalization phase with its integrated Model Serving capabilities. It is designed for organizations that want to eliminate the friction between data preparation, model training, and deployment by keeping everything within a single environment. For enterprises standardizing on the lakehouse architecture, Databricks provides a path from raw data to production AI, powered by its integration with MLflow for experiment tracking, model registry, and lineage.

This platform offers a data-centric approach to model deployment. Its serverless compute for endpoints, which supports both CPUs and GPUs, allows teams to serve classical ML models and large language models (LLMs) with elastic scaling without managing underlying infrastructure. This unified approach simplifies governance and accelerates the model-to-production timeline.

Key Considerations

- Strengths: The platform's core advantage is its unified data-to-production workflow. It offers flexible serving options, including pay-per-token APIs for foundation models and provisioned throughput for high-demand workloads, all governed under the same platform.

- Limitations: Its full value is realized when an organization's data already resides within the Databricks lakehouse. For companies with disparate data ecosystems, integrating and realizing the same experience can be more complex.

- Pricing: Component-based, with different models for different workloads. Includes provisioned throughput (per hour) for consistent workloads and pay-per-token models for foundation model APIs.

- GRC Implications: The integration with MLflow and Unity Catalog provides comprehensive lineage tracking from data source to served model endpoint. This creates a strong, auditable trail that is critical for meeting regulatory requirements.
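The choice between pay-per-token and provisioned-throughput pricing described above comes down to a breakeven calculation on expected volume. The sketch below shows that arithmetic; the prices are placeholders chosen for illustration, not Databricks list prices, and the function names are hypothetical.

```python
def monthly_cost_pay_per_token(
    tokens_per_month: float, price_per_million_tokens: float
) -> float:
    """Cost when every token is billed individually."""
    return tokens_per_month / 1_000_000 * price_per_million_tokens


def monthly_cost_provisioned(
    hourly_rate: float, hours_per_month: float = 730.0
) -> float:
    """Cost when capacity is reserved by the hour, regardless of usage."""
    return hourly_rate * hours_per_month


def breakeven_tokens_per_month(
    price_per_million_tokens: float,
    hourly_rate: float,
    hours_per_month: float = 730.0,
) -> float:
    """Monthly token volume above which reserved capacity becomes cheaper."""
    reserved = monthly_cost_provisioned(hourly_rate, hours_per_month)
    return reserved / price_per_million_tokens * 1_000_000


# Placeholder prices, assumed for illustration only.
PRICE_PER_MILLION_TOKENS = 2.0   # currency units per million tokens
PROVISIONED_HOURLY_RATE = 6.0    # currency units per hour of reserved capacity

breakeven = breakeven_tokens_per_month(
    PRICE_PER_MILLION_TOKENS, PROVISIONED_HOURLY_RATE
)
```

Below the breakeven volume, pay-per-token is cheaper; above it, provisioned throughput wins, assuming the reserved capacity can actually serve that volume. A real comparison would also account for latency guarantees and peak load, which this sketch ignores.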

Website: https://www.databricks.com/product/model-serving

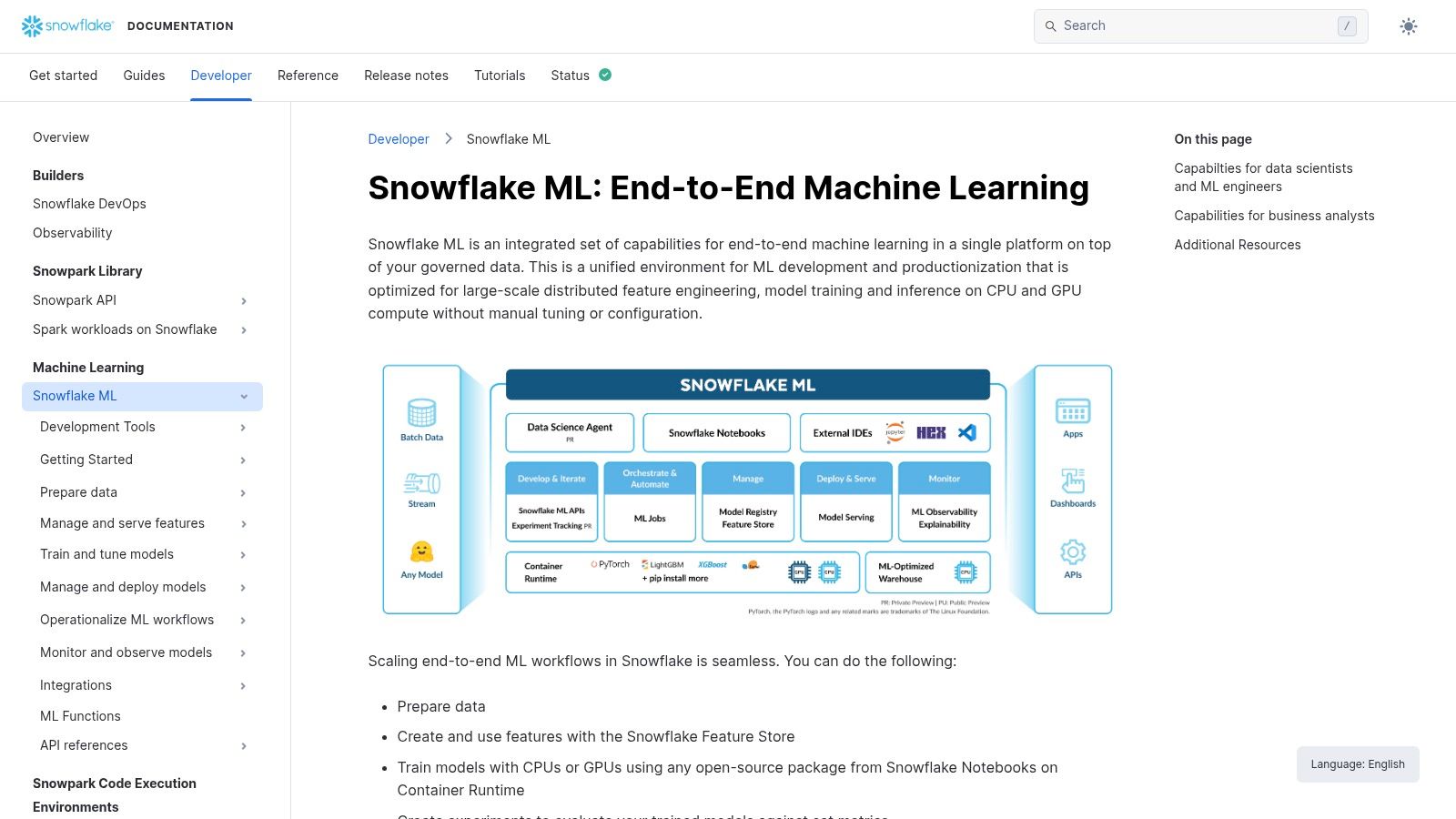

6. Snowflake ML

Snowflake ML is Snowflake's native suite for developing, registering, deploying, and monitoring models directly where governed data resides. This approach minimizes data movement and simplifies operations for organizations that have centralized their data within the Snowflake ecosystem. For companies already leveraging Snowflake as their data cloud, it provides an integrated path to operationalize machine learning by bringing compute to the data. This simplifies governance, reduces architectural complexity, and accelerates the path from development to production.

This platform stands out for its data-centric approach. Key features include a Model Registry with versioning and lineage, an integrated Feature Store, and model serving capabilities via Snowpark Container Services. By keeping the entire ML lifecycle within a single governed environment, teams can maintain data integrity and streamline compliance efforts.

Key Considerations

- Strengths: Because it operates directly within the data cloud, Snowflake ML offers simplified governance and a more straightforward path to compliance. It is flexible, allowing teams to import externally trained models or develop new ones natively using familiar tools.

- Limitations: Its dependence on the Snowflake ecosystem means it is not a standalone solution. Users must be familiar with Snowflake services, and costs are tied to Snowflake credit consumption. Advanced serving with GPUs requires configuring Snowpark Container Services.

- Pricing: Utilizes the Snowflake credit-based model. Costs are determined by compute and storage consumption for training, feature engineering, and model hosting.

- GRC Implications: The in-platform Model Registry and unified data governance framework provide a strong foundation for auditable ML workflows. This integration helps build the comprehensive lineage and model documentation required to meet regulatory standards.

Website: https://docs.snowflake.com/en/developer-guide/snowflake-ml/overview

7. Domino Data Lab

Domino Data Lab is an enterprise MLOps platform built to centralize and govern data science work at scale. It emphasizes reproducibility, collaboration, and a hybrid, multi-cloud approach, making it well-suited for organizations in regulated industries like financial services and life sciences. The platform provides a unified system of record for all data science projects, enabling teams to develop, validate, deliver, and monitor models within a secure, auditable, and vendor-neutral environment. Its architecture is designed to integrate with existing infrastructure, whether on-premises or across major cloud providers like AWS, Azure, and GCP.

This platform has a strong focus on governance and infrastructure flexibility. Domino's Governed Model Registry, complete with approval workflows and lineage tracking, provides the controls needed for strict compliance. Meanwhile, its ability to support hybrid deployments and connect to diverse data sources like Snowflake and Databricks gives enterprises the freedom to build on their preferred technology stack without being locked into a single ecosystem.

Key Considerations

- Strengths: Excels in governance, security, and auditability, making it a top choice for organizations with stringent GRC requirements. Its vendor-neutral stance offers flexibility, preventing lock-in and allowing it to adapt to a company's evolving tech stack.

- Limitations: As an enterprise-grade solution, the initial setup can be complex and typically requires a partnership with internal IT teams. Its power comes with a steeper learning curve compared to more simplified, single-cloud solutions.

- Pricing: Pricing is quote-based and requires direct engagement with the sales team to tailor a package to specific enterprise needs.

- GRC Implications: The platform is built for governance. Its automated documentation, model cards, and auditable history of all experiments and deployments directly support compliance with regulations.

Website: https://domino.ai/platform/

8. DataRobot AI Platform

DataRobot offers a comprehensive, enterprise-grade AI platform focused on accelerating the path from data to value. It provides a highly automated environment covering the MLOps lifecycle, including model development, deployment, monitoring, and governance. The platform is designed for organizations seeking an outcomes-oriented, vendor-supported stack that simplifies the operational complexities of production AI, making it a strong choice for enterprises that prioritize speed and reliability.

This solution focuses on business impact and its extensive support for varied deployment topologies. DataRobot enables teams to deploy and manage models on-premises, in the cloud, or in hybrid environments, providing flexibility for diverse IT strategies. Its integrated monitoring capabilities, which track model drift and accuracy, are crucial for maintaining performance and trust in production systems.

Key Considerations

- Strengths: DataRobot’s enterprise references and professional services can accelerate production timelines. The platform's ability to support a wide range of deployment environments (on-prem, hybrid, multi-cloud) is a key differentiator for organizations with complex infrastructure requirements.

- Limitations: The platform's pricing is often geared toward higher-value annual contracts, which may be a barrier for smaller teams. Customers realize the strongest value when adopting a larger portion of the integrated platform, making it less ideal for those seeking a single point solution.

- Pricing: Enterprise subscription-based, with costs varying based on usage, features, and deployment type. Typically involves a significant annual contract value.

- GRC Implications: The platform includes built-in governance and compliance workflows, such as automated model documentation and lineage tracking. These features provide a solid foundation for building the audit trails needed to demonstrate compliance with regulations like the EU AI Act.

Website: https://www.datarobot.com/

9. Weights & Biases (W&B)

Weights & Biases (W&B) is a developer-first MLOps toolkit focused on providing tools for experiment tracking, model evaluation, and artifact versioning. It is designed to integrate into a data scientist's existing workflow, offering visibility into training runs, model behavior, and dataset changes. For teams that prioritize rapid iteration and detailed performance analysis, W&B acts as a centralized system of record for all ML development activities, enhancing collaboration and reproducibility.

The platform excels at the core development loop. Its strength lies in its intuitive user interface and SDKs that integrate with popular frameworks like PyTorch and TensorFlow. W&B enables practitioners to log metrics, hyperparameters, and model artifacts with just a few lines of code, transforming cluttered experiment logs into interactive, shareable dashboards.

Key Considerations

- Strengths: W&B is known for its strong adoption among ML practitioners, leading to robust community support and a rich set of integrations. Its flexible deployment options, including a managed SaaS, dedicated cloud, and self-hosted instances, cater to diverse enterprise security and infrastructure needs.

- Limitations: While excellent for development and tracking, it is not an end-to-end platform and must be paired with other tools for CI/CD, infrastructure provisioning, and large-scale serving. Enterprise pricing requires direct sales engagement.

- Pricing: Offers a free tier for individuals and academic use. Team, Enterprise, and Dedicated Cloud plans are quote-based.

- GRC Implications: The platform’s comprehensive experiment tracking and Artifacts registry provide a detailed, immutable log of model development. This creates a clear lineage from data to model, which is a foundational requirement for building auditable AI systems.
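To illustrate what a tamper-evident record of model development can look like, the following sketch builds an append-only log in which each entry stores a hash of the previous one, so any later modification breaks the chain. It is a generic illustration of the lineage idea and an assumption on our part; it does not describe how W&B or any other tool in this list stores its data, and the class, event names, and fields are hypothetical.

```python
import hashlib
import json
from dataclasses import dataclass, field


@dataclass
class LineageLog:
    """Append-only log where each entry commits to the previous entry's hash."""

    entries: list[dict] = field(default_factory=list)

    @staticmethod
    def _digest(payload: dict) -> str:
        # Sort keys so the same content always produces the same hash.
        canonical = json.dumps(payload, sort_keys=True).encode("utf-8")
        return hashlib.sha256(canonical).hexdigest()

    def append(self, event: str, details: dict) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else "0" * 64
        body = {"event": event, "details": details, "prev_hash": prev_hash}
        entry = {**body, "hash": self._digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash and check that the chain is unbroken."""
        prev_hash = "0" * 64
        for entry in self.entries:
            body = {k: entry[k] for k in ("event", "details", "prev_hash")}
            if entry["prev_hash"] != prev_hash or entry["hash"] != self._digest(body):
                return False
            prev_hash = entry["hash"]
        return True


# Hypothetical usage: record a dataset fingerprint, then a training run.
log = LineageLog()
dataset_fingerprint = hashlib.sha256(b"example training data").hexdigest()
log.append("dataset_registered", {"sha256": dataset_fingerprint})
log.append("model_trained", {"learning_rate": 0.01, "dataset": dataset_fingerprint})
chain_is_intact = log.verify()
```

An auditor can rerun `verify()` at any time; editing an earlier entry changes its hash and invalidates every entry after it. Production systems typically add access controls and external storage on top of this basic pattern.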

Website: https://wandb.ai

10. Neptune.ai

Neptune.ai provides a specialized MLOps solution focused on experiment tracking and model registry. Unlike comprehensive end-to-end platforms, Neptune offers a lightweight, dedicated system that excels at organizing, visualizing, and comparing machine learning runs. It is designed for teams who want best-in-class tracking capabilities that can be easily integrated into their existing development stack. The platform prioritizes developer experience with fast metadata logging and a clean user interface.

This tool solves one of the most critical challenges in ML development: reproducibility. By providing a centralized hub for all experimental metadata, from hyperparameters to code versions and output artifacts, Neptune helps teams avoid disorganized spreadsheets and scale their research and development efforts efficiently.

Key Considerations

- Strengths: Neptune's primary advantages are its transparent, predictable pricing and its simplicity. It is language- and framework-agnostic, making it simple to adopt alongside any training stack without forcing a change in existing workflows. The availability of both SaaS and self-hosted options provides flexibility for organizations with varying data residency and security requirements.

- Limitations: Its focused scope means it does not handle model deployment, serving, or monitoring. Teams must integrate Neptune with other tools to build a complete MLOps pipeline, which requires additional engineering effort. It is a component, not a full-stack solution.

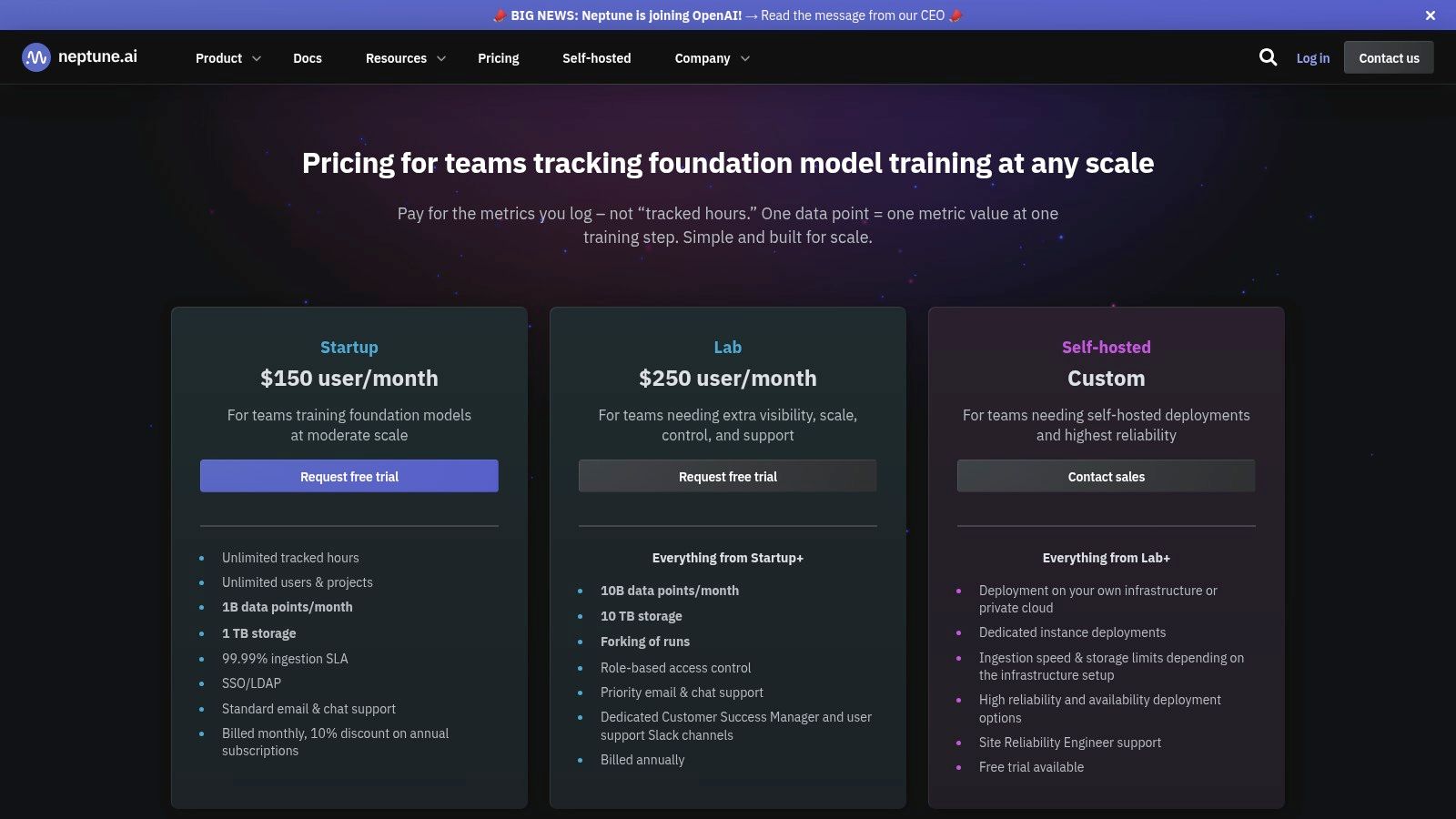

- Pricing: Offers a public, user-based pricing model with quotas for tracked experiments and data points. Free and team tiers are available, with custom enterprise plans for self-hosting and advanced security features.

- GRC Implications: The platform's robust experiment tracking and versioning create a detailed, immutable record of model development. This lineage is crucial for auditability and can provide foundational evidence for GRC processes and compliance.

Website: https://neptune.ai/pricing

11. Kubeflow (open source)

Kubeflow is an open-source MLOps framework designed to be a Kubernetes-native solution for deploying, scaling, and managing machine learning workflows. It provides a composable, portable, and scalable stack for organizations that want to build their AI platform on top of Kubernetes without vendor lock-in. For teams with strong platform engineering and Kubernetes expertise, Kubeflow offers control and flexibility, enabling them to construct a bespoke MLOps environment that runs consistently across any cloud or on-premises infrastructure.

This platform provides a comprehensive set of open-source components for the entire ML lifecycle. Key projects like Kubeflow Pipelines for orchestration, Katib for hyperparameter tuning, and KServe for standardized model serving allow teams to assemble an end-to-end system tailored to their needs. Its status as a Cloud Native Computing Foundation (CNCF) project ensures strong community backing and a robust ecosystem.

Key Considerations

- Strengths: As an open-source solution, Kubeflow has no licensing costs and is highly extensible and cloud-agnostic. Its composable components are portable, allowing for consistent ML workflows across any Kubernetes cluster.

- Limitations: The primary challenge is its operational complexity. Kubeflow requires significant Kubernetes expertise and a dedicated platform engineering investment to install, configure, and maintain. Commercial support is not included by default, though third-party enterprise distributions are available.

- Pricing: Free and open-source. Costs are entirely related to the underlying cloud or on-premises infrastructure required to run the Kubernetes clusters.

- GRC Implications: Kubeflow Pipelines provides a clear, version-controlled definition of ML workflows, creating an auditable record of experiments and model creation steps. However, implementing comprehensive artifact tracking and lineage for full GRC compliance often requires integration with other tools.
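The value of a version-controlled workflow definition is easier to see with a small example. The sketch below declares a pipeline as a plain dependency graph and derives a valid execution order from it using Python's standard library. It deliberately does not use the Kubeflow Pipelines SDK; the step names are hypothetical, and the point is only that a declarative definition can be reviewed, diffed, and audited like any other code.

```python
from graphlib import CycleError, TopologicalSorter

# Hypothetical workflow: each step maps to the set of steps it depends on.
PIPELINE = {
    "validate_data": set(),
    "engineer_features": {"validate_data"},
    "train_model": {"engineer_features"},
    "evaluate_model": {"train_model"},
    "check_fairness": {"train_model"},
    "register_model": {"evaluate_model", "check_fairness"},
}


def execution_order(steps: dict[str, set[str]]) -> list[str]:
    """Return one valid run order, or raise ValueError if the graph has a cycle."""
    try:
        return list(TopologicalSorter(steps).static_order())
    except CycleError as exc:
        # The detected cycle is the second element of the exception's args.
        raise ValueError(f"pipeline definition contains a cycle: {exc.args[1]}") from exc


order = execution_order(PIPELINE)
```

Because `register_model` depends on both the evaluation and the fairness check, no valid order can register a model before those gates have run. Encoding governance gates as dependencies in this way is one simple pattern for making compliance steps non-optional.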

Website: https://www.kubeflow.org/

12. MLflow (open source)

MLflow is an open-source platform for managing the machine learning lifecycle, widely treated as a de facto standard. It offers a set of loosely coupled components for experiment tracking, model packaging, and model registration. Because it is an open-source framework rather than a fully managed platform, it provides a flexible and vendor-agnostic foundation that can be self-hosted or consumed as a managed service within larger commercial offerings like Databricks. Its key strength is its ubiquity and simplicity: it establishes a common language for MLOps practices that reduces vendor lock-in.

This framework provides the foundational components of MLOps without imposing a rigid architecture. Teams can adopt its Tracking API and UI for experiment management, use the Model Registry for versioning and staging, and leverage its standard model format for deployment to various targets, including Docker and Kubernetes. Learn more about how MLflow fits into a cohesive ML orchestration strategy to build scalable workflows.

Key Considerations

- Strengths: As a free, open-source tool, MLflow offers flexibility and avoids vendor lock-in, with extensive support across the ML ecosystem. It helps standardize the core components of the ML lifecycle, reducing switching costs between different cloud providers or platforms.

- Limitations: The primary challenge is the operational overhead of self-hosting, which requires managing the backend database, artifact storage, and authentication. Its feature depth is also dependent on the integrations and surrounding tools you build around it.

- Pricing: Free to use and self-host. Costs are associated with the infrastructure required to run it (compute, storage, database). Managed versions are available through vendors.

- GRC Implications: The MLflow Model Registry provides a clear system of record for model versions, promotion status (e.g., from staging to production; recent releases favor aliases and tags over fixed stages), and associated metadata. This creates a foundational audit trail crucial for governance and compliance.

Website: https://mlflow.org/

Top 12 MLOps Platforms Comparison

| Product | Core focus | Key features | Target audience | Unique selling points | Pricing / Deployment |
|---|---|---|---|---|---|
| ManageAI Platform (AI Performance Monitoring) | Enterprise AI observability & risk management | 50+ customizable monitors; real-time alerts; explainability; business-outcome linkage | Large enterprises needing scalable monitoring & compliance | Out-of-the-box coverage; tech-agnostic; six-week implementation; zero vendor lock-in | Enterprise pricing; integrates on-prem or cloud |
| Amazon SageMaker (AWS) | End-to-end managed ML platform | Training, model registry, pipelines, drift detection, autoscaling | Organizations standardized on AWS | Deep AWS service integration; mature security & compliance | Pay-as-you-go; complex multi-SKU billing; AWS managed |
| Google Cloud Vertex AI | Unified ML & LLM platform | Feature store, metadata, model registry, generative AI catalog | GCP customers and LLM teams | Turnkey LLM features; cohesive data-to-deploy experience | Multiple SKUs on GCP; managed service billing |
| Azure Machine Learning (Azure ML) | Enterprise ML with governance & Responsible AI | Pipelines, Prompt Flow, feature store, governance tooling | Microsoft-centric estates; hybrid scenarios | Azure AD/Key Vault/VNet integration; global/hybrid support | Azure resource-based pricing; managed enterprise service |
| Databricks Data Intelligence (Model Serving) | Lakehouse-centric model serving & governance | MLflow integration, serverless serving, foundation models | Organizations unifying data and AI on Databricks | Strong data-to-production path; flexible pricing (incl. pay-per-token) | Workload-based pricing; managed Databricks platform |
| Snowflake ML | In-data ML (train/serve where data lives) | Model registry, feature store, Snowpark serving, explainability | Snowflake customers centralizing data & ML | Minimizes data movement; simpler governance/compliance | Uses Snowflake credits; Snowpark Container setup |
| Domino Data Lab | Reproducible, governed enterprise MLOps | Governed registry, one-click deployments, monitoring, hybrid support | Regulated industries needing hybrid/on-prem | Strong audit/governance; vendor-neutral integrations | Quote-based enterprise pricing; requires IT partnership |
| DataRobot AI Platform | Outcomes-oriented enterprise AI platform | Deployment, monitoring, governance, agentic workflows | Enterprises seeking vendor-backed outcomes & services | Enterprise services & references; multi-topology support | High annual contract value; subscription-based |
| Weights & Biases (W&B) | Practitioner-first experiment tracking & observability | Experiment tracking, model registry, Launch orchestration, monitoring | ML teams and researchers | Widely adopted by practitioners; rich integrations; flexible deploy modes | SaaS / dedicated cloud / self-managed; quote-based enterprise |
| Neptune.ai | High-throughput experiment tracking & registry | High-throughput logging, RBAC, SaaS or self-hosted, forking runs | Teams wanting focused tracking outside main platform | Transparent per-user pricing; easy integration | Public pricing; SaaS or self-host options |
| Kubeflow (open source) | Kubernetes-native portable MLOps stack | Pipelines, KServe, Katib, Notebooks, model registry | Organizations with Kubernetes & platform engineering | No license cost; highly extensible and cloud-agnostic | Free OSS; operational/platform engineering cost |
| MLflow (open source) | Experiment tracking & model registry standard | Tracking UI/API, artifacts, model registry, broad integrations | Teams wanting minimal lock-in baseline | Ubiquitous support; reduces switching costs | Free OSS; self-host ops or managed vendor options |

Final Thoughts

Navigating the landscape of MLOps platforms requires more than a feature comparison. The decision to invest in a specific toolchain is strategic, intertwined with your organization's existing architecture, technical maturity, regulatory obligations, and business goals. The search for a single "best MLOps platform" can be misleading; the optimal choice is the one that aligns with your operational context and empowers your teams to deliver value repeatably and responsibly.

Our analysis of leading platforms, from comprehensive cloud suites like Amazon SageMaker and Google Vertex AI to specialized tools like Weights & Biases and open-source frameworks like MLflow, reveals a clear trend. The market is moving away from monolithic, all-or-nothing solutions toward a more modular, interoperable ecosystem. This shift empowers organizations to adopt an architecture-first, technology-agnostic approach, selecting best-in-class components that solve specific problems without vendor lock-in.

Key Takeaways for Selecting Your MLOps Stack

Reflecting on the platforms reviewed, several core principles should guide your selection process.

- Start with Your Architecture, Not the Tool: Before evaluating vendors, map your ideal MLOps workflow. Identify critical capabilities you need, such as data versioning, experiment tracking, automated deployment pipelines, and post-production monitoring. This blueprint becomes your scorecard for assessing how well a platform fills your specific gaps.

- Balance Power with Usability: A platform like Kubeflow offers immense flexibility for sophisticated engineering teams but can present a steep learning curve. Conversely, solutions like DataRobot accelerate development for data scientists but may offer less granular control. Evaluate the skills of your current team and the platform’s ability to democratize ML without sacrificing rigor.

- Prioritize Governance and Compliance: In an era of increasing AI regulation, MLOps is no longer just an operational discipline; it is a core component of your Governance, Risk, and Compliance (GRC) strategy. The ability to ensure model lineage, auditability, fairness, and transparency is non-negotiable. Platforms that integrate these capabilities natively provide a significant advantage in mitigating risk.
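The scorecard idea from the first takeaway can be made explicit with a weighted rating. The sketch below assumes a team has agreed on weights for the capabilities named above and rated each candidate on a 1 to 5 scale; the weights, ratings, and candidate names are invented for illustration and do not reflect an assessment of any platform in this guide.

```python
def weighted_score(ratings: dict[str, float], weights: dict[str, float]) -> float:
    """Weighted average of capability ratings; every weighted capability must be rated."""
    missing = weights.keys() - ratings.keys()
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    total_weight = sum(weights.values())
    return sum(ratings[name] * weight for name, weight in weights.items()) / total_weight


# Hypothetical weights reflecting one organization's priorities.
WEIGHTS = {
    "data_versioning": 2.0,
    "experiment_tracking": 2.0,
    "deployment_pipelines": 3.0,
    "production_monitoring": 3.0,
}

# Hypothetical 1-5 ratings gathered during evaluation.
CANDIDATES = {
    "candidate_a": {
        "data_versioning": 4, "experiment_tracking": 5,
        "deployment_pipelines": 3, "production_monitoring": 2,
    },
    "candidate_b": {
        "data_versioning": 3, "experiment_tracking": 3,
        "deployment_pipelines": 4, "production_monitoring": 5,
    },
}

ranking = sorted(
    CANDIDATES,
    key=lambda name: weighted_score(CANDIDATES[name], WEIGHTS),
    reverse=True,
)
```

Agreeing on the weights before scoring any vendor keeps the evaluation anchored to the architecture blueprint and makes it harder for a single impressive demo to skew the outcome.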

Your Actionable Next Steps

Armed with this information, your path forward should be methodical and collaborative.

- Assemble a Cross-Functional Team: Involve data scientists, ML engineers, DevOps specialists, and GRC stakeholders in the evaluation process. Each group brings a unique and vital perspective.

- Define a Pilot Project: Select a well-defined, medium-complexity machine learning model to serve as a test case. Use this project to conduct a proof-of-concept (POC) with your top two or three platform candidates. This real-world test will reveal practical integration challenges and usability issues far more effectively than any demo.

- Calculate Total Cost of Ownership (TCO): Look beyond the sticker price. Factor in implementation costs, required engineering resources for maintenance (especially for open-source tools), training expenses, and potential cloud consumption costs. A platform with a higher license fee may ultimately have a lower TCO if it reduces engineering overhead.
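The TCO point is easiest to see with numbers. The sketch below compares a self-hosted open-source option with a licensed platform over three years, using the cost categories listed above. Every figure is a placeholder assumption chosen to illustrate the mechanics, not a quote or benchmark for any real product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PlatformCosts:
    annual_license: float
    implementation_one_off: float
    maintenance_fte: float            # engineers needed to keep the platform running
    annual_training: float
    annual_cloud_consumption: float


def total_cost_of_ownership(
    costs: PlatformCosts, years: int, fte_annual_cost: float
) -> float:
    """One-off implementation cost plus recurring costs over the given horizon."""
    recurring = (
        costs.annual_license
        + costs.maintenance_fte * fte_annual_cost
        + costs.annual_training
        + costs.annual_cloud_consumption
    )
    return costs.implementation_one_off + recurring * years


# Placeholder inputs, assumed for illustration only.
FTE_ANNUAL_COST = 180_000.0
open_source = PlatformCosts(
    annual_license=0.0, implementation_one_off=150_000.0,
    maintenance_fte=3.0, annual_training=20_000.0,
    annual_cloud_consumption=120_000.0,
)
licensed = PlatformCosts(
    annual_license=250_000.0, implementation_one_off=80_000.0,
    maintenance_fte=1.0, annual_training=10_000.0,
    annual_cloud_consumption=120_000.0,
)

tco_open_source = total_cost_of_ownership(open_source, years=3, fte_annual_cost=FTE_ANNUAL_COST)
tco_licensed = total_cost_of_ownership(licensed, years=3, fte_annual_cost=FTE_ANNUAL_COST)
```

With these placeholder inputs, the open-source option totals 2,190,000 over three years against 1,760,000 for the licensed option, because the two extra maintenance engineers outweigh the license fee. Changing the staffing assumption reverses the result, which is why the engineering estimate deserves as much scrutiny as the vendor quote.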

Ultimately, implementing an MLOps platform is a foundational investment in your organization's ability to scale AI. It is the bridge between innovative data science and tangible business impact. The right platform will not only accelerate model deployment but also instill a culture of discipline, collaboration, and trust, ensuring your AI initiatives are reliable, compliant, and poised for future growth.

Choosing the right MLOps platform is only part of the journey; implementing it successfully and achieving measurable ROI are the rest. At DSG.AI, we help enterprises design and build robust, production-grade AI systems, ensuring your chosen MLOps stack aligns with your strategic objectives and targeting a 15-25% improvement in operational efficiency. Explore our proven approach to enterprise AI implementation at DSG.AI.