Written by:

Editorial Team

Implementing AI in a large organization requires moving beyond proofs-of-concept. Real value comes when AI is integrated into core operations to deliver measurable results. This requires a plan that starts with a high-impact business problem, confirms data readiness, and builds on a scalable architecture.

This guide helps you sidestep common pitfalls, like building a model that cannot be integrated into existing workflows or failing to show a clear return on investment.

An AI Implementation Roadmap

For most enterprise leaders, implementing AI is a question of how, not if. A recent study shows that top-performing companies see an impact of 5% or more on earnings before interest and taxes (EBIT) from AI adoption. Those who have already started report productivity gains between 26% and 55%.

However, a high percentage of AI projects fail to deliver on their initial promise. They often start from a weak business case or cannot be integrated with existing systems. This guide offers a clear roadmap to avoid those outcomes, focusing on an architecture-first approach.

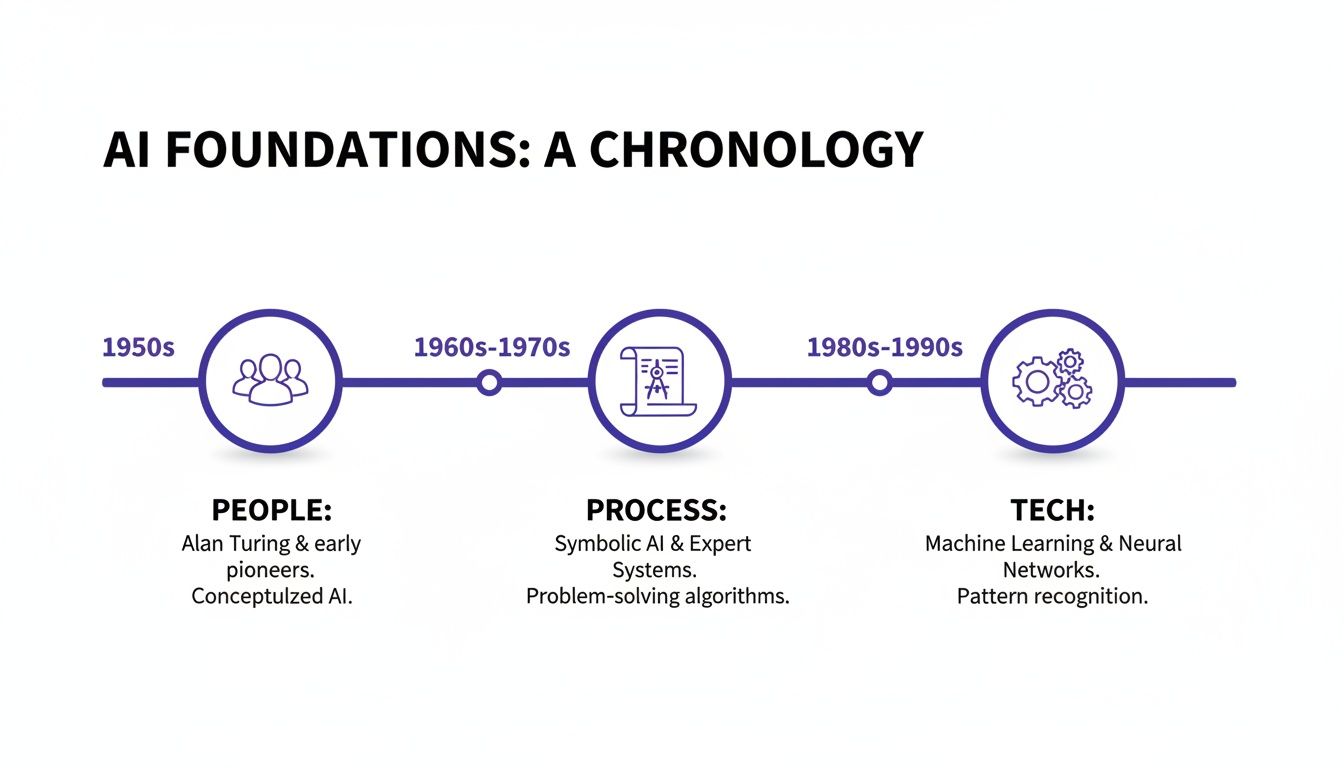

Defining a Practical Framework

A successful strategy covers the entire lifecycle. It includes the people, processes, and technology that support a model from development to retirement. For a technical perspective on this, see this CTO's guide on how to implement AI in business.

A solid plan includes these core elements:

- Pinpointing High-Impact Use Cases: Find a specific operational bottleneck—like manual invoice processing or inaccurate demand forecasting—where AI can create a quantifiable improvement.

- A Data Readiness Check: Assess your data quality, accessibility, and volume. Is it sufficient to train the planned model?

- Structuring a Scalable Pilot: Your initial project must prove value quickly and be built on an architecture that can be expanded across the organization.

- Securing Executive Buy-In: Build a business case based on projected cost savings, revenue uplift, and efficiency gains.

The biggest mindset shift is moving from treating AI as an experiment to treating it as a core business capability. This means planning for integration, monitoring, and governance from the beginning—not as an afterthought.

This guide provides a blueprint for this shift. The process begins with a structured, phased approach.

The table below outlines the end-to-end process.

The 6-Phase Enterprise AI Implementation Framework

| Phase | Objective | Key Activities |
|---|---|---|
| Discovery | Identify and validate a high-value business problem suitable for an AI solution. | Conduct workshops, analyze workflows, quantify potential ROI, assess data availability. |
| Pilot (6-Week Sprint) | Develop and deploy a minimum viable model to prove business value quickly. | Data preparation, iterative model development, controlled production deployment, baseline measurement. |
| Scale & Productionize | Expand the pilot solution into a fully operational, integrated enterprise system. | Build robust data pipelines, implement MLOps, integrate with core business systems. |
| Monitor & Optimize | Ensure the AI model's performance and business impact are sustained over time. | Track KPIs, monitor for model drift, retrain models as needed, gather user feedback. |
| Govern & Comply | Establish a framework for responsible AI use and regulatory adherence. | Conduct bias audits, document model behavior, manage access controls, align with regulations. |
| Measure ROI | Continuously track and report on the tangible business value generated by the AI system. | Compare post-implementation metrics to baselines, report on cost savings or revenue uplift. |

Each phase builds on the last, creating a repeatable process that de-risks investment and accelerates time-to-value.

Find High-Value AI Opportunities and Build the Business Case

The most successful AI projects start with a business problem, not a technology. The goal is to methodically identify operational bottlenecks where AI can provide a measurable advantage.

Start by meeting with operational leaders in logistics, finance, customer service, or manufacturing to map their most persistent pain points. Ask questions to uncover hidden inefficiencies.

Pinpointing Specific Pain Points

Identify where employees spend hours on manual, repetitive work or which processes have high error rates. These conversations can reveal opportunities for high-value AI initiatives. Listen for problems before prescribing a solution.

For instance, a logistics manager might mention that five team members spend half their day reading, sorting, and forwarding emails about shipment status. This is a potential AI opportunity.

This insight allows you to reframe a vague goal into something measurable:

- The Problem: We spend too much time processing logistics emails.

- The AI Opportunity: We can build an email classification system with Natural Language Processing (NLP).

- The Metric: Reduce manual email processing time by 40% against the Q3 baseline.

This specificity turns a technology idea into a compelling business case. It provides a clear definition of success that resonates with stakeholders focused on the bottom line. You can explore a framework for an initial AI readiness assessment to understand your current state.

Quantifying the Potential Impact

After shortlisting potential projects, you must quantify their impact to get executive buy-in. Every AI pitch should be built around projected cost savings, potential revenue growth, or efficiency gains.

The most effective business cases use conservative, defensible numbers. Under-promise and over-deliver. For a pilot, focus on a single, primary metric.

A simple prioritization matrix can help. Plot each opportunity based on potential business value versus technical difficulty. A high-value, low-complexity project is an ideal starting point for a quick win.
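As a rough illustration of the matrix described above, the sketch below places a few candidate projects into quadrants. The project names, the 1-to-5 scoring scale, the threshold, and the quadrant labels are all hypothetical assumptions chosen for the example, not part of any standard method.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Opportunity:
    name: str
    business_value: int        # hypothetical scale: 1 (low) to 5 (high)
    technical_difficulty: int  # hypothetical scale: 1 (easy) to 5 (hard)


def quadrant(opp: Opportunity, threshold: int = 3) -> str:
    """Place an opportunity in one of four quadrants of the matrix."""
    high_value = opp.business_value >= threshold
    low_difficulty = opp.technical_difficulty < threshold
    if high_value and low_difficulty:
        return "quick win"
    if high_value:
        return "strategic bet"
    if low_difficulty:
        return "nice to have"
    return "deprioritize"


# Illustrative candidates with made-up scores.
candidates = [
    Opportunity("Email classification", business_value=4, technical_difficulty=2),
    Opportunity("Demand forecasting", business_value=5, technical_difficulty=4),
    Opportunity("Internal FAQ bot", business_value=2, technical_difficulty=2),
]

for opp in candidates:
    print(f"{opp.name}: {quadrant(opp)}")
```

In practice the scores would come from the discovery workshops, and the threshold is a judgment call rather than a fixed rule.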

Synthetic Example: A Maritime Company

A maritime shipping company realized their vessels were burning excess fuel due to suboptimal speed and routing decisions.

- The Problem: Inaccurate fuel consumption forecasts were causing an average 5-8% overspend on fuel each year.

- The AI Solution: They developed a predictive model using historical voyage data, weather patterns, and ocean currents to recommend the most efficient speed and route.

- The Business Case: The model projected a 3-6% reduction in fuel consumption. For a fleet of 20 vessels, this translated to an estimated $1.2 to $2.4 million in annual savings.
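The arithmetic behind a business case like this can be written down in a few lines. The sketch below reproduces the savings range from the example. Note that the per-vessel fuel spend is not stated in the example; the $2 million figure is an assumption back-calculated so that a 3% reduction across 20 vessels equals $1.2 million.

```python
FLEET_SIZE = 20  # vessels, from the example above

# Assumption: not given in the example. Back-calculated so that
# a 3% reduction across the fleet equals $1.2M per year.
ANNUAL_FUEL_SPEND_PER_VESSEL_USD = 2_000_000


def annual_savings(reduction_percent: float) -> float:
    """Projected yearly fleet-wide savings for a given fuel reduction."""
    fleet_spend = FLEET_SIZE * ANNUAL_FUEL_SPEND_PER_VESSEL_USD
    return fleet_spend * reduction_percent / 100


low = annual_savings(3)
high = annual_savings(6)
print(f"Projected savings: ${low:,.0f} to ${high:,.0f} per year")
```

Keeping the inputs explicit like this makes it easy for a finance stakeholder to challenge a single assumption without rejecting the whole case.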

Building a Realistic Pilot Plan

With a priority project and business case, the final step is to define the pilot plan. This requires an honest assessment of your data. Do you have the necessary data? Is it accessible? Is the quality sufficient to train a reliable model?

Your initial plan must also identify risks, such as data privacy hurdles, integration with legacy systems, or skill gaps. Managing expectations is critical. A 6-week pilot should aim to prove the business case, not to be a perfect, full-scale deployment. This approach lowers risk, demonstrates value quickly, and builds momentum for future AI work.

Assembling Your AI Team and Technology Foundation

An AI initiative is a business transformation project supported by IT. Technology is one piece of the puzzle. Without the right people and a scalable architecture, even a high-performing model will fail to deliver value.

Success requires a cross-functional team and a technology foundation designed for long-term use.

Top-performing organizations move from pilot to full deployment in 90 days, while the average company takes nine months. Only one-third of organizations manage to scale their AI initiatives effectively. You can review these findings on AI business implementation for more detail.

The key is building a solid foundation from day one.

Structuring Your Cross-Functional AI Team

Your AI team needs a mix of technical, business, and operational expertise.

Key roles include:

- Project Sponsor: A VP or C-level executive who secures resources and connects the AI team's work to business strategy.

- Domain Expert: An operational leader, such as a logistics manager or financial analyst, whose input ensures the model is useful in a real-world context.

- Data Scientist / ML Scientist: The model builder who explores data, runs experiments, and focuses on statistical rigor.

- ML Engineer: The link between a prototype and a production system. They build data pipelines and manage the MLOps infrastructure.

- Data Engineer: Ensures clean, reliable data is always available. They build and maintain the data architecture.

An Architecture-First Technology Approach

A smart strategy is to take an architecture-first, technology-agnostic approach.

This means you design the system to solve your specific business problem first, then select tools that fit the design.

This mindset shift helps avoid vendor lock-in and ensures your new AI system integrates with your existing tech stack. The goal is a flexible foundation that supports your entire AI roadmap.

Your MLOps (Machine Learning Operations) foundation is central to this architecture. It is the set of practices and tooling that takes a model from development to a reliable, monitored production service.

Key MLOps decisions include:

- Data Pipelines: How will you consistently get, clean, and prepare data for training?

- Model Training Infrastructure: Where will you train models? On-premise or in the cloud?

- Deployment & Serving: How will the model be packaged and served so other applications can access its predictions?

- Continuous Monitoring: How will you monitor the model’s real-time performance to detect drift or accuracy degradation?

The Critical Build vs. Partner Decision

A key decision is whether to build AI capabilities in-house or work with a specialized partner. The answer depends on your company's maturity, resources, and strategic goals.

Consider the following:

- Time-to-Value: A partner can reduce your timeline from months to weeks by providing pre-built frameworks and expertise.

- Access to Specialized Expertise: Finding and retaining top ML engineers and AI researchers is difficult and expensive. A partner provides immediate access to an experienced team.

- IP Ownership: If owning the source code is a requirement, ensure your partner agreement provides full ownership with no lock-in.

- Focus on Core Business: Outsourcing technical development frees up your internal teams to focus on creating business value with the AI solution.

When building your technology foundation, also consider related areas like choosing your AI partner for cybersecurity compliance. The right combination of people and technology turns a promising AI concept into a sustainable competitive advantage.

From Pilot to Production in a 6-Week Sprint

Long AI projects can lose momentum. A focused, 6-week sprint can deliver measurable results quickly. This timeframe forces a focus on the minimum viable product (MVP), proving value and building a case for further investment.

This approach prioritizes what moves the business forward. Instead of building a perfect solution over many months, the goal is to deploy a functional, value-generating model into a controlled production environment. This allows you to validate the business case with real data.

Success requires a balanced effort across People, Process, and Technology from day one.

Weeks 1-2: Deep Dive and Baseline Reality Check

The first two weeks focus on discovery and data validation. This involves a detailed analysis of the target workflow. Your team should work with domain experts to map the process, identify data sources, and establish baseline metrics.

You cannot prove value if you do not know the starting point.

For an email classification system for a logistics company, you would need to answer these questions:

- How many emails does a human agent process per hour? (This is your manual processing time baseline.)

- What is the average error rate for manual classification? (This is your accuracy baseline.)

- Is the historical email data accessible, clean, and sufficiently labeled to train a model?

By the end of week two, you must have a clear, quantifiable definition of success and verified data.
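The two baseline questions above reduce to simple aggregates over a sample of manual work. The sketch below shows one way to compute them. The `ShiftLog` record and its fields are hypothetical, and the numbers are made up; your own tracking data will look different.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ShiftLog:
    """One observed shift of manual email handling (hypothetical record)."""
    agent: str
    hours_worked: float
    emails_processed: int
    emails_misclassified: int


def baseline(logs: list[ShiftLog]) -> dict[str, float]:
    """Aggregate throughput and error rate across all logged shifts."""
    total_hours = sum(log.hours_worked for log in logs)
    total_emails = sum(log.emails_processed for log in logs)
    total_errors = sum(log.emails_misclassified for log in logs)
    if total_hours <= 0 or total_emails <= 0:
        raise ValueError("need at least one shift with hours and emails")
    return {
        "emails_per_hour": total_emails / total_hours,
        "error_rate": total_errors / total_emails,
    }


# Made-up sample data for illustration.
sample_logs = [
    ShiftLog("agent_a", hours_worked=4.0, emails_processed=120, emails_misclassified=6),
    ShiftLog("agent_b", hours_worked=4.0, emails_processed=80, emails_misclassified=4),
]
metrics = baseline(sample_logs)
print(metrics)
```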

Weeks 3-4: Build, Test, and Refine

Weeks three and four are for building and refining the model. This is an iterative process. Data scientists create an initial model, and the results are shared with domain experts for feedback.

Their input is critical for spotting operational nuances a model might miss. This collaboration helps ensure the AI's logic aligns with business realities.

For the logistics company example, the model might initially confuse a "shipment delay notification" with a "customs clearance issue." The domain expert’s input helps data scientists engineer features to teach the model this distinction, improving its practical value.
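To make the classification task concrete, here is a deliberately tiny bag-of-words Naive Bayes classifier written with only the standard library. It is a teaching sketch under stated assumptions: the four training emails and the two labels are invented, and a real pilot would more likely use an established machine learning library and far more labeled data.

```python
import math
import re
from collections import Counter, defaultdict


def tokenize(text: str) -> list[str]:
    """Lowercase the text and keep alphabetic words only."""
    return re.findall(r"[a-z]+", text.lower())


class NaiveBayesClassifier:
    """Multinomial Naive Bayes with add-one (Laplace) smoothing."""

    def fit(self, texts, labels):
        self.word_counts = defaultdict(Counter)
        self.label_counts = Counter(labels)
        for text, label in zip(texts, labels):
            self.word_counts[label].update(tokenize(text))
        self.vocabulary = {w for c in self.word_counts.values() for w in c}
        return self

    def predict(self, text: str) -> str:
        tokens = tokenize(text)
        total_docs = sum(self.label_counts.values())
        best_label, best_score = None, -math.inf
        for label, doc_count in self.label_counts.items():
            counts = self.word_counts[label]
            denominator = sum(counts.values()) + len(self.vocabulary)
            # Log prior plus smoothed log likelihood of each token.
            score = math.log(doc_count / total_docs)
            for token in tokens:
                score += math.log((counts[token] + 1) / denominator)
            if score > best_score:
                best_label, best_score = label, score
        return best_label


# Invented training examples for the two categories discussed above.
training = [
    ("Your shipment is delayed due to weather", "delay"),
    ("Vessel arrival delayed by two days", "delay"),
    ("Customs clearance requires additional documents", "customs"),
    ("Shipment held at customs pending inspection", "customs"),
]
model = NaiveBayesClassifier().fit(*zip(*training))
print(model.predict("Container delayed because of port congestion"))
print(model.predict("Customs inspection scheduled for the shipment"))
```

Feedback from the domain expert typically shows up here as new labeled examples or additional features, which is why the review loop in weeks three and four matters.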

Week 5: Controlled "Go-Live"

In week five, the model is deployed to a controlled production environment. This is not a full launch. You might run the AI in parallel with the human team or have it handle a small fraction—for example, 5-10%—of the live workload.

This step tests the model's performance and the MLOps infrastructure under real-world conditions. It can surface integration issues or performance bottlenecks before a full-scale rollout. You can explore tools and strategies to manage AI systems in production to ensure proper monitoring.
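One common way to send a small, stable fraction of traffic to the model is to hash a message identifier into a bucket. The sketch below assumes each email has a string ID; the function name `routed_to_model` and the `msg-` IDs are hypothetical.

```python
import hashlib


def routed_to_model(message_id: str, rollout_percent: int = 10) -> bool:
    """Deterministically send roughly `rollout_percent`% of messages to the model.

    The same message ID always lands in the same bucket, so retries and
    reprocessing do not flip a message between the human and AI paths.
    """
    if not 0 <= rollout_percent <= 100:
        raise ValueError("rollout_percent must be between 0 and 100")
    digest = hashlib.sha256(message_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 100  # bucket in 0..99
    return bucket < rollout_percent


# Check the realized share on made-up message IDs.
ids = [f"msg-{i}" for i in range(10_000)]
share = sum(routed_to_model(m, rollout_percent=10) for m in ids) / len(ids)
print(f"Share routed to the model: {share:.1%}")
```

Raising `rollout_percent` later only adds messages to the AI path; IDs that were already routed to the model stay there.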

Week 6: Monitor, Tune, and Handover

The final week is for monitoring, fine-tuning, and a formal handover. The team monitors the model’s live performance against the baselines established in weeks one and two. They analyze predictions, track accuracy, and measure its impact on the target metric, such as manual processing time.

For the logistics example, the goal is to confirm the AI is classifying emails correctly and delivering the targeted efficiency gains. In this illustrative scenario, by the end of the sprint the logistics company has an operational model that has already reduced manual email processing time by over 50% in the controlled test, ahead of the 40% target.

You now have a tangible win, a clear ROI, and the momentum needed to scale the solution.

Measuring AI's Business Impact

An AI model that does not prove its business worth is a failure. Showing a return on investment is what separates a science project from a core business capability. This means defining success with metrics that matter to business leaders.

A model that is 95% accurate is a good start, but the real question is whether that accuracy translates into financial or operational advantages. To determine this, you must focus on business-level key performance indicators (KPIs).

Tying Your AI to Business-Centric KPIs

Before writing any code, you must define the specific business metrics your AI solution will impact. These KPIs connect your model’s technical performance to financial and operational results.

Instead of focusing on model accuracy, use metrics like these:

- Cost Per Transaction: For an automated invoice processing system, the goal is to lower the cost of handling each invoice. A relevant KPI would be a 15% to 25% reduction in processing cost compared to the prior quarter's baseline.

- Customer Satisfaction (CSAT) Scores: An AI-powered chatbot must improve the customer experience. Success is measured by an increase in CSAT scores for interactions handled by the AI.

- Scrap Rate Reduction: In manufacturing, a predictive maintenance model should prevent the equipment failures that lead to scrapped material. The KPI is a tangible 8% to 12% reduction in the material scrap rate versus the previous year's baseline.

- Employee Productivity: For an email classification system, the benefit is giving employees more time for high-value work. The metric could be a 40% increase in high-value tasks completed per employee.

The most powerful business cases are built on hard numbers that link AI's performance to the P&L statement. A model that cuts scrap by 10% is a concrete win that everyone understands.

To make this work, you must establish a pre-implementation baseline. Measuring the "before" state is necessary to prove the value of the "after." Without a clear baseline, any ROI calculation is a guess.
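The before-and-after comparison comes down to two small formulas. The sketch below shows them with hypothetical figures; the hours, benefit, and cost values are invented for illustration, and the ROI definition used here (net benefit divided by cost) is one simple convention among several.

```python
def percent_reduction(baseline: float, current: float) -> float:
    """Percentage improvement of a 'lower is better' metric versus its baseline."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (baseline - current) * 100 / baseline


def simple_roi_percent(annual_benefit: float, annual_cost: float) -> float:
    """ROI as a percentage: net benefit divided by cost."""
    if annual_cost <= 0:
        raise ValueError("annual_cost must be positive")
    return (annual_benefit - annual_cost) * 100 / annual_cost


# Hypothetical figures for illustration only.
baseline_hours_per_week = 100.0  # measured before the pilot
current_hours_per_week = 58.0    # measured after the pilot

reduction = percent_reduction(baseline_hours_per_week, current_hours_per_week)
roi = simple_roi_percent(annual_benefit=180_000, annual_cost=120_000)
print(f"Processing time reduced by {reduction:.1f}%; ROI {roi:.0f}%")
```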

Proving Your AI Was the Difference-Maker

After your AI is live, you must prove it caused any improvements. To isolate the AI's impact, run controlled tests.

Two reliable methods are:

- A/B Testing: This is the standard. Route a portion of the workload (e.g., 20% of customer service chats) to your new AI system while the rest continues through the existing process. After a set period, such as 30 days, compare KPIs between the two groups.

- Control Groups: If a direct A/B test is not practical, you can compare the performance of a team or facility using the AI tool against a similar one that is not.

These methods provide defensible data showing that your AI implementation drove the results.
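When comparing the two groups, it helps to check that the difference is larger than what chance alone would produce. One simple option is a permutation test, sketched below with only the standard library. The choice of test, the handling-time numbers, and the function name are assumptions for illustration; your analytics team may prefer a different statistical method.

```python
import random


def mean(values):
    return sum(values) / len(values)


def permutation_p_value(control, treatment, n_resamples=5000, seed=0):
    """Two-sided permutation test on the difference in group means.

    Repeatedly shuffles the group labels and counts how often a difference
    at least as large as the observed one appears by chance.
    """
    rng = random.Random(seed)
    observed = abs(mean(treatment) - mean(control))
    pooled = list(control) + list(treatment)
    n_control = len(control)
    extreme = 0
    for _ in range(n_resamples):
        rng.shuffle(pooled)
        diff = abs(mean(pooled[n_control:]) - mean(pooled[:n_control]))
        if diff >= observed:
            extreme += 1
    return (extreme + 1) / (n_resamples + 1)


# Made-up KPI samples: handling minutes per chat in each group.
existing_process = [12.1, 11.8, 12.5, 13.0, 12.2, 11.9, 12.7, 12.4]
ai_assisted = [9.8, 10.1, 9.5, 10.4, 9.9, 10.2, 9.7, 10.0]

p_value = permutation_p_value(existing_process, ai_assisted)
print(f"Mean difference: {mean(existing_process) - mean(ai_assisted):.2f} minutes")
print(f"p-value: {p_value:.4f}")
```

A small p-value suggests the improvement is unlikely to be noise. It does not by itself rule out other differences between the groups, which is why random assignment matters.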

User Adoption Determines ROI

An AI tool that no one uses has an ROI of zero. The final piece of measuring business impact is user adoption. High-performing organizations understand this and dedicate resources to change management.

Top firms see a 5%+ EBIT impact from AI because they redesign workflows and scale solutions effectively. They achieve this by dedicating 70% of their AI transformation resources to people and change management, according to McKinsey. You can find more data on how top organizations achieve AI-driven value on McKinsey.com.

To ensure your tool becomes part of daily operations, address the human element:

- Make it simple to use: The UI/UX must be intuitive. If the old way is easier, people will not switch.

- Show the user benefit: Training should focus on how the AI helps users achieve their goals faster or with less effort.

- Create a feedback loop: Provide a simple way for users to report bugs or suggest improvements. This helps refine the model and gives users a sense of ownership.

Weaving in AI Governance and Regulatory Compliance

As AI becomes part of core operations, a governance framework is non-negotiable. With regulations like the EU AI Act taking shape, moving forward without guardrails creates risk.

Successful AI implementation requires building responsible, fair, and trustworthy systems. Neglecting this foundation can lead to financial, legal, and reputational damage.

Building Your Responsible AI Framework

A practical Responsible AI framework includes four guiding principles. These should support every AI project from concept to long-term monitoring.

- Transparency: Can you explain how your model made a specific decision? Maintain clear documentation, from the training data to the model's architecture and performance metrics.

- Fairness: Actively test for biases in your models that could create unfair outcomes. This requires rigorous testing before deployment and continuous monitoring afterward.

- Accountability: Establish clear ownership. Who is responsible for the AI system’s performance and accountable for its impact?

- Security: Protect your models and data from adversarial attacks, data poisoning, and unauthorized access.

Taking a Risk-Based Approach to Compliance

Not all AI systems carry the same level of risk. A model that optimizes warehouse inventory has a different risk profile than one used for medical diagnoses. A risk-based approach allows you to focus governance efforts where they are most needed.

Our guide on preparing for the EU AI Act can help you classify your systems and apply the appropriate level of oversight.

The table below provides a framework for classifying AI systems and matching them with the right level of governance, based on principles from regulations like the EU AI Act.

AI Risk Level and Required Governance Actions

| Risk Level | Example Use Case | Required Governance Actions |
|---|---|---|
| High-Risk | AI-powered credit scoring, medical diagnostic tools, critical infrastructure management. | Stringent pre-deployment assessments, mandatory human oversight, extensive logging, and regulatory registration. |
| Limited-Risk | Chatbots that interact with customers, content recommendation engines. | Clear transparency obligations (e.g., informing users they are interacting with an AI), basic monitoring. |
| Minimal-Risk | Internal inventory management, AI-enabled spam filters, simple data analysis tools. | Standard IT security and data protection practices. Voluntary codes of conduct are encouraged. |

This is not just about compliance. This framework forces a conversation about the real-world impact of each AI initiative.

Proactive risk management is better than reactive cleanup. A risk-based framework forces you to consider consequences, turning compliance into a strategic advantage.

By classifying projects this way, your most sensitive applications receive the necessary scrutiny—including human-in-the-loop reviews and impact assessments—while lower-risk systems can be managed with more agile governance.
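A classification like this can also be encoded as a simple release gate, so that a project cannot go live until the controls for its risk level are complete. The sketch below is a hypothetical illustration: the control identifiers are shorthand for the actions in the table above, not official regulatory terms.

```python
# Shorthand identifiers for the governance actions in the table above.
# These names are illustrative, not regulatory terminology.
REQUIRED_CONTROLS = {
    "high": {
        "pre_deployment_assessment",
        "human_oversight",
        "extensive_logging",
        "regulatory_registration",
    },
    "limited": {"ai_disclosure", "basic_monitoring"},
    "minimal": {"standard_it_security"},
}


def missing_controls(risk_level: str, completed: set[str]) -> set[str]:
    """Return the controls still outstanding for a system at this risk level."""
    try:
        required = REQUIRED_CONTROLS[risk_level]
    except KeyError:
        raise ValueError(f"unknown risk level: {risk_level!r}") from None
    return required - set(completed)


# Example: a customer-facing chatbot that already discloses it is an AI.
outstanding = missing_controls("limited", {"ai_disclosure"})
print(sorted(outstanding))
```

An empty result means the gate passes; anything else is a to-do list for the project owner.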

The Need for Continuous Monitoring

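An AI model's performance can degrade over time as the real world changes. This is often called model drift. When the relationship between the inputs and the outcome being predicted shifts, it is known as concept drift.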

Continuous monitoring is the only way to detect this degradation. Your MLOps team needs to track technical metrics, like model accuracy, and the business KPIs the model is designed to influence. For example, if a demand forecasting model's accuracy slips by 5%, it should trigger an automated alert for review and potential retraining.
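A minimal version of such an alert is a rolling accuracy check against the baseline. The sketch below assumes each prediction can later be marked correct or incorrect, as with a classifier, and it interprets "slips by 5%" as a drop of five percentage points. The class name, window size, and threshold are hypothetical choices.

```python
from collections import deque


class AccuracyMonitor:
    """Tracks rolling accuracy and flags a drop relative to a baseline."""

    def __init__(self, baseline_accuracy: float, max_drop: float = 0.05, window: int = 200):
        self.baseline = baseline_accuracy
        self.max_drop = max_drop          # assumed to mean percentage points
        self.outcomes = deque(maxlen=window)

    def record(self, was_correct: bool) -> None:
        """Store whether one reviewed prediction turned out to be correct."""
        self.outcomes.append(1 if was_correct else 0)

    def rolling_accuracy(self):
        """Accuracy over the current window, or None if nothing is recorded."""
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def needs_review(self) -> bool:
        """True once the window is full and accuracy fell by at least max_drop."""
        if len(self.outcomes) < self.outcomes.maxlen:
            return False
        return self.baseline - self.rolling_accuracy() >= self.max_drop


# Small window so the example is easy to follow.
monitor = AccuracyMonitor(baseline_accuracy=0.90, max_drop=0.05, window=10)
for outcome in [True] * 9 + [False]:
    monitor.record(outcome)
print(monitor.rolling_accuracy(), monitor.needs_review())

for outcome in [False, False]:
    monitor.record(outcome)
print(monitor.rolling_accuracy(), monitor.needs_review())
```

In a production setup, `needs_review()` returning true would open a ticket or notify the owning team rather than print to a console.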

Automated monitoring tools are essential. They watch models in real-time, flag anomalies, and provide the data needed to maintain trust and prove that your AI systems continue to deliver value.

Common Questions in AI Implementation

When moving an AI project from concept to a real-world system, certain questions arise. Addressing these challenges early can determine the success of the initiative.

One of the first decisions is buy versus build. Off-the-shelf tools can provide a quick start for standard functions, like an AI module for a CRM. For your core competitive advantage—such as a unique routing algorithm for a logistics company—a custom build is often the better long-term choice. It provides control and avoids vendor lock-in.

How Do We Handle Data Privacy and Security?

This question is common. With regulations like GDPR and the EU AI Act, you must handle data securely from the start.

We often implement techniques such as data anonymization, which removes personal identifiers, or federated learning, which trains the model locally where the data lives so that sensitive information does not need to be moved to a central server.
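As a small illustration of removing personal identifiers, the sketch below masks email addresses and phone numbers in free text before it is used for training. The regular expressions are simplified assumptions that will miss some formats. Masking direct identifiers like this is only a first step and does not by itself guarantee that data is anonymous.

```python
import re

# Simplified patterns for illustration; real data needs broader coverage.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact(text: str) -> str:
    """Replace email addresses and phone-like numbers with placeholders."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    return PHONE_RE.sub("[PHONE]", text)


message = "Contact jane.doe@example.com or +1 (555) 010-9999 about shipment 4471."
print(redact(message))
```

Short numeric references such as the shipment number are left alone here because the phone pattern requires at least nine characters.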

Bake privacy-by-design principles into your AI architecture from day one. Do not treat it as an afterthought. This means conducting a Data Protection Impact Assessment (DPIA) before launching a pilot.

Our Pilot Was a Success—Now What?

Scaling a successful pilot is a common challenge. A frequent mistake is building the pilot on temporary infrastructure not meant for production. When it is time to scale, you have to start over.

Instead, build your pilot on a production-ready, scalable architecture from the beginning. Focus on:

- A Modular Design: Use reusable components for data pipelines, feature stores, and model deployment.

- Automation: Build MLOps pipelines to automate the testing and deployment of new models. Manual deployments do not scale.

- Clear Ownership: Assign ownership for the scaled system, often to a central AI team or a Center of Excellence that can maintain the platform and share best practices across business units.

This approach turns your first successful project into a repeatable playbook.

Ready to start building? At DSG.AI, we help enterprises design, build, and operationalize AI systems that deliver measurable value in just six weeks. See how we turn data into a competitive advantage. Learn more about our projects.