Written by:

Editorial Team

DSG.AI

The difference between a logical and a physical data model comes down to a simple concept: the blueprint versus the building. A logical data model is the technology-independent blueprint—it defines what data the business needs and how it connects. The physical data model is the technology-specific implementation, spelling out how that data will be physically stored in a specific database.

Architectural Plans for Data

Imagine you are building a custom home. Your first meeting with an architect is not to select plumbing fixtures; it's to design the floor plan. You map out the rooms, their purpose, and how they flow together. This initial blueprint is your logical data model. It captures the core business needs—a master bedroom connected to a bathroom, a kitchen that opens into the living room—in a way that everyone, from the homeowner to the construction crew, can understand. It defines the what.

Once that high-level plan is approved, engineers and contractors create detailed construction documents. They specify the exact type of copper pipe, the load-bearing capacity of the beams, and the precise wiring schematics. This is the physical data model. It translates the conceptual floor plan into a concrete design, defining specific table names like CustomerOrders, column types like VARCHAR(255), and performance-tuning features like indexes. You need both; one defines the vision, and the other makes it a reality.

What Is a Logical Data Model?

The logical data model acts as the bridge connecting business requirements to a technical database design. It is a visual representation of business information and rules, focusing on the core entities (like 'Customer' or 'Product'), their attributes (like 'FirstName' or 'SKU'), and the relationships between them.

Its primary characteristics are:

- Technology-Agnostic: It is not tied to a specific database management system (DBMS), which makes it a flexible and reusable asset.

- Business-Focused: It uses terminology that business analysts, stakeholders, and data architects can all understand and validate.

- Structurally Sound: It follows normalization principles (often to Third Normal Form, or 3NF) to reduce data redundancy and ensure data integrity at a conceptual level.
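
As a rough illustration, a logical model can be written down without naming any DBMS at all. The sketch below uses plain Python dataclasses; the class names `Entity` and `Relationship`, the `identifier` field, and the sample attributes are hypothetical and only meant to show the shape of the information a logical model holds.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Entity:
    """A business concept and its attributes, with no storage details."""
    name: str
    attributes: tuple[str, ...]
    identifier: str  # the attribute the business uses to tell instances apart


@dataclass(frozen=True)
class Relationship:
    """A business rule linking two entities."""
    parent: str
    child: str
    cardinality: str  # "1:N" or "M:N"


# Hypothetical entities; note there are no data types, tables, or indexes here.
customer = Entity("Customer", ("CustomerNumber", "FirstName", "Email"), "CustomerNumber")
product = Entity("Product", ("SKU", "Name"), "SKU")
order = Entity("Order", ("OrderNumber", "OrderDate"), "OrderNumber")

logical_model = {
    "entities": [customer, product, order],
    "relationships": [
        Relationship("Customer", "Order", "1:N"),  # a Customer places many Orders
        Relationship("Order", "Product", "M:N"),   # an Order lists many Products
    ],
}
```

Nothing in this structure would need to change if the target database changed, which is the point of keeping the logical layer separate.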

What Is a Physical Data Model?

The physical data model takes the concepts from the logical model and grounds them in the reality of a specific database system, like PostgreSQL, Oracle, or Microsoft SQL Server. This model contains all the technical details needed to build the database.

Key elements of a physical data model include:

- Technology-Specific: It defines columns with precise data types (`INT`, `DATETIME`), character limits, and constraints specific to the chosen database technology.

- Performance-Oriented: It is designed for speed. This model introduces performance optimizations like indexes, partitions, and deliberate denormalization to ensure queries run fast.

- Implementation-Ready: It is the final design handed to database administrators (DBAs) and developers to write the DDL (Data Definition Language) scripts and create the database.

The separation between logical and physical models dates back to the rise of relational databases in the 1970s. The goal was to define business needs without being locked into specific hardware or software. For more perspective on how these concepts fit into modern architectures, it is helpful to compare semantic layers vs. traditional data models.

To clarify these distinctions, here is a side-by-side comparison.

Quick Comparison: Logical vs. Physical Data Model

This table breaks down the core differences, highlighting how each model serves a unique purpose for a different audience.

| Attribute | Logical Data Model | Physical Data Model |
|---|---|---|
| Purpose | To define business requirements and data structures conceptually. | To design the actual database implementation on a specific platform. |
| Audience | Business Analysts, Data Architects, Business Stakeholders. | Database Administrators (DBAs), Developers, Technical Architects. |
| Abstraction | High-level and technology-agnostic. Focuses on entities and relationships. | Low-level and technology-specific. Focuses on tables, columns, and indexes. |
| Detail | Describes what data is stored. | Describes how the data is stored and accessed. |

You cannot have a well-designed physical model without a solid logical model to guide it. One defines the strategy, the other executes the tactics.

Comparing the Core Attributes of Each Model

Logical and physical data models are two sides of the same coin. They both describe your data, but they speak to different audiences and serve different functions. The logical model is the strategic blueprint, while the physical model is the on-the-ground implementation. Understanding this distinction is the foundation of any scalable and maintainable data architecture.

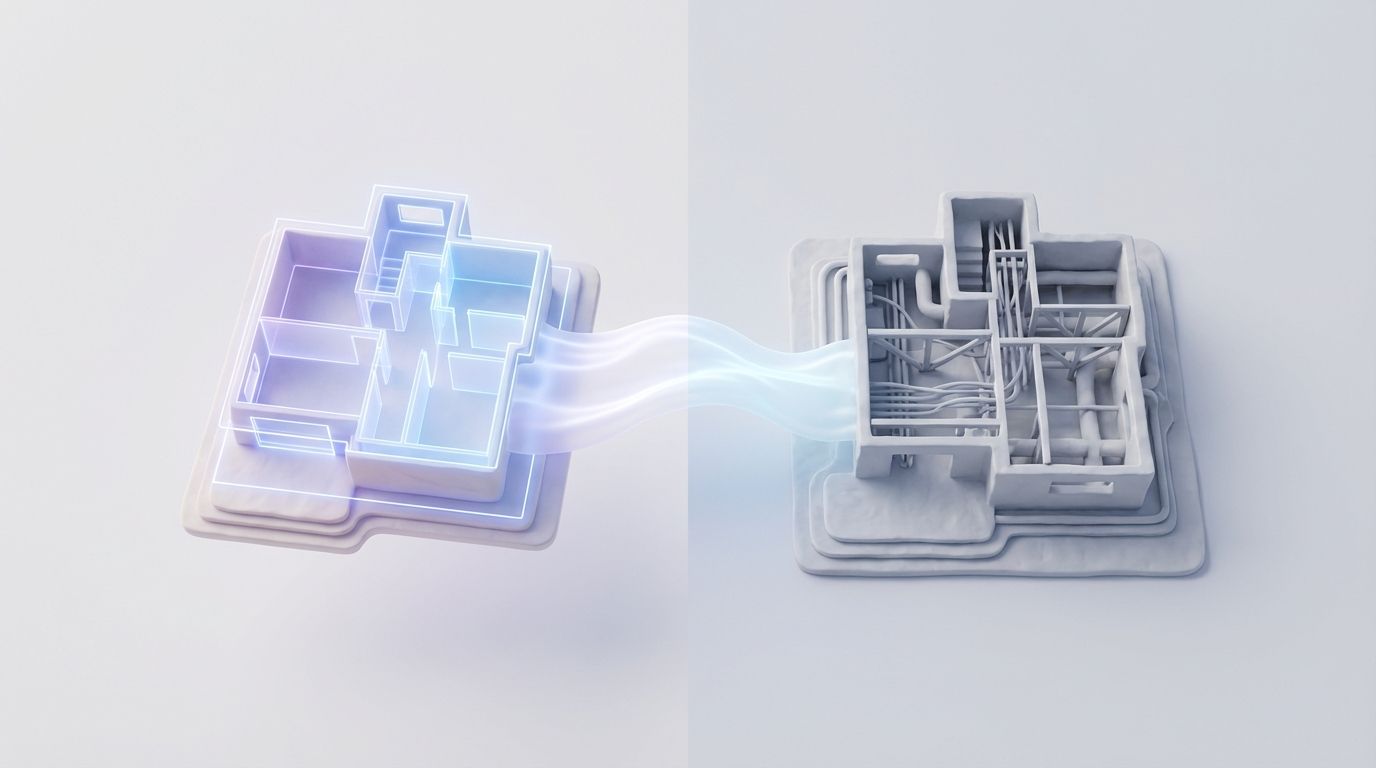

This visual captures the relationship: the logical model is the architect's plan focused on business concepts, while the physical model represents the actual database built from that plan.

The logical model defines what data the business needs, and the physical model dictates how that data will be stored and managed in a specific system.

Purpose: Business Blueprint vs. Technical Implementation

A logical data model captures business requirements. It is a communication tool that allows data architects, business analysts, and key stakeholders to agree on the essential data elements that run the company. It answers the question, "What information is critical to our operations?"

In contrast, a physical data model is a technical guide. Its job is to map out the exact database schema for a chosen database management system (DBMS), with a focus on storage efficiency and query performance. It answers the question, "How are we going to build the database to hold and serve this data?"

Abstraction: Technology-Agnostic vs. Technology-Specific

The logical model is intentionally technology-agnostic. It defines entities, attributes, and relationships without being tied to a specific database. This independence makes it a stable, long-lasting asset that can guide implementations on anything from Oracle to PostgreSQL.

The physical model is inherently technology-specific. It must address the details of the chosen DBMS.

- Data Types: A logical attribute like 'CreationDate' must be translated into a specific type, such as `DATETIME2` for SQL Server or `TIMESTAMP` for PostgreSQL.

- Constraints: It defines database-level rules such as `CHECK` constraints or `DEFAULT` values that the DBMS will enforce.

- Storage Parameters: It specifies low-level details like tablespaces, partitioning schemes, and filegroups that directly impact performance and storage.
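
One way to picture the data type translation is a lookup from logical types to DBMS column types. The following is a minimal sketch: the `TYPE_MAP` table and the `column_ddl` helper are hypothetical names, and the map covers only a handful of types for the two systems mentioned above.

```python
# Hypothetical mapping from logical attribute types to DBMS column types.
TYPE_MAP = {
    "postgresql": {"datetime": "TIMESTAMP", "integer": "INTEGER", "text": "VARCHAR"},
    "sqlserver": {"datetime": "DATETIME2", "integer": "INT", "text": "VARCHAR"},
}


def column_ddl(name, logical_type, dbms, length=None):
    """Return a column definition fragment for the chosen DBMS."""
    physical_type = TYPE_MAP[dbms][logical_type]
    if length is not None:
        physical_type = f"{physical_type}({length})"
    return f"{name} {physical_type}"


# The same logical attribute yields different physical columns.
pg_column = column_ddl("creation_date", "datetime", "postgresql")
mssql_column = column_ddl("creation_date", "datetime", "sqlserver")
name_column = column_ddl("last_name", "text", "postgresql", length=50)
```

Here `pg_column` is `creation_date TIMESTAMP` and `mssql_column` is `creation_date DATETIME2`, while the logical attribute behind both is unchanged.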

A logical data model is a business asset; a physical data model is a technical asset. The logical model can outlive multiple technology stacks, whereas the physical model is often retired alongside its underlying database.

Granularity: High-Level Entities vs. Detailed Columns

The level of detail in each model reflects its purpose. A logical model works at a high level, focusing on business concepts like entities, attributes, and the relationships between them. For example, a logical model would define a 'Customer' entity with attributes like 'Name', 'Email', and 'PrimaryAddress'.

A physical model translates these abstractions into concrete database objects.

- The 'Customer' entity becomes a `Customers` table.

- The 'Name' attribute might be broken into two columns: `first_name` (`VARCHAR(50)`) and `last_name` (`VARCHAR(50)`).

- A surrogate key, like `customer_id` (`INT`, `PRIMARY KEY`, `NOT NULL`), is often added to ensure every row is unique.

- An index might be created on the `last_name` column to speed up search queries.
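
The translation above can be sketched as runnable DDL. This example assumes SQLite, driven through Python's standard `sqlite3` module, purely so it runs without a database server; a production design would use the target DBMS's own types and syntax. The `email` column and the index name are illustrative additions.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE customers (
    customer_id INTEGER PRIMARY KEY,        -- surrogate key, assigned by the database
    first_name  VARCHAR(50) NOT NULL,       -- 'Name' split into two columns
    last_name   VARCHAR(50) NOT NULL,       -- SQLite accepts but does not enforce the length
    email       VARCHAR(255) NOT NULL UNIQUE
);
-- Index to support searches by last name.
CREATE INDEX idx_customers_last_name ON customers (last_name);
""")

conn.execute(
    "INSERT INTO customers (first_name, last_name, email) VALUES (?, ?, ?)",
    ("Ada", "Lovelace", "ada@example.com"),
)
row = conn.execute(
    "SELECT customer_id, first_name, last_name FROM customers WHERE last_name = ?",
    ("Lovelace",),
).fetchone()
```

The business never asked for `customer_id` or the index; both exist only because the physical model has to identify and find rows efficiently.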

This is where architectural vision meets implementation reality. The logical model ensures you are building the right thing for the business, while the physical model ensures you are building it efficiently on your chosen technology.

Mapping the Logical Model to a Physical Design

Moving from a logical data model to a physical design is like handing an architect's blueprint to the construction crew. It is a methodical process of translating the abstract, business-centric plan into a concrete schema ready for a specific database system. Every choice made here has a direct effect on storage, performance, and scalability.

The first step is mapping each entity from the logical model to a table in the physical database. A Customer entity becomes a customers table. The Product and Order entities are similarly translated into products and orders tables. This lays the basic structural foundation.

This initial mapping is just the beginning. The craft lies in turning high-level concepts into low-level technical details that the database management system can understand and enforce.

Converting Attributes and Relationships

Next, every attribute inside an entity becomes a column with a chosen data type. The specific database platform starts to matter here. For instance, an OrderDate attribute might be implemented as a TIMESTAMP in PostgreSQL but as DATETIME2 in SQL Server.

The relationships defined in the logical model also need a physical implementation using primary and foreign keys.

- One-to-Many Relationships: The primary key from the "one" side is added as a foreign key to the "many" side. For example, `customer_id` from the `customers` table is added to the `orders` table.

- Many-to-Many Relationships: These require creating a new junction or bridge table. To link `Orders` and `Products`, you would build an `order_items` table containing foreign keys for both `order_id` and `product_id`.
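
Both relationship patterns can be sketched in a few statements. The example again assumes SQLite through Python's `sqlite3` module as a stand-in engine; the `email`, `name`, and `quantity` columns are illustrative, and the composite primary key on the junction table is one common choice rather than the only one.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("PRAGMA foreign_keys = ON")  # SQLite leaves FK enforcement off by default
conn.executescript("""
CREATE TABLE customers (customer_id INTEGER PRIMARY KEY, email TEXT NOT NULL);
CREATE TABLE products  (product_id  INTEGER PRIMARY KEY, name  TEXT NOT NULL);

-- One-to-many: each order points at exactly one customer.
CREATE TABLE orders (
    order_id    INTEGER PRIMARY KEY,
    customer_id INTEGER NOT NULL REFERENCES customers (customer_id)
);

-- Many-to-many: the junction table holds one row per (order, product) pair.
CREATE TABLE order_items (
    order_id   INTEGER NOT NULL REFERENCES orders (order_id),
    product_id INTEGER NOT NULL REFERENCES products (product_id),
    quantity   INTEGER NOT NULL,
    PRIMARY KEY (order_id, product_id)
);
""")

# An order for a customer that does not exist is refused by the database.
try:
    conn.execute("INSERT INTO orders (order_id, customer_id) VALUES (1, 999)")
    orphan_rejected = False
except sqlite3.IntegrityError:
    orphan_rejected = True
```

The rejected insert shows the logical rule "every Order belongs to a Customer" being enforced by the schema itself rather than by application code.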

The transformation from logical to physical is not a simple one-to-one conversion. It is an act of optimization, where business requirements are balanced against the performance constraints and capabilities of the chosen technology.

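This detailed mapping ensures the business rules captured logically are enforced at the database level, which is fundamental for data integrity. Type choices carry a cost too: in PostgreSQL and SQL Server an INT takes 4 bytes and a BIGINT takes 8, so choosing INT for an ID column saves 4 bytes per row, plus the same again in every foreign key and index that repeats the ID. That only becomes significant at hundreds of millions of rows, and it has to be weighed against INT's ceiling of roughly 2.1 billion values. To manage complex data flows around these structures, understanding effective data orchestration is key.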

Introducing Performance Optimizations

While the logical model focuses on normalization to eliminate data redundancy, the physical model must prioritize performance. This often means making strategic compromises to the normalized structure. An early decision is choosing the right database technology, which involves weighing SQL vs NoSQL database choices.

Denormalization is a common optimization technique. For a synthetic e-commerce example, a common query for an order summary might need to join orders, order_items, and products. To make this faster, the physical design might add a product_name column to the order_items table. This creates redundancy but can reduce join complexity and improve query response times for reports by an estimated 10-15%.
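
The order summary case can be sketched with a tiny dataset. This assumes SQLite through Python's `sqlite3` module as a stand-in engine, and the sample rows are made up; it shows only that the denormalized query returns the same result without the joins, not how much faster it would be on a real workload.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE products (product_id INTEGER PRIMARY KEY, product_name TEXT NOT NULL);
CREATE TABLE orders   (order_id   INTEGER PRIMARY KEY, order_date   TEXT NOT NULL);
CREATE TABLE order_items (
    order_id     INTEGER NOT NULL REFERENCES orders (order_id),
    product_id   INTEGER NOT NULL REFERENCES products (product_id),
    quantity     INTEGER NOT NULL,
    product_name TEXT NOT NULL,  -- denormalized copy of products.product_name
    PRIMARY KEY (order_id, product_id)
);
INSERT INTO products VALUES (1, 'Keyboard'), (2, 'Mouse');
INSERT INTO orders VALUES (100, '2024-06-01');
INSERT INTO order_items VALUES (100, 1, 2, 'Keyboard'), (100, 2, 1, 'Mouse');
""")

# Normalized read path: three tables, two joins.
normalized = conn.execute("""
    SELECT o.order_id, p.product_name, oi.quantity
    FROM orders o
    JOIN order_items oi ON oi.order_id = o.order_id
    JOIN products p ON p.product_id = oi.product_id
    ORDER BY p.product_name
""").fetchall()

# Denormalized read path: one table, no joins.
denormalized = conn.execute("""
    SELECT order_id, product_name, quantity
    FROM order_items
    ORDER BY product_name
""").fetchall()
```

The price of the shorter query is that a product rename now has to be written in two places, or the copy in `order_items` has to be treated as a snapshot taken at order time.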

Beyond that, the physical model introduces a toolbox of performance-enhancing features:

- Indexes: These are created on frequently queried columns, like foreign keys or columns in a `WHERE` clause, to make data retrieval faster.

- Partitions: This involves breaking up large tables into smaller chunks. You might partition a large `orders` table by month to speed up queries that target specific time ranges.

- Constraints: You will define rules like `NOT NULL`, `UNIQUE`, and `CHECK` constraints to enforce data quality at the source.
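
To show what enforcing data quality at the source looks like in practice, here is a small sketch of the three constraint types rejecting bad rows. It assumes SQLite through Python's `sqlite3` module; the `products` columns and sample values are hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("""
CREATE TABLE products (
    product_id  INTEGER PRIMARY KEY,
    sku         TEXT    NOT NULL UNIQUE,
    price_cents INTEGER NOT NULL CHECK (price_cents >= 0)
)
""")
conn.execute("INSERT INTO products (sku, price_cents) VALUES ('KB-1', 4999)")

bad_rows = [
    ("KB-1", 100),  # duplicate SKU  -> violates UNIQUE
    (None, 100),    # missing SKU    -> violates NOT NULL
    ("MS-1", -5),   # negative price -> violates CHECK
]

rejected = []
for sku, price_cents in bad_rows:
    try:
        conn.execute(
            "INSERT INTO products (sku, price_cents) VALUES (?, ?)",
            (sku, price_cents),
        )
    except sqlite3.IntegrityError as exc:
        rejected.append(str(exc))  # the database names the failed constraint

row_count = conn.execute("SELECT COUNT(*) FROM products").fetchone()[0]
```

All three bad rows are refused and only the original valid row remains, regardless of which application tried to write them.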

By following this disciplined translation, an architect ensures the final database is not just an accurate reflection of the business but also an efficient and scalable technical asset.

How Modeling Choices Impact Performance and Maintenance

Decisions made when defining logical versus physical data models have lasting consequences for your system's performance and ease of maintenance. The logical model is the stable architectural blueprint. The physical model is the actual construction—the foundation, wiring, and plumbing—that determines daily function. An incorrect blueprint leads to costly renovations. Poor construction leads to constant problems.

A well-crafted logical model supports long-term maintainability. Since it is independent of any specific technology and focused on business rules, it provides a solid reference point that does not become obsolete when you switch databases. When business needs change, you update the logical model first. This step ensures that changes are thought through before anyone writes code, which reduces the risk of introducing errors.

This structured, two-step approach delivers tangible results. According to industry analysis from data architecture consultancies, companies that adopt a formal logical-then-physical modeling process can see up to a 30% reduction in database redesign times. Catching design flaws early also translates into a 20%-25% improvement in query execution times and a 15%-18% decrease in data anomalies. A detailed industry overview on TechTarget.com dives deeper into these benefits.

The Physical Model’s Direct Impact on Speed

While the logical model provides structural integrity, the physical model is where performance is determined. Its design directly dictates how quickly the database can store, locate, and return data. Developers and DBAs apply specific techniques to tune for speed.

Here are a few critical performance-tuning levers in a physical model:

- Indexing: This is the most common optimization. Adding an index to a frequently queried column, like a customer's last name, can reduce query times by an estimated 40-60%. However, every index adds overhead to write operations (inserts, updates, deletes), so placement must be strategic.

- Partitioning: For large tables, partitioning breaks data into smaller chunks based on a key, like a date range. A query asking for sales in June only has to scan the June partition instead of a multi-billion-row table, leading to performance gains.

- Denormalization: This technique intentionally adds redundant data to a table to avoid expensive joins. For example, storing the `customer_name` directly in the `orders` table speeds up read-heavy reports but adds storage costs and creates the challenge of keeping the redundant data synchronized.
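
The effect of the indexing lever is easiest to see in a query plan rather than a timing. The sketch below assumes SQLite through Python's `sqlite3` module and uses its `EXPLAIN QUERY PLAN` statement; the `plan` helper and the generated names are illustrative, and other databases expose plans through their own commands.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE customers (customer_id INTEGER PRIMARY KEY, last_name TEXT NOT NULL)"
)
conn.executemany(
    "INSERT INTO customers (last_name) VALUES (?)",
    [(f"name{i}",) for i in range(1000)],
)


def plan(sql, params=()):
    """Return SQLite's plan description for a query as one string."""
    rows = conn.execute("EXPLAIN QUERY PLAN " + sql, params).fetchall()
    return " | ".join(row[-1] for row in rows)  # last column is the detail text


query = "SELECT customer_id FROM customers WHERE last_name = ?"

before = plan(query, ("name42",))  # no index yet: the whole table is scanned
conn.execute("CREATE INDEX idx_customers_last_name ON customers (last_name)")
after = plan(query, ("name42",))   # with the index: a targeted search
```

Before the index the plan reports a scan of `customers`; afterwards it reports a search using `idx_customers_last_name`. The exact wording of the plan text varies between SQLite versions.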

A logical model gives you architectural resilience, letting the system adapt to business changes. A physical model delivers operational performance, ensuring the system can handle its workload. You need both to succeed.

Balancing Scalability and Long-Term Costs

The initial time spent on a thorough, two-tiered modeling process pays dividends in operational efficiency and future scalability. A solid logical model acts as clear documentation, making it easier to onboard new developers. It also gives your organization the freedom to evolve its technology stack—for example, migrating from an on-premise SQL Server to a cloud-based PostgreSQL instance—without having to rediscover business requirements from scratch.

A physical model is never "done." It needs continuous monitoring and optimization as data volumes grow and query patterns shift. A design that was fast with one million records might slow down with one hundred million. The logical model provides the stable context needed to make intelligent physical optimizations without compromising data integrity. For organizations wanting to keep AI and ML systems performant and compliant, tools for AI model monitoring and portfolio management are crucial for maintaining this balance over time.

When to Use Each Data Model in a Project

Understanding the difference between a logical and physical data model is one thing. Knowing when to prioritize each separates a successful project from one needing rework. Each model has its moment in the project lifecycle. Focusing on physical details too soon or skipping the logical blueprint can lead to systems that miss business goals or fail under load.

The logical data model is the focus during discovery and requirements gathering. This is where business analysts and data architects work with stakeholders to define the project's scope and capture functional needs. The key is to remain technology-agnostic. The goal is to build a shared language and a clear picture of the business domain: What are our core entities? How do they relate? What rules govern those relationships?

Prioritizing the Logical Model for Business Alignment

In the early phases, clarity and consensus are critical. A logical model acts as a communication tool, turning complex business processes into a structured, visual format that both technical and non-technical stakeholders can validate. For example, when designing a new e-commerce platform, the logical model defines entities like Customer, Product, and Order and establishes business rules like "A Customer can have multiple Orders," ensuring the development team builds what the business requested.

The market for data modeling tools, which has surpassed USD 2.3 billion and is projected to grow at a CAGR of 11.5% according to a 2023 Grand View Research report, reflects the importance of this stage. A 2022 survey by the Data Management Association (DAMA) found that 76% of enterprises consider logical data modeling a vital step for maintaining data consistency and meeting regulations like GDPR. You can find more on how data models support modern business strategies to get the full context.

Shifting Focus to the Physical Model for Implementation

Once the logical blueprint is approved and business requirements are locked in, the project moves to implementation. The physical data model takes center stage. The conversation shifts from what the system needs to do to how it will do it. Database administrators (DBAs) and developers translate the logical entities and relationships into concrete tables, columns, and indexes for a specific database system.

This is where performance-tuning decisions happen. You ask critical questions:

- What are the most efficient data types for storing this information?

- Which indexes should we create to make common queries run faster?

- Should we denormalize tables to eliminate joins for our reporting dashboards?

The logical model ensures you build the right system, one that aligns with business goals. The physical model ensures you build the system well, making it performant and scalable in the real world.

Applying the Models in Specific Architectures

How you use these models also changes depending on the system you are building.

- Data Warehouse Design: You start with a logical model to define business subject areas like Sales, Marketing, and Inventory. The physical model then brings these to life as star or snowflake schemas, with fact and dimension tables designed for fast analytical queries.

- Microservices Architecture: In this architecture, each microservice owns its data and typically has its own database. Each service needs its own logical and physical model. The logical model defines the data contract for that service, while the physical model optimizes its local database for its specific workload.
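
For the data warehouse case, a star schema can be sketched as one fact table surrounded by dimension tables. The example assumes SQLite through Python's `sqlite3` module as a stand-in engine; the table names, keys, and sample figures are hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
-- Dimension tables describe the "who, what, when" of each sale.
CREATE TABLE dim_date    (date_key    INTEGER PRIMARY KEY, month    TEXT NOT NULL);
CREATE TABLE dim_product (product_key INTEGER PRIMARY KEY, category TEXT NOT NULL);

-- The fact table stores measures plus a key into each dimension.
CREATE TABLE fact_sales (
    date_key      INTEGER NOT NULL REFERENCES dim_date (date_key),
    product_key   INTEGER NOT NULL REFERENCES dim_product (product_key),
    quantity      INTEGER NOT NULL,
    revenue_cents INTEGER NOT NULL
);

INSERT INTO dim_date VALUES (20240601, '2024-06'), (20240701, '2024-07');
INSERT INTO dim_product VALUES (1, 'Hardware'), (2, 'Software');
INSERT INTO fact_sales VALUES
    (20240601, 1, 2, 9998),
    (20240601, 2, 1, 1500),
    (20240701, 1, 1, 4999);
""")

# A typical analytical query: filter and group by dimensions, sum a measure.
june_by_category = conn.execute("""
    SELECT p.category, SUM(f.revenue_cents)
    FROM fact_sales f
    JOIN dim_date d    ON d.date_key = f.date_key
    JOIN dim_product p ON p.product_key = f.product_key
    WHERE d.month = '2024-06'
    GROUP BY p.category
    ORDER BY p.category
""").fetchall()
```

The logical subject area here is simply "Sales"; the split into `fact_sales` and its dimensions is a physical decision made for analytical query patterns.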

By shifting focus between the logical and physical models at the right times, teams can deliver a final product that is aligned with business needs and technically solid.

Common Questions About Data Modeling

Even with clear definitions, practical application can raise questions. Here are answers to common questions about logical and physical data models.

Getting these details right is what separates a smooth project from one that requires rework.

Can You Have a Physical Model Without a Logical One?

You can, but you should not. It is like building a house without a blueprint. You can start laying bricks, but you will likely encounter structural problems later.

When you jump straight to a physical model, you tie your design to immediate technical assumptions. This creates a rigid system that is difficult to adapt. Projects that skip the logical model face an estimated 25-40% increase in rework when missed business requirements surface, according to internal project reviews from several enterprise IT departments.

How Do NoSQL Databases Fit into This Process?

The principles do not change, only the implementation. Your logical model is still the source of truth for business rules and relationships, but the physical implementation will differ depending on the database.

Consider an entity like a 'User' who has many 'Posts'. The logical model defines that relationship.

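- In a NoSQL database like MongoDB, the physical model might embed the posts directly within the user document as an array of subdocuments. This is efficient for reads that load a user together with their posts, though it suits bounded lists best because a single MongoDB document is capped at 16 MB.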

- In a relational database like PostgreSQL, the physical model would create two tables, `Users` and `Posts`, connected by a foreign key.
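
The two physical shapes can be put side by side with plain Python data structures. This is only a sketch: `user_document` mimics what an embedded document might look like, and `to_relational` is a hypothetical helper that flattens it into the rows a `Users` and `Posts` table pair would hold. No database driver is involved.

```python
# Document-style shape: posts are embedded inside the user.
user_document = {
    "_id": 1,
    "name": "Ada",
    "posts": [
        {"title": "Hello", "body": "First post"},
        {"title": "Models", "body": "Logical vs physical"},
    ],
}


def to_relational(doc):
    """Flatten an embedded user document into a user row and post rows."""
    user_row = {"user_id": doc["_id"], "name": doc["name"]}
    post_rows = [
        # Each post carries user_id as the foreign key back to its user.
        {"post_id": i, "user_id": doc["_id"], **post}
        for i, post in enumerate(doc["posts"], start=1)
    ]
    return user_row, post_rows


user_row, post_rows = to_relational(user_document)
```

Both shapes express the same logical rule, that one User has many Posts; they differ only in where the link is stored.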

The logical model remains your platform-agnostic guide to the business data. The physical model is where you make the specific, performance-driven choices for your chosen technology.

A conceptual data model is the highest-level, most abstract view of a system. It identifies core business concepts and their relationships, designed to get buy-in from senior business stakeholders before detailed modeling begins. It precedes both the logical and physical models.

What Are the Most Common Modeling Tools?

Data architects and developers rely on several industry-standard tools to bridge the gap between logical design and physical implementation.

Some of the most popular choices include:

- Erwin Data Modeler: A comprehensive tool that has been a staple in large enterprises for years.

- SAP PowerDesigner: Another powerful tool known for its ability to model complex enterprise systems.

- Navicat Data Modeler: A modern option with a clean, user-friendly interface.

A key feature in most of these tools is the ability to design the logical model and then auto-generate a starter physical model for a specific database. From there, a DBA can fine-tune it for production. For more deep dives on data architecture, you can find more articles on the DSG.AI data science and engineering blog.

At DSG.AI, we help enterprises design and operationalize AI systems that deliver measurable business value. Our architecture-first approach ensures your data models provide a scalable, reliable foundation for production AI. Discover how our team can help you build your next mission-critical AI project by exploring our work at https://www.dsg.ai/projects.