Written by:

Editorial Team

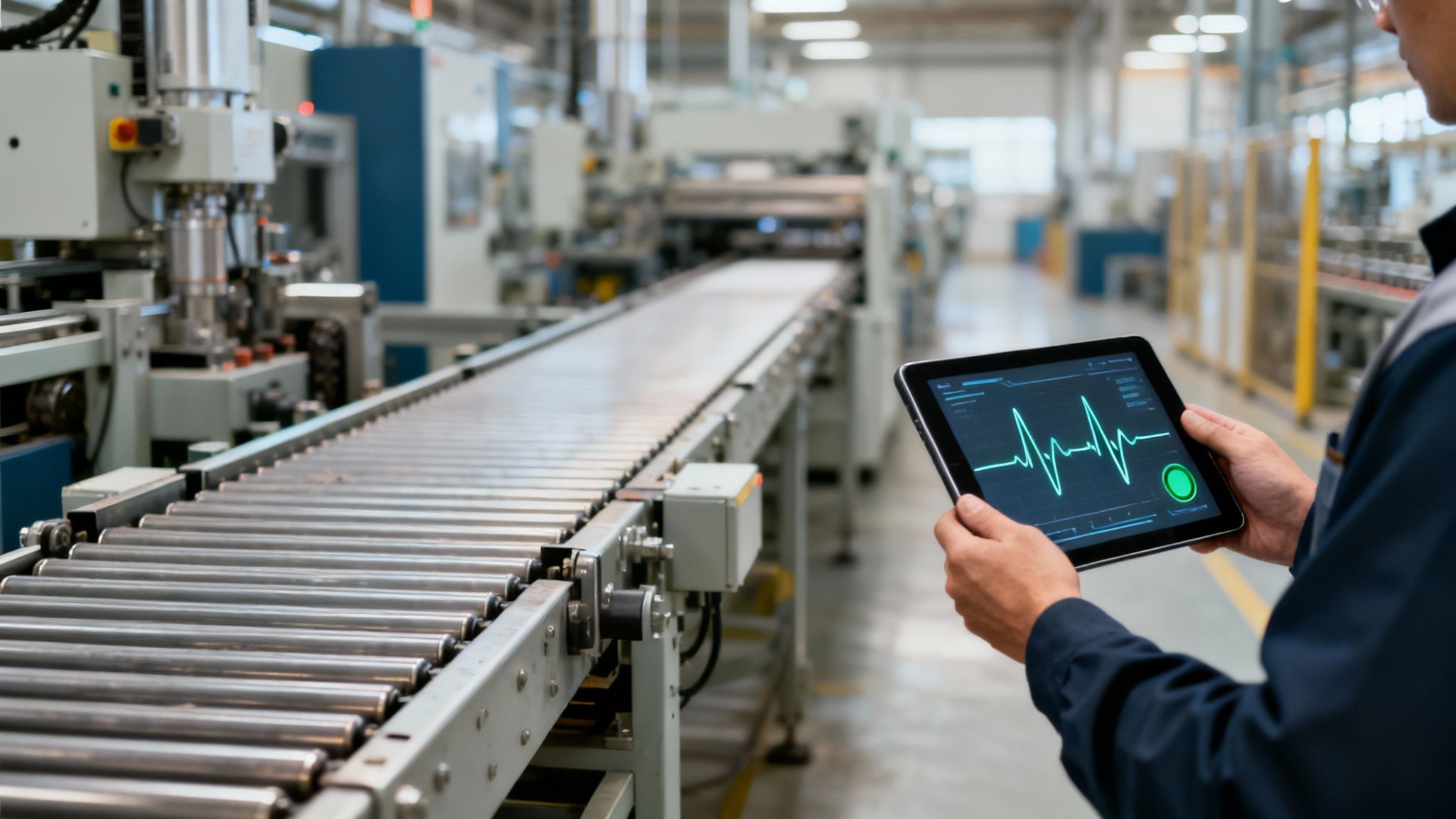

An entire production line grinds to a halt without warning. Suddenly, you're facing a cascade of costly disruptions and blown deadlines. If this sounds painfully familiar, you're likely still stuck in the old world of reactive or calendar-based maintenance. There is a more effective approach. Predictive maintenance offers a data-driven path forward, moving from fixing what's broken to preventing failures before they happen.

Moving From Reactive Repairs to Proactive Performance

For decades, maintenance has followed two paths: run-it-til-it-breaks (reactive) or service it based on a calendar (preventive). The reactive approach is simple, but it turns every breakdown into an emergency and incurs high costs. Preventive maintenance is an improvement, but it often means technicians service healthy machines while still failing to catch many potential failures.

Predictive maintenance is a different approach. It uses continuous health monitoring to prevent failure. Instead of waiting for an issue to occur, this method analyzes real-time data from sensors and diagnostics to intervene at the right moment. The crisis is averted, and asset health is preserved.

In manufacturing, predictive maintenance uses data from sensors and operational systems to monitor the real-time condition of your assets. By applying analytics to that data, it can estimate when a specific part is likely to fail. This lets your team schedule the fix when it is needed, no sooner and no later.

The Core Business Transformation

This shift from a time-based to a condition-based approach turns maintenance from a cost center into a strategic function. It is about more than just fixing machines efficiently; it is about achieving a higher level of operational performance and financial return. By using the data your machines are already generating, you can see measurable gains.

A well-executed predictive maintenance program can reduce unplanned downtime by 15–25%. This is a direct boost to production capacity and on-time delivery.

This data-first approach lets you redirect maintenance spending away from expensive, last-minute emergencies and into planned, efficient interventions. To understand this evolution, it helps to explore predictive maintenance and its core principles. The key insight is that the information you need is often already available in your factory.

Why This Shift Matters Now

The primary challenge for manufacturing leaders is the cost and inefficiency of traditional maintenance. Unplanned downtime doesn't just stop one machine; it affects the entire value chain, disrupting labor schedules, material flow, and customer trust.

Predictive maintenance addresses this problem by giving your teams clear, data-backed insights so they can act proactively. The benefits go beyond avoiding breakdowns.

- Optimized Resource Allocation: Technicians are dispatched based on data-driven alerts, not a calendar. This ensures their skills are used where they are most needed.

- Reduced Ancillary Costs: By catching problems early, you avoid the high costs of overtime, rush shipping for parts, and the collateral damage that comes with catastrophic failures.

- Improved Capital Planning: With accurate health data on every asset, you get a clearer picture of when equipment truly needs to be replaced. This leads to more effective long-term spending.

This guide provides a practical roadmap for implementing this strategy at scale, turning abstract ideas into a concrete plan for delivering business value.

How to Build a Business Case for Predictive Maintenance

Getting approval for a predictive maintenance program requires a strong financial justification. To convince senior leadership, you need to translate the technical benefits into a business case that demonstrates return on investment. This requires a financial model that clearly shows how the initiative will improve the bottom line.

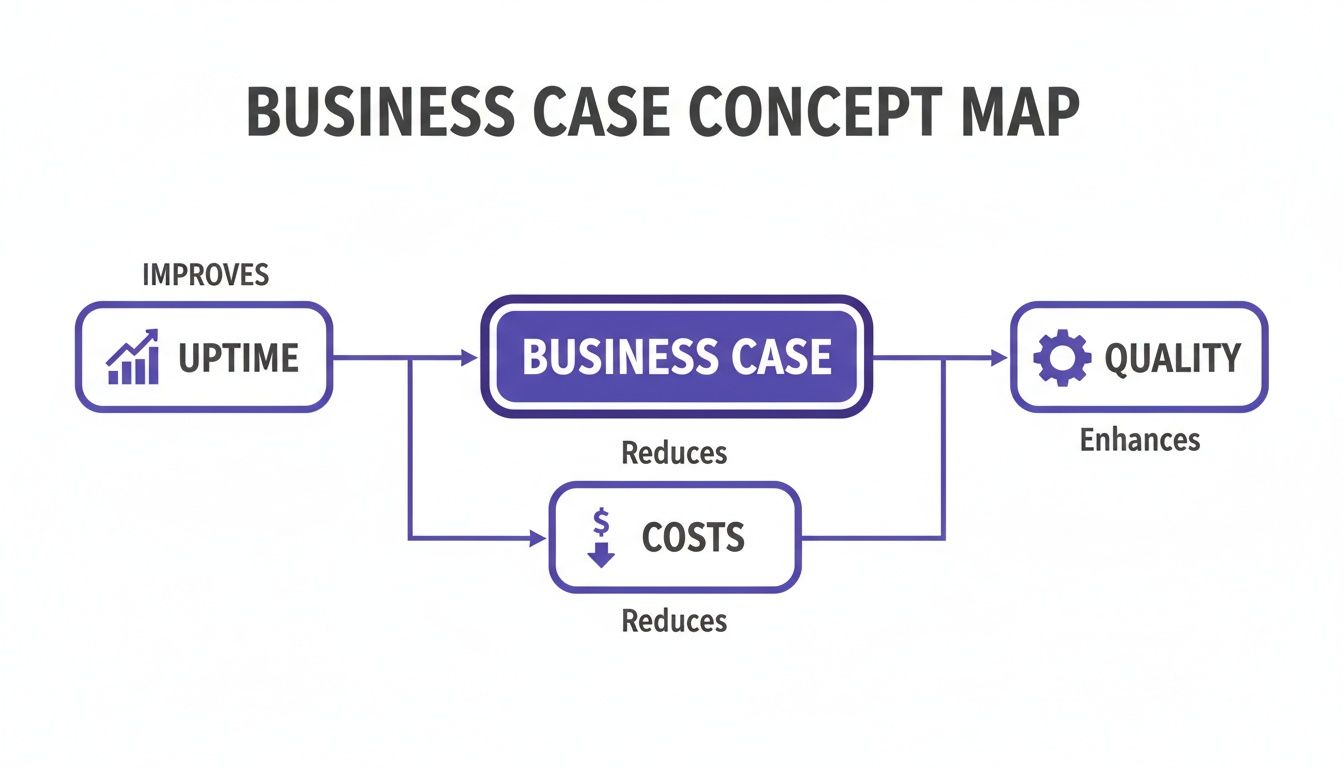

Build your case around four pillars of value. These represent measurable improvements that address common challenges in manufacturing operations, from unexpected shutdowns to wasteful spending.

Pillar 1: Increase Asset Uptime

The most powerful argument for predictive maintenance is its ability to reduce unplanned downtime. When a critical machine goes down without warning, the costs escalate. You are not just losing production; you are paying for idle labor and potentially facing penalties for late shipments.

Predictive models provide advance warning, letting you see failures coming before they happen. This means you can schedule repairs during planned outages instead of scrambling to fix a broken machine. Moving from reactive to proactive maintenance can increase equipment availability by 10–20%. That’s a direct boost to your production capacity without spending on new equipment.

Pillar 2: Reduce Maintenance Costs

Traditional preventive maintenance can be inefficient. It often means servicing equipment that's running properly, just because the calendar says so. Predictive maintenance changes this by directing your team to fix only what needs fixing, right when it needs it.

Based on industry benchmarks, this data-driven approach typically cuts maintenance labor and parts costs by 20–30%. You stop replacing components on a fixed schedule and instead replace them based on their true condition, extending the life of every part and eliminating unnecessary spending.

Synthetic Example: A plant with 50 CNC machines averages 10 unexpected spindle failures a year. Each incident costs $20,000 in downtime and repairs—a $200,000 annual cost. A predictive maintenance system, costing $75,000 to implement, successfully predicts 8 of those 10 failures. This action prevents $160,000 in losses, delivering a net saving of $85,000 in the first year.
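The arithmetic in this synthetic example can be written as a small function so you can plug in your own plant's numbers. This is a minimal sketch: the function and parameter names are illustrative, and it assumes, as the example does, that every correctly predicted failure is fully avoided.

```python
def first_year_net_saving(failures_per_year, cost_per_failure,
                          predicted_failures, implementation_cost):
    """Return (baseline_cost, avoided_loss, net_saving) for the first year.

    Assumption: each predicted failure is avoided entirely, as in the
    synthetic example above.
    """
    baseline_cost = failures_per_year * cost_per_failure
    avoided_loss = predicted_failures * cost_per_failure
    net_saving = avoided_loss - implementation_cost
    return baseline_cost, avoided_loss, net_saving


# Numbers from the synthetic CNC example.
baseline, avoided, net = first_year_net_saving(
    failures_per_year=10,
    cost_per_failure=20_000,
    predicted_failures=8,
    implementation_cost=75_000,
)
print(baseline, avoided, net)  # 200000 160000 85000
```

Note that the two failures the system misses still cost $40,000 in this scenario, so the business case should report both the avoided loss and the remaining exposure.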

Pillar 3: Improve Production Quality

A failing machine does not just suddenly break. Its performance usually degrades first, creating subtle quality problems. A machine running slightly out of specification can produce bad parts, driving up your scrap and rework numbers.

By constantly monitoring equipment health, predictive maintenance helps maintain consistency. This focus on quality can lead to an 8–15% reduction in scrap and rework rates, which means less wasted material and more products that meet specifications the first time.

Pillar 4: Enhance Worker Safety

A strong business case must also account for risk. When a large piece of equipment fails catastrophically, it creates a dangerous situation on the plant floor.

Predicting and preventing these failures makes the work environment safer. While this benefit is harder to quantify in dollars, a better safety record reduces the risk of accidents, can lower insurance premiums, and improves team morale. A safe plant is a productive plant.

Predictive maintenance is becoming a standard in manufacturing. Industry analysis from 2023 shows that 30–40% of industrial facilities have adopted it. Since most plants already perform preventive maintenance, the opportunity is to redirect existing resources toward smarter, data-driven actions that deliver a higher return.

Building your business case on these four pillars provides a complete picture of the value. To make it specific to your company, start by benchmarking your current state. You can get a clear picture of where to start with a readiness assessment for enterprise AI to pinpoint the opportunities with the biggest impact. This turns a tech proposal into a strategic business plan.

Understanding the Data and AI Models That Drive Prediction

Predictive maintenance is about listening to your equipment. A predictive model needs the right information to make an accurate diagnosis. It's about strategically collecting the right data from the right places to build a complete health profile for each asset.

Each data source provides a different piece of information. Only when you combine them can the AI see the subtle patterns that precede a breakdown. Most modern manufacturing plants already have this data.

The Four Essential Data Inputs

A robust predictive maintenance program blends four key types of data. Each one offers a unique view of equipment health.

- Sensor Data: This is your direct line to the machine's real-time condition. Vibration sensors can detect a slight imbalance in a motor. Thermal sensors can flag a component that is starting to overheat long before it becomes a problem.

- Operational Data: Pulled from systems like your SCADA or MES, this data provides context. It tells the model about production speeds, cycle times, and load levels. This helps it differentiate between normal operational stress and early signs of degradation.

- Maintenance Records: Your Computerized Maintenance Management System (CMMS) is a historical record. It contains the story of every past failure, repair, and part replacement. This history is what you need to train an AI model to recognize specific failure patterns.

- External Data: For equipment exposed to the environment, outside factors matter. Ambient temperature, humidity, and air quality can affect performance and should be included for a comprehensive model.

This is how a solid business case is built—by connecting these data streams to tangible improvements on the shop floor.

As the graphic shows, when you get the data inputs right, the outputs are a measurable boost in uptime, a reduction in costs, and an improvement in quality.

From Raw Data to Actionable Signals

A raw stream of sensor data contains a lot of noise. An AI model cannot make sense of it on its own. This is where feature engineering is important.

Feature engineering transforms noisy, raw data into clean, meaningful "features" or signals that a model can interpret. For instance, instead of feeding a model thousands of individual vibration readings, an engineer might create features like "average vibration over the last minute" or "peak frequency band." This step requires a partnership between data scientists and your domain experts. Its success often determines the project's success.
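As a rough illustration, the sketch below turns one window of raw vibration samples into three features: the average, the RMS level, and the dominant frequency. The function name, the synthetic 50 Hz signal, and the 400 Hz sample rate are assumptions for the example. It uses a naive discrete Fourier transform to stay dependency-free; a production pipeline would normally use an FFT library.

```python
import cmath
import math


def window_features(samples, sample_rate_hz):
    """Summarize one window of vibration samples as a few features."""
    n = len(samples)
    mean = sum(samples) / n
    rms = math.sqrt(sum(x * x for x in samples) / n)

    # Naive DFT over the positive frequencies, skipping the DC term (k = 0).
    best_k, best_magnitude = 0, 0.0
    for k in range(1, n // 2 + 1):
        coeff = sum(x * cmath.exp(-2j * math.pi * k * i / n)
                    for i, x in enumerate(samples))
        if abs(coeff) > best_magnitude:
            best_k, best_magnitude = k, abs(coeff)

    return {"mean": mean, "rms": rms, "peak_hz": best_k * sample_rate_hz / n}


# Synthetic signal: a 50 Hz vibration sampled at 400 Hz for half a second.
rate = 400
signal = [math.sin(2 * math.pi * 50 * i / rate) for i in range(200)]
features = window_features(signal, rate)
print(features["peak_hz"])  # 50.0
```

Which features matter is an engineering question, not a coding one. Your technicians usually know which frequencies or load conditions signal trouble on a given machine.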

Choosing the Right AI Model for the Job

The goal is to match the model to the problem, the data you have, and the business outcome you are chasing. Start simple and add complexity only where it is needed.

The best strategy is not to chase the newest, most complex AI algorithm. It is to find the simplest model that can reliably solve your business problem. Over-engineering a solution adds cost and complexity for little gain.

Different modeling approaches are suited for different jobs. Here is a look at the most common techniques and where they fit best.

A Comparison of Predictive Maintenance Modeling Approaches

| Modeling Approach | Best For | Example Manufacturing Use Case | Data Requirement | Complexity |
|---|---|---|---|---|
| Statistical Models | Establishing a normal baseline and flagging unusual deviations (anomaly detection). | Monitoring motor temperature and triggering an alert when it exceeds its normal operating range. | Primarily real-time operational data. | Low |
| Machine Learning | Classifying known failure types when historical failure data is available. | Using past CMMS data to train a model that can distinguish the vibration pattern of a bearing failure from a misalignment issue. | Historical failure data (labeled) and sensor data. | Medium |
| Deep Learning | Uncovering complex, time-dependent patterns in high-frequency sensor data. | Analyzing thousands of data points per second from a CNC machine to predict a critical spindle failure hours in advance. | Large volumes of high-frequency time-series data. | High |

The model you choose is a tool. The real work is in understanding the problem and feeding that tool the right information.

1. Statistical Models for Anomaly Detection

This is often the best place to start. Statistical models are effective at learning what "normal" looks like for a piece of equipment and then flagging anything that falls outside that baseline. They are suitable for getting early wins and spotting unusual behavior without needing a deep history of past failures.

A simple example: a statistical model tracks the current draw of a conveyor motor. If the amperage suddenly spikes beyond its typical range, it triggers an alert for a technician.
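A minimal sketch of that conveyor-motor check might look like the following. It learns a mean and standard deviation from readings taken during healthy operation and flags anything more than three standard deviations away. The amperage values and the three-sigma threshold are assumptions for illustration, not recommended settings.

```python
import statistics


def build_baseline(healthy_readings):
    """Learn what 'normal' looks like from readings taken in healthy operation."""
    return statistics.mean(healthy_readings), statistics.stdev(healthy_readings)


def is_anomalous(reading, baseline, z_threshold=3.0):
    """Flag a reading that sits more than z_threshold deviations from the mean."""
    mean, stdev = baseline
    return abs(reading - mean) > z_threshold * stdev


# Hypothetical current draw (amps) for a conveyor motor running normally.
healthy_amps = [12.1, 11.9, 12.0, 12.2, 11.8, 12.0, 12.1, 11.9]
baseline = build_baseline(healthy_amps)

print(is_anomalous(12.15, baseline))  # False: inside the typical range
print(is_anomalous(15.4, baseline))   # True: sudden spike, alert a technician
```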

2. Machine Learning for Failure Classification

Once you have good historical data that includes specific types of failures, you can use supervised machine learning. Models like Random Forests or Gradient Boosting can be trained to not just spot a problem, but to classify what kind of problem it is.

For instance, you could use past maintenance records and sensor data to train a model to recognize the unique vibration signatures of three known failure modes in a gearbox. Now, the alert is not just "there's a problem," it's "it looks like a bearing failure." This makes the repair plan more precise.
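To show the shape of supervised failure classification without pulling in a machine learning library, the sketch below uses a nearest-centroid classifier as a deliberately simple stand-in for a Random Forest or Gradient Boosting model. The two features, the labeled history, and the third failure mode name are all made up for illustration.

```python
import math


def fit_centroids(labeled_samples):
    """Average the feature vectors recorded for each known failure mode."""
    sums, counts = {}, {}
    for features, label in labeled_samples:
        acc = sums.setdefault(label, [0.0] * len(features))
        for i, value in enumerate(features):
            acc[i] += value
        counts[label] = counts.get(label, 0) + 1
    return {label: tuple(v / counts[label] for v in acc)
            for label, acc in sums.items()}


def classify(features, centroids):
    """Return the failure mode whose centroid is closest to the new reading."""
    return min(centroids, key=lambda label: math.dist(features, centroids[label]))


# Hypothetical labeled history: (vibration RMS, dominant-frequency ratio).
history = [
    ((0.9, 3.1), "bearing"), ((1.1, 2.9), "bearing"),
    ((0.4, 1.0), "misalignment"), ((0.6, 1.2), "misalignment"),
    ((2.0, 0.5), "gear_tooth"), ((2.2, 0.7), "gear_tooth"),
]
centroids = fit_centroids(history)
print(classify((1.0, 2.8), centroids))  # bearing
```

The labels come from your maintenance records, which is why clean CMMS history matters so much for this class of model.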

3. Deep Learning for Complex Pattern Recognition

For your most complex and critical assets, especially those generating massive amounts of high-frequency sensor data, you may need deep learning. Models like Long Short-Term Memory (LSTM) networks are designed to analyze long sequences of data over time, finding subtle patterns that simpler models would miss.

An LSTM network can sift through thousands of data points per second from an industrial press. It can learn to identify the slow, evolving patterns over several hours that are the true precursors to a catastrophic hydraulic system failure. This gives you a much longer window to intervene.

Architecting an AI System for Scale and Reliability

A pilot project on a single machine is a good start, but it is not the same as a successful enterprise-wide rollout. The real test for predictive maintenance in manufacturing is moving from a proof-of-concept to a production-grade system that performs reliably across hundreds of machines in multiple plants.

If you don't build for scale from day one, you may face costly rework and technical debt.

A modern, scalable architecture is about more than prediction accuracy; it’s about reliability and integration. It connects data from the factory floor with the systems your maintenance teams use every day. This means creating a blueprint with robust, repeatable components that is not locked into a single technology.

Core Architectural Components

To build a system that can grow, focus on four foundational pillars. Each one plays a critical role in turning raw data into actionable intelligence.

- Robust Data Ingestion Pipelines: Your system must pull data from various sources—from operational technology (OT) like SCADA systems to information technology (IT) sources like your CMMS. These data pipelines need to handle network issues and different data formats.

- A Centralized Data Platform: A data lakehouse or a similar platform is your single source of truth. It is where all raw and processed data lives, ready for model training, validation, and historical analysis. This approach breaks down data silos.

- An MLOps Framework: MLOps (Machine Learning Operations) automates the entire lifecycle of your models—from training and deployment to monitoring and retraining. It ensures your predictions stay accurate and consistent over time.

- API-Driven Integration: An alert is useless if no one acts on it. The architecture must have APIs that plug directly into your existing EAM/CMMS systems, like SAP PM or Maximo. The goal is to automatically generate work orders from high-confidence alerts.

The objective is to create a closed-loop system. A predicted failure should seamlessly become a scheduled work order in the tool your technicians already use. This focus on workflow separates a science experiment from an operational tool.
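The closed loop can be sketched as a small function that turns a high-confidence alert into a work-order payload. Everything here is hypothetical: the field names, the 0.9 confidence cutoff, and the 72-hour priority rule are placeholders. Real EAM/CMMS products such as SAP PM or Maximo define their own APIs and schemas, which this sketch does not attempt to reproduce.

```python
import json
from dataclasses import asdict, dataclass
from typing import Optional


@dataclass
class Alert:
    asset_id: str
    failure_mode: str
    confidence: float        # model confidence between 0 and 1
    hours_to_failure: float  # estimated time until failure


def to_work_order(alert: Alert, min_confidence: float = 0.9) -> Optional[dict]:
    """Build a work-order payload, or return None for low-confidence alerts."""
    if alert.confidence < min_confidence:
        return None  # leave for human review instead of auto-creating work
    return {
        "asset_id": alert.asset_id,
        "title": f"Predicted {alert.failure_mode} on {alert.asset_id}",
        "priority": "high" if alert.hours_to_failure <= 72 else "normal",
        "diagnostics": asdict(alert),
    }


order = to_work_order(Alert("PRESS-01", "bearing failure", 0.95, 48.0))
print(json.dumps(order, indent=2))
```

Keeping the confidence gate in one place makes it easy to tune how many alerts become automatic work orders as trust in the model grows.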

The Edge vs. Cloud Decision Framework

An important architectural question is where to process data: on the factory floor (the "edge") or in a centralized cloud environment. There is no single right answer; it depends on the use case. A hybrid approach often works best.

Process at the Edge when:

- Latency is critical: For high-speed equipment where a millisecond matters, on-device processing provides the fastest reaction time.

- Data volume is massive: Sending terabytes of raw sensor data to the cloud is expensive. Pre-processing at the edge reduces bandwidth costs.

- Network connectivity is unreliable: For remote sites or plants with spotty internet, edge models can run autonomously without a constant connection.

Process in the Cloud when:

- Large-scale model training is needed: The computational power of the cloud is ideal for training complex models on large historical datasets.

- Combining data from multiple sources: The cloud is the logical place to bring together data from different machines, plants, and enterprise systems to get a holistic view.

- Fleet-wide analytics are the goal: If you want to compare performance across your entire asset portfolio, you need a centralized environment for processing and storage.
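The two checklists above can be expressed as a simple decision helper. This is only a sketch of the reasoning, with illustrative parameter names; a real placement decision would also weigh cost, security, and existing infrastructure.

```python
def recommend_processing(*, latency_critical=False, high_raw_volume=False,
                         unreliable_network=False, needs_large_training=False,
                         combines_sources=False, fleet_analytics=False):
    """Map the edge and cloud criteria to a placement recommendation."""
    edge = latency_critical or high_raw_volume or unreliable_network
    cloud = needs_large_training or combines_sources or fleet_analytics
    if edge and cloud:
        return "hybrid"
    if edge:
        return "edge"
    if cloud:
        return "cloud"
    return "either"


# A high-speed press that also feeds fleet-wide comparisons.
print(recommend_processing(latency_critical=True, fleet_analytics=True))  # hybrid
```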

As you design your system, it’s worth looking into the features of a specialized manufacturing cloud platform, which are often built to handle these industrial data challenges.

According to Precedence Research, the global predictive maintenance market size is projected to reach $25.0 billion by 2035. The conversation has moved beyond "can we build a model?" to "can we run it reliably and at scale?"

This shift means that predictive maintenance must be built with scalability and governance in mind from the beginning. As these AI systems become more critical to operations, so does the need for solid oversight. Using a dedicated platform to govern AI is becoming standard practice. Tools that offer a centralized view of AI model performance and governance, like https://dsg.ai/manageai, become essential.

A Phased Roadmap from Pilot to Full-Scale Production

Rolling out a predictive maintenance program should be a structured, phased approach. This method reduces investment risk, allows you to prove value quickly, and builds momentum for a full-scale deployment.

This four-phase plan is designed to deliver measurable results in weeks, not years. By starting small with a high-impact asset, you can prove the concept and create a repeatable playbook for expansion.

Phase 1: Discovery and Scoping (Weeks 1-2)

The first two weeks are for laying a solid foundation. The goal is not to solve every problem at once, but to pick a single asset where a predictive maintenance win will have an immediate and visible impact.

Success here comes down to three key actions:

- Select a High-Impact Asset: Work with your operations and maintenance crews. Ask them which equipment causes the most problems or costs the most when it goes down. Critical pumps, motors, and fans are often good candidates.

- Define Success Metrics: Be specific about what you want to achieve. Aim for something concrete, like "reduce unplanned downtime on the primary stamping press by 20%" or "get at least 72 hours of advance warning for 80% of bearing failures."

- Conduct a Data Readiness Assessment: You cannot build a model without data. Check the quality and availability of historical information for the chosen asset. Make sure you have enough operational data from control systems and maintenance logs from your CMMS to train a reliable first model.
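A success metric is only useful if you can compute it. As a sketch, the function below measures the share of failures that received at least 72 hours of advance warning, matching the example target above. The event timestamps are invented, and the function name is illustrative.

```python
from datetime import datetime, timedelta


def early_warning_rate(events, min_lead=timedelta(hours=72)):
    """Share of failures whose first alert came at least min_lead beforehand.

    events: list of (first_alert_time_or_None, failure_time) pairs.
    """
    warned = sum(
        1 for alert, failure in events
        if alert is not None and failure - alert >= min_lead
    )
    return warned / len(events)


# Hypothetical bearing failures during a backtest.
events = [
    (datetime(2024, 1, 1, 8), datetime(2024, 1, 5, 8)),    # 96 h of warning
    (datetime(2024, 2, 10, 0), datetime(2024, 2, 13, 0)),  # exactly 72 h
    (datetime(2024, 3, 3, 12), datetime(2024, 3, 4, 12)),  # only 24 h
    (None, datetime(2024, 4, 20, 6)),                      # missed entirely
    (datetime(2024, 5, 1, 0), datetime(2024, 5, 6, 0)),    # 120 h
]
rate = early_warning_rate(events)
print(f"{rate:.0%} of failures had 72+ hours of warning")  # 60%
```

In this made-up backtest the model would fall short of an 80% target, which is exactly the kind of result you want to see before deployment rather than after.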

Phase 2: Iterative Development (Weeks 3-4)

With a clear target, the next two weeks are for rapid model development. This is where data science meets the expertise of your floor personnel. The objective is to build a working first version of the model and test it against historical data.

This means pulling together data sources, building an initial predictive model, and evaluating its output. It is important to maintain a tight feedback loop with the maintenance teams. Their input helps confirm whether the model's predictions are useful and trustworthy.

An effective pilot does not just produce a model; it builds confidence. By validating predictions with the experts who know the equipment, you create the early champions needed to drive broader adoption.

Phase 3: Production Deployment (Weeks 5-6)

Once the model proves its worth, it’s time to put it to work. The focus for weeks five and six is on integrating the system into your existing maintenance workflows. An alert is useless if it does not trigger a response.

This means connecting the model’s output directly to the tools your technicians use, like your CMMS. When the model flags a potential failure with high confidence, it should automatically create a work order, assign it to the right person, and include the necessary diagnostic info. Automating this step turns insight into action without creating more manual work.

Phase 4: Scaling and Optimization

The final phase is about taking what you learned from one successful pilot and turning it into a scalable, enterprise-wide capability. You now have a proven template. The next step is to create a standardized playbook that documents the process, technology, and lessons learned.

This playbook becomes your guide for repeating the success on other assets and across different facilities. A critical piece of this stage is establishing a center of excellence—a dedicated team to manage the AI model lifecycle, refine the deployment process, and ensure consistent governance. As you grow, managing dozens of models becomes a challenge, which is where effective AI model orchestration is vital. To learn more, see our guide on AI orchestration for enterprise systems. This strategic approach drives long-term value.

Common Implementation Pitfalls and How to Avoid Them

Even well-planned predictive maintenance programs can encounter problems. Knowing the common traps from the start is important for success.

The challenge is not just building a good algorithm; it's scaling it from a pilot to a reliable, plant-wide system. Many initiatives get stuck in "pilot purgatory" because they encounter foundational issues with data, people, and processes.

The Challenge of Poor Data Quality

The most common reason predictive maintenance models fail is poor data quality. This is the "garbage in, garbage out" problem. An algorithm cannot learn anything useful if it's fed incomplete maintenance logs, patchy sensor readings, or insufficient historical failure data.

A 2024 analysis of multiple industry reports showed that approximately 60% of implementations are hampered by data quality problems. The way to avoid this is to start with a data readiness assessment before modeling.

How to fix it: Before writing code, put a data governance plan in place. This means getting operations teams to standardize how data is collected, cleaning up old maintenance records, and ensuring sensor data is accurate and consistently formatted.

Failing to Involve Domain Experts

Data scientists may not know the unique operating characteristics of your equipment. Without the knowledge of your maintenance technicians and engineers, a model might flag a statistical anomaly that is actually a normal part of the machine’s cycle. This creates false positives, which erodes trust in the new system.

A model might flag a sudden spike in motor vibration as a problem. A veteran technician knows that spike happens every time a new batch of material is loaded. Without that context, the model learns the wrong lesson.

How to fix it: Make your maintenance and operations experts part of the project team from day one. Their on-the-ground knowledge is valuable for feature engineering—turning raw sensor data into meaningful signals. They are also your best resource for validating whether the model’s predictions make sense. This collaboration builds a smarter model and creates internal champions for adoption.
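One way to encode that kind of technician knowledge is to suppress alerts during known operating events. The sketch below ignores vibration spikes that fall inside material-loading windows. The readings, the threshold, and the window times are invented for the example.

```python
def filter_alerts(readings, threshold, loading_windows):
    """Keep threshold breaches that do NOT coincide with material loading.

    readings: list of (time_in_seconds, vibration_level) pairs.
    loading_windows: list of (start, end) times when a batch is being loaded.
    """
    def during_loading(t):
        return any(start <= t <= end for start, end in loading_windows)

    return [(t, v) for t, v in readings
            if v > threshold and not during_loading(t)]


readings = [(0, 1.0), (10, 4.2), (20, 1.1), (30, 4.5)]
loading_windows = [(8, 12)]  # a technician confirms loading happens here

print(filter_alerts(readings, threshold=3.0, loading_windows=loading_windows))
# [(30, 4.5)]: the spike at t=10 is expected, the one at t=30 is not
```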

Mismanaging Governance and Risk

Once a predictive maintenance system is guiding decisions in your plant, it is a critical business system that falls under corporate governance and regulatory scrutiny. A "black box" model that no one can explain is a risk. If its performance degrades over time, a problem known as model drift, it could miss a critical failure.

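Regulations such as the EU AI Act add to this pressure. For AI systems they classify as high-risk, they impose transparency and record-keeping obligations, so you should be able to explain how your models work and show a clear audit trail.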

How to fix it: Build strong MLOps and model governance practices from the beginning.

- Monitor Performance Continuously: Keep a close eye on your model's accuracy and other key metrics. You want to spot performance drift before it causes a problem.

- Ensure Transparency: Do not settle for black-box models. Use techniques that provide explainability, so you can understand why the model made a specific prediction.

- Maintain an Audit Trail: Log everything. Keep detailed records of model versions, the data they were trained on, and their performance over time. This is essential for internal risk teams and external auditors.
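Continuous monitoring often starts with a check on whether live sensor data still looks like the data the model was trained on. The sketch below computes a Population Stability Index (PSI), one common drift measure. The bin count, the synthetic data, and the 0.25 alert level (a widely used rule of thumb, not a standard) are assumptions.

```python
import math


def psi(expected, actual, bins=5):
    """Population Stability Index between training data and live data."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0

    def shares(values):
        counts = [0] * bins
        for v in values:
            # Clamp values outside the training range into the edge bins.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # A small floor avoids log(0) for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


training = [i / 10 for i in range(100)]  # sensor values seen in training
live = [v + 3 for v in training]         # the same sensor after a shift

print(psi(training, training))      # 0.0: no drift
print(psi(training, live) > 0.25)   # True: worth investigating or retraining
```

Logging each PSI value alongside the model version gives you both the drift signal and a piece of the audit trail.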

By addressing these common issues, you can turn a tech project into a dependable capability that delivers business value.

Frequently Asked Questions

Here are answers to some of the most practical questions leaders have when exploring a predictive maintenance program.

How Much Historical Data Do We Really Need to Get Started?

There is no single number, but a general guideline is 12-24 months of operational and failure data for the specific equipment you're targeting. The quality of that data is more important than the quantity.

You need a dataset that shows examples of normal, healthy operation and documented instances of the exact failures you want to predict. A data readiness assessment during the discovery phase is the only way to know if you have the right data for a reliable model.
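A first-pass readiness check can be as simple as the sketch below. The 12-month minimum comes from the guideline above; the minimum failure count and the completeness threshold are placeholder assumptions that you would set with your own data scientists.

```python
from datetime import date


def readiness_report(first_record, last_record, failure_count,
                     expected_readings, received_readings,
                     min_months=12, min_failures=5, min_completeness=0.95):
    """Summarize whether an asset's history looks sufficient for a first model."""
    months = (last_record - first_record).days / 30.44  # average month length
    completeness = received_readings / expected_readings
    return {
        "months_of_history": round(months, 1),
        "enough_history": months >= min_months,
        "enough_failures": failure_count >= min_failures,
        "sensor_completeness": round(completeness, 3),
        "complete_enough": completeness >= min_completeness,
    }


report = readiness_report(
    first_record=date(2023, 1, 1),
    last_record=date(2024, 6, 30),
    failure_count=3,
    expected_readings=1_000_000,
    received_readings=970_000,
)
print(report)
```

In this hypothetical case the asset has enough history and reasonably complete sensor data, but too few documented failures, which points toward starting with anomaly detection rather than failure classification.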

What’s the Real Difference Between Predictive and Preventive Maintenance?

The key distinction is timing.

- Preventive maintenance is driven by a calendar or usage. You service a machine every 500 hours or every six months, whether it needs it or not. This approach can lead to unnecessary downtime and replacing parts that are still functional.

- Predictive maintenance is driven by the machine's actual health. It uses live data to monitor the equipment and anticipates a failure before it happens. This lets you intervene at the optimal moment.

Essentially, you move from a fixed schedule to an as-needed, data-driven strategy, which is more efficient.

How Do We Get These AI Alerts into Our Team’s Hands?

The insights must trigger action on the floor. The most direct path is to send the AI alerts into the systems your team already uses every day.

A common and effective approach is to connect the AI platform to your Computerized Maintenance Management System (CMMS) or Enterprise Asset Management (EAM) system. When the model flags an upcoming failure with high confidence, it automatically generates a detailed work order in the CMMS.

This ticket can be pre-populated with all the diagnostic data the technician needs, pointing them directly to the problem. It closes the loop from prediction to action without forcing anyone to learn new software.
