Written by:

Editorial Team

The EU AI Act is the world's first comprehensive law for artificial intelligence. Its primary goal is to ensure AI systems used within the European Union are safe, transparent, and trustworthy. The law establishes rules based on the level of risk an AI system could pose to people's health, safety, or fundamental rights.

What Exactly Is The EU AI Act?

The EU AI Act is a set of regulations for developing and deploying artificial intelligence. It works like safety standards for other industries. For example, a high-performance race car must meet more demanding safety requirements than a standard passenger vehicle. The Act applies the same logic to AI, using a risk-based approach to match the level of regulation to the potential for harm.

The regulation's goal is to establish trust in AI technology. By setting clear requirements for data quality, transparency, human oversight, and accountability, the Act aims to create an environment where businesses can adopt AI with confidence and the public can trust its outputs. It also creates a single, predictable set of rules for any company operating across the EU market.

Setting a New Global Standard

This regulation positions Europe as a leader in defining responsible AI. The Act’s influence extends beyond the EU's borders, making its principles relevant for enterprises worldwide.

- Global Reach: Similar to the GDPR, the Act applies to companies that place AI systems on the EU market or use them in the EU, regardless of where the company is located. It also applies to providers and deployers outside the EU when their AI system's output is used in the EU.

- A Focus on People: The framework is built to protect fundamental rights, health, and safety from the potential negative consequences of poorly designed or misused AI.

- Clear Lines of Responsibility: It outlines specific duties for all parties involved, from the providers who develop AI systems to the deployers who use them in their operations.

According to legal analysis, "The EU AI Act introduces a risk-based regulatory framework with the overarching goal of protecting fundamental rights and safety. It brings obligations for all actors across the AI supply chain, including providers, deployers, distributors, users, and importers."

The Countdown to Enforcement Has Begun

The EU AI Act is now law. Following its adoption by the European Parliament and the Council, the regulation entered into force on 1 August 2024, and its obligations apply on a staggered timeline. This approach gives businesses time to prepare while prioritizing the rules for the highest-risk applications.

The rollout is phased. Rules for prohibited AI systems take effect first. Regulations for high-risk systems will follow, providing organizations a window to implement compliance measures. Details on the regulation are available in the European Parliament's official announcement.

Making Sense of the Four AI Risk Categories

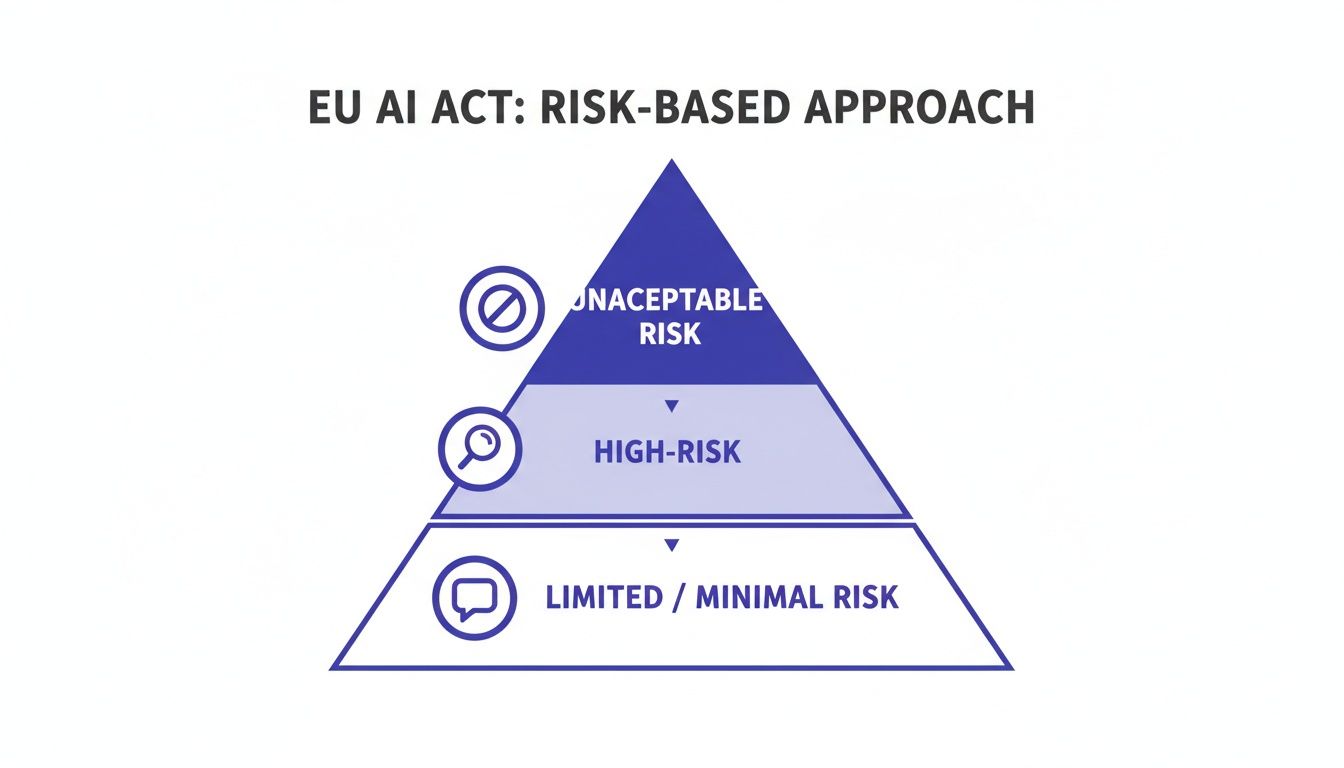

The EU AI Act is built on a single concept: not all AI systems carry the same level of risk. Instead of a uniform set of rules, the regulation classifies AI systems into four distinct tiers. The classification depends on the potential impact on people's safety, rights, and well-being.

This risk-based approach reserves the most significant compliance burdens for applications with the highest potential for harm, leaving most AI development free from new constraints. Determining where a company’s AI tools fit is the first step toward compliance.

This pyramid illustrates the EU AI Act's risk hierarchy, with the most critical category at the top.

As the visual shows, regulatory focus is concentrated on a small fraction of AI systems at the top. The vast majority of applications will face few, if any, new rules.

Unacceptable Risk: The Red Lines

At the top of the pyramid are AI practices considered a direct threat to fundamental human rights. These systems are banned entirely within the EU. The Act specifies which applications are prohibited.

These prohibitions target AI systems with a high potential for manipulation, discrimination, or social harm. Key examples include:

- Social Scoring: Systems that evaluate or classify individuals based on their social behavior or personal characteristics in ways that lead to unjustified or unfavorable treatment. The ban applies to public and private actors alike.

- Subliminal Manipulation: AI that uses subliminal, manipulative, or deceptive techniques to materially distort a person's behavior, often without their awareness, in a way that causes or is likely to cause significant harm.

- Exploiting Vulnerabilities: Systems that target and take advantage of vulnerabilities linked to age, disability, or a specific social or economic situation, for example those of children or people with disabilities.

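- Real-time Biometric Identification: Law enforcement use of real-time remote biometric identification, such as live facial recognition, in publicly accessible spaces is banned, with narrow, tightly controlled exceptions such as searching for victims of abduction or identifying suspects of serious crimes.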

High-Risk AI: The Core Compliance Zone

This is the category that most enterprises need to focus on. High-risk AI systems are not banned but are subject to a strict set of requirements before they can be placed on the market. These are systems where a malfunction or biased output could have serious consequences for a person's safety, livelihood, or fundamental rights.

The Act identifies specific domains and product categories where AI systems are classified as high-risk by default, subject to narrow exceptions.

The high-risk category focuses on AI that can significantly impact a person's life opportunities or physical safety. This includes tools used in hiring, credit decisions, education, and the management of essential infrastructure.

Examples include:

- Recruitment and HR: AI used to screen resumes, rank job applicants, or make hiring decisions.

- Credit Scoring: Systems that evaluate a person's creditworthiness or eligibility for a loan.

- Critical Infrastructure: AI used as a safety component in managing the supply of water, gas, heating, or electricity.

- Medical Devices: Software that assists with diagnostics or recommends treatment plans.

Providers of high-risk AI must satisfy a defined set of requirements: a risk management system, strict data governance, comprehensive technical documentation, automatic record-keeping (logging), effective human oversight, and appropriate accuracy, robustness, and cybersecurity. Deployers have their own, narrower duties, such as using the system according to its instructions and assigning human oversight. An initial risk evaluation is the first step toward understanding these obligations. You can learn more about how to conduct an AI risk assessment with DSG.AI.

Limited Risk: Where Transparency is Key

The third tier, limited-risk AI, focuses on transparency. There are no heavy compliance requirements. The main rule is that people must be informed when they are interacting with an AI system or viewing AI-generated content, so they can make informed decisions.

The logic is simple: a person should not be misled into believing they are interacting with a human or viewing authentic content when they are not.

Common examples of limited-risk AI include:

- Chatbots: Customer service bots must clearly identify themselves as an AI system.

- Deepfakes: Any content generated or manipulated to appear authentic (such as an image, audio, or video) must be labeled as artificially created.
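As a rough illustration of the chatbot rule, the sketch below wraps a reply function so that the first reply in each conversation tells the user they are talking to an AI. It is a minimal sketch, assuming a session identifier is available; `generate_reply`, `DisclosingChatbot`, and the disclosure wording are hypothetical placeholders, and the Act does not prescribe this mechanism.

```python
from typing import Callable

# Hypothetical wording; the Act requires clear disclosure, not specific text.
AI_DISCLOSURE = "You are chatting with an automated AI assistant, not a human."


class DisclosingChatbot:
    """Prefixes the first reply of every session with an AI disclosure."""

    def __init__(self, generate_reply: Callable[[str], str]):
        # generate_reply is a hypothetical stand-in for your model call
        self.generate_reply = generate_reply
        self.disclosed_sessions: set[str] = set()

    def respond(self, session_id: str, message: str) -> str:
        reply = self.generate_reply(message)
        if session_id not in self.disclosed_sessions:
            # First turn of this session: disclose before answering
            self.disclosed_sessions.add(session_id)
            return f"{AI_DISCLOSURE}\n\n{reply}"
        return reply


bot = DisclosingChatbot(lambda msg: f"Echo: {msg}")
print(bot.respond("s1", "Hi"))             # disclosure, then "Echo: Hi"
print(bot.respond("s1", "Order status?"))  # "Echo: Order status?" only
```

Disclosing once per session is a design choice; a label on every message or a persistent interface badge would serve the same purpose.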

Minimal Risk: The Vast Majority of AI

The largest category is minimal-risk AI. This includes the majority of AI systems in use today, from AI-powered spam filters and inventory management tools to recommendation engines in video games. These applications pose little to no threat to people's rights or safety.

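For this group, the EU AI Act imposes no risk-specific obligations. Apart from general duties that apply to all providers and deployers, such as ensuring adequate AI literacy among staff, companies are free to innovate without additional regulatory requirements, though they are encouraged to adopt voluntary codes of conduct for ethical AI.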

Meeting Your Obligations for High-Risk AI

If your business develops or uses AI for critical functions like recruitment or credit scoring, you are operating in the high-risk zone. The requirements in this category demand a structured, auditable approach to AI development and deployment.

The central challenge is translating legal mandates like "risk management" or "human oversight" into specific, actionable items for data science and MLOps teams.

A Practical Risk Management System

The Act requires a continuous risk management system that operates throughout the AI's entire lifecycle. This is an ongoing process, not a one-time check at deployment.

Your team must establish a formal procedure to identify, evaluate, and mitigate potential harms before they occur. This involves asking critical questions at every stage, from initial design to post-market monitoring.

- Identify Foreseeable Risks: Brainstorm potential negative impacts on people's health, safety, or fundamental rights. For a hiring tool, this could mean identifying the potential for gender or ethnic bias in screening candidates.

- Estimate and Evaluate Risks: Quantify the likelihood and severity of these harms. For example, a team might estimate (as a hypothetical figure) that a biased model could incorrectly reject 15 to 20 percent of qualified female applicants.

- Adopt Mitigation Measures: Implement technical and procedural safeguards to reduce those risks. This could involve using fairness toolkits to retrain the model on a more balanced dataset or adding a mandatory human review step for all rejected candidates from underrepresented groups.
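One common way to run the identify, evaluate, and mitigate loop is a risk register scored on a likelihood-times-severity matrix. The sketch below assumes hypothetical 1 to 5 scales and a review threshold of 12; the Act requires a risk management system but does not prescribe this scoring method, and the class and function names are illustrative.

```python
from dataclasses import dataclass


# Hypothetical 1-5 ordinal scales; the Act does not prescribe a scoring method.
@dataclass
class Risk:
    description: str
    likelihood: int  # 1 = rare ... 5 = almost certain
    severity: int    # 1 = negligible ... 5 = critical
    mitigation: str = ""

    @property
    def score(self) -> int:
        # Likelihood x severity, giving a 1..25 risk-matrix score
        return self.likelihood * self.severity


def status(risk: Risk, threshold: int = 12) -> str:
    """Classify a risk against an internally chosen (hypothetical) threshold."""
    if risk.score < threshold:
        return "accept and monitor"
    return "mitigated" if risk.mitigation else "MITIGATION REQUIRED"


register = [
    Risk("Gender bias in resume screening", likelihood=4, severity=5,
         mitigation="Rebalance training data; human review of rejections"),
    Risk("Outdated job-title taxonomy", likelihood=3, severity=2),
    Risk("Ethnic bias via postcode proxy", likelihood=3, severity=5),
]

# Review the register with the highest-scoring risks first
for risk in sorted(register, key=lambda item: item.score, reverse=True):
    print(f"{risk.score:>2}  {risk.description}: {status(risk)}")
```

Keeping the register as data makes it easy to re-score risks at each lifecycle stage and to export the history as evidence for auditors.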

Data Governance and Management

Reliable AI systems are built on high-quality, relevant data. The Act mandates robust data governance for training, validation, and testing datasets.

Your teams must be prepared to demonstrate that the data used was fit for its purpose, free from unfair bias, and representative of the environment where the AI will be deployed.

The EU AI Act makes data governance a legal requirement. In practice, this means handling data with rigor and documentation comparable to a financial audit, so that the inputs to your AI are sound and legally defensible.
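A simple, auditable check for representativeness is to compare each group's share of the training data with its share of the population the system will serve. The sketch below assumes you already know those reference shares and picks a hypothetical 5-point tolerance; `representation_gaps` is an illustrative name, and a real review would cover more attributes and intersections.

```python
from collections import Counter
from typing import Dict, Iterable


def representation_gaps(
    train_groups: Iterable[str],
    reference_shares: Dict[str, float],
    tolerance: float = 0.05,
) -> Dict[str, float]:
    """Return groups whose share of the training data differs from the
    reference (deployment) population by more than `tolerance`.

    Positive values mean over-representation, negative under-representation.
    """
    counts = Counter(train_groups)
    total = sum(counts.values())
    if total == 0:
        raise ValueError("training data is empty")
    gaps = {}
    for group, expected in reference_shares.items():
        observed = counts.get(group, 0) / total
        if abs(observed - expected) > tolerance:
            gaps[group] = round(observed - expected, 3)
    return gaps


# Hypothetical data: a 70/30 training split versus an assumed 50/50 applicant pool
train = ["male"] * 700 + ["female"] * 300
reference = {"male": 0.5, "female": 0.5}
print(representation_gaps(train, reference))  # {'male': 0.2, 'female': -0.2}
```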

For a closer look at strategies in this area, see the resources on navigating AI ethics, EPPA compliance, and risk management in human resources, a field where high-risk AI is common.

Comprehensive Technical Documentation

Before a high-risk system can be placed on the market, you must create and maintain detailed technical documentation. This documentation serves as the primary evidence for auditors and national authorities that you have met your obligations.

It should function as the complete architectural blueprint and operational manual for your AI, clear enough for a third party to understand how the system was built and how it operates.

Your documentation needs to cover:

- The system’s purpose, capabilities, and limitations.

- A detailed description of the methods used to build and test it.

- Specifics about the training, validation, and testing datasets.

- The risk management measures implemented.

- Clear instructions for the user (the deployer) on how to operate the system correctly.
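Treating the documentation as a structured record, rather than a loose collection of files, makes gaps visible before an auditor finds them. The sketch below mirrors the checklist above with hypothetical section names; the regulation's own list of required contents (Annex IV) is more detailed, so treat this as a starting skeleton, not a complete template.

```python
from dataclasses import dataclass, fields


@dataclass
class TechnicalDocumentation:
    # Section names mirror the checklist above; they are illustrative,
    # not the Act's official headings (see Annex IV of the regulation).
    purpose_and_limitations: str = ""
    build_and_test_methods: str = ""
    datasets: str = ""
    risk_management_measures: str = ""
    deployer_instructions: str = ""


def missing_sections(doc: TechnicalDocumentation) -> list[str]:
    """Names of sections that are still empty or whitespace-only."""
    return [f.name for f in fields(doc) if not getattr(doc, f.name).strip()]


doc = TechnicalDocumentation(
    purpose_and_limitations="Ranks applicants for junior roles; not validated for senior hires.",
    datasets="Anonymized 2019-2023 applications; see data sheet v3.",
)
print(missing_sections(doc))
# ['build_and_test_methods', 'risk_management_measures', 'deployer_instructions']
```

A check like this fits naturally into a release pipeline, blocking deployment while any section is empty.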

Human Oversight and Intervention

A critical operational requirement is ensuring effective human oversight. This means designing systems with built-in intervention points that allow a person to step in, override a decision, or shut the system down if necessary. It is not about passively monitoring a dashboard.

These "stop" buttons must be built directly into the AI's workflow. For an AI managing an electrical grid, this might be an alert system that flags anomalies for human review and requires an operator to approve any automated adjustments that fall outside of pre-set safety parameters.
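The grid example can be sketched as a gate that sits between the model and the actuator: in-band adjustments go through, out-of-band ones wait for a human, and a halt switch stops everything. This is a minimal sketch, assuming a hypothetical 110 to 120 kV safety band and a callback standing in for the operator's decision; `OversightGate` and `Adjustment` are illustrative names, not a real grid-control API.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Adjustment:
    substation: str
    voltage_kv: float  # proposed setpoint from the AI controller


class OversightGate:
    """Human-in-the-loop gate for automated grid adjustments (illustrative)."""

    def __init__(self, safe_range_kv: tuple[float, float],
                 operator_approves: Callable[[Adjustment], bool]):
        self.low, self.high = safe_range_kv
        self.operator_approves = operator_approves  # stands in for a human decision
        self.halted = False

    def halt(self) -> None:
        """The 'stop button': block every further automated action."""
        self.halted = True

    def route(self, adj: Adjustment) -> str:
        if self.halted:
            return "rejected: system halted by operator"
        if self.low <= adj.voltage_kv <= self.high:
            return "applied automatically"
        # Outside pre-set safety parameters: a human must approve
        if self.operator_approves(adj):
            return "applied after operator approval"
        return "blocked by operator"


gate = OversightGate((110.0, 120.0), operator_approves=lambda adj: False)
print(gate.route(Adjustment("S1", 115.0)))  # applied automatically
print(gate.route(Adjustment("S2", 131.0)))  # blocked by operator
gate.halt()
print(gate.route(Adjustment("S1", 115.0)))  # rejected: system halted by operator
```

The key design choice is that the gate, not the model, owns the final decision, so oversight cannot be bypassed by a misbehaving model.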

Meeting these high-risk obligations requires a structured plan that integrates these principles directly into your development lifecycle.

When Does This All Kick In? A Look at Timelines and Penalties

The EU AI Act is being implemented now. Understanding the rollout schedule is essential for planning resources and avoiding significant fines. The Act is being phased in, but the deadlines are firm.

Implementation begins with the most urgent provisions. Six months after entry into force, from 2 February 2025, the rules banning prohibited AI practices apply.

Other deadlines follow over the next few years. This tiered approach is designed to help organizations manage compliance in logical stages, starting with the most critical systems.

The Phased Enforcement Schedule

The rollout consists of a series of key dates. Each date brings another part of the Act into full effect.

Here is a simplified breakdown of the major deadlines:

- 6 Months (2 February 2025): The ban on AI systems with Unacceptable Risk goes into effect. This includes applications like social scoring and manipulative AI.

- 12 Months (2 August 2025): The rules for General-Purpose AI (GPAI) models apply. This is significant, as it targets the large foundational models that power many other AI tools.

- 24 Months (2 August 2026): The majority of the Act's rules start to apply, including the requirements for high-risk AI systems in the listed areas such as hiring and credit scoring. This is the most critical deadline for most enterprises.

- 36 Months (2 August 2027): The final provisions apply. These cover high-risk AI systems that are part of, or safety components of, products already governed by existing EU safety laws, such as medical devices.
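For planning, the schedule can be encoded so that dashboards or readiness reports always show which obligations already apply and which deadline is next. The dates below are the application dates in the final text of Regulation (EU) 2024/1689; the labels are simplified, and `milestones_reached` and `next_milestone` are illustrative helper names.

```python
from datetime import date
from typing import Optional, Tuple

# Application dates from the final text of Regulation (EU) 2024/1689,
# which entered into force on 1 August 2024. Labels are simplified.
MILESTONES = [
    (date(2025, 2, 2), "Prohibited AI practices banned"),
    (date(2025, 8, 2), "General-purpose AI model obligations"),
    (date(2026, 8, 2), "Most remaining rules, incl. listed high-risk areas"),
    (date(2027, 8, 2), "High-risk AI in products under existing EU safety law"),
]


def milestones_reached(on: date) -> list[str]:
    """Milestones whose application date is on or before `on`."""
    return [label for start, label in MILESTONES if start <= on]


def next_milestone(on: date) -> Optional[Tuple[date, str]]:
    """The first milestone still ahead of `on`, or None if all have passed."""
    upcoming = [m for m in MILESTONES if m[0] > on]
    return upcoming[0] if upcoming else None


today = date(2025, 9, 1)
print(milestones_reached(today))
print(next_milestone(today))
```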

The work of auditing your AI inventory and classifying systems needs to begin now to be ready for the crucial 24-month deadline.

The High Cost of Getting It Wrong

The EU AI Act includes substantial penalties for non-compliance. Similar to the GDPR, the fines are designed as a serious deterrent and can scale with a company's global revenue.

The EU AI Act's penalty structure is calibrated to enforce compliance across global enterprises. It caps fines at the greater of a fixed euro amount or a percentage of worldwide annual turnover (for SMEs and start-ups, the lower of the two). This makes regulatory adherence a critical business issue for Governance, Risk, and Compliance (GRC) leaders.

Fines are tiered based on the severity of the violation.

Potential penalties include:

- Up to €35 million or 7% of a company’s total worldwide annual turnover for using a prohibited AI system.

- Up to €15 million or 3% of worldwide annual turnover for failing to meet the obligations for high-risk AI and most other requirements.

- Up to €7.5 million or 1% of worldwide annual turnover for providing incorrect, incomplete, or misleading information to authorities.

These figures represent a significant financial risk for any global company, demonstrating the seriousness of enforcement. For more on this topic, see the analysis on morganlewis.com.
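The "greater of" rule is easy to misread, so a short calculation helps when sizing exposure. The sketch below encodes the three tiers as stated in Article 99 of the final text (including the 1% rate for misleading information) and the rule that SMEs and start-ups face the lower of the two caps. It only computes the statutory maximum; actual fines are set by authorities case by case, and `max_fine_eur` is an illustrative name.

```python
# Caps from Article 99 of Regulation (EU) 2024/1689:
# (fixed EUR amount, share of total worldwide annual turnover).
FINE_CAPS = {
    "prohibited_practice": (35_000_000, 0.07),
    "other_obligations": (15_000_000, 0.03),
    "misleading_information": (7_500_000, 0.01),
}


def max_fine_eur(tier: str, worldwide_turnover_eur: float, is_sme: bool = False) -> float:
    """Upper bound of the administrative fine for a violation tier.

    Large undertakings: the higher of the two caps.
    SMEs and start-ups: the lower of the two caps.
    """
    fixed, share = FINE_CAPS[tier]
    turnover_cap = share * worldwide_turnover_eur
    return min(fixed, turnover_cap) if is_sme else max(fixed, turnover_cap)


# Hypothetical company with EUR 2 billion worldwide turnover
print(max_fine_eur("prohibited_practice", 2_000_000_000))
```

For this hypothetical company the turnover-based cap (about €140 million) exceeds the €35 million fixed amount, which is why large enterprises should model exposure from revenue rather than from the headline euro figures.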

Building Your Enterprise Readiness Plan

Preparing your business for the EU AI Act requires a practical, step-by-step roadmap. A structured readiness plan can turn this regulatory challenge into a manageable project that builds more dependable and trustworthy AI.

The first step is to identify your company's complete AI footprint. You cannot govern what you do not know. This requires creating a comprehensive inventory of every AI model, system, and AI-powered feature in use or development across your organization.

This discovery work is the foundation for all subsequent steps. It involves mapping where AI exists in your business, who owns it, what data it uses, and which business processes it affects.

Step 1: Inventory and Classify Your AI Systems

After creating a full inventory, classify each system according to the Act's risk tiers. This process separates high-stakes applications from low-risk tools, allowing you to focus your efforts where they are most needed. For example, is a new logistics model high-risk or minimal-risk?

This classification stage requires a methodical review of each system against the Act’s definitions. You will need to ask:

- Unacceptable Risk: Does this system perform a function that is banned?

- High-Risk: Is it used in one of the eight areas listed in the Act (Annex III), such as hiring, credit scoring, or managing essential infrastructure, or is it a safety component of a product covered by EU product-safety law?

- Limited Risk: Does it interact directly with people (e.g., chatbots) or create synthetic content, which triggers transparency rules?

- Minimal Risk: Does it fall outside the other categories, meaning no specific action is required?
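The four questions can be applied in order, from most to least severe, against each entry in the inventory. The sketch below is a simplified triage aid, assuming each answer has already been determined by a legal review and recorded as a boolean; `AISystem` and `classify` are hypothetical names, and the result is a starting point for that review, not a legal determination.

```python
from dataclasses import dataclass


@dataclass
class AISystem:
    name: str
    owner: str
    prohibited_practice: bool = False     # e.g. social scoring
    high_risk_area: bool = False          # listed area or regulated product
    interacts_or_generates: bool = False  # chatbot or synthetic content


def classify(system: AISystem) -> str:
    """Apply the Step 1 questions in order, from most to least severe."""
    if system.prohibited_practice:
        return "unacceptable"
    if system.high_risk_area:
        return "high"
    if system.interacts_or_generates:
        return "limited"
    return "minimal"


inventory = [
    AISystem("resume-ranker", owner="HR Analytics", high_risk_area=True),
    AISystem("support-chatbot", owner="Customer Care", interacts_or_generates=True),
    AISystem("warehouse-forecast", owner="Logistics"),
]
for s in inventory:
    print(f"{s.name} ({s.owner}): {classify(s)}")
```

One simplification to keep in mind: the tiers are not strictly exclusive. A high-risk system that also talks to people carries transparency duties as well, so a fuller version would return a set of applicable obligations rather than a single label.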

Step 2: Conduct a Thorough Gap Analysis

With your systems classified, you can now conduct a gap analysis. This involves comparing your current practices for high-risk AI against the Act's specific requirements. This diagnostic identifies where your organization is non-compliant and what needs to be fixed.

For instance, a data science team might have excellent model validation techniques, but their documentation process may not meet regulatory standards. A gap analysis reveals these discrepancies and creates a concrete to-do list. This is often where an outside perspective, such as from Regulatory Compliance Consulting Services, can be beneficial.
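At its simplest, a gap analysis is a set difference between the controls the Act requires and the controls you can evidence today. The control names below are hypothetical shorthand for the high-risk requirements discussed earlier; derive your real list from a legal review. In this example the team has strong validation but no compliant documentation, so documentation shows up as a gap.

```python
# Hypothetical control names; derive the real list from your legal review.
REQUIRED_CONTROLS = {
    "risk_management_system",
    "data_governance",
    "technical_documentation",
    "logging",
    "human_oversight",
    "accuracy_robustness_cybersecurity",
}


def gap_analysis(implemented: set[str]) -> list[str]:
    """Required controls not yet in place, sorted for a stable to-do list."""
    return sorted(REQUIRED_CONTROLS - implemented)


current = {"data_governance", "human_oversight", "logging"}
print(gap_analysis(current))
# ['accuracy_robustness_cybersecurity', 'risk_management_system', 'technical_documentation']
```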

Step 3: Establish a Formal AI Governance Framework

Compliance must become an integrated part of your company's operations. This requires establishing a formal AI governance framework with clear roles and responsibilities. For example, who is ultimately responsible for the fairness of a hiring algorithm? Who signs off on the risk assessment for a new AI diagnostic tool?

An effective AI governance framework creates clear lines of ownership. It designates specific individuals or committees responsible for oversight, risk management, and ensuring that all AI systems align with both regulatory mandates and the company’s ethical principles.

This framework should include:

- An AI Review Board: A cross-functional team of experts to oversee AI strategy and approve high-risk projects.

- Defined Roles: Clear responsibilities for data scientists, ML engineers, product managers, and legal teams.

- Standardized Processes: Formal, repeatable procedures for risk assessments, model documentation, and post-deployment monitoring.
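Governance rules become enforceable when they are encoded as a deployment gate. The sketch below assumes a hypothetical policy in which a high-risk project needs a named accountable owner, a signed risk assessment, and AI Review Board approval before go-live; the field names and the policy itself are illustrative, not requirements quoted from the Act.

```python
from dataclasses import dataclass


@dataclass
class Project:
    name: str
    risk_tier: str  # e.g. "high", "limited", "minimal"
    accountable_owner: str = ""
    risk_assessment_signed_by: str = ""
    board_approved: bool = False


def deployment_blockers(p: Project) -> list[str]:
    """Hypothetical gate: list what still blocks go-live for a high-risk project."""
    if p.risk_tier != "high":
        return []
    blockers = []
    if not p.accountable_owner:
        blockers.append("no accountable owner")
    if not p.risk_assessment_signed_by:
        blockers.append("risk assessment not signed off")
    if not p.board_approved:
        blockers.append("AI Review Board approval missing")
    return blockers


project = Project("ai-diagnostic-tool", "high", accountable_owner="Head of Clinical AI")
print(deployment_blockers(project))
```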

Step 4: Implement Technical and Procedural Controls

The final step is to implement the technical and procedural controls identified during your gap analysis. This involves providing your teams with the tools and workflows needed to maintain compliance.

This could mean deploying new software to monitor model drift, creating standard templates for technical documentation, or establishing mandatory human review checkpoints for sensitive AI-driven decisions. These controls are the mechanisms that keep your AI systems safe, transparent, and compliant throughout their lifecycle.
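As one example of a technical control, drift monitoring often uses the Population Stability Index (PSI), which compares the distribution of a feature in live traffic with a reference sample. The sketch below is a minimal pure-Python version, assuming equal-width bins taken from the reference range; the 0.2 alert level is a widely used industry rule of thumb, not a threshold from the Act.

```python
import math


def population_stability_index(expected, actual, bins=10):
    """PSI between a reference sample and a live sample of one numeric feature.

    Bin edges come from the reference sample's range; out-of-range live values
    are clamped into the edge bins, and a small floor avoids log(0).
    """
    if not expected or not actual:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant reference sample

    def shares(values):
        counts = [0] * bins
        for v in values:
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


reference = [float(v) for v in range(100)]  # e.g. last quarter's feature values
live = [float(v) + 50 for v in range(100)]  # a shifted live window
print(population_stability_index(reference, reference))       # 0.0
print(population_stability_index(reference, live) > 0.2)     # True: flag for review
```

Routing a high PSI to a human reviewer, rather than retraining automatically, keeps the control aligned with the human oversight requirement.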

How Our Platform Makes EU AI Act Compliance Practical

Navigating the EU AI Act can be complex. True compliance requires a structured approach to AI governance. We built our platform to embed regulatory readiness directly into the AI lifecycle.

This approach turns a regulatory challenge into a manageable, day-to-day process. It helps de-risk your compliance journey from the start.

Our philosophy is to establish the right architecture first. We help you build a durable, auditable foundation for your AI systems, which simplifies meeting the Act’s requirements. This begins by automating the first steps of a readiness plan.

From Model Inventory to Real Insight

One of the initial challenges for companies is identifying all their AI systems. Manually creating a complete, real-time inventory of all AI models is often difficult. Our tools automate this discovery, building a comprehensive catalog that serves as a single source of truth.

Once the inventory is complete, the platform guides you through risk classification. It provides a structured workflow to evaluate each model against the Act’s criteria, helping you identify which systems are high-risk and require immediate attention.

Based on our experience, automating the initial inventory and classification can reduce the manual work of compliance preparation by an estimated 40 to 50 percent. This allows your teams to focus on mitigating risk and governing AI, rather than just locating it.

Tackling High-Risk Obligations Head-On

For systems identified as high-risk, the obligations are extensive, covering everything from data governance to human oversight. Our platform provides specific modules that translate these legal requirements into operational workflows.

Here is how we address some of the key mandates:

- Automated Documentation: The platform automatically generates much of the required technical documentation. It tracks model development, data lineage, and testing results, ensuring your records are always audit-ready.

- Continuous Monitoring: To meet human oversight rules, our tools continuously monitor model performance, data drift, and fairness metrics. The system flags anomalies and potential biases as they happen, allowing your teams to intervene proactively.

- Risk Management Workflows: We have built in repeatable workflows for conducting and documenting risk assessments. This provides a clear, consistent process for identifying and mitigating potential harms throughout the AI lifecycle.

This integrated approach makes compliance a continuous, automated part of how you build and manage AI. By providing a unified command center for your entire AI portfolio, our solution makes it easier to manage risk and demonstrate accountability to regulators.

To see these capabilities in action, learn more about the DSG.AI integrated platform for AI management and governance at https://dsg.ai/manageai.

A Few Common Questions About the EU AI Act

Specific questions often arise when leadership begins to examine the details of compliance. Here are answers to some common questions from business leaders.

We’re Not Based in the EU. Does the Act Still Apply to Us?

Very likely, yes. The Act has extraterritorial scope, meaning its rules apply beyond the physical borders of the European Union. This is similar to the GDPR.

If your AI system is sold or made available in the EU market, or if the output produced by your AI is used within the EU, you are subject to the regulation. Your company's headquarters location does not matter.

What's the Difference Between a "Provider" and a "Deployer"?

The Act defines specific roles and responsibilities. Identifying your company's category is the first step in understanding your compliance tasks.

- A provider is the entity that develops an AI system and places it on the market under its own name. Providers have the most significant compliance burden, particularly for high-risk systems.

- A deployer is a business "user": any organization using an AI system in a professional context. Deployers have their own duties, which primarily involve proper use, monitoring, and ensuring human oversight.

Are Open-Source AI Models Exempt from the Rules?

Not entirely. The Act tries to avoid hindering innovation in the open-source community, but its exemptions are narrow and generally apply only to models released under a free and open-source license.

When those models are used in a commercial activity or fall into a prohibited or high-risk category, the rules apply.

Keep this in mind: if you build a commercial, high-risk application on top of an open-source model, your application must fully comply with the EU AI Act. The original model's open-source license does not exempt your application.

DSG.AI delivers enterprise-grade AI solutions that create measurable business value. Our integrated Responsible AI and GRC product suite helps teams meet governance standards and emerging regulations like the EU AI Act. See how we turn data into a competitive advantage by exploring our projects at https://www.dsg.ai/projects.