Written by:

Editorial Team

When building an enterprise AI program, data security and privacy are foundational. A secure approach builds trust and delivers business value. A flawed approach exposes the organization to financial and reputational damage.

These principles ensure the sensitive information that trains your AI models remains protected while respecting individual privacy rights.

Why Data Security Is Mission-Critical for AI

The primary challenge for technology leaders is launching AI projects without compromising data protection. An AI model is only as effective as the volume and quality of the data it can access, and that dependency is why the data must be managed precisely.

Consider an AI model as a high-performance engine and data as its fuel. A racing car needs high-octane fuel to perform. Similarly, an AI model requires relevant, secure, and well-governed data to produce expected business outcomes.

The Link Between AI and Risk

An AI model without robust safety controls is a liability. Data security and privacy controls are the roll cage and braking system that prevent operational failure.

A single data breach or privacy violation can trigger significant consequences. According to a 2023 IBM report, the global average cost of a data breach has reached $4.45 million. This figure represents the tangible risk associated with each deployed AI system.

An AI-driven organization must treat its data governance framework with the same seriousness as its financial controls. A proactive security posture is a core element of business strategy, not just an IT function.

This guide provides a roadmap for building that secure foundation. It offers actionable strategies for technology executives, covering AI threat models and operational best practices.

Here is what this guide covers:

- The Modern AI Threat Landscape: New vulnerabilities such as data poisoning and adversarial attacks.

- Security Across the AI Data Lifecycle: Implementing controls from data ingestion to model retirement.

- Privacy-Preserving Techniques: Advanced methods for training models without exposing raw, sensitive data.

- AI Governance and Compliance: Navigating new regulations, including the EU AI Act, and managing third-party risk.

The goal is to provide tools for building an AI program where security and privacy enable long-term, sustainable success.

Understanding the Modern AI Threat Landscape

Standard cybersecurity measures like firewalls and intrusion detection are necessary but insufficient for the challenges introduced by AI. AI systems present a new class of vulnerabilities that can corrupt the logic of your models from within.

The $4.45 million global average cited above rises to more than $10 million per incident in the healthcare sector, according to the same 2023 IBM report. These figures underscore the financial impact when sensitive, AI-processed data is compromised.

The New Frontier of AI-Specific Attacks

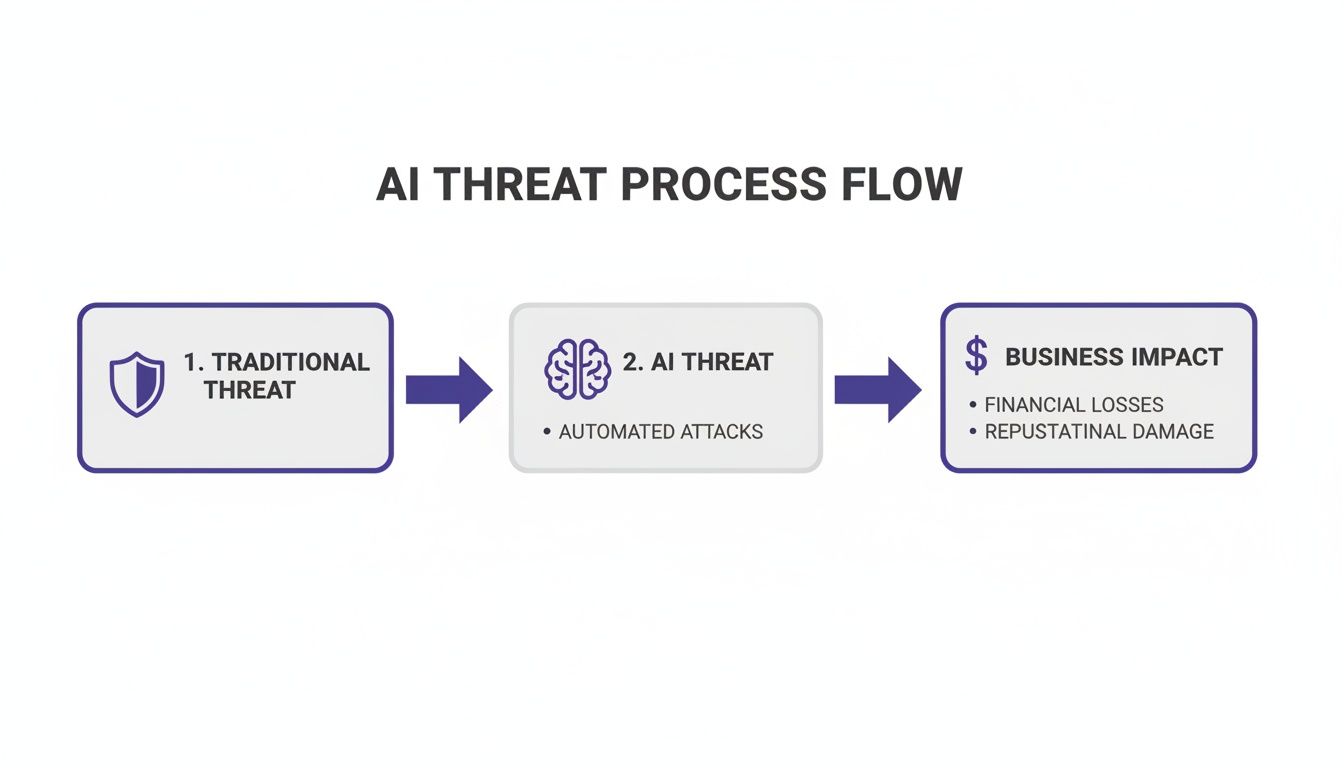

Traditional security focuses on preventing unauthorized access, like a lock on a door. AI security focuses on ensuring the system cannot be manipulated into making poor decisions. Attackers now target the data and algorithms at the core of AI systems.

They can poison the training data or use manipulated inputs to cause model failure. Understanding these new attack vectors is the first step toward building an effective defense.

The core challenge in AI security is that "code" includes both the software and the data the model learns from. An attacker who influences the data can alter the AI's logic without modifying the application code.

The following table compares these new threats to traditional cybersecurity issues.

AI-Specific Threats vs. Traditional Cyber Threats

This table outlines the differences between established threats and new challenges from machine learning.

| Threat Category | Traditional Threat Example | AI-Specific Threat Example (Synthetic) | Business Impact |
|---|---|---|---|
| Data Integrity | An attacker alters records in a customer database via SQL injection. | Data Poisoning: An attacker feeds a logistics model falsified traffic data, causing it to suggest inefficient routes. | Corrupted business intelligence, operational disruption, and financial loss from poor automated decisions. |
| System Evasion | Malware uses obfuscation to bypass antivirus software. | Adversarial Attack: A fraud detection AI misclassifies a slightly altered transaction as legitimate, allowing fraud. | Financial fraud, brand damage, and loss of trust in automated security systems. |
| Data Confidentiality | An attacker breaches a server to steal user passwords. | Model Inversion: An attacker queries a healthcare AI to reconstruct sensitive patient records from its training data. | Severe regulatory fines (GDPR, HIPAA), reputational damage, and exposure of PII or trade secrets. |
| Model Integrity | A malicious insider steals proprietary source code. | Model Stealing: An attacker repeatedly queries a proprietary trading algorithm to reverse-engineer its strategy. | Loss of competitive advantage and intellectual property. |

The focus has shifted from protecting static infrastructure to defending dynamic, data-driven systems.

Key AI Threat Models Explained

To manage data security and privacy in AI, you must understand the threat models. Here are three common and damaging types.

- Data Poisoning: An attacker intentionally feeds a model bad data during its training phase. Synthetic example: A competitor subtly injects flawed information into a public dataset used by your pricing algorithm. The model learns to make incorrect pricing decisions, directly impacting revenue.

- Adversarial Attacks: An attacker targets a fully trained model with inputs that appear normal to humans but are designed to deceive the AI. Synthetic example: A self-driving car's vision system is tricked by strategically placed stickers on a stop sign, causing it to misinterpret it as a speed limit sign.

- Model Inversion and Membership Inference: These are privacy-focused attacks. Attackers attempt to reverse-engineer a model to extract sensitive training data (inversion) or confirm if an individual's data was used (inference). Synthetic example: A model trained on user emails could be attacked to reveal private messages, causing a massive privacy breach.

Securing AI requires protecting data integrity, model logic, and customer trust. This also affects how you manage third-party vendors. A structured approach to third-party risk management is necessary to protect your AI ecosystem.

Implementing Security Across the AI Data Lifecycle

Protecting an AI system requires a security posture that adapts with the data. Data security and privacy must be integrated into every stage, from data collection to model retirement.

Consider it a sensitive supply chain where specific controls are needed at each step. A failure at any single stage can compromise the entire system.

AI can magnify existing vulnerabilities, turning a technical issue into a financial threat.

Securing Data Acquisition and Preparation

The initial stage of data acquisition is critical for a secure foundation. Vulnerabilities introduced here will affect all subsequent phases. The goal is to ensure data is trustworthy, handled securely, and stripped of unnecessary sensitive information before it reaches a model.

Practical steps include:

- Data Minimization: Collect only essential data. A model predicting equipment failure does not need employee birth dates.

- Anonymization and Pseudonymization: Remove or replace personally identifiable information (PII). Synthetic example: A retail model could replace customer names with unique, random IDs, breaking the direct link to an individual.

- Robust Access Controls: Use role-based access control (RBAC) so that only authorized personnel and services can access datasets. Data scientists training a model rarely need full access to the production database.
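The minimization and pseudonymization steps above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not a reference implementation: the field names, the `cust_` prefix, and the `Pseudonymizer` class are all hypothetical, and a real deployment would keep the lookup table in a separate, access-controlled store.

```python
import secrets

# Hypothetical allow-list: the only fields the model is permitted to see.
ALLOWED_FIELDS = {"customer_id", "basket_total", "store_region"}


class Pseudonymizer:
    """Maps each real identifier to a stable, random ID."""

    def __init__(self):
        # The lookup table is itself sensitive. It is held in memory here
        # only to keep the sketch self-contained.
        self._table = {}

    def pseudonym(self, real_value: str) -> str:
        if real_value not in self._table:
            self._table[real_value] = "cust_" + secrets.token_hex(8)
        return self._table[real_value]


def prepare_record(raw: dict, pseudonymizer: Pseudonymizer) -> dict:
    """Swap the customer name for a pseudonym, then drop non-essential fields."""
    record = dict(raw)
    record["customer_id"] = pseudonymizer.pseudonym(record.pop("customer_name"))
    return {k: v for k, v in record.items() if k in ALLOWED_FIELDS}


p = Pseudonymizer()
raw = {"customer_name": "Jane Doe", "birth_date": "1980-01-01",
       "basket_total": 42.50, "store_region": "EU-West"}
clean = prepare_record(raw, p)
print(clean)  # name and birth date are gone; a random customer_id remains
```

Because the mapping is random rather than derived from the name, the pseudonym cannot be reversed without access to the lookup table.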

Fortifying the Model Training Process

Once data is secured, the training process begins. This stage is a target for attacks like data poisoning. Security here involves protecting the integrity of the training environment and the data within it.

A secure training environment is an isolated one. It should be separated from other corporate networks to prevent malicious data injection and accidental data leakage. This ensures the model's learning process remains uncorrupted.

Two necessary controls for this stage are:

- Encryption at Rest and in Transit: All training data must be encrypted, both in storage and while moving between servers. Use protocols like TLS 1.3 for data in transit and AES-256 for data at rest.

- Privacy-Preserving Techniques: For highly sensitive datasets, use methods like Federated Learning. This allows a central model to be trained on decentralized data that never leaves its original location. Synthetic example: Training a predictive health model across multiple hospitals without any of them sharing raw patient records.
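As a small illustration of the in-transit half of the encryption control above, the sketch below uses Python's standard `ssl` module to build a client context that refuses anything older than TLS 1.3. The helper name `strict_tls_context` is hypothetical, and encryption at rest is not covered here.

```python
import ssl


def strict_tls_context() -> ssl.SSLContext:
    """Client-side TLS context that only negotiates TLS 1.3 or newer."""
    # create_default_context() enables certificate and hostname verification.
    ctx = ssl.create_default_context()
    ctx.minimum_version = ssl.TLSVersion.TLSv1_3
    return ctx


ctx = strict_tls_context()
print(ctx.minimum_version)
```

A context like this can be passed to standard clients, for example `urllib.request.urlopen(url, context=ctx)`, so that data pulls from a training data store fail rather than fall back to an older protocol.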

Hardening Model Deployment and Integration

A trained model is deployed into a production environment where it connects with other applications, exposing it to external threats. Deployment security focuses on securing the model's operational environment.

Practical steps for secure deployment include:

- API Security: The model's API is its primary interface. Secure it with strong authentication, rate limiting to blunt denial-of-service attacks and high-volume query attacks such as model stealing, and input validation to block malicious queries.

- Container Hardening: Deploy models in hardened containers with a minimal footprint. Remove unnecessary libraries and services from the container image to reduce the potential attack surface.

- Secure Configuration Management: Do not hard-code secrets like API keys. Manage them through a secure vault and inject them into the environment at runtime.
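Two of the deployment controls above, rate limiting and input validation, can be sketched with the standard library alone. Everything named here is an assumption for illustration: the `TokenBucket` class, the four-feature payload shape, the numeric limit, and the `MODEL_API_KEY` environment variable. A production service would normally enforce these at an API gateway as well.

```python
import math
import os
import time


class TokenBucket:
    """Allows bursts up to `capacity`, refilling at `rate` tokens per second."""

    def __init__(self, rate: float, capacity: int, clock=time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self.clock = clock
        self.tokens = float(capacity)
        self.updated = clock()

    def allow(self) -> bool:
        now = self.clock()
        self.tokens = min(self.capacity, self.tokens + (now - self.updated) * self.rate)
        self.updated = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


def validate_features(payload: dict, expected: int = 4, limit: float = 1e6) -> list:
    """Accept only a fixed-length list of finite, bounded numbers."""
    features = payload.get("features")
    if not isinstance(features, list) or len(features) != expected:
        raise ValueError("features must be a list of %d numbers" % expected)
    for x in features:
        if isinstance(x, bool) or not isinstance(x, (int, float)):
            raise ValueError("features must be numeric")
        if abs(x) > limit or not math.isfinite(x):
            raise ValueError("feature out of range")
    return [float(x) for x in features]


# The secret is read from the environment at runtime (populated by a vault),
# never written into source. The variable name is hypothetical.
API_KEY = os.environ.get("MODEL_API_KEY")
```

One bucket per client or API key keeps a single noisy caller from exhausting capacity for everyone else.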

Establishing Continuous Monitoring and Auditing

An AI model requires ongoing oversight. Its performance can drift, and new vulnerabilities can emerge. Continuous monitoring ensures the model operates securely and as intended after deployment.

This requires tracking both performance and security metrics. Implement comprehensive logging of prediction requests, detailed access logs, and regular audits of security controls. A formal AI risk assessment provides a structured method to identify and address these potential threats.
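One way to turn drift tracking into a number is the Population Stability Index (PSI), which compares the distribution of a feature or score at training time with what the model sees in production. The sketch below is a minimal version with equal-width bins; the function name, bin count, and floor value are assumptions, and alert thresholds are rules of thumb that should be tuned per model.

```python
import math


def psi(expected: list, actual: list, bins: int = 10) -> float:
    """Population Stability Index between a baseline sample and a recent sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # A small floor avoids log(0) for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


baseline = [i / 100 for i in range(100)]   # synthetic training-time scores
recent = [v + 0.5 for v in baseline]       # synthetic shifted production scores
print(psi(baseline, baseline), psi(baseline, recent))
```

Identical samples score zero and the score grows as the distributions diverge, which makes it a convenient metric to log and alert on alongside access logs.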

Mastering Privacy-Preserving Machine Learning Techniques

Strong data security and privacy controls enable innovation. Training powerful AI models often requires sensitive data. The challenge is to use this data without exposing the raw information. Privacy-Enhancing Technologies (PETs) allow for value extraction while protecting individual privacy.

PETs are designed to permit complex analysis and model training on data that remains protected. Using these techniques demonstrates a mature AI strategy and builds trust with customers and regulators.

Unlocking Insights with Differential Privacy

Differential Privacy provides a mathematical guarantee that an analysis result reveals almost nothing about whether any single individual's data was included in the dataset. It works by injecting a calibrated amount of statistical noise into the data or query results.

This noise blurs individual data points enough to protect privacy while preserving the accuracy of aggregate insights. This makes it a useful tool for releasing public datasets or running internal analytics without exposing individuals.
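A minimal sketch of this idea is the Laplace mechanism applied to a counting query. Adding or removing one person changes a count by at most 1, so noise drawn from a Laplace distribution with scale 1/epsilon is enough for an epsilon-differentially-private release. The function names and the sample data are hypothetical, and naive floating-point sampling like this has known weaknesses, so production systems typically rely on a vetted library.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Sample Laplace(0, scale) as the difference of two exponential draws."""
    return rng.expovariate(1 / scale) - rng.expovariate(1 / scale)


def private_count(values, predicate, epsilon: float, rng: random.Random) -> float:
    """Counting query (sensitivity 1) released with Laplace noise of scale 1/epsilon."""
    true_count = sum(1 for v in values if predicate(v))
    return true_count + laplace_noise(1 / epsilon, rng)


rng = random.Random(0)  # seeded only to make the demo repeatable
ages = [23, 35, 41, 52, 67, 70, 29, 58]  # synthetic data
print(private_count(ages, lambda a: a > 50, epsilon=1.0, rng=rng))
```

A smaller epsilon means more noise and stronger privacy; a larger epsilon means a more accurate but less private answer. Choosing that trade-off is a policy decision as much as a technical one.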

Training Models Locally with Federated Learning

Federated Learning enables collaborative model building in regulated fields like healthcare or finance. The core concept is to train a shared AI model using data that never leaves its original location. The raw data remains local; only the model's learned updates are shared.

Synthetic example: A group of hospitals wants to build an AI model to predict patient health risks. Privacy laws like HIPAA prevent pooling this data centrally.

With federated learning:

- A generic base model is sent to each hospital's local server.

- Each hospital trains this model using its own private patient data.

- The model updates—mathematical adjustments from the data—are encrypted and sent to a central server. The raw patient data never moves.

- These updates are aggregated to improve the central model, which is then sent back for another round of local training.

The result is an accurate model trained on the collective data of all hospitals, without any patient records leaving their source.
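The round-trip described above can be sketched with a deliberately tiny model: a single weight fitted to synthetic data held by three sites. This follows the general federated averaging pattern under simplifying assumptions. The data, learning rate, and function names are made up for illustration, and the encryption of updates mentioned above is omitted.

```python
import random


def local_update(weight: float, data: list, lr: float = 0.05, epochs: int = 5) -> float:
    """One site's step: gradient descent on its own (x, y) pairs for y = weight * x."""
    for _ in range(epochs):
        grad = sum(2 * (weight * x - y) * x for x, y in data) / len(data)
        weight -= lr * grad
    return weight


def federated_round(global_weight: float, sites: list) -> float:
    """Server step: average the returned weights, weighted by each site's sample count."""
    total = sum(len(data) for data in sites)
    return sum(local_update(global_weight, data) * len(data) for data in sites) / total


rng = random.Random(42)
TRUE_SLOPE = 3.0  # synthetic ground truth for the demo
sites = [
    [(x, TRUE_SLOPE * x + rng.gauss(0, 0.1)) for x in (rng.uniform(0, 2) for _ in range(n))]
    for n in (30, 50, 80)  # three "hospitals" with different data volumes
]

weight = 0.0
for _ in range(20):
    weight = federated_round(weight, sites)
print(weight)  # should land close to TRUE_SLOPE
```

Only `weight` values cross the boundary between a site and the server; the `(x, y)` pairs never leave the list that owns them. Real deployments usually add secure aggregation or differential privacy on top, because model updates can still leak information.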

Computing on Encrypted Data with Homomorphic Encryption

Homomorphic Encryption allows computations to run directly on encrypted data without decrypting it first. The result remains encrypted and, when decrypted, matches the result of the same computation on the raw data.

This is like working with a locked box that has special gloves allowing you to manipulate the contents without seeing them. Only the keyholder can open the box to see the final result.

This technique is useful for secure cloud computing where a third party processes data without having access to it. Synthetic example: A bank could use a cloud service to perform risk analysis on encrypted customer portfolios. The cloud provider performs the computations but has zero visibility into the financial data.
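To make the idea concrete, here is a toy version of the Paillier cryptosystem, which is additively homomorphic: multiplying two ciphertexts yields an encryption of the sum of their plaintexts. It is a partial scheme, not fully homomorphic encryption, and the key below is built from two small, publicly known primes purely for illustration. Nothing here is suitable for real data; a production system would use an audited library and much larger keys.

```python
import math
import secrets

# Toy key from two well-known Mersenne primes. Far too small for real security.
P, Q = 2**31 - 1, 2**61 - 1
N = P * Q
N_SQ = N * N
LAMBDA = (P - 1) * (Q - 1) // math.gcd(P - 1, Q - 1)  # lcm(p - 1, q - 1)
MU = pow(LAMBDA, -1, N)  # valid because the generator is g = n + 1


def encrypt(m: int) -> int:
    """Paillier encryption with g = n + 1: c = (1 + n)^m * r^n mod n^2."""
    while True:
        r = secrets.randbelow(N - 1) + 1
        if math.gcd(r, N) == 1:
            break
    return (pow(N + 1, m, N_SQ) * pow(r, N, N_SQ)) % N_SQ


def decrypt(c: int) -> int:
    """Recover m = L(c^lambda mod n^2) * mu mod n, where L(x) = (x - 1) // n."""
    return ((pow(c, LAMBDA, N_SQ) - 1) // N) * MU % N


def add_encrypted(c1: int, c2: int) -> int:
    """Multiplying ciphertexts adds the underlying plaintexts."""
    return (c1 * c2) % N_SQ


# The "cloud" sees only ciphertexts; the key holder decrypts the total.
portfolio_a, portfolio_b = 125_000, 340_000  # synthetic values
encrypted_total = add_encrypted(encrypt(portfolio_a), encrypt(portfolio_b))
print(decrypt(encrypted_total))
```

In the bank scenario, the cloud provider would run `add_encrypted` without ever holding `LAMBDA` or `MU`, so it computes the total while learning nothing about the individual portfolios.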

Integrating these advanced techniques helps build systems that are both intelligent and trustworthy.

Navigating AI Governance and Regulatory Compliance

Deploying AI requires a governance framework. Without structured oversight, AI projects can introduce unacceptable risks. For AI, effective data security and privacy depend on a governance model that satisfies regulators and earns customer trust.

This framework defines responsibilities, decision-making processes, and methods for demonstrating compliance. With regulations like the EU AI Act setting new standards, a governance framework is now a requirement to operate.

The Three Pillars of a Strong AI Governance Framework

A dependable AI governance strategy is built on three core pillars:

- Accountability: Establish clear lines of ownership. Define who approves a model for production and who is responsible if a model produces biased results. This involves creating an AI review board, assigning data stewards, and having a clear process for risk assessment and incident response. A human must be answerable for the machine's decisions.

- Transparency: Maintain meticulous documentation for each model, including its training data, purpose, and known limitations. This also requires explainability: using tools to show why a model made a specific decision. This is essential for debugging, audits, and user trust.

- Fairness: Actively identify and mitigate bias in training data and model outputs. This includes running statistical tests to check for disparate impacts on different demographic groups and implementing plans to correct biases when they are found.
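As one example of a disparate impact check under the fairness pillar, the sketch below computes the selection rate for each group and the ratio of the lowest rate to the highest. The group labels, the data, and the function names are synthetic. A ratio below 0.8 is often treated as a flag for further review, following the "four-fifths" convention from US employment guidance; that threshold is an assumption here, not a legal test for every context.

```python
from collections import defaultdict


def selection_rates(records: list) -> dict:
    """Share of favorable outcomes per group; each record is (group, approved)."""
    totals, favorable = defaultdict(int), defaultdict(int)
    for group, approved in records:
        totals[group] += 1
        favorable[group] += int(approved)
    return {g: favorable[g] / totals[g] for g in totals}


def disparate_impact_ratio(records: list) -> float:
    """Lowest group selection rate divided by the highest."""
    rates = selection_rates(records)
    return min(rates.values()) / max(rates.values())


# Synthetic model decisions: group A approved 60 of 100, group B approved 30 of 100.
records = ([("A", True)] * 60 + [("A", False)] * 40 +
           [("B", True)] * 30 + [("B", False)] * 70)
ratio = disparate_impact_ratio(records)
print(selection_rates(records), ratio)
```

A single ratio is a screening signal rather than a verdict. It tells the review board where to look, and the correction plan still needs human judgment.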

Managing Risk in the AI Supply Chain

Governance extends to the entire AI supply chain, which often includes third-party vendors, external datasets, and pre-trained models. Each component is a potential source of risk.

Synthetic example: If your customer service chatbot uses a third-party sentiment analysis API, a security flaw in that API could expose your customer conversations. A disciplined approach to third-party risk management (TPRM) is a critical part of AI governance.

The interconnected nature of AI means your security is only as strong as your weakest partner. Vetting vendors for their data security practices is as important as evaluating their technology's performance.

Data from IBM's 2023 "Cost of a Data Breach Report" shows that breaches originating from third parties account for 15% of all data breaches and add an average of $360,000 to the total cost. Understanding AI-driven compliance risk assessment strategies is essential for managing these risks. You can find more data on the financial impact of third-party breaches in IBM's comprehensive report.

A compliant AI program requires examining both internal practices and the external ecosystem. Proactive governance allows you to use AI's full potential while staying within legal and ethical boundaries.

Your Actionable Checklist for Secure AI Implementation

This checklist provides specific, measurable actions to integrate data security and privacy at every stage of the AI lifecycle. Use it to assess current AI projects or establish a foundation for new ones.

Each step is a checkpoint for managing risk and ensuring responsible innovation.

Secure AI Implementation Checklist

| Phase | Action Item | Key Metric/Outcome |
|---|---|---|
| 1. Strategy & Governance | Establish a cross-functional AI Governance Body (Legal, IT, Business). | Documented AI charter and policies; clear ownership for risk decisions. |
| | Define and document your AI Risk Management Framework and threat models. | A formal risk assessment is completed and approved for every new AI project. |
| 2. Data Management | Implement a strict data classification policy (e.g., Public, PII). | 100% of training datasets are classified before use; access controls are verified. |
| | Enforce data minimization and automated PII anonymization. | Reduction in sensitive data storage; audit trail shows PII was removed. |
| 3. Model Development | Mandate privacy-preserving techniques (e.g., federated learning) for all models trained on sensitive data. | Documented proof of privacy technique implementation in model architecture. |
| | Secure the model development environment with strict access controls. | Zero unauthorized access incidents to model code repositories or training servers. |
| 4. MLOps & Deployment | Implement automated security scanning (e.g., for vulnerabilities in dependencies) in the CI/CD pipeline. | Number of critical vulnerabilities detected and patched before deployment. |
| | Deploy continuous monitoring tools for production models to detect drift, bias, and adversarial attacks. | Mean Time to Detect (MTTD) for production anomalies is under 1 hour. |
| 5. Ongoing Operations | Conduct regular red-teaming and penetration testing on live AI systems. | Number of new vulnerabilities discovered per quarter; time-to-remediate. |
| | Maintain a comprehensive model inventory and data lineage records. | Ability to trace any model's prediction back to the specific data it was trained on. |

This checklist is about building a culture of security. By embedding these actions into your workflow, you shift from a reactive to a proactive posture where security is a fundamental part of innovation.

Frequently Asked Questions About AI Data Security

Here are answers to common questions from technology and compliance leaders about implementing AI security and privacy controls.

How do we integrate new AI security controls with legacy IT systems?

A phased approach is effective. You do not need to replace all legacy infrastructure at once. Start by creating isolated environments for AI development and training, cordoned off from your core network. This reduces the attack surface.

Use secure APIs as controlled bridges between new AI models and legacy systems. Synthetic example: A legacy inventory system can feed data to a new AI forecasting model through a hardened API gateway. All data in transit is encrypted and validated, but neither system exposes its internal architecture to the other.

What is the best way to communicate AI-related risks to the board?

Translate technical security risks into business impact. Focus on revenue, cost, and reputation.

Frame the conversation around three key areas:

- Financial Impact: Quantify the potential cost of a data breach involving AI. Citing the average $4.45 million cost of a breach, based on the 2023 IBM report, provides a clear benchmark.

- Reputational Damage: Present a scenario. Explain how a privacy failure or a biased algorithm could damage customer trust and the brand. Synthetic example: An AI pricing model that sets different prices based on demographics, leading to public backlash.

- Regulatory Penalties: Connect regulations like GDPR or the EU AI Act to your AI systems and specify potential fines.

Frame AI security to the board as an investment that protects the company's future revenue and market standing.

How often should we audit our AI models for security vulnerabilities?

The audit cadence depends on the model's criticality.

For a high-risk model, such as one used in medical diagnostics or fraud detection, conduct a comprehensive audit at least quarterly and after any major update. For lower-risk models, a semi-annual review may be sufficient.

Between formal audits, run automated security monitoring in real time on all production models. Such monitoring provides early warnings for issues like data drift or adversarial attacks.

DSG.AI helps organizations design and deploy production-grade AI solutions tailored to their unique data and business processes. Learn more about our projects at https://www.dsg.ai/projects.