Written by:

Editorial Team

Software predictive maintenance uses real-time data and AI to forecast equipment failures before they happen. This allows maintenance teams to schedule repairs proactively, preventing unplanned downtime and maximizing asset performance. It is a strategic shift in how a company manages its operational equipment.

Shifting From Reactive Repairs To Predictive Insight

For years, the default maintenance strategy was to wait for something to break. When a critical machine failed, production stopped, and teams scrambled for emergency repairs. This "run-to-failure" model is inefficient, creates operational risk, and makes budgets unpredictable.

This reactive mode prevents maintenance teams from planning strategically. They are always managing the latest crisis, leaving no room for proactive work. The costs accumulate from overtime labor, expedited parts shipping, and revenue lost from an idle production line.

Moving Beyond Scheduled Fixes

The next step up was preventive maintenance, which works on a fixed calendar. It is better than waiting for a complete breakdown, but this schedule-based approach often leads to replacing functional parts simply because the calendar says they are due. The strategy prevents some failures, but it adds its own costs and wastes resources.

To understand the shift, it helps to understand the core differences between predictive and preventive maintenance. Software-driven predictive maintenance (PdM) offers a more intelligent path forward.

By analyzing continuous data streams from equipment, PdM software identifies subtle warning signs of a potential failure. It is the difference between a doctor treating a heart attack and a doctor using health data to prevent one.

This foresight turns maintenance from a cost center into a competitive advantage. Instead of guessing when a machine might fail, teams can act with data-backed confidence. This precision allows for well-timed interventions that extend asset life and keep operations running smoothly.

A Comparison Of Maintenance Strategies

This table breaks down the core differences in triggers, costs, and efficiency for each maintenance approach.

| Strategy | Trigger | Cost Impact | Operational Efficiency |
|---|---|---|---|
| Reactive | Equipment Failure | Very High: Unplanned downtime, emergency repairs, overtime. | Very Low: Frequent disruptions, production loss. |
| Preventive | Fixed Schedule (Time/Usage) | Moderate: Unnecessary parts/labor, some downtime. | Moderate: Reduces some failures but not all. |
| Predictive | Real-Time Condition Data & AI Forecasts | Low: Planned repairs, optimized parts usage, no emergencies. | High: Maximized uptime, extended asset life. |

The move toward a predictive model is a clear improvement in both financial and operational terms.

This data-first approach is built on a few key pillars:

- Real-time Data Collection: IoT sensors and system logs provide a constant stream of operational data, such as temperature, vibration, and pressure readings.

- Machine Learning Models: AI algorithms analyze the data to find patterns and predict an asset's remaining useful life (RUL).

- Actionable Alerts: When the system flags a potential failure, it sends a specific alert so teams can schedule repairs during a planned shutdown.
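Here is a rough illustration of how these three pillars connect. The sketch below fits a straight line to a single health indicator, such as vibration amplitude, and extrapolates when that line will cross a failure threshold. The result is a crude RUL estimate. If the estimate falls inside the maintenance planning window, the code raises an alert.

Several pieces are hypothetical:

- the indicator values, the threshold, and the lead time;
- the `estimate_rul`, `check_asset`, and `Alert` names.

Real PdM platforms use trained ML models rather than a linear fit.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Alert:
    asset_id: str
    rul_hours: float
    message: str


def estimate_rul(times_h: list[float], values: list[float], failure_threshold: float) -> float:
    """Fit a least-squares line to a rising health indicator and return the hours
    left until it reaches failure_threshold (inf if the trend is flat or falling)."""
    n = len(times_h)
    if n < 2 or n != len(values):
        raise ValueError("need at least two paired readings")
    mean_t = sum(times_h) / n
    mean_v = sum(values) / n
    cov = sum((t - mean_t) * (v - mean_v) for t, v in zip(times_h, values))
    var = sum((t - mean_t) ** 2 for t in times_h)
    if var == 0:
        raise ValueError("readings must span more than one timestamp")
    slope = cov / var
    if slope <= 0:
        return float("inf")  # no upward degradation trend, so no predicted crossing
    intercept = mean_v - slope * mean_t
    t_fail = (failure_threshold - intercept) / slope  # time at which the trend hits the threshold
    return max(0.0, t_fail - times_h[-1])


def check_asset(asset_id: str, times_h: list[float], values: list[float],
                failure_threshold: float, lead_time_h: float) -> Optional[Alert]:
    """Return an Alert if the estimated RUL falls inside the planning lead time."""
    rul = estimate_rul(times_h, values, failure_threshold)
    if rul <= lead_time_h:
        return Alert(asset_id, rul, f"Schedule repair within {rul:.0f} h")
    return None
```

Consider readings taken every 24 hours that climb from 2.0 to 2.8, with a threshold of 4.0. The call `check_asset("pump-07", [0, 24, 48, 72, 96], [2.0, 2.2, 2.4, 2.6, 2.8], 4.0, 168)` returns an alert with an estimated RUL of about 144 hours. That is inside a one-week planning window, so the repair can be scheduled during a planned shutdown.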

This approach enables leaders to make data-driven decisions, moving their organizations out of the break-fix cycle and toward a higher standard of operational efficiency.

The Real-World Business Impact of Predictive Maintenance

To get executive buy-in for new technology, you need to show tangible results. For software predictive maintenance, the business impact is direct and measurable, with positive effects on operations, finance, and workplace safety. The value comes from turning data into preventive action.

This shift from reactive repairs to data-driven foresight is driving market expansion. The predictive maintenance market, powered by intelligent software, is expected to grow from USD 10.6 billion to USD 47.8 billion by 2029, according to Fortune Business Insights. This growth reflects rising demand for AI and ML tools that help prevent equipment failures. You can find more data on this market expansion and its drivers.

The most immediate financial gains come from reducing downtime and optimizing maintenance spending. Industry reports from sources such as Deloitte and McKinsey cite reductions of up to 40% in maintenance costs and up to 70% in unplanned outages for companies that implement PdM. For asset-heavy industries, savings on that scale can amount to millions of dollars.

Putting a Number on the Financial Gains

Here is a hypothetical example to illustrate the financial impact. Imagine a large manufacturing plant where a critical production line averages 10 hours of unplanned downtime per month. Each hour of downtime costs the business $150,000 in lost revenue. That is a $1.5 million loss every month.

Now, the plant implements a software predictive maintenance solution. In the first year, they reduce unplanned downtime by 60%. This saves six hours of downtime per month. The calculation is simple: $900,000 in recovered revenue monthly, which adds up to $10.8 million over the year. This figure does not include savings from reduced overtime labor and rush-shipping for emergency parts.
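The arithmetic in this example is easy to reproduce and adapt to your own figures. The sketch below uses the same hypothetical inputs, and the function name is illustrative. Like the example, it counts recovered revenue only. It does not include the overtime and expedited-shipping savings mentioned above.

```python
def downtime_savings(hours_per_month: float, cost_per_hour: float, reduction: float) -> dict:
    """Revenue recovered by cutting unplanned downtime by `reduction` (a fraction 0-1)."""
    hours_saved = hours_per_month * reduction
    recovered_monthly = hours_saved * cost_per_hour
    return {
        "baseline_monthly_loss": hours_per_month * cost_per_hour,
        "hours_saved_per_month": hours_saved,
        "recovered_monthly": recovered_monthly,
        "recovered_annual": recovered_monthly * 12,
    }


# Inputs from the example above: 10 h/month, $150,000 per hour, 60% reduction.
result = downtime_savings(hours_per_month=10, cost_per_hour=150_000, reduction=0.60)
print(f"Baseline loss per month: ${result['baseline_monthly_loss']:,.0f}")
print(f"Recovered per month:     ${result['recovered_monthly']:,.0f}")
print(f"Recovered per year:      ${result['recovered_annual']:,.0f}")
```

With these inputs the three lines report $1,500,000, $900,000, and $10,800,000, matching the figures in the text.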

The Ripple Effect: Secondary Operational Benefits

Beyond the primary financial returns, software predictive maintenance creates other operational improvements. These secondary benefits often become the reason companies expand the program enterprise-wide.

Here are a few key operational wins:

- A Safer Workplace: Identifying a potential equipment failure before it happens improves employee safety. This proactive approach can reduce accidents and related liabilities.

- Smarter Inventory: Without reliable failure forecasts, teams tend to stockpile expensive spare parts. With predictions, maintenance teams can order components as they are needed. Multiple manufacturing case studies report an 8% to 15% reduction in spare parts inventory costs.

- Higher Overall Equipment Effectiveness (OEE): OEE measures asset productivity. By keeping equipment running at peak performance, predictive maintenance can boost OEE by 5% to 10% for critical assets within the first 18 months of implementation.
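OEE is conventionally calculated as availability × performance × quality. The sketch below computes it for a single hypothetical shift. The shift length, cycle time, and part counts are illustrative numbers, not figures from the case studies cited above.

```python
def oee(planned_min: float, run_min: float, ideal_cycle_min: float,
        total_count: int, good_count: int) -> dict:
    """Return OEE and its three factors for one production period."""
    availability = run_min / planned_min                     # share of planned time the asset actually ran
    performance = (ideal_cycle_min * total_count) / run_min  # actual output rate vs. ideal rate
    quality = good_count / total_count                       # share of output that passed inspection
    return {
        "availability": availability,
        "performance": performance,
        "quality": quality,
        "oee": availability * performance * quality,
    }


# Hypothetical 8-hour shift with 60 minutes of unplanned downtime and a 1-minute ideal cycle.
shift = oee(planned_min=480, run_min=420, ideal_cycle_min=1.0, total_count=380, good_count=361)
print(f"OEE: {shift['oee']:.1%}")  # OEE: 75.2%
```

Predictive maintenance mainly removes unplanned downtime, so it acts most directly on the availability factor. In this example, availability is 420 / 480 = 87.5%.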

The true value of a predictive maintenance program is its ability to transform the maintenance function from a cost center into a strategic driver of operational excellence.

When you add up these benefits, the business case becomes clear. The hard numbers—from recovered revenue and lower costs to better safety and efficiency—provide the tangible proof needed to justify the investment.

The Architecture of a Predictive Maintenance System

A predictive maintenance system requires a cohesive technology stack where each component has a specific job. Think of it as a four-story building: each floor is distinct but must work together. For a CIO or CTO, designing this architecture correctly is fundamental to building a scalable and reliable system that integrates with existing enterprise tools.

It all starts with information. The foundation of this operation is collecting raw data to fuel predictions. Without a steady stream of high-quality data, even the most sophisticated algorithms are ineffective.

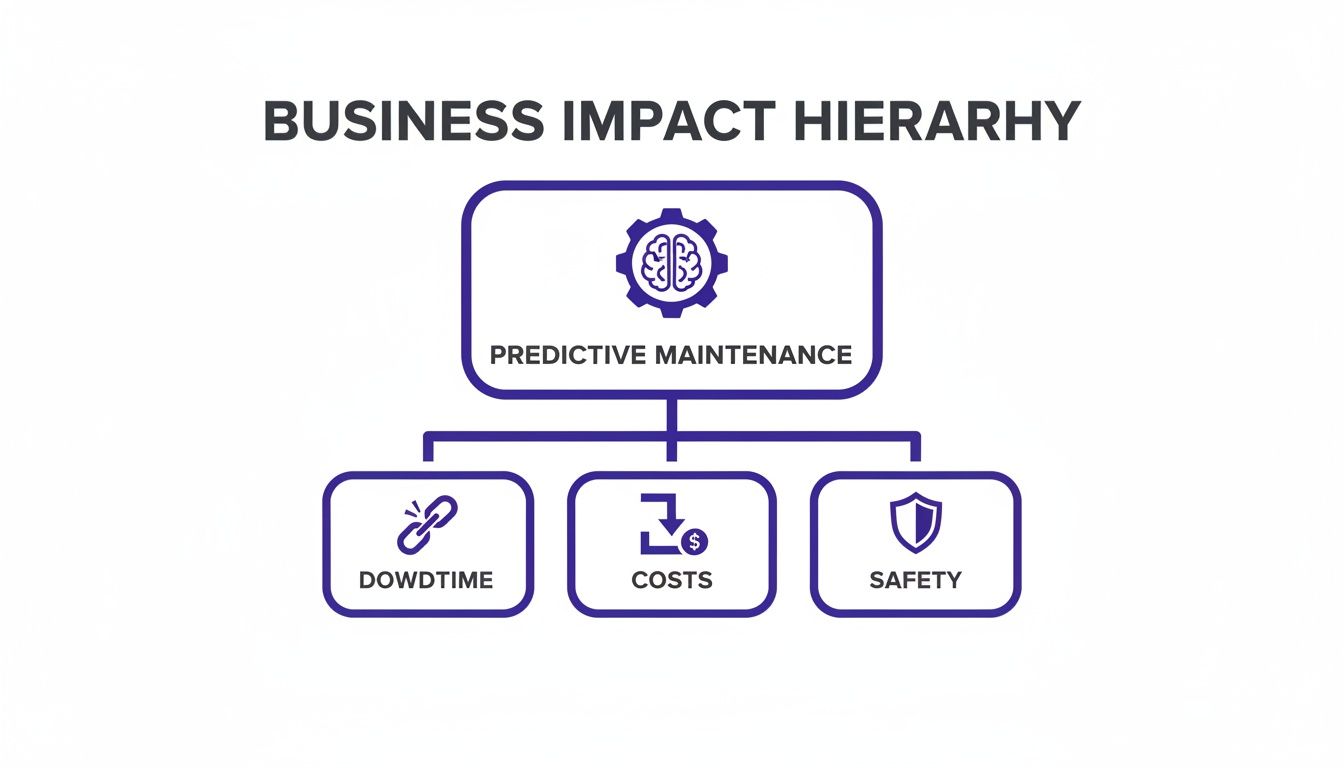

This strategic approach creates a ripple effect of positive business outcomes, starting with less downtime and leading directly to better cost controls and a safer work environment.

The diagram above shows how addressing operational disruptions at the source produces cascading benefits throughout the organization.

The Four Layers of a Modern PdM Stack

A modern predictive maintenance system is built on four distinct layers, each handling a critical stage of the process from raw data to business insight.

1. The Data Acquisition Layer: This is the ground floor, where the system collects operational data directly from physical assets. It acts as the sensory network for the entire PdM program.

- IoT Sensors: Devices attached to machinery measure vibration, temperature, pressure, and acoustics.

- SCADA Systems: Supervisory Control and Data Acquisition systems provide operational context by monitoring and controlling industrial processes.

- Existing Databases: Historical maintenance logs, work orders from a computerized maintenance management system (CMMS), and asset specifications provide the historical backdrop for machine learning models.

2. The Data Processing and Storage Layer: Once collected, the large volume of data needs to be stored and prepared for analysis. This layer is the central library and workshop.

- Cloud Platforms: Services like AWS, Azure, or Google Cloud provide scalable infrastructure to handle large data streams without a significant upfront hardware investment.

- Data Lakes: These repositories store structured and unstructured data in its native format, giving data scientists the flexibility to build and test models.
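Much of the "preparation" in this layer means turning raw, high-frequency sensor samples into compact features that a model can use. The sketch below assumes vibration samples have already landed in storage as a plain list of floats. It splits them into fixed windows and computes three common vibration-condition features per window:

- root-mean-square (RMS);
- peak amplitude;
- crest factor, which is peak divided by RMS.

The window size and function name are illustrative.

```python
import math


def window_features(samples: list[float], window: int) -> list[dict]:
    """Split a raw vibration signal into non-overlapping windows and compute
    RMS, peak amplitude, and crest factor (peak / RMS) for each full window.
    A trailing partial window is dropped."""
    if window <= 0:
        raise ValueError("window must be positive")
    features = []
    for start in range(0, len(samples) - window + 1, window):
        chunk = samples[start:start + window]
        rms = math.sqrt(sum(x * x for x in chunk) / window)
        peak = max(abs(x) for x in chunk)
        features.append({
            "start_index": start,
            "rms": rms,
            "peak": peak,
            "crest_factor": peak / rms if rms > 0 else 0.0,
        })
    return features


raw = [0.1, -0.2, 0.15, -0.1, 0.9, -1.1, 1.0, -0.95]  # hypothetical accelerometer samples
for row in window_features(raw, window=4):
    print(row)
```

Storing features like these alongside the raw data lets data scientists test models quickly. They do not have to reprocess the full signal each time.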

3. The Analytics and Modeling Layer: This is the intelligence core of the stack, where raw data is transformed into predictive insight through machine learning.

This layer's algorithms can spot subtle anomalies in equipment behavior that are invisible to the human eye, flagging potential failures weeks or even months in advance.

Key components include:

- Machine Learning (ML) Models: These are specialized algorithms trained for tasks like anomaly detection, failure pattern recognition, and calculating an asset's remaining useful life (RUL).

- Analytics Engines: This is the environment where models are built, trained, and run at scale. To learn more about the tools available, it’s worth reviewing a comparison of the best predictive analytics software solutions.
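Anomaly detection does not have to start with deep learning. As a deliberately simple stand-in for the trained models described above, the sketch below flags a reading when it sits more than three standard deviations from the mean of a trailing baseline window. The window length, threshold, and readings are hypothetical. Production systems typically use multivariate models that learn normal behavior across many sensors at once.

```python
from statistics import mean, stdev


def rolling_zscore_anomalies(values: list[float], baseline_window: int = 20,
                             threshold: float = 3.0) -> list[tuple[int, float]]:
    """Flag readings that deviate from the mean of the preceding `baseline_window`
    readings by more than `threshold` sample standard deviations."""
    if baseline_window < 2:
        raise ValueError("baseline_window must be at least 2")
    anomalies = []
    for i in range(baseline_window, len(values)):
        window = values[i - baseline_window:i]
        mu, sigma = mean(window), stdev(window)
        if sigma == 0:
            continue  # a perfectly flat baseline gives no scale to compare against
        z = (values[i] - mu) / sigma
        if abs(z) > threshold:
            anomalies.append((i, z))
    return anomalies


# Hypothetical temperature readings: stable around 70 degrees, then a sudden jump.
readings = [70.0, 70.4] * 10 + [70.2, 75.0]
print(rolling_zscore_anomalies(readings))
```

Only the final reading, at index 21, is flagged. The earlier fluctuations stay within the normal band learned from the trailing window.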

4. The Visualization and Action Layer: The final layer translates complex predictions into simple, actionable steps for maintenance and operations teams, connecting digital insights to physical actions.

- Dashboards: User-friendly interfaces display asset health scores, real-time alerts, and performance trends, giving managers a clear view of operational readiness.

- System Integrations: APIs link the PdM software with your CMMS or enterprise asset management (EAM) system. A predictive alert can automatically trigger a work order, creating a seamless workflow from forecast to fix. As these systems grow, robust governance becomes vital; you can learn more about managing complex data and AI workflows to ensure performance and compliance.
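The exact API depends on the CMMS or EAM product. The endpoint URL, field names, and priority policy below are therefore hypothetical placeholders, not any vendor's real interface.

The sketch shows the general shape of the integration:

- convert a model alert into a structured work-order payload;
- prepare an authenticated JSON POST using only the Python standard library.

It builds the request but does not send it.

```python
import json
from urllib.request import Request

# Hypothetical endpoint; substitute your CMMS/EAM vendor's documented work-order API.
CMMS_URL = "https://cmms.example.com/api/work-orders"


def alert_to_work_order(asset_id: str, failure_mode: str, rul_hours: float,
                        confidence: float) -> dict:
    """Map a predictive alert onto a generic work-order payload (field names are illustrative)."""
    return {
        "asset_id": asset_id,
        "title": f"Predicted {failure_mode} on {asset_id}",
        "priority": "high" if rul_hours < 72 else "medium",  # hypothetical policy
        "due_within_hours": int(rul_hours * 0.5),            # leave a safety margin before predicted failure
        "source": "pdm-model",
        "details": {"predicted_rul_hours": rul_hours, "model_confidence": confidence},
    }


def build_work_order_request(work_order: dict, token: str) -> Request:
    """Prepare (but do not send) an authenticated JSON POST to the CMMS."""
    return Request(
        CMMS_URL,
        data=json.dumps(work_order).encode("utf-8"),
        headers={"Content-Type": "application/json", "Authorization": f"Bearer {token}"},
        method="POST",
    )


order = alert_to_work_order("pump-07", "bearing wear", rul_hours=48, confidence=0.87)
req = build_work_order_request(order, token="example-token")
print(req.get_method(), req.full_url)
print(json.dumps(order, indent=2))
# To submit for real: urllib.request.urlopen(req), after replacing the placeholders.
```

Keeping the mapping in its own function makes it easy to adapt the payload to a different CMMS. The model code does not need to change.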

A Phased Approach to Enterprise PdM Implementation

Rolling out software predictive maintenance across an enterprise is a strategic initiative, not a simple software installation. A methodical, phased approach proves value early, builds momentum, and systematically de-risks the investment. A "big bang" rollout across all assets at once often leads to delays, budget overruns, and disappointing results.

A more effective method is an agile, focused approach. It delivers measurable wins quickly, which proves the concept with hard data and builds a powerful business case for wider adoption. The goal is to move from a small, controlled pilot to a scalable, enterprise-wide program with confidence.

Phase 1: Identify High-Value Use Cases

First, resist the urge to monitor everything. Concentrate initial efforts where a predictive model will make the biggest financial or operational difference. This means collaborating with operations leaders to pinpoint the most critical assets.

Look for equipment where unplanned downtime creates the worst bottlenecks, incurs the highest costs, or introduces significant safety risks. A single production-critical CNC machine or a key pump in a chemical processing plant is a better starting point than one hundred non-critical motors.

This selection process should be guided by clear criteria:

- Business Impact: Quantify the cost of failure for each potential asset in lost revenue, repair expenses, and safety incidents.

- Data Availability: Determine if an asset already generates sensor data (like vibration or temperature) or if new sensors can be installed without a major project.

- Failure History: Prioritize assets with a known history of failures. If historical data shows problems that a model could plausibly have forecast, the asset is a strong candidate.
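One way to make these three criteria comparable across candidate assets is a weighted score. The following choices are all hypothetical and meant only for illustration:

- the 50/25/25 weights;
- the 0.3 partial credit for assets that still need sensors;
- the five-failure cap;
- the example assets.

Each organization should set its own values together with operations leaders.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    annual_failure_cost: float  # lost revenue + repairs + safety incidents, USD
    data_ready: bool            # sensor data exists or sensors are easy to add
    failures_last_3y: int       # known failures in the maintenance history


def priority_score(c: Candidate, max_cost: float) -> float:
    """Weighted 0-1 score; the weights are hypothetical and should be agreed with operations."""
    impact = c.annual_failure_cost / max_cost   # business impact, relative to the costliest asset
    data = 1.0 if c.data_ready else 0.3         # partial credit if new sensors are needed
    history = min(c.failures_last_3y, 5) / 5    # cap so one chronic asset does not dominate
    return 0.5 * impact + 0.25 * data + 0.25 * history


def rank_candidates(candidates: list[Candidate]) -> list[tuple[str, float]]:
    max_cost = max(c.annual_failure_cost for c in candidates)
    scored = [(c.name, priority_score(c, max_cost)) for c in candidates]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


assets = [
    Candidate("Auxiliary motor", 50_000, True, 1),
    Candidate("CNC machine (line 2)", 2_000_000, True, 4),
    Candidate("Reactor feed pump", 1_200_000, False, 3),
]
for name, score in rank_candidates(assets):
    print(f"{score:.3f}  {name}")
```

As the text suggests, the production-critical CNC machine comes out on top. The low-cost motor ranks last despite having good data.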

Phase 2: Run a Focused Proof-of-Concept

Once a high-value asset is identified, run a tightly scoped Proof-of-Concept (POC). The objective is to prove that predictive models can accurately forecast failures on a single piece of real-world equipment. An agile, six-week pilot program is a good framework for this.

This short timeframe forces the team to focus on a tangible outcome and avoids "analysis paralysis." During the POC, the team will connect data sources, build and train an initial machine learning model, and validate its predictions against actual events. Success is defined by the model's ability to generate accurate alerts that give maintenance teams enough lead time to act.
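To make "accurate alerts with enough lead time" measurable, the POC team can score the model against the failures that actually occurred. The sketch below assumes alert and failure times are recorded in hours. An alert counts as a hit only if a failure follows it within a hypothetical window of 24 hours to three weeks. A shorter gap leaves too little time to act, and a longer one makes the connection too loose.

The window, the function name, and the example times are illustrative.

```python
def evaluate_alerts(alert_times_h: list[float], failure_times_h: list[float],
                    min_lead_h: float = 24, max_lead_h: float = 24 * 21) -> dict:
    """Score POC alerts against real failures.

    An alert is a hit if some failure follows it within [min_lead_h, max_lead_h].
    Precision = hits / alerts; recall = failures anticipated by at least one hit / failures.
    """
    hits, caught, lead_times = 0, set(), []
    for a in alert_times_h:
        matched = [f for f in failure_times_h if min_lead_h <= f - a <= max_lead_h]
        if matched:
            first = min(matched)  # credit the earliest failure the alert anticipated
            hits += 1
            caught.add(first)
            lead_times.append(first - a)
    return {
        "precision": hits / len(alert_times_h) if alert_times_h else 0.0,
        "recall": len(caught) / len(failure_times_h) if failure_times_h else 0.0,
        "mean_lead_h": sum(lead_times) / len(lead_times) if lead_times else 0.0,
    }


# Hypothetical validation data (hours since the start of the evaluation period).
print(evaluate_alerts(alert_times_h=[100, 400, 700], failure_times_h=[300, 950]))
```

In this example both failures were anticipated, so recall is 1.0, with an average warning of 225 hours. One of the three alerts was a false alarm, so precision is about 0.67. Those are the kinds of numbers that move the conversation from "what if" to "what's next."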

A successful POC moves the conversation from "what if" to "what's next." It replaces theoretical ROI projections with actual data, showing a direct link between the software investment and reduced operational risk.

Phase 3: Plan for Scalable Deployment

After a successful POC, the final phase is building a roadmap to scale the solution across the enterprise. This involves more than copying a model to other machines. It demands a thoughtful approach to data pipeline orchestration to manage data flows, model retraining, and alert routing efficiently as more assets are added. You can learn more about the importance of establishing a robust framework for managing complex data and AI workflows at scale.

This is where enterprise architecture is critical. Key activities include:

- Standardizing Data Ingestion: Create a reusable process for connecting sensors and integrating data from different equipment types.

- Developing a Model Library: Build and manage a portfolio of ML models tailored to different asset classes.

- Integrating with CMMS/EAM: Automate the workflow from a predictive alert to a work order created directly in existing maintenance management systems.

Phased rollouts like this are becoming the norm as adoption accelerates. The global predictive maintenance market, driven heavily by software, is projected to surge from USD 4.27 billion to over USD 22.42 billion by 2028, according to reports from Verified Market Research, reflecting a broad shift toward predictive strategies. By starting small, proving value, and scaling intelligently, you give your PdM initiative the best chance of delivering on its promise.

How to Measure PdM Success and Calculate ROI

A predictive maintenance program is only as good as its results. To secure and maintain executive buy-in, you must prove its value with clear metrics.

This requires tracking the right Key Performance Indicators (KPIs) and translating those wins into a clear Return on Investment (ROI) that is meaningful to financial leadership. You are essentially measuring the before-and-after picture—the financial impact of shifting from a reactive to a predictive model. The best KPIs draw a straight line from a predictive alert to a business outcome.

Key Performance Indicators for PdM

While specifics vary by industry, a few metrics are universally crucial for measuring the health of your predictive maintenance program.

- Reduction in Unplanned Downtime: How many hours did a critical asset sit idle before PdM versus after? A 25% to 50% reduction in the first year is a realistic goal for a well-run program.

- Mean Time Between Failures (MTBF): This metric indicates equipment reliability. A rising MTBF means assets are running longer without issues, a direct sign that predictive interventions are working.

- Maintenance Cost Reduction: This includes savings on overtime labor and on carrying fewer spare parts, because you know what you will need and when. Comparing the cost per maintenance hour before and after PdM is an effective way to show these savings.
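The three KPIs above reduce to simple before-and-after arithmetic. MTBF is conventionally calculated as total operating time divided by the number of failures. All figures below are hypothetical and only demonstrate the calculation.

```python
def mtbf(operating_hours: float, failures: int) -> float:
    """Mean time between failures: operating time divided by failure count."""
    return operating_hours / failures if failures else float("inf")


def pct_change(before: float, after: float) -> float:
    """Percentage change from the before value to the after value."""
    return (after - before) / before * 100


# Hypothetical annual figures for one critical asset, before and after PdM.
before = {"hours": 8000, "failures": 10, "downtime_h": 120, "maint_cost": 600_000, "maint_hours": 2_000}
after = {"hours": 8400, "failures": 6, "downtime_h": 70, "maint_cost": 480_000, "maint_hours": 1_800}

print(f"Unplanned downtime change: {pct_change(before['downtime_h'], after['downtime_h']):.1f}%")
print(f"MTBF: {mtbf(before['hours'], before['failures']):.0f} h -> "
      f"{mtbf(after['hours'], after['failures']):.0f} h")
cost_per_hour_before = before["maint_cost"] / before["maint_hours"]
cost_per_hour_after = after["maint_cost"] / after["maint_hours"]
print(f"Cost per maintenance hour: ${cost_per_hour_before:.0f} -> ${cost_per_hour_after:.0f}")
```

Under these assumptions, unplanned downtime falls by about 42%, within the 25% to 50% first-year range mentioned above. MTBF rises from 800 to 1,400 hours.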

This focus on tangible results helps explain the market's expansion. The predictive maintenance software market in the U.S. is on track to hit USD 20.5 billion by 2034, according to IMARC Group, driven by an executive push for greater efficiency. Case studies in industries like mining have shown downtime reductions of up to 70%, demonstrating how machine learning can directly boost reliability and output. You can explore more analysis of the growth of the US PdM market on imarcgroup.com.

A Simple Framework for Calculating ROI

Calculating the ROI of a PdM initiative does not require complex financial modeling. A straightforward framework is often the most effective way to communicate its value.

It starts with the classic formula:

ROI (%) = [(Financial Gain from Investment - Cost of Investment) / Cost of Investment] x 100

The key is knowing what numbers to use. Let's break down both sides of the equation.

Financial Gains: This is where the PdM program pays off.

- Cost Savings from Reduced Downtime: Multiply the hours of downtime avoided by the company's cost-per-hour of downtime.

- Maintenance Cost Savings: Subtract the new annual maintenance cost from the old one.

- Increased Production Value: Calculate the value of additional products made possible by increased uptime.

Costs of Investment: These are the initial and ongoing expenses.

- Software Licensing/Subscription Fees: The recurring cost for the PdM platform.

- Implementation and Integration Costs: The one-time professional services fees for setup.

- Hardware Costs: The price of any new sensors or other necessary equipment.

- Training and Personnel Costs: The time the team invests in learning and running the new system.
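Plugging hypothetical first-year figures into the ROI formula above shows how the pieces combine. The dictionary keys mirror the gain and cost categories listed here, and all amounts are illustrative.

One caution applies to any real calculation. If your cost-per-hour of downtime already includes lost revenue, counting "increased production value" separately can double-count the same benefit. For that reason, the sketch sets it to zero.

```python
def pdm_roi(gains: dict[str, float], costs: dict[str, float]) -> float:
    """ROI (%) = (total gain - total cost) / total cost x 100."""
    total_gain = sum(gains.values())
    total_cost = sum(costs.values())
    if total_cost <= 0:
        raise ValueError("cost of investment must be positive")
    return (total_gain - total_cost) / total_cost * 100


gains = {
    "downtime_savings": 900_000,     # hours avoided x cost per hour of downtime
    "maintenance_savings": 150_000,  # old annual maintenance cost - new one
    "added_production_value": 0,     # already captured in downtime_savings here
}
costs = {
    "software_subscription": 120_000,
    "implementation_integration": 80_000,
    "sensors_hardware": 40_000,
    "training_personnel": 20_000,
}
print(f"First-year ROI: {pdm_roi(gains, costs):.0f}%")
```

With $1.05 million in gains against $260,000 in costs, the first-year ROI is roughly 304%. Because implementation is a one-time cost, later years would normally look better still.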

Using real-world numbers in this formula builds a data-backed business case that is difficult to dispute. It shifts the conversation from a technical project to a strategic business investment.

Navigating AI Governance And Compliance In Maintenance

When you embed software predictive maintenance into core operations, AI becomes a critical business function. Governance, risk, and compliance (GRC) can no longer be an afterthought; it must be a central part of the strategy. Without a solid governance plan, you could deploy models that are opaque, biased, or non-compliant with new regulations.

Good governance is about transparency and accountability. It is not enough for a model to flag a potential failure; your team needs to understand why it reached that conclusion. This is called model explainability, and it is essential for maintenance teams to trust and act on AI recommendations. If an operator cannot see the logic behind an alert, they are less likely to take it seriously.

Establishing A Framework For Responsible AI

As AI's role in operations grows, regulators are paying closer attention. A formal framework is a defense against risk. A responsible AI framework for predictive maintenance has three key pillars.

- Data Privacy and Security: The models run on sensitive operational data. Protecting that data is mandatory, as is complying with regulations like GDPR wherever personal data is involved. This requires clear data lineage, strict access controls, and robust encryption.

- Model Monitoring and Performance: An AI model is not a set-and-forget system. Its accuracy can degrade as operating conditions change over time, a problem known as model drift. You need to monitor performance constantly to detect drift and retrain the model before it makes incorrect predictions.

- Clear Accountability: If a prediction triggers a major maintenance action, who is responsible? A well-defined governance structure clarifies roles for everyone involved, from data scientists to the engineers acting on the insights.
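For the monitoring pillar, one widely used drift check is the Population Stability Index (PSI). It compares how a feature is distributed in recent data against its distribution in the training-time baseline. The sketch below uses equal-width bins taken from the baseline range.

Several details are conventions rather than hard standards:

- the bin count;
- the small floor for empty bins;
- the common rule of thumb that a PSI above about 0.25 signals significant drift.

The data is hypothetical.

```python
import math


def psi(baseline: list[float], recent: list[float], bins: int = 10, eps: float = 1e-6) -> float:
    """Population Stability Index of `recent` against `baseline`, using equal-width
    bins spanning the baseline range. Values outside that range fall into the edge bins."""
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # avoid division by zero for a constant baseline

    def proportions(data: list[float]) -> list[float]:
        counts = [0] * bins
        for x in data:
            idx = min(max(int((x - lo) / width), 0), bins - 1)  # clip into the edge bins
            counts[idx] += 1
        # Floor empty bins at eps so the log term stays finite.
        return [max(c / len(data), eps) for c in counts]

    expected, actual = proportions(baseline), proportions(recent)
    return sum((a - e) * math.log(a / e) for a, e in zip(actual, expected))


baseline = [i / 100 for i in range(100)]      # hypothetical training-time feature values
recent = [0.5 + i / 200 for i in range(100)]  # same feature after operating conditions shift
score = psi(baseline, recent)
print(f"PSI = {score:.2f} -> {'investigate and retrain' if score > 0.25 else 'stable'}")
```

Running a check like this on a schedule for each model input, and routing high scores to the accountable owner, ties the monitoring and accountability pillars together.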

Preparing For Emerging Regulations

The rulebook for AI is evolving quickly. Getting ahead of new regulations is better than scrambling to comply later. New laws are placing strict requirements on AI systems that affect safety and critical infrastructure—which is exactly where industrial predictive maintenance operates.

Building a governance layer around your software predictive maintenance system is not about slowing innovation. It is about enabling sustainable, trustworthy AI at scale by embedding responsibility and compliance directly into your operational workflows.

Understanding these new rules is non-negotiable for a technology leader. As you scale AI programs, you must be ready for what's coming. For a detailed look at what this entails, our guide on achieving readiness for the EU AI Act breaks down the essential steps for enterprise compliance. A proactive approach ensures your predictive maintenance program not only drives operational value but also withstands scrutiny, protecting your company from financial and reputational damage.

Frequently Asked Questions

As you explore software predictive maintenance, questions will arise. It is a significant shift, and it is smart to understand the details. Here are some of the most common questions from technology and operations leaders, with straightforward answers.

What Kind of Data Do We Actually Need?

At its core, predictive maintenance relies on a mix of different data streams. The most valuable sources are:

- Historical Maintenance Records: This is the equipment's history: what broke, when, and how it was fixed. This data teaches the model what failure looks like.

- Live Operational Data: This is real-time feedback from assets, including sensor data measuring vibration, temperature, pressure, and sound.

- Asset Specifications: Basic information about the equipment—make, model, age, configuration—provides crucial context.

The quality of your data directly impacts prediction accuracy. A data readiness assessment is a crucial first step to identify what you have, what you need, and how to close any gaps.
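A first pass at a data readiness assessment can be automated. The sketch below assumes one sensor stream with Unix timestamps in seconds. It reports three things:

- the share of missing readings;
- the number of gaps longer than 1.5 expected sampling intervals;
- how many labeled failures exist for training.

The thresholds behind `ready` are hypothetical. What counts as "enough" data depends on the asset and the failure mode.

```python
from typing import Optional


def readiness_report(timestamps_s: list[float], values: list[Optional[float]],
                     expected_interval_s: float, failure_events: list[str]) -> dict:
    """Summarize basic data-quality signals for one sensor stream."""
    n = len(values)
    missing = sum(1 for v in values if v is None)
    # A gap is any spacing more than 1.5x the expected sampling interval.
    gaps = sum(1 for a, b in zip(timestamps_s, timestamps_s[1:]) if b - a > 1.5 * expected_interval_s)
    missing_ratio = missing / n if n else 1.0
    return {
        "missing_ratio": missing_ratio,
        "gap_count": gaps,
        "labeled_failures": len(failure_events),
        # Hypothetical bar: under 5% missing and at least 3 labeled failures to learn from.
        "ready": n > 0 and missing_ratio < 0.05 and len(failure_events) >= 3,
    }


report = readiness_report(
    timestamps_s=[0, 60, 120, 300, 360],
    values=[1.0, None, 1.2, 1.1, 1.3],
    expected_interval_s=60,
    failure_events=["bearing failure (hypothetical record)"],
)
print(report)
```

Here the stream is not yet ready. A fifth of the readings are missing, there is one three-minute gap, and only one labeled failure exists. Those are exactly the gaps an assessment should surface before modeling begins.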

How Long Until We See a Real Return on Investment?

The answer depends on your approach. You can see tangible results quickly. By starting with a focused proof-of-concept (POC) on a critical asset, you can often show value within the first business quarter. A quick win builds momentum and simplifies the case for a wider rollout.

While a POC can prove the concept fast, you'll typically see substantial, enterprise-wide ROI within 12 to 18 months. That's the point where the program has scaled across enough critical assets to compound the financial benefits from reduced downtime and optimized maintenance spending.

Can We Apply This to Any and All of Our Equipment?

Technically, yes, but that is not the most effective strategy. Software predictive maintenance delivers its biggest impact when focused on your most critical assets. These are the machines where failure causes major production stoppages, financial losses, or safety risks. The best candidates are pieces of equipment that already generate good operational data and have learnable failure patterns.

For low-cost, non-critical components, a simpler run-to-failure or scheduled maintenance plan is often more economical. The key is to aim predictive efforts where the return will be highest.

How Does This Fit With Our Existing CMMS or EAM Systems?

Modern predictive maintenance platforms are designed to integrate with your existing systems. They connect with your Computerized Maintenance Management System (CMMS) or Enterprise Asset Management (EAM) system, usually through standard APIs.

This integration is what makes the system work in practice. When the predictive model flags an upcoming failure, it can automatically create a detailed work order in your CMMS. This closes the loop, turning an intelligent alert into a scheduled action for your maintenance teams without manual steps.

Ready to move from theory to production? The team at DSG.AI specializes in designing, building, and operationalizing enterprise-grade AI systems that deliver measurable business value. Explore our real-world AI projects and see how our architecture-first approach can turn your data into a competitive advantage.