Written by:

Editorial Team

Building a modern AI application is a structured process that turns a business problem into an operational system. This application development process connects business goals, data strategy, model creation, and governance. The objective is not just a functional app, but a solution that delivers measurable business impact.

A Modern Framework for Building AI Applications

Traditional software development methods are not designed for AI projects. They do not account for managing large datasets, the experimental nature of model training, or the complexities of governance and compliance. A modern application development process is built to handle these challenges, connecting a business problem to a production-ready AI system.

The global application development software market is projected to reach $172.94 billion by 2026, and it is estimated to grow at a compound annual rate of 21.60% through 2034. North America currently holds 33.60% of this market, with Europe growing due to a focus on digital innovation and data protection standards.

The AI Development Journey at a Glance

Building an AI application is an iterative cycle. It requires continuous communication between business leaders, data scientists, and engineers to align with strategic goals. The process takes an initial concept through to a live, monitored, and governed system.

I have observed that many AI projects fail in the final stages. The main challenge is moving a promising model from a development environment to a robust, production-ready application. Model development should be treated as one component of a larger engineering process that includes API integrations, drift monitoring, and governance from the start.

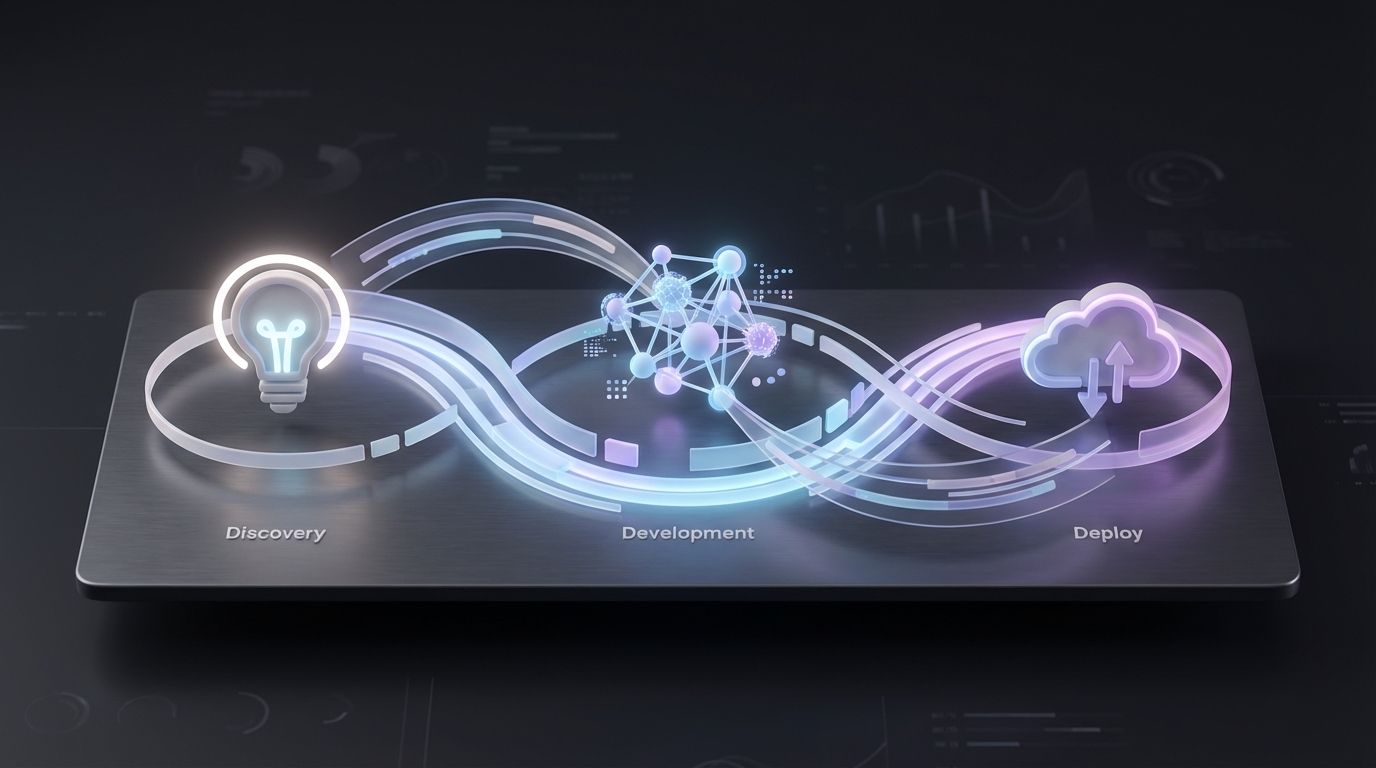

This diagram shows how the core phases are connected.

As the visual shows, Discovery, Development, and Deployment are distinct but interconnected stages. Each stage is critical for success.

The Seven Stages of Enterprise AI Development

The mobile app development lifecycle provides a solid foundation for understanding the full scope. For enterprise AI, a more specific seven-stage framework is effective because it integrates business value and responsible practices into each step.

Here is a breakdown of the seven stages:

- Discovery: Identify the specific business problem, define success with clear Key Performance Indicators (KPIs), and assess data feasibility.

- Data Preparation: Ingest, clean, and transform raw data into a reliable foundation for the model.

- Model Development: Experiment with different algorithms and train models to find a solution to the business problem.

- Validation: Test the model's performance against the business metrics defined in the discovery phase, not just technical accuracy.

- Deployment: Integrate the model into the production environment and make it accessible to users through APIs or applications.

- Monitoring: Track the model’s performance, monitor for data drift, and measure its ongoing business impact.

- Governance: Ensure the system is transparent, compliant with regulations, and follows the company's Responsible AI principles.

Following these stages provides a repeatable blueprint for turning AI experiments into value-generating assets.

Building a Strong Foundation with Discovery and Data

Every successful AI application begins with a business outcome, not an algorithm. This initial phase is where strategy meets technical reality. Rushing through discovery is a common reason AI projects fail to deliver a return on investment.

It starts with a focused problem statement. A goal like "improve customer satisfaction" is too vague. A strong problem statement is specific, such as: "Reduce customer support ticket resolution time for Tier 1 issues by 15% to 20% within six months." This provides a measurable target and a clear purpose for the project.

From Business Problem to Technical Plan

With a clear problem definition, you can develop a technical plan. This requires collaboration between business leaders, data scientists, and ML engineers to define the KPIs that will measure ROI.

For example, if the goal is to reduce scrap material in a manufacturing line, the real-world KPIs are what matter, not just the model's F1-score:

- Reduction in scrap material: Measured in kilograms or as a percentage decrease from the previous quarter's baseline.

- Increase in production uptime: Calculated by tracking minutes of unplanned downtime per shift.

- Early fault detection rate: The percentage of defects the AI identifies before they reach manual inspection.

This process validates the business case before model training begins. It forces you to answer critical questions: Do we have the necessary data? Does the potential benefit justify the cost and effort?
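As a rough illustration of how the KPIs above might be computed, here is a minimal Python sketch. The `QuarterStats` fields, the function names, and all figures are hypothetical, chosen only to make the arithmetic easy to follow.

```python
from dataclasses import dataclass


@dataclass
class QuarterStats:
    scrap_kg: float            # total scrap material in the quarter
    defects_total: int         # all defects found in the quarter
    defects_caught_early: int  # defects flagged before manual inspection


def scrap_reduction_pct(baseline: QuarterStats, current: QuarterStats) -> float:
    """Percentage decrease in scrap relative to the baseline quarter."""
    if baseline.scrap_kg <= 0:
        raise ValueError("baseline scrap must be positive")
    return 100.0 * (baseline.scrap_kg - current.scrap_kg) / baseline.scrap_kg


def early_detection_rate(stats: QuarterStats) -> float:
    """Percentage of defects identified before they reach manual inspection."""
    if stats.defects_total == 0:
        return 0.0
    return 100.0 * stats.defects_caught_early / stats.defects_total


# Illustrative numbers only.
baseline = QuarterStats(scrap_kg=1200.0, defects_total=400, defects_caught_early=0)
current = QuarterStats(scrap_kg=1080.0, defects_total=380, defects_caught_early=285)

print(f"Scrap reduction: {scrap_reduction_pct(baseline, current):.1f}%")
print(f"Early detection rate: {early_detection_rate(current):.1f}%")
```

Writing the KPI down as a function during discovery forces agreement on the baseline and the unit of measurement before any model exists.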

The Critical Role of Data Engineering

Once the business case is confirmed, the focus shifts to data. By a commonly cited estimate, data scientists can spend up to 80% of their time preparing data, so a disciplined data engineering practice is a competitive advantage.

First, you need to ingest data from various sources like ERPs, CRMs, IoT sensors, and third-party APIs. Each source has different formats, latencies, and quality levels that need to be addressed.

The principle of "garbage in, garbage out" is fundamental in AI development. Without robust pipelines to clean, transform, and validate data, the project is built on an unstable foundation. The goal is to create a single, trusted 'golden record' dataset.

After ingestion, you build automated data pipelines to handle cleaning and transformation. This is a repeatable workflow that fills in missing values, corrects inconsistencies, and standardizes formats, such as converting all date fields to ISO 8601.
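A cleaning step of this kind might look like the sketch below. It uses only the Python standard library. The record fields (`date`, `site`, `temp_c`), the list of accepted date formats, and the choice of median imputation are all assumptions made for illustration; a real pipeline would take these from the source systems and the data team's conventions.

```python
from datetime import datetime
from statistics import median

# Date formats we assume the source systems emit (hypothetical).
# Note that "%d/%m/%Y" treats slash-separated dates as day-first.
KNOWN_DATE_FORMATS = ("%Y-%m-%d", "%d/%m/%Y", "%Y%m%d")


def to_iso_date(raw: str) -> str:
    """Parse a date in any known format and return it as ISO 8601 (YYYY-MM-DD)."""
    for fmt in KNOWN_DATE_FORMATS:
        try:
            return datetime.strptime(raw.strip(), fmt).date().isoformat()
        except ValueError:
            continue
    raise ValueError(f"unrecognized date format: {raw!r}")


def clean_records(records: list[dict]) -> list[dict]:
    """Fill missing temperatures with the median and standardize dates and site names."""
    known = [r["temp_c"] for r in records if r["temp_c"] is not None]
    fill_value = median(known)
    cleaned = []
    for r in records:
        cleaned.append({
            "date": to_iso_date(r["date"]),
            "site": r["site"].strip().upper(),
            "temp_c": r["temp_c"] if r["temp_c"] is not None else fill_value,
        })
    return cleaned


raw = [
    {"date": "2024-03-01", "site": " plant-a", "temp_c": 71.5},
    {"date": "02/03/2024", "site": "Plant-A ", "temp_c": None},
    {"date": "20240303", "site": "PLANT-A", "temp_c": 73.5},
]
cleaned = clean_records(raw)
for row in cleaned:
    print(row)
```

Raising on an unrecognized date, rather than guessing, keeps bad rows out of the golden record and makes format changes in a source system visible early.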

The final output is the ‘golden record’—a clean, aggregated dataset ready for modeling. Understanding how to measure and improve data quality is a core skill. You can learn more about key data quality metrics in our detailed guide. This foundation is essential for building a reliable AI application.

From Model Development to Business Validation

With a trustworthy data foundation, the process moves to the development phase. This is where ideas from discovery become tangible. It is an iterative cycle of experimentation, training, and refinement involving data scientists and engineers.

The process begins by revisiting the business problem, which determines the choice of algorithms. For example, predicting future sales may involve regression models, while flagging fraudulent transactions would use classification algorithms.

Model development is a methodical process. Data science teams often run many experiments, adjusting variables to find what works best with their specific data.

These experiments typically include three core activities:

- Feature Engineering: Creating new, more informative signals from raw data. For example, combining a customer's total purchases with their last visit date to create a recency-weighted engagement score.

- Hyperparameter Tuning: Adjusting internal algorithm settings, like learning rate or tree depth, to find the optimal configuration.

- Algorithm Selection: Comparing different algorithms, such as logistic regression versus a gradient-boosted tree, to determine which performs best for the specific problem.
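To make the feature engineering example concrete, the sketch below combines total purchases with the last visit date into a single score. The exponential decay and the 30-day half-life are assumptions for illustration, not a prescribed formula; the function name is hypothetical.

```python
from datetime import date


def engagement_score(total_purchases: int, last_visit: date, as_of: date,
                     half_life_days: float = 30.0) -> float:
    """Purchases discounted by recency: the score halves every `half_life_days`."""
    days_since_visit = (as_of - last_visit).days
    if days_since_visit < 0:
        raise ValueError("last_visit cannot be after as_of")
    return total_purchases * 0.5 ** (days_since_visit / half_life_days)


today = date(2024, 6, 1)
recent_buyer = engagement_score(8, last_visit=date(2024, 5, 2), as_of=today)
lapsed_buyer = engagement_score(8, last_visit=date(2024, 1, 3), as_of=today)
print(f"recent: {recent_buyer:.2f}, lapsed: {lapsed_buyer:.2f}")
```

Two customers with the same purchase count receive very different scores, which gives the model a signal that neither raw column provides on its own. The half-life is itself a setting worth tuning during experimentation.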

The Challenge of Reproducibility

With many experiments, it is easy to lose track of which combination of data, code, and parameters produced a specific result. A structured process is necessary to avoid this.

Disciplined experiment tracking is essential. Modern MLOps platforms act as a digital lab notebook, automatically logging every run. This rigor makes results reproducible: if a model from three weeks prior shows promise, the team can recall the exact data, code, and parameters that created it. You can see how this fits into a larger system in our guide on machine learning pipeline architecture.
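The core idea behind such a lab notebook can be sketched in a few lines. This is a simplified stand-in, not the API of any particular MLOps platform: `fingerprint`, `log_run`, the JSON-lines file, and the example metrics are all hypothetical.

```python
import hashlib
import json
import tempfile
import time
from pathlib import Path


def fingerprint(obj) -> str:
    """Short, stable SHA-256 hash of any JSON-serializable object."""
    payload = json.dumps(obj, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()[:12]


def log_run(log_path: Path, params: dict, data_rows: list,
            code_version: str, metrics: dict) -> dict:
    """Append one experiment record as a JSON line and return it."""
    record = {
        "timestamp": time.time(),
        "code_version": code_version,
        "params": params,
        "data_hash": fingerprint(data_rows),
        "metrics": metrics,
    }
    # The run ID depends only on code, parameters, and data, so rerunning
    # the same configuration yields the same ID.
    record["run_id"] = fingerprint(
        {k: record[k] for k in ("code_version", "params", "data_hash")}
    )
    with log_path.open("a", encoding="utf-8") as fh:
        fh.write(json.dumps(record) + "\n")
    return record


with tempfile.TemporaryDirectory() as tmp:
    log_file = Path(tmp) / "runs.jsonl"
    data = [[1.0, 0], [2.5, 1], [3.1, 1]]  # toy dataset
    run_a = log_run(log_file, {"learning_rate": 0.1, "max_depth": 3},
                    data, "abc123", {"f1": 0.81})
    run_b = log_run(log_file, {"learning_rate": 0.05, "max_depth": 3},
                    data, "abc123", {"f1": 0.84})
    logged = log_file.read_text(encoding="utf-8").splitlines()

print(run_a["run_id"], run_b["run_id"], len(logged))
```

Hashing the data alongside the parameters is the important design choice here: it lets the team tell apart two runs that used the same settings on different snapshots of the dataset.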

Validating Against Business Reality

A model with strong technical metrics is only halfway complete. The next step is business validation, where many AI initiatives fail. A model can be technically sound but useless if its output does not align with business objectives.

Proper validation has two parts.

First, benchmark the model’s performance against the business KPIs defined during discovery. If the project's goal was to reduce scrap material by 8%, you must run simulations on historical data to confirm the model's predictions would have met that target.

A model's F1-score means little on its own in a business context. What ultimately counts is whether the model's output improves a critical business metric. If a model is 99% accurate but its recommendations increase operational costs, it has failed validation.

Second, involve human experts. Subject matter experts (SMEs)—such as factory floor workers or supply chain managers—need to review the model's outputs. They provide a sanity check, confirming that the predictions are not only accurate but also logical, practical, and actionable.

This human-in-the-loop validation prevents the deployment of a model that is effective in theory but ignored by the people it is intended to help. A model is ready for deployment only after passing both technical and business validation.
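The first check, benchmarking against the discovery KPI, can be approximated with a simple backtest on historical data. The sketch below is hypothetical throughout: the batch history, the flagging threshold, and especially `save_rate` (the share of scrap assumed to be avoided when a batch is flagged in time) are assumptions that the subject matter experts would need to confirm.

```python
def backtest_scrap_reduction(history: list[tuple[float, float]],
                             flag_threshold: float,
                             save_rate: float) -> float:
    """Estimated % scrap reduction had the model been live.

    history: (model_risk_score, scrap_kg) for each past batch.
    save_rate: assumed fraction of scrap avoided on a flagged batch.
    """
    total_scrap = sum(kg for _, kg in history)
    if total_scrap <= 0:
        raise ValueError("history contains no scrap to reduce")
    avoided = sum(kg * save_rate for score, kg in history if score >= flag_threshold)
    return 100.0 * avoided / total_scrap


TARGET_PCT = 8.0  # the KPI agreed during discovery

# Toy history: two high-risk batches and two low-risk batches.
history = [(0.9, 40.0), (0.2, 10.0), (0.8, 30.0), (0.1, 20.0)]
estimated = backtest_scrap_reduction(history, flag_threshold=0.5, save_rate=0.2)
passes = estimated >= TARGET_PCT
print(f"Estimated reduction: {estimated:.1f}% (target {TARGET_PCT}%), passes: {passes}")
```

The result is only as credible as the `save_rate` assumption, which is one reason the expert review described above belongs in the same validation step.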

Deploying, Monitoring, and Governing AI Systems

Launching a validated model into production is the beginning, not the end. The work continues to ensure the system delivers consistent value and operates safely. This phase involves disciplined deployment, vigilant monitoring, and solid governance.

Unlike traditional software, AI systems are dynamic. Their performance is tied to the data they process, and their reliability can change as data changes. This requires a different post-deployment mindset.

Strategic Deployment and Integration

Moving a model from a lab environment to production requires careful risk management. It is important to observe the model's behavior with live data before a full-scale rollout.

Two common deployment patterns are:

- Canary Releases: Roll out the new model to a small, controlled segment of users, such as 5% of traffic. This allows for performance monitoring in a live environment and bug fixing before a wider release.

- A/B Testing: Run the new model (Variant B) alongside the current one (Variant A), splitting traffic between them. This provides quantitative data on key business metrics to determine if the new model is an improvement.
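Both patterns depend on splitting traffic in a stable way, so that the same user always sees the same model. One common approach is to hash a user identifier into a bucket. The sketch below assumes string user IDs and a 5% canary share; the function name is hypothetical.

```python
import hashlib


def route_to_canary(user_id: str, canary_pct: float = 5.0) -> bool:
    """Deterministically assign a user to the canary group."""
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 10_000  # buckets 0..9999
    return bucket < canary_pct * 100  # 5.0% -> buckets 0..499


users = [f"user-{i}" for i in range(20_000)]
share = sum(route_to_canary(u) for u in users) / len(users)
print(f"Share of users routed to the canary: {share:.2%}")
```

Because assignment depends only on the ID, raising `canary_pct` from 5 to 20 keeps the original canary users in the group and adds new ones, which makes a gradual rollout easier to reason about.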

In addition to the release strategy, a solid architectural plan is needed. Most enterprise AI systems must integrate with existing applications. This is typically handled by wrapping the model in an API (Application Programming Interface), which provides a standardized way for other systems to send data and receive predictions.
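The shape of such a wrapper is sketched below in a framework-agnostic way: a function that takes a raw request body and returns an HTTP status and a JSON response. The feature names, the `predict` stand-in, and its coefficients are hypothetical; in practice this logic would sit behind whichever web framework the team already uses.

```python
import json

REQUIRED_FIELDS = ("temperature_c", "vibration_mm_s")  # hypothetical features


def predict(features: dict) -> float:
    """Stand-in for a trained model: returns a fault risk between 0 and 1."""
    raw = 0.01 * features["temperature_c"] + 0.05 * features["vibration_mm_s"]
    return max(0.0, min(1.0, raw))


def handle_predict(body: bytes) -> tuple[int, str]:
    """Validate a JSON request body and return (HTTP status, JSON response)."""
    try:
        payload = json.loads(body)
    except ValueError:  # covers malformed JSON and undecodable bytes
        return 400, json.dumps({"error": "body must be valid JSON"})
    if not isinstance(payload, dict):
        return 400, json.dumps({"error": "body must be a JSON object"})
    bad = [f for f in REQUIRED_FIELDS
           if not isinstance(payload.get(f), (int, float))]
    if bad:
        return 422, json.dumps({"error": f"missing or non-numeric fields: {bad}"})
    return 200, json.dumps({"fault_risk": predict(payload)})


status, response = handle_predict(b'{"temperature_c": 50, "vibration_mm_s": 4}')
print(status, response)
```

Validating inputs at the boundary matters more for a model than for most services, because a model will often return a plausible-looking number for malformed input instead of failing.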

The Necessity of Continuous Monitoring

Once deployed, a model’s performance can degrade over time. This is often caused by data drift or concept drift.

- Data Drift: Occurs when the statistical properties of the input data change. For example, a fraud detection model trained on pre-pandemic data may become less effective as consumer spending habits change.

- Concept Drift: Occurs when the relationship between inputs and outcomes changes. A model predicting customer churn might become less accurate if a competitor launches a new product that alters customer loyalty patterns.
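Data drift on a single numeric feature can be quantified with the Population Stability Index (PSI), one of several statistics used for this purpose. The sketch below is a minimal version using only the standard library. The synthetic samples, the ten equal-width bins, and the 0.25 alert threshold (a common rule of thumb, not a universal standard) are assumptions.

```python
import math
import random


def psi(expected: list[float], actual: list[float], bins: int = 10) -> float:
    """Population Stability Index between a reference sample and a live sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant reference feature

    def proportions(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            # Values outside the reference range fall into the first or last bin.
            idx = max(0, min(bins - 1, int((v - lo) / width)))
            counts[idx] += 1
        # A small floor avoids log(0) for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


rng = random.Random(42)
training = [rng.gauss(100, 15) for _ in range(5000)]      # reference data
live_stable = [rng.gauss(100, 15) for _ in range(5000)]   # same distribution
live_shifted = [rng.gauss(130, 15) for _ in range(5000)]  # mean has moved

DRIFT_THRESHOLD = 0.25  # rule-of-thumb alert level; tune per feature
for name, sample in [("stable", live_stable), ("shifted", live_shifted)]:
    score = psi(training, sample)
    status = "ALERT" if score > DRIFT_THRESHOLD else "ok"
    print(f"{name}: PSI={score:.3f} [{status}]")
```

PSI only looks at inputs, so it can catch data drift but not concept drift. Detecting the latter requires comparing predictions against actual outcomes once those outcomes become available.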

The assumption that a model working today will work tomorrow is a significant risk. Without automated monitoring, a model can silently degrade, reducing business value and creating operational risk.

Automated monitoring is non-negotiable. Dashboards and alerting systems should track technical metrics like accuracy and latency, as well as business KPIs like revenue impact. These systems provide early warnings of performance issues, allowing teams to intervene before they cause significant problems. You can explore a range of machine learning model monitoring tools to automate this process.

Establishing Strong Governance and Compliance

Governance transforms a powerful tool into a trusted, compliant business asset. This framework ensures transparency, accountability, and adherence to internal policies and external regulations. This is critical for all AI systems, including specialized applications like legal AI tools for lawyers.

Effective AI governance has several key components.

First, establish clear ownership. It must be clear who is responsible for the model's performance in production and who has the authority to intervene if problems arise. These roles and responsibilities must be documented.

Second, implement Responsible AI controls from the beginning. This includes testing for and mitigating bias, ensuring model decisions are explainable, and securing sensitive data. With regulations like the EU AI Act, these controls are becoming a legal requirement.

A "Model Card" is a useful tool for this. It is a document that provides a standardized summary of a model’s purpose, performance metrics, limitations, and ethical considerations. This transparency is valuable for internal audits, stakeholder reviews, and regulatory compliance, completing an enterprise-grade application development process.
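A Model Card can be kept as structured data next to the model artifact so that it is versioned and reviewed like code. The sketch below shows one possible shape. The field names follow the summary above, and every value in the example is hypothetical.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class ModelCard:
    name: str
    purpose: str
    metrics: dict[str, float]
    limitations: list[str] = field(default_factory=list)
    ethical_considerations: list[str] = field(default_factory=list)

    def to_json(self) -> str:
        """Serialize the card for storage alongside the model artifact."""
        return json.dumps(asdict(self), indent=2)


# Illustrative content only.
card = ModelCard(
    name="scrap-fault-detector-v1",
    purpose="Flag production batches at high risk of becoming scrap.",
    metrics={"f1": 0.84, "estimated_scrap_reduction_pct": 9.5},
    limitations=["Trained on data from a single production line."],
    ethical_considerations=["Predictions inform operators; they do not replace inspection."],
)
print(card.to_json())
```

Keeping business metrics next to technical ones in the card reflects the validation approach described earlier: an auditor can see both what the model scores and what it is expected to deliver.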

Untangling Roles and Responsibilities with a RACI Matrix

An application development process can fail if roles and responsibilities are unclear. Unclear roles can lead to project delays, missed deadlines, and team friction. This is a significant risk in AI development, which requires close collaboration between business, data, and engineering teams.

A RACI matrix is a simple framework for assigning responsibilities and clarifying roles.

What RACI Actually Means

The acronym represents four distinct roles:

- Responsible (R): The individuals who perform the work. There can be multiple "R"s for a task.

- Accountable (A): The person ultimately answerable for the task's success or failure. There can be only one "A" per task.

- Consulted (C): Subject matter experts whose input is needed. This is a two-way communication.

- Informed (I): Individuals who are kept updated on progress. This is a one-way communication.

This structure forces clear conversations about ownership. Too many "A"s for one task can lead to conflicts. Too many "C"s can lead to slow decision-making.
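The one-"A"-per-task rule is easy to check automatically once the matrix is stored as data. The sketch below is a minimal validator; the draft matrix, including its deliberately faulty second row, is hypothetical.

```python
VALID_CODES = {"R", "A", "C", "I"}


def validate_raci(matrix: dict[str, dict[str, str]]) -> list[str]:
    """Return a list of problems; an empty list means the matrix passes."""
    problems = []
    for task, assignments in matrix.items():
        unknown = sorted(set(assignments.values()) - VALID_CODES)
        if unknown:
            problems.append(f"{task}: unknown codes {unknown}")
        accountable = [role for role, code in assignments.items() if code == "A"]
        if len(accountable) != 1:
            problems.append(
                f"{task}: expected exactly one 'A', found {len(accountable)}"
            )
    return problems


draft = {
    "Define Business KPIs": {
        "Product Owner": "A", "Business Analyst": "R", "Data Scientist": "C",
    },
    "Approve Release": {  # deliberately wrong: two Accountable roles
        "Product Owner": "A", "ML Engineer": "A", "GRC Officer": "C",
    },
}
issues = validate_raci(draft)
for issue in issues:
    print(issue)
```

A check like this does not replace the conversation about ownership, but it catches the most common drafting mistake before the matrix is circulated.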

The value of a RACI matrix comes from the discussions during its creation. Agreeing on who is Accountable for each stage of the AI lifecycle is a powerful alignment exercise for any team.

Mapping these roles across the seven-stage AI framework creates a practical playbook for the team. It defines ownership for all activities, from setting business goals to validating model accuracy and approving deployment.

Sample RACI Matrix for the AI Application Development Process

The table below provides a starting point for mapping roles to key activities in the AI application development process. This template should be adapted to your team's structure. The goal is to define these roles before the project begins.

| Activity / Stage | Product Owner | Data Scientist | ML Engineer | Business Analyst | GRC Officer |
|---|---|---|---|---|---|
| Define Business KPIs | A | C | I | R | I |
| Assess Data Feasibility | A | R | C | C | I |
| Develop Data Pipelines | I | C | A | I | C |
| Train & Tune Models | I | A | R | I | I |
| Validate Model vs KPIs | A | R | I | R | C |
| Deploy Model to Prod | C | C | A | I | C |
| Monitor for Data Drift | I | R | A | I | C |
| Document for Audit | I | C | C | R | A |

A clear RACI framework empowers team members by defining their roles. It reduces miscommunication, speeds up approvals, and provides a clear escalation path, making the development process more efficient and predictable.

Your Six-Week Implementation Roadmap

A disciplined, time-boxed plan can move an AI idea from concept to a production system that delivers value. This six-week roadmap is a framework for launching an AI initiative. An accelerated timeline helps build momentum and demonstrate a return on investment quickly, which keeps projects from stalling.

Weeks 1 & 2: The Discovery and Data Sprint

The first two weeks focus on building a solid foundation through alignment and data preparation.

- Week 1: The goal is a signed-off Project Charter. This document serves as the single source of truth, containing a clear problem statement, specific business KPIs (e.g., "reduce scrap by 8% to 12%"), a data feasibility assessment, and an initial RACI matrix.

- Week 2: The data engineering team begins building and testing initial pipelines for data ingestion and cleaning. By the end of this week, a "golden record" dataset—a clean, trusted version—should be ready for data scientists.

At the end of this sprint, the project's objectives and the quality of the data should be clear.

Weeks 3 & 4: Iterative Modeling and Validation

With clean data available, the process moves to rapid development sprints. The goal is iterative progress, not a perfect final model.

The data science and ML engineering teams will experiment with algorithms, tune hyperparameters, and generate candidate models. Each model version is tested against the business KPIs defined in Week 1. This stage also includes validation sessions with subject matter experts to ensure the model's outputs are useful and practical.

The target here is to produce a "minimum viable model." This is not the final version, but one that is technically sound and capable of delivering on the core business goal.

Week 5: Production Deployment

Week 5 is dedicated to moving the validated model into the live production environment. The ML engineering team packages the model into a scalable API for integration with other enterprise systems.

Deployment follows a controlled strategy. A common approach is a canary release, where a small fraction of traffic (e.g., ~5%) is routed to the new model for close monitoring. Monitoring dashboards and automated alerts for issues like data drift are also finalized this week.

Week 6: Establishing Governance

In the final week, the project transitions from a "build" to an "operate" phase. The goal is to ensure the AI application becomes a reliable, well-managed asset.

The team finalizes all documentation, including a Model Card that details the model's performance, limitations, and ethical considerations. Long-term monitoring protocols are activated, and ownership is formally transferred to the operations team. This completes the six-week application development process and sets the stage for continuous value delivery.

Questions We Hear from Leaders

I have discussed the requirements for launching an AI application with many business leaders. Certain questions frequently arise.

How Long Does This Whole Process Take?

Every project is different, but a structured process provides predictability. With a focused roadmap, an initial version (MVP) can often be delivered in under two months.

A more complex, enterprise-wide system may require a 3 to 6 month timeline.

The key is to start with a precise business goal. This focus helps avoid prolonged 'science projects' that consume resources without delivering a clear return.

Where Do Most AI Projects Go Wrong?

The most common failure point is the gap between a working model and a production-ready application. Many projects get stuck in development because deployment and monitoring were not part of the initial plan.

A successful application development process treats modeling as one component of a larger engineering lifecycle. API integration, drift monitoring, and governance must be considered from day one.

The most significant risk in AI development is treating it as a research task. Success requires engineering discipline that plans for operational realities—scalability, monitoring, and compliance—long before the model is deployed.

How Can We Actually Guarantee an ROI?

ROI is secured by defining it from the start. In the Discovery phase, you must define specific, measurable KPIs tied to a real business outcome. Instead of a vague goal like 'improve efficiency,' aim for a concrete target, such as 'reduce manual data entry by 8% to 15%.'

The model's success is measured by its impact on that business metric, not just its technical accuracy. A strong governance framework tracks the value of all AI initiatives, ensuring resources are directed toward high-impact projects and providing executives with clear data. This is how AI becomes a measurable value driver rather than an expense.

At DSG.AI, we specialize in implementing this disciplined process to build production-grade AI systems that deliver business value. See how our six-week implementation methodology can accelerate your next project at https://www.dsg.ai/projects.